Pinned Tweet

Salesforce AI Research

1.9K posts

@SFResearch

We advance state-of-the-art #AI techniques paving the path for innovative products at @Salesforce. Focus areas: #AIAgents, #EnterpriseAI, #EGI, and #TrustedAI.

Palo Alto, CA

Joined September 2014

420 Following, 19.6K Followers

Correction: Congratulations to @PhilippeLaban!

Salesforce AI Research @SFResearch

Congratulations to our researchers on winning an Outstanding Paper Award at #ICLR2026 for LLMs Get Lost in Multi-Turn Conversation! 🏆 Authors: Philippe Laban @plaban, Hiroaki Hayashi @hiroakiLhayashi, Yingbo Zhou @yingbozhou_ai, Jennifer Neville bit.ly/3ZoC6T1 https:/…

Our Infrastructure & DevOps team was featured by @GoogleCloud. Lavanya Karanam and Avinash Gudagi on how @Salesforce is solving one of the hardest problems in large-scale AI training: keeping powerful GPUs fully fed.

bit.ly/4n6HDc3

#FutureOfAI #EnterpriseAI

3 AI trends shaping #EnterpriseAI: simulation environments, ambient intelligence, and agent-to-agent communication. @Digitai79 on our focus on Enterprise General Intelligence (#EGI) in @gregorojstersek's #TDX26 recap. bit.ly/4mT4irW

#FutureOfAI

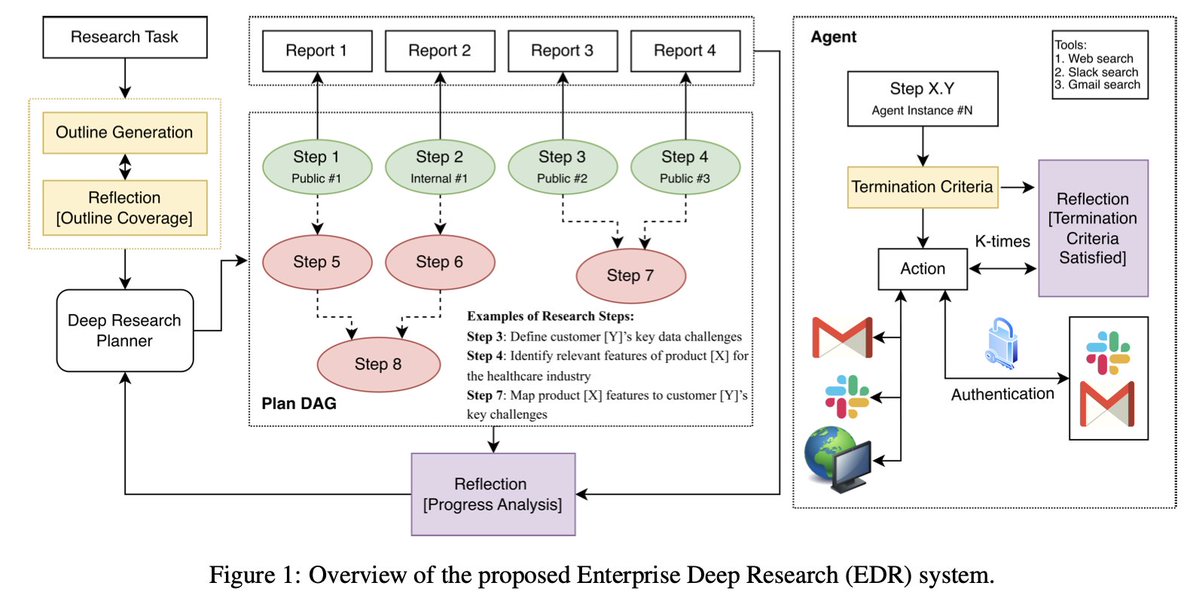

Don't Stop Early: Scalable Enterprise Deep Research with Controlled Information Flow and Evidence-Aware Termination

Paper: bit.ly/49fk2zQ

Enterprise deep research often fails to produce decision-ready reports due to uneven coverage, context explosion, and premature stopping. This work introduces an EDR architecture built on three mechanisms:

→ 🗂️ Coverage-driven task decomposition via outline generation with reflection

→ 🔗 Dependency-guided execution with localized contexts, so information flows only when explicitly required

→ ✅ Evidence-based termination criteria that keep agents gathering until sufficiency conditions are met
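
The second and third mechanisms can be illustrated with a minimal sketch. This is not the paper's implementation: `run_outline`, the `gather` callback, `min_evidence`, and `max_rounds` are hypothetical names, and "sufficiency" is reduced here to a simple evidence count. Each task sees only the outputs of the tasks it explicitly depends on, and keeps gathering until the threshold is met or a round budget runs out.

```python
from graphlib import TopologicalSorter


def run_outline(tasks, deps, gather, min_evidence=2, max_rounds=5):
    """Run tasks in dependency order with localized contexts.

    tasks: list of task names (hypothetical outline sections)
    deps: dict mapping a task to the set of tasks it depends on
    gather: callable(task, context, round_idx) returning a list of evidence items
    """
    results = {}
    graph = {t: deps.get(t, set()) for t in tasks}
    # static_order() yields every task after all of its dependencies.
    for task in TopologicalSorter(graph).static_order():
        # Localized context: only outputs of declared dependencies are visible.
        context = {d: results[d] for d in deps.get(task, set())}
        evidence = []
        rounds = 0
        # Assumed sufficiency rule: stop once enough evidence items are
        # collected, or when the round budget is exhausted.
        while len(evidence) < min_evidence and rounds < max_rounds:
            evidence.extend(gather(task, context, rounds))
            rounds += 1
        results[task] = evidence
    return results


if __name__ == "__main__":
    def fake_gather(task, context, round_idx):
        # Stand-in for a retrieval or agent call; returns one item per round.
        return [f"{task}-fact-{round_idx}"]

    report = run_outline(
        ["market", "pricing", "summary"],
        {"summary": {"market", "pricing"}},
        fake_gather,
    )
    print(report["summary"])
```

A real system would replace the count-based check with whatever sufficiency conditions the paper defines; the sketch only shows where such a check sits in the loop.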

🏆 Results: 82.09 HAA and 4.31 coverage on internal sales enablement, outperforming Gemini DR, OpenAI DR, ODR, and DeerFlow. Highly competitive in comprehensiveness (54.85) and insightfulness (55.63) on DeepResearch Bench.

👥 Authors: Prafulla Kumar Choubey, Kung-Hsiang Huang @steeve__huang, Pranav Narayanan Venkit, Jiaxin Zhang @jxzhangjhu, Vaibhav Vats, Yu Li, Xiangyu Peng, Chien-Sheng Wu @jasonwu0731

#FutureOfAI #EnterpriseAI #AgenticAI

Salesforce AI Research retweeted

Heading to the DATA-FM workshop at #ICLR2026? 🦾

Catch my invited talk at 1:30 PM today in Room 203A+B: "Agentic Ambient Intelligence: Perception, Reasoning & Action."

I’ll be diving into 4 new research pillars for moving AI into the physical world.

Come say hi! 📍

Congratulations to our researchers on winning an Outstanding Paper Award at #ICLR2026 for LLMs Get Lost in Multi-Turn Conversation! 🏆

Authors: Philippe Laban @plaban, Hiroaki Hayashi @hiroakiLhayashi, Yingbo Zhou @yingbozhou_ai, Jennifer Neville

bit.ly/3ZoC6T1 https:/…

Attending #ICLR2026? Meet us at @Salesforce Booth #203 to hear the latest on how we're evolving enterprise general intelligence.

For more, follow our journey at ICLR: bit.ly/4vLy9qo https:/…

#FutureOfAI #EnterpriseAI

📍 Live from Rio: 21 papers at #ICLR2026, starting today.

Our work spans agent architectures, reasoning, evaluation, deep research reliability, and scalable RL, tackling what matters most for #EnterpriseAI.

sforce.co/4vRgqOs

#FutureOfAI

Can AI coding assistants maintain effectiveness as codebases scale 100×? LoCoBench-Agent evaluates agents across 10K to 1M token contexts, spanning 8,000 scenarios in 10 programming languages and four difficulty tiers.

sforce.co/4txCDj4

👥: @_Jason_Q @huan__wang

#AgenticAI

The Illusion of Certainty: Decoupling Capability and Calibration in On-Policy Distillation: bit.ly/48iccVY

On-policy distillation (OPD) improves task accuracy but systematically traps models in severe overconfidence. We trace this to an information mismatch between training and deployment, and introduce CaOPD to fix it.

→ Identifies a pervasive Scaling Law of Miscalibration: even frontier LLMs exhibit massive calibration gaps that scale does not resolve

→ Formalizes how privileged teacher context induces entropy collapse and optimism bias in the student

→ Replaces self-reported confidence with a student-grounded empirical target, decoupling what the model answers from how certain it should be

→ Achieves Pareto-optimal calibration without the capability tax of RL-based methods, enabling a compact 8B model to rival frontier LLMs on reliability
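
To make "calibration gap" concrete, here is a minimal sketch using expected calibration error (ECE), a standard metric; whether the paper reports ECE or another measure is an assumption. `empirical_confidence` is a hypothetical illustration of a student-grounded target: the fraction of the student's own sampled answers that turned out correct, used in place of a self-reported confidence.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average of |mean confidence - accuracy| over equal-width bins."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 goes into the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece


def empirical_confidence(sample_correct):
    """Hypothetical student-grounded target: share of sampled answers that were correct."""
    return sum(sample_correct) / len(sample_correct)


if __name__ == "__main__":
    # An overconfident model: claims 0.9 on every answer, right one time in four.
    outcomes = [True, False, False, False]
    print(expected_calibration_error([0.9] * 4, outcomes))
    print(empirical_confidence(outcomes))
```

In this toy case the self-reported confidence sits far above the observed accuracy, while the empirical target matches it by construction, which is the decoupling the post describes.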

Code: bit.ly/4cUtCKO

Authors: Jiaxin Zhang @jxzhangjhu, Xiangyu Peng @beckypeng6, Qinglin Chen, Qinyuan Ye @qinyuan_ye, Caiming Xiong @CaimingXiong, Chien-Sheng Wu @jasonwu0731

#FutureOfAI #EnterpriseAI

At #TDX26, Itai Asseo @Digitai79 on Enterprise General Intelligence: refining generic LLMs into reliable enterprise agents through AI Foundry and Agentforce Labs, including eVerse, the Learning Engine, and Agent Startup. sforce.co/3OrRYCb #FutureOfAI #EnterpriseAI #EGI

(7/7) Lost in Translation: Do LVLM Judges Generalize Across Languages?

This work examines whether large vision-language model judges maintain evaluation reliability across languages, investigating cross-lingual generalization gaps in automated assessment systems.

Authors: Md Tahmid Rahman Laskar, Mohammed Saidul Islam, Mir Tafseer Nayeem, Amran Bhuiyan, Mizanur Rahman, Shafiq Joty @JotyShafiq, Enamul Hoque, Jimmy Huang

Accepted to #ACL2026

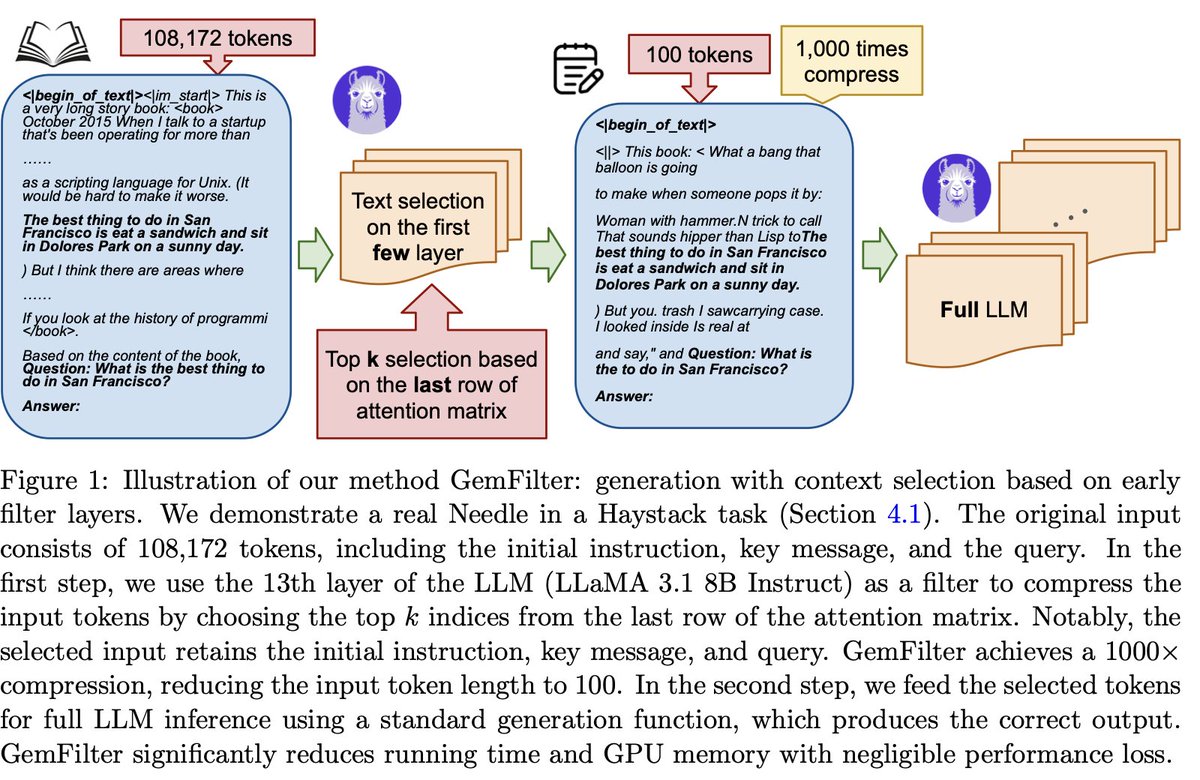

(6/7) Discovering the Gems in Early Layers: Accelerating Long-Context LLMs with 1000x Input Token Reduction: bit.ly/4mQq0gj

GemFilter uses early LLM layers as filters to identify and compress relevant input tokens before full processing. The method achieves a 2.4x speedup and 30% reduction in GPU memory usage compared to state-of-the-art approaches, with negligible accuracy loss.
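
The filtering step can be sketched as a top-k selection over per-token relevance scores. This is an illustrative sketch, not the authors' code: `select_tokens` is a hypothetical helper, and the scores are assumed to come from an early layer of the model (how they are computed is left out).

```python
def select_tokens(tokens, scores, k):
    """Keep the k highest-scoring tokens, preserving their original order."""
    if len(tokens) != len(scores):
        raise ValueError("tokens and scores must have the same length")
    k = min(k, len(tokens))
    # Indices of the k largest scores.
    top = sorted(range(len(tokens)), key=lambda i: scores[i], reverse=True)[:k]
    # Restore input order so the compressed sequence still reads left to right.
    return [tokens[i] for i in sorted(top)]


if __name__ == "__main__":
    tokens = ["the", "password", "is", "tulip", "today"]
    scores = [0.05, 0.90, 0.10, 0.80, 0.20]  # assumed early-layer relevance scores
    print(select_tokens(tokens, scores, k=2))
```

The full model would then run only on the shortened sequence, which is where the speed and memory savings come from.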

Authors: Zhenmei Shi, Yifei Ming @ming5_alvin, Xuan-Phi Nguyen @xuanphinguyen, Yingyu Liang, Shafiq Joty @JotyShafiq

Accepted to #ACL2026

(1/7) @Salesforce AI Research has 6 papers accepted to ACL 2026, advancing work across web agent evaluation, LLM reasoning verification, uncertainty quantification, long-context efficiency, and multilingual judge systems.

ACL 2026 takes place July 2-7 in San Diego, California.

#ACL2026 #FutureOfAI #EnterpriseAI

That's a wrap on #TDX26!

The @Salesforce AI Research team was on the ground in San Francisco, connecting with the community and sharing the latest from our #AIFoundry. From research demos to conversations about what's next in #EnterpriseAI, it was great to be part of the energy!
