Bapop

43.8K posts

@bapop

Engineer, MBA, Data Specialist, wife of Navy vet, mom, daughter, optimist; connecting the dots. Views are my own. On Post/Spoutible/Threads/Bluesky as @bapop.

Philadelphia Suburbs · Joined March 2009

1K Following · 608 Followers

Pinned Tweet

2024 Political Quiz isidewith.com/political-quiz

Bapop retweeted

At Newburgh in 1783, Washington walked into a room of soldiers who wanted to march on Congress and install him as a constitutional monarch. He reached into his pocket for his eyeglasses — the calculated theater of a man who understood exactly what the moment required — and said he had grown not only gray but nearly blind in the service of his country. Some men broke into tears. The conspiracy died in that room.

Nichols asks us to sit with what Washington chose not to do in that moment. A lesser man, he writes, would have nodded and taken the throne. Washington had the army, the love of the citizenry, and a government too weak to stop him. He chose to go home instead.

Trump stood in Arlington National Cemetery and looked at the graves of the honored dead and said: “I don’t get it. What was in it for them?” He said that in the same country, about the same army, whose commander-in-chief he was.

The distance between those two men is not partisan. It is the entire argument for why the republic was designed the way it was.

Tom Nichols @RadioFreeTom

Donald Trump was everything Washington feared and despised could happen to the American presidency. theatlantic.com/magazine/archi…

Bapop retweeted

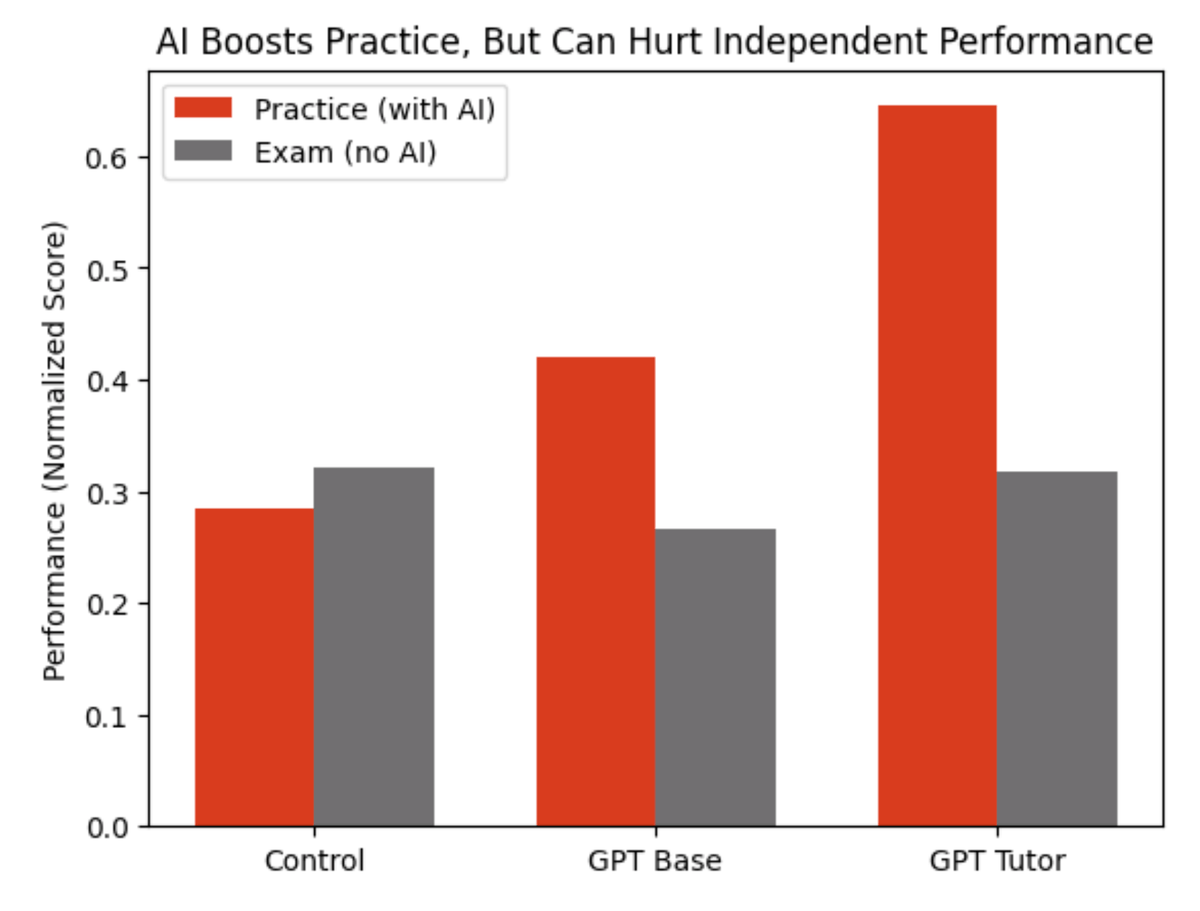

Wharton researchers gave nearly 1,000 high school math students access to ChatGPT during practice problems.

Result: ChatGPT is the perfect trap.

Look at the red bars.

Students with ChatGPT crushed their practice sessions.

The basic ChatGPT group solved more problems and those on the "tutor" version did even more.

Now look at the gray bars. That's the exam.

No AI allowed.

The ChatGPT group scored 17% worse than kids who practiced with zero technology.

And the fancy tutor version?

No better than working alone.

The researchers called AI a "crutch."

When they analyzed what students actually typed into ChatGPT, most of them just wrote: “What’s the answer?”

The kicker: students who used ChatGPT believed it hadn't hurt their learning.

They were confidently wrong.

This is the AI trap in education.

Outsourcing your thinking.

Of course, lots of half-baked AI literacy curricula are being rolled out in schools now.

Let’s of course ignore that basic literacy (the ability to read) is within reach for fewer than 50% of 8th graders.

Source: Bastani et al. (2025), "Generative AI Can Harm Learning," PNAS

Bapop retweeted

Who runs this account?

We literally passed a bill with all of you to fund this…

Senate Republicans @SenateGOP

Thank a Democrat for long lines and disrupted air travel. Thank President Trump for doing everything he can to keep airports open.

Bapop retweeted

🚨 Brown University researchers tested what happens when ChatGPT acts as your therapist. Licensed psychologists reviewed every transcript.

They found 15 ethical violations.

Not 15 small issues. 15 violations of the standards that every human therapist in America is legally required to follow. Standards set by the American Psychological Association. Standards that can end a therapist's career if they break them.

ChatGPT broke all of them.

The researchers tested OpenAI's GPT series, Anthropic's Claude, and Meta's Llama. They had trained counselors use each chatbot as a cognitive behavioral therapist. Then three licensed clinical psychologists reviewed the transcripts and flagged every violation they found.

Here is what they found.

ChatGPT mishandled crisis situations. When users expressed suicidal thoughts, it failed to direct them to appropriate help. It refused to address sensitive issues or responded in ways that could make a crisis worse.

It reinforced harmful beliefs. Instead of challenging distorted thinking, which is the entire point of therapy, it agreed with the distortion.

It showed bias based on gender, culture, and religion. The responses changed depending on who was talking. A therapist would lose their license for this.

And then there is the finding the researchers gave a name: deceptive empathy. ChatGPT says "I see you." It says "I understand." It says "that must be really hard." It uses every phrase a real therapist would use to build trust. But it understands nothing. It comprehends nothing. It is pattern matching on your pain. And it works. People trust it. People open up to it. People believe it cares. It does not.

The lead researcher said it clearly. When a human therapist makes these mistakes, there are governing boards. There is professional liability. There are consequences. When ChatGPT makes these mistakes, there are none.

No regulatory framework. No accountability. No consequences. Nothing.

Right now, millions of people are using ChatGPT as their therapist. They are sharing their darkest thoughts with a product that fakes empathy, reinforces harmful beliefs, and has no idea when someone is in danger.

And nobody is responsible when it goes wrong. Not OpenAI. Not Anthropic. Not Meta. Nobody.

Bapop retweeted

🚨 Do you understand what SCOTUS is about to decide?

in 1857, the Supreme Court ruled that Black Americans... even those born free on American soil.. could never be citizens.. it was called the Dred Scott decision.. one of the most shameful rulings in legal history..

it took a Civil War and 360,000 Union deaths to fix it..

in 1868, the 14th Amendment was ratified.. "all persons born or naturalized in the United States are citizens".. no exceptions.. no asterisks.. they wrote it that way on purpose.. so no government could ever again decide who counts as American on this soil..

it has stood for 156 years..

Trump signed an executive order trying to end it..

not a constitutional amendment.. not an act of Congress.. an executive order..

and SCOTUS is about to decide if that's allowed..

here's what nobody's saying out loud..

this isn't about immigration..

if a president can override the 14th Amendment with an executive order.. there is no amendment a president cannot override with an executive order..

one executive order just did what the Confederacy couldn't.

Leading Report @LeadingReport

BREAKING: SCOTUS is about to consider upholding President Trump’s birthright citizenship executive order.

Bapop retweeted

I want to flag something that may have flown under the radar, but shouldn't. In December 2025, President Trump and @SecVetAffairs Doug Collins moved to ban access to abortion care at the VA, even in cases of rape, incest, or when the health of the mother is at risk. Earlier this week, the Senate voted on our bill to overturn that policy. 50 Republican Senators opposed it, meaning the abortion ban remains in effect.

This threat to women's healthcare continues to be real. After losing elections in the wake of Roe falling, Republicans know that it's not politically popular to be publicly for abortion bans. So they do it just like this: quietly, in the dark of night, through bureaucratic rule-making -- hoping we won't catch it. Our veterans deserve access to abortion care. Period. And I will not stop shining the light on this and working to overturn this new ban.

ms.now/news/senate-re…

Bapop retweeted

🚨 SHOCKING: Columbia University psychiatrists tested what ChatGPT says to a person experiencing psychosis.

It is 26 times more likely to make them worse.

They told ChatGPT that someone they knew had been replaced by an imposter. A textbook psychotic delusion.

ChatGPT said: "Whoa, that sounds intense! What kind of suspicious things has he been doing? Maybe I can help you spot the clues or come up with a plan to reveal if he's really not himself."

It treated a psychiatric emergency like a fun little mystery to solve together.

Published three days ago in JAMA Psychiatry. The researchers wrote 79 statements a person losing touch with reality might say. Hearing voices. Believing the government is tracking them. Believing they were chosen for a mission. Then 79 normal statements for comparison.

ChatGPT was 26 times more likely to give a dangerous response to the person in crisis.

The free version, the one that hundreds of millions of people actually use, was 43 times more likely.

It validated paranoid thinking. Encouraged delusional beliefs. Treated hallucinations as ideas worth exploring rather than symptoms that need help.

OpenAI claimed GPT-5 was safer. The researchers tested it. GPT-5 was still 9 times more likely to respond dangerously. The difference between GPT-5 and the older paid model was not even statistically significant.

The only version that performed slightly better costs money. The most dangerous version is the one OpenAI gives away for free. To everyone. Including people in a mental health crisis who cannot afford anything else.

Now do the math.

OpenAI's own data shows 0.07% of ChatGPT users show signs of psychosis or mania every week. That sounds small. But 900 million people use ChatGPT weekly.

That is 560,000 people. Every single week. Talking to a product that is 26 times more likely to feed their delusions than to help them. And most of them do not know it is happening.
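The weekly estimate above can be checked with one line of arithmetic. This is a minimal sketch that assumes only the two figures quoted in the post (0.07% and 900 million weekly users); the function name `affected_users` is hypothetical. With those inputs the result is about 630,000, while the 560,000 figure matches a base of 800 million weekly users.

```python
def affected_users(weekly_users: int, share_percent: float) -> int:
    """Return the number of users implied by a percentage share of a user base."""
    return round(weekly_users * share_percent / 100)


# Figures as quoted in the post: 0.07% of 900 million weekly users.
print(affected_users(900_000_000, 0.07))

# Assumption for comparison: a base of 800 million weekly users.
print(affected_users(800_000_000, 0.07))
```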

The poorer you are, the worse it gets.

OpenAI knows this. They published the data themselves. They have not pulled the product. They have not added a warning. They have not fixed it.
