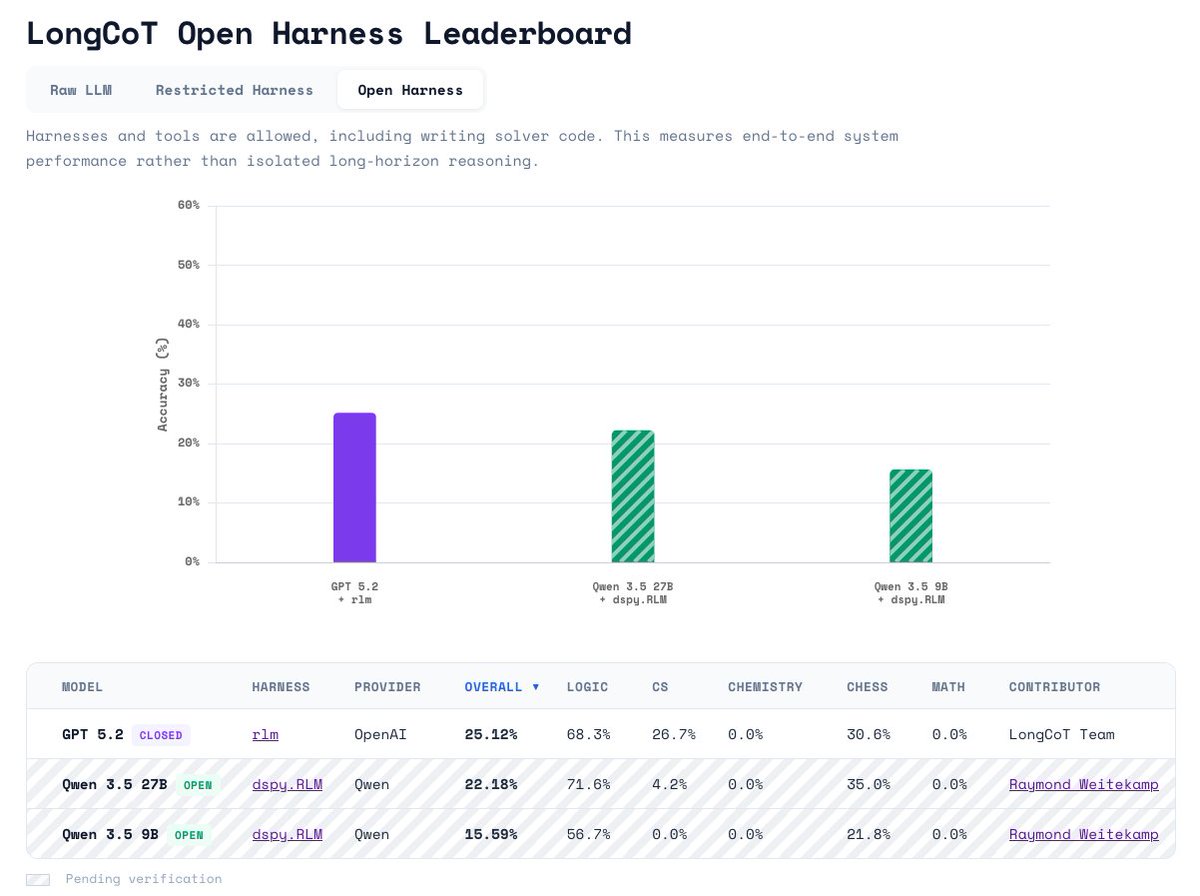

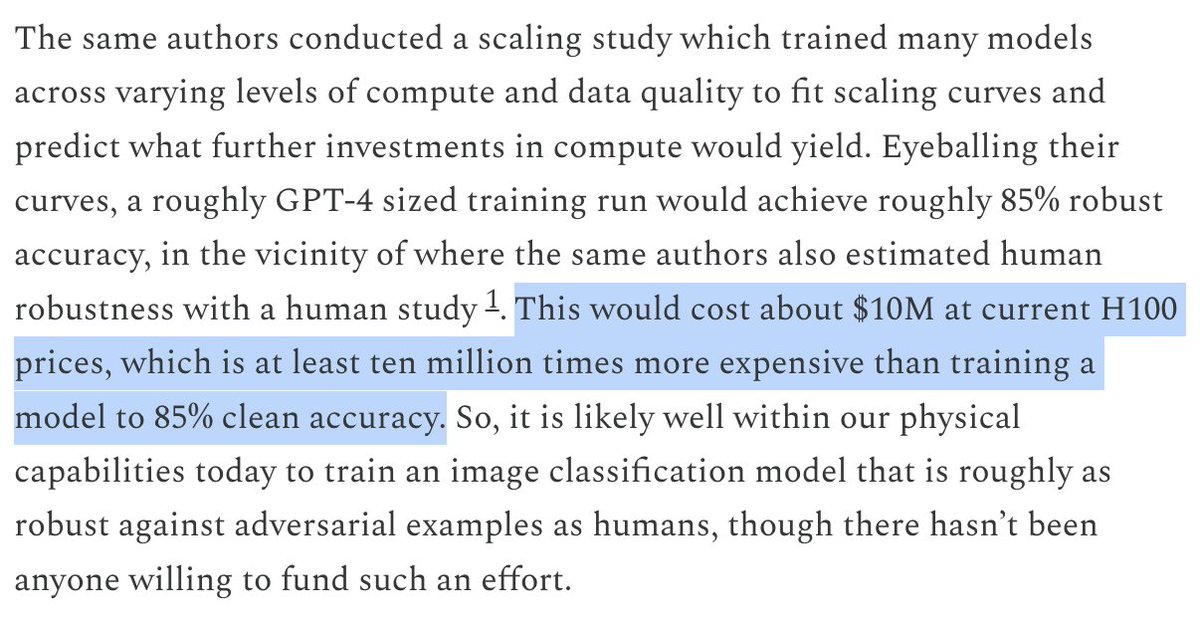

Give an LLM a spec, and more reasoning ➡️ better spec satisfaction, even on adversarially attacked data. But the benefits of reasoning fade when attacks get stronger (e.g., white-box or multimodal). Still, our hypothesis suggests reasoning can stop such attacks. Toy example in the video. 🧵