Becky Xiangyu Peng


@beckypeng6

Senior Research Scientist @SFResearch, PhD @GeorgiaTech. She/her. NLG + MM + Agents + RL

🚨 NuRL: Nudging the Boundaries of LLM Reasoning

GRPO improves LLM reasoning, but often within the model's "comfort zone": hard samples (w/ 0% pass rate) remain unsolvable and contribute zero learning signals. In NuRL, we show that "nudging" the LLM with self-generated hints effectively expands the model's learning zone 👉 consistent gains in pass@1 on 6 benchmarks w/ 3 models & raises pass@1024 on challenging tasks!

Key takeaways:

1⃣ GRPO can't learn from problems the model never solves correctly, but NuRL uses self-generated "hints" to make hard problems learnable

2⃣ Abstract, high-level hints work best; revealing too much about the answer can actually hurt performance!

3⃣ NuRL improves performance across 6 benchmarks and 3 models (+0.8-1.8% over GRPO), while using fewer rollouts during training

4⃣ NuRL works with self-generated hints (no external model needed) and shows larger gains when combined with test-time scaling

5⃣ NuRL raises the upper limit: it boosts pass@1024 up to +7.6% on challenging datasets (e.g., GPQA, Date Understanding)

🧵
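Why a 0% pass rate yields zero learning signal can be seen with a rough sketch of the group-normalized advantage commonly described for GRPO. This is an illustration, not the NuRL code: the function name `group_relative_advantages`, the `eps` constant, and the 0/1 reward scheme are assumptions made for the example, and the hint mechanism itself is not shown because the post does not give its details.

```python
from statistics import mean, pstdev

def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each rollout's reward against its own group.

    Hypothetical helper: subtract the group mean and divide by the
    group standard deviation (plus eps to avoid division by zero).
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

# Hard problem: all 8 rollouts fail, so every reward equals the group mean
# and every advantage is exactly zero (no gradient signal).
hard = group_relative_advantages([0.0] * 8)

# Same kind of problem once some rollouts succeed (assumed here: 2 of 8):
# correct rollouts get positive advantages, incorrect ones negative.
mixed = group_relative_advantages([0.0] * 6 + [1.0] * 2)

print(hard)
print(mixed)
```

Under these assumptions, anything that lifts the pass rate of a hard sample above 0% (such as a hint) turns an all-zero advantage vector into a usable one.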

(Thread 1/8) 🚨 Strefer: Empowering Video LLMs with Space-Time Referring and Reasoning via Synthetic Instruction Data 🚨

Introducing Strefer: a novel data engine for auto-generating instruction data that enables Video LLMs to excel at spatiotemporal video understanding 🎬🧩⏳

Key Contributions:

▶️ Automated Pipeline: Eliminates dependence on legacy annotations through fully automatic instruction generation

▶️ Fine-grained Spatiotemporal Information: Produces temporally aligned, object-centric metadata with instruction-response pairs and multimodal prompts

▶️ Data-Efficient: Achieves improvements in space-time referring and reasoning with only 545 extra videos and no proprietary model dependencies

📄 Paper: bit.ly/427rVnw

🌐 Project: bit.ly/4gi4Owr

💻 Code: bit.ly/3I5HbdO

🎥 YouTube (10-min video): bit.ly/4lZL1TS

How does Strefer lay the foundation for perceptually grounded, instruction-tuned Video LLMs? Dive into the researchers' walk-through below! 🧵

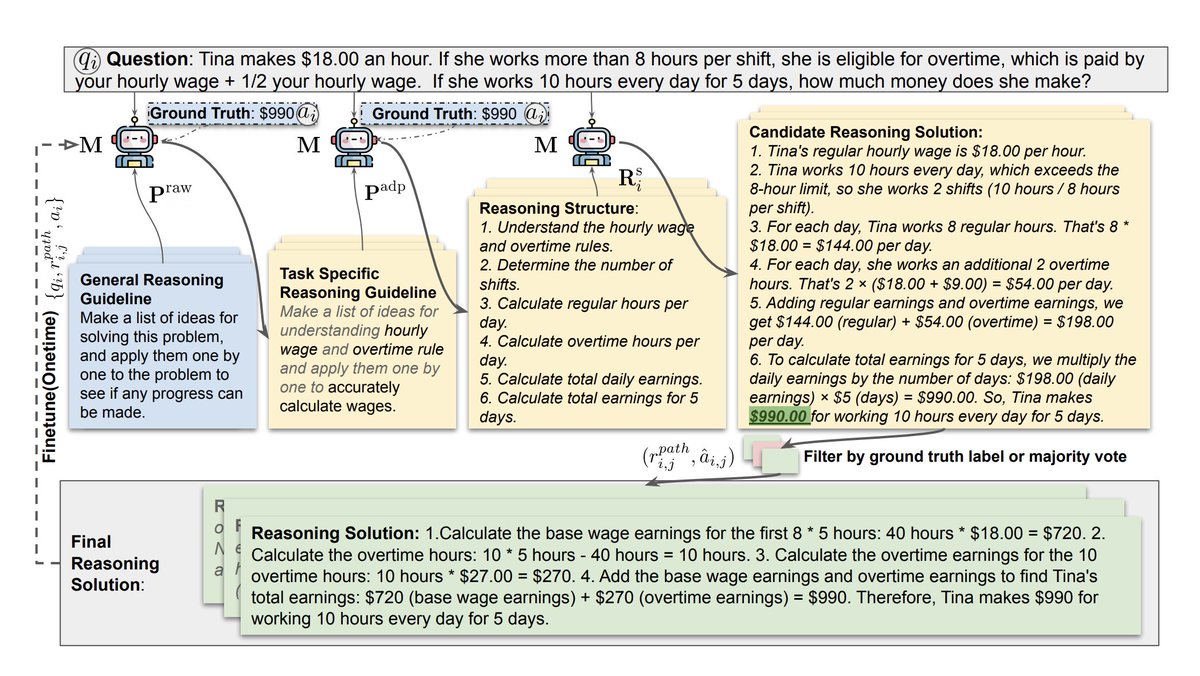

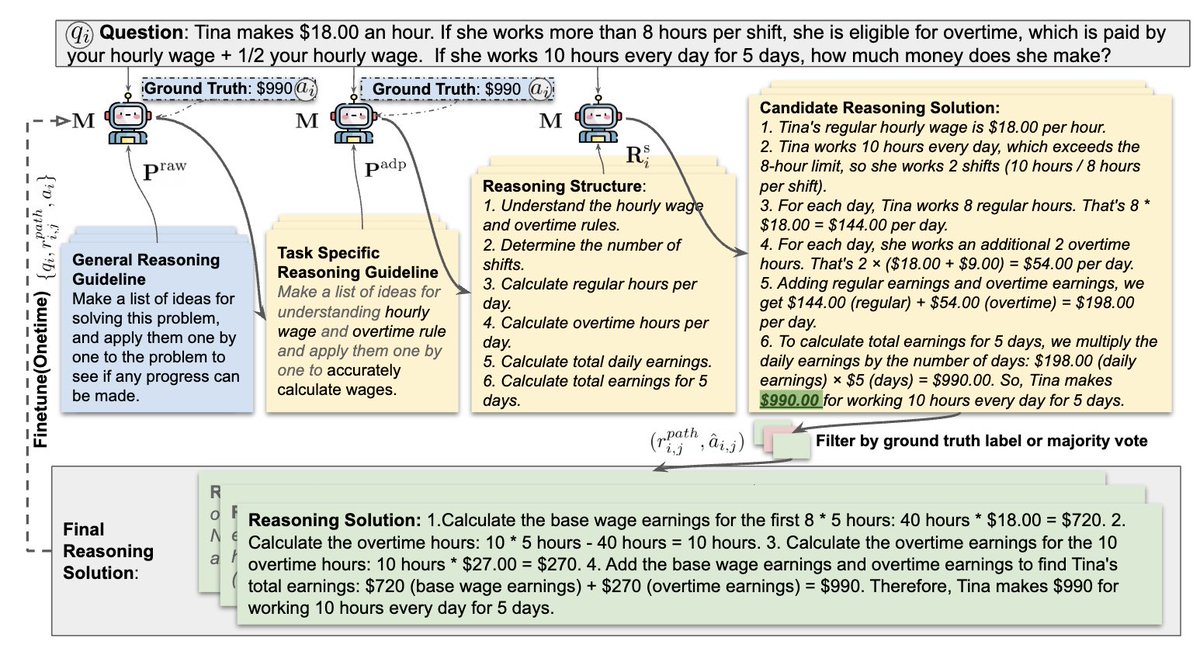

🚨🆕🚨 Introducing ReGenesis: Reasoning Generalists via Self-Improvement!

Our method self-synthesizes reasoning paths, moving from abstract to concrete.

🔥 While others see a 4.6% drop in OOD performance, ReGenesis delivers a 6.1% boost! 🚀

🔗 arxiv.org/abs/2410.02108