Ran Xu

@stanleyran

Research Director @ Salesforce AI Research

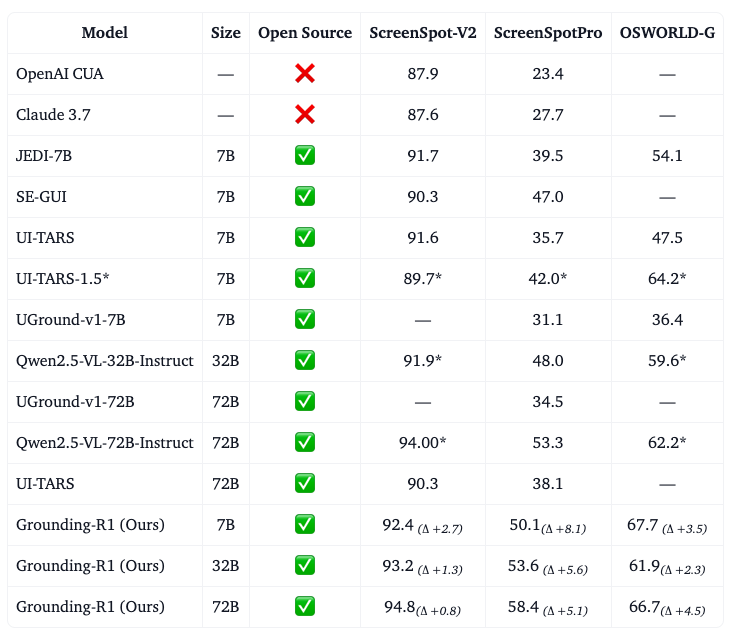

Meet AGUVIS: A pure vision-based framework for autonomous GUI agents, operating seamlessly across web, desktop, and mobile platforms without UI code.

Key Features & Contributions

🔍 Pure Vision Framework: First fully autonomous pure vision GUI agent capable of performing tasks independently without relying on closed-source models
🔄 Cross-Platform Unification: Unified action space and plugin system that works consistently across different GUI environments
📊 Comprehensive Dataset: Large-scale dataset of GUI agent trajectories with multimodal grounding and reasoning
🧠 Two-Stage Training: Novel training pipeline focusing on GUI grounding followed by planning and reasoning
💭 Inner Monologue: Explicit planning and reasoning capabilities integrated into the model training

Project Page: aguvis-project.github.io
Paper: huggingface.co/papers/2412.04…
GitHub: github.com/xlang-ai/aguvis

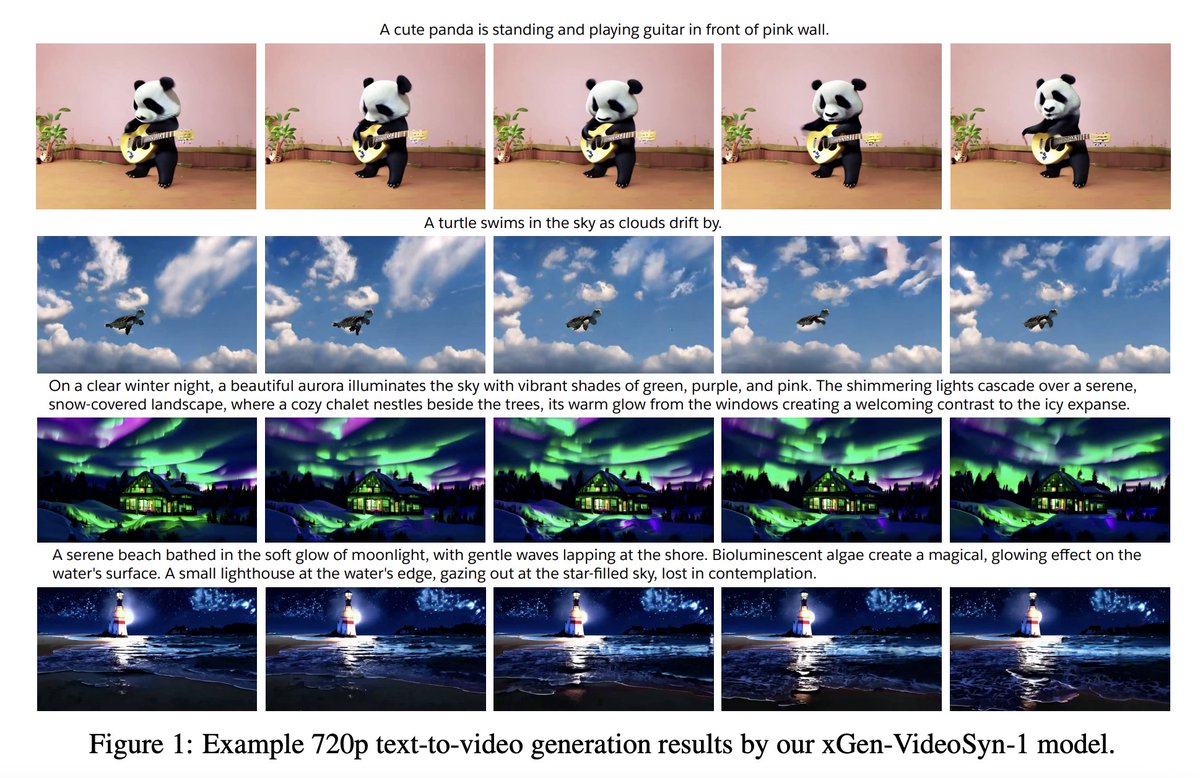

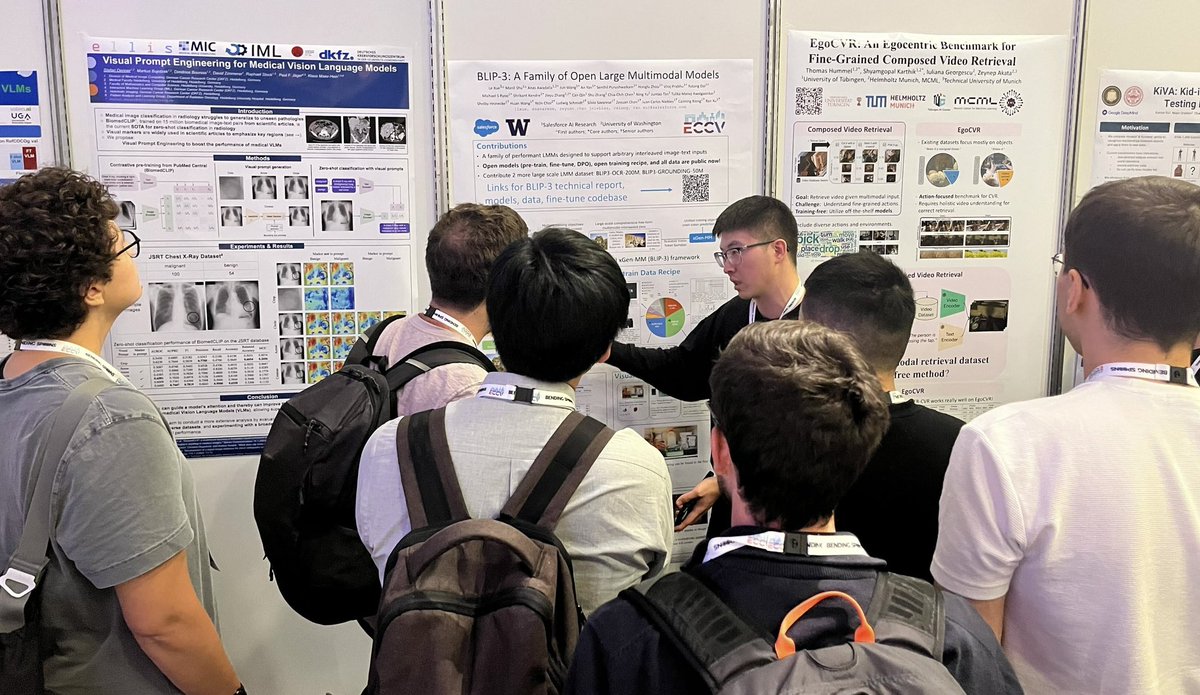

🇮🇹🚀💥 Headed to #ECCV2024? Bookmark this for a deep dive into our team's groundbreaking research across multiple domains. 👇

SUNDAY 29 SEPT (all times CEST)

xGen-VideoSyn-1: Setting new standards in text-to-video synthesis
10:38-11:20am, Room: Amber 7 + 8
📝 AI4VA Workshop: bit.ly/4ej1qQ0
📚 Paper: arxiv.org/pdf/2408.12590

MONDAY 30 SEPT

ECCV2024 Workshop on Multimodal Agents
8:30am-12:30pm, Room: Amber 7 + 8
📝 Workshop: multimodalagents.github.io

BootPIG: Bootstrapping zero-shot personalized image generation
17:40-17:55 (5:40-5:55pm), Room: Space 2
📝 Synthetic Data4CV Workshop: bit.ly/47WRidH
📚 Poster / Paper: arxiv.org/pdf/2401.13974

xGen-MM (BLIP-3): A groundbreaking family of multimodal models
16:00-20:00 (4-8pm), Room: Amber 5
📝 EVAL-FoMo 24 Workshop: bit.ly/4eksONQo
📚 Poster / Paper: arxiv.org/pdf/2408.08872

WEDNESDAY 2 OCT

LayoutDETR: Redefining multimodal layout design
10:30am-12:30pm
📚 Poster / Paper: arxiv.org/pdf/2212.09877

X-InstructBLIP: Pioneering cross-modal reasoning
16:30-18:30 (4:30-6:30pm)
📚 Poster / Paper: arxiv.org/pdf/2311.18799

FRIDAY 4 OCT

SQ-LLaVA: Self-questioning in vision-language AI
10:30am-12:30pm
📚 Poster / Paper: arxiv.org/pdf/2403.11299

See you in Milan, @eccvconf 🤖 #AIResearch #ComputerVision

Introducing the full xLAM family, our groundbreaking suite of Large Action Models! 🚀 From the 'Tiny Giant' to industrial powerhouses, xLAM is revolutionizing AI efficiency! #AIResearch #AIEfficiency

🤗 Hugging Face Collection: bit.ly/4faoYaQ
🤩 Research Blog: bit.ly/3MxliCZ
🗞️ Press Release: sforce.co/3XzaOt9

Meet the family:
• xLAM-1B / TINY: Our 1B-parameter marvel, ideal for on-device AI. Outperforms larger models despite its compact size.
• xLAM-7B / SMALL: Perfect for swift academic exploration with limited GPU resources.
• xLAM-8x7B / MEDIUM: Mixture-of-experts model balancing latency, resources, and performance for industrial applications.
• xLAM-8x22B / LARGE: Our large-scale model for optimal performance in high-resource environments.

🎉 Huge congrats to the team of AI scientists who brought the xLAM series to life! Zuxin Liu @LiuZuxin, Shirley Kokane @KokaneShirley, Ming Zhu @ming_zhu0527, Tian Lan @TLan001, Jianguo Zhang @JianguoZhang3, Thai Hoang @TeeH912, Caiming Xiong @CaimingXiong, Silvio Savarese @silviocinguetta