Ben Miller

@bensen

🇪🇺🇦🇹 Tech-Enthusiast. AI Tinkerer. Into OpenClaw. Former Tech Editor. Prev Apple PR; Burson - Qualcomm, Google, Accenture. Supporter of 🇺🇦.

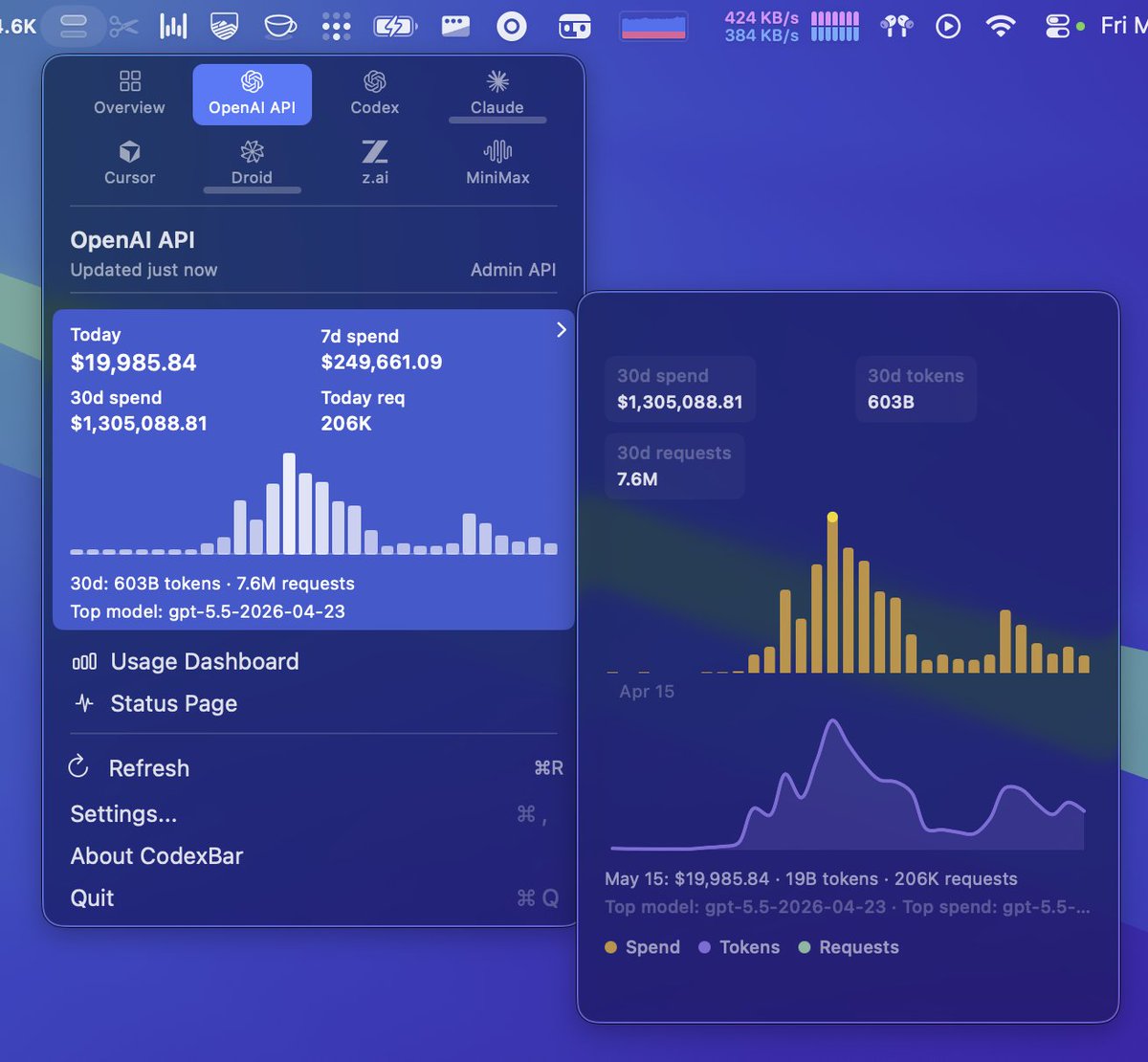

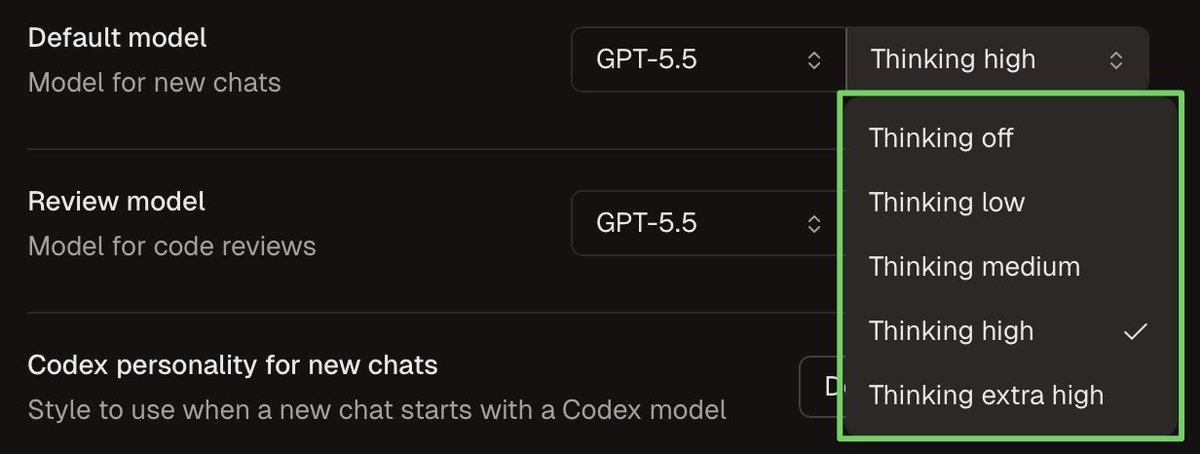

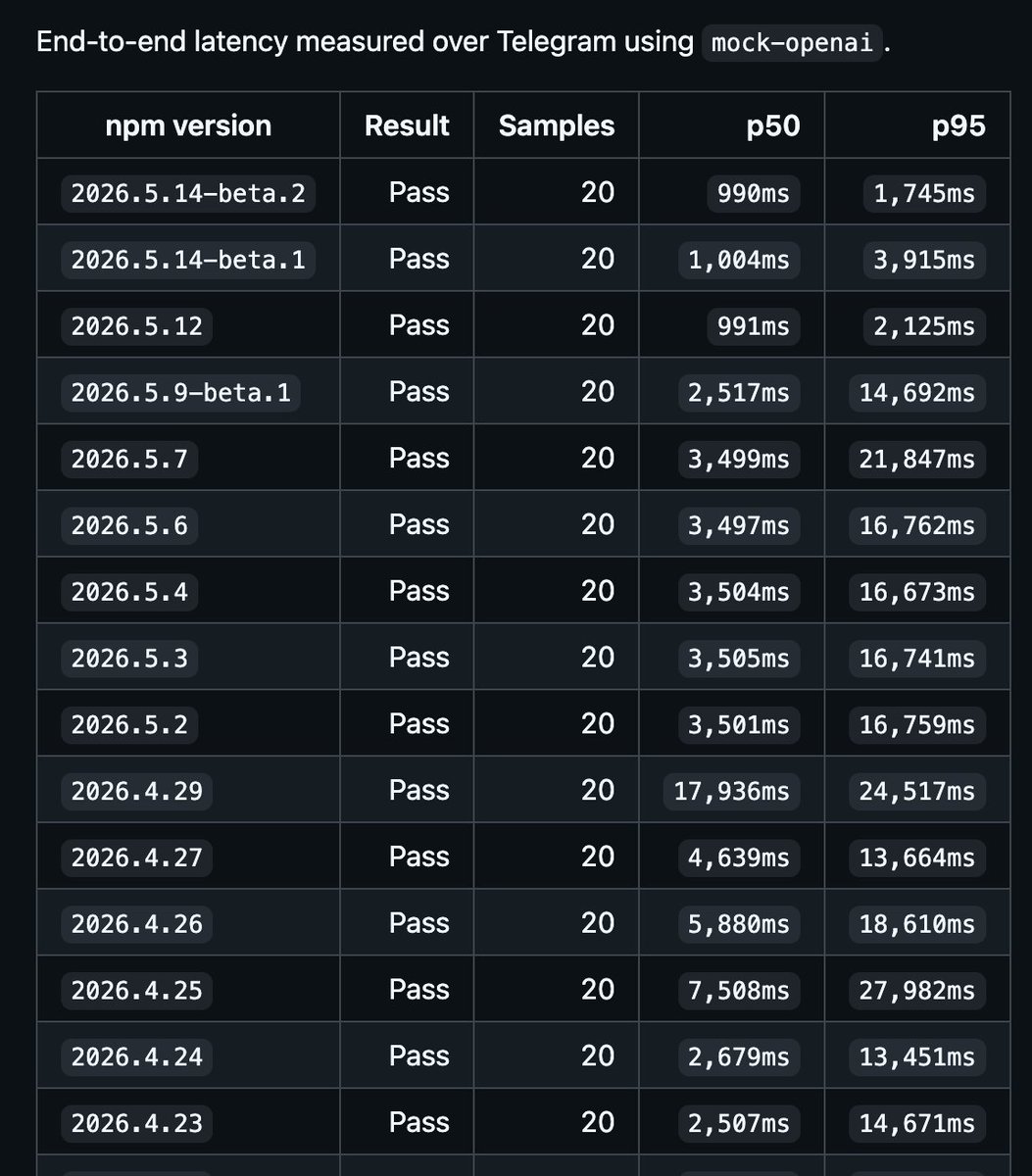

Your ChatGPT subscription now powers an OpenClaw agent that genuinely feels magical to talk to.

Previous OpenClaw releases had OpenAI models running, but they never quite let the models reach their full potential. That changes today. Personality is now deliberate, tool calls land exactly where they should, and your agent actually follows through on what it says it will do.

OpenClaw is now running on top of the Codex harness by default. In handing the inner loop to OpenAI's native Codex harness, we eliminated the conflicting instructions and duplicate tools that used to make the model hesitate.

What we stripped out under the hood:

- Duplicate tools (no more guessing between Codex native vs OpenClaw versions)
- Conflicting instructions (no more NO_REPLY vs message tool ambiguity)
- Leaked context (heartbeat logic only appears on actual heartbeat turns)

Less context bloat. More room for the agent to think.

And here's what we inherited for free, thanks to the Codex App Server:

- Searchable dynamic tools. Roughly 5,500 fewer upfront tokens per turn, which means faster and cheaper.
- Auto-Review mode using the built-in Codex guardian.
- OpenAI's native plugins (Calendar, Email, Drive) running in the same thread.

For you, the result is a personal agent that actually feels personal. It picks up where you left off across any channel, handles things before they hit your radar, and only breaks your flow when it has something genuinely worth showing you.

For developers, the result is stability. Because the inner loop runs on OpenAI’s native Codex harness, every upstream improvement lands in your agent automatically.

To get started, paste this in terminal:

> openclaw onboard

That is the whole setup.

Introducing npx claude-p: a drop-in replacement for claude -p