michael s galpert

40K posts

@msg

See you at the next @clawcon. Run a product studio that enables people with AI. Previously worked on Fortnite and a bunch of startups.

Joined March 2007

5.3K Following · 17K Followers

michael s galpert retweeted

Me and my goblins on GPT 5.5 FAST

anu @anuatluru

Best 2 minutes. Sped it up. GENER8ION - STORM starring Yung Lean.

I'll be at @stripe Sessions in SF tomorrow! Give a shout if you're around and let's meet up.

michael s galpert retweeted

ClawCon is going to China next month!

First event will be in Shanghai cohosted with @themu_xyz

luma.com/clawconshanghai

Sprouting is one of the most overpowered ways to improve food

Most of the mineral content of nuts/beans/grains is locked up in insoluble phytate complexes, and the content of these is reduced by 50-80% in sprouting

Sprouting reduces oxalate and lectin content by 60-90% in beans and grains

On top of that, the content of B-vitamins and polyphenols increases significantly from sprouting

Broccoli sprouts contain around 50x the concentration of active compounds like sulforaphane vs. broccoli

michael s galpert retweeted

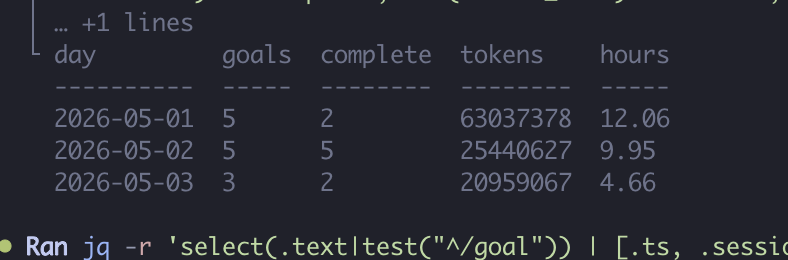

I ran Qwen3.6:27b to optimize itself in a recursive loop on my home server.

Over 26 hours it went from 2.3 tok/s to 84.3 tok/s decode.

It began on the home server, found there was no NVIDIA GPU, detected a CPU/RAM setup with 24 CPU threads, 93 GiB RAM, and a 9060xt 16gb, then installed Hugging Face tooling remotely and started pulling GGUF quantizations.

It benchmarked remote llama.cpp / llama-server runs across quantizations and flags:

Found existing Qwen3.5-9B-Q8_0.gguf

Downloaded / tested Qwen3.6-27B GGUF variants

Compared Q6_K, Q5_K_M, Q4_K_M

Ran server benchmarks over SSH against localhost:8080

Tested thread count, context, batch size, n_ubatch, --no-mmap, and memory-related flags

Researched further speed paths: lower quantization, NUMA, huge pages, native CPU builds, cache/KV, TurboQuant, DFlash, speculative decoding, and automated tuning

1,524 tool calls

367 artifacts

345 memory additions

804 browser-control calls

All of this from a model that can run on your computer. You don't need a better model. You need a better harness.
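For anyone curious what the flag sweep in this thread might look like in practice, here is a minimal sketch, not the harness the author used. It assumes the llama-server options `--threads`, `--ctx-size`, `--batch-size`, `--ubatch-size`, and `--no-mmap`; the model filename and the value ranges are hypothetical, and the timing step is left out so the block only builds the commands and ranks results you measure yourself.

```python
from itertools import product


def build_commands(model_path, threads, ctx_sizes, batch_sizes, ubatch_sizes, no_mmap_options):
    """Return one llama-server argument list per flag combination."""
    commands = []
    for t, c, b, ub, no_mmap in product(
        threads, ctx_sizes, batch_sizes, ubatch_sizes, no_mmap_options
    ):
        args = [
            "llama-server", "-m", model_path, "--port", "8080",
            "--threads", str(t),
            "--ctx-size", str(c),
            "--batch-size", str(b),
            "--ubatch-size", str(ub),
        ]
        if no_mmap:
            args.append("--no-mmap")
        commands.append(args)
    return commands


def pick_fastest(results):
    """results is a list of (args, decode_tok_per_s) pairs; return the fastest pair."""
    return max(results, key=lambda pair: pair[1])


def speedup(before_tok_s, after_tok_s):
    """Ratio of decode throughput after tuning to throughput before tuning."""
    return after_tok_s / before_tok_s


if __name__ == "__main__":
    # Hypothetical model filename and illustrative value ranges.
    grid = build_commands(
        "Qwen3.6-27B-Q4_K_M.gguf",
        threads=[8, 16, 24],
        ctx_sizes=[4096, 8192],
        batch_sizes=[512, 2048],
        ubatch_sizes=[256, 512],
        no_mmap_options=[False, True],
    )
    print(len(grid), "configurations to benchmark")
    print(" ".join(grid[0]))
```

Each argument list can be handed to `subprocess.run` on the server, with decode speed measured against localhost:8080 and fed back into `pick_fastest`. With the figures reported above, `speedup(2.3, 84.3)` comes out to roughly 37x.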

michael s galpert retweeted