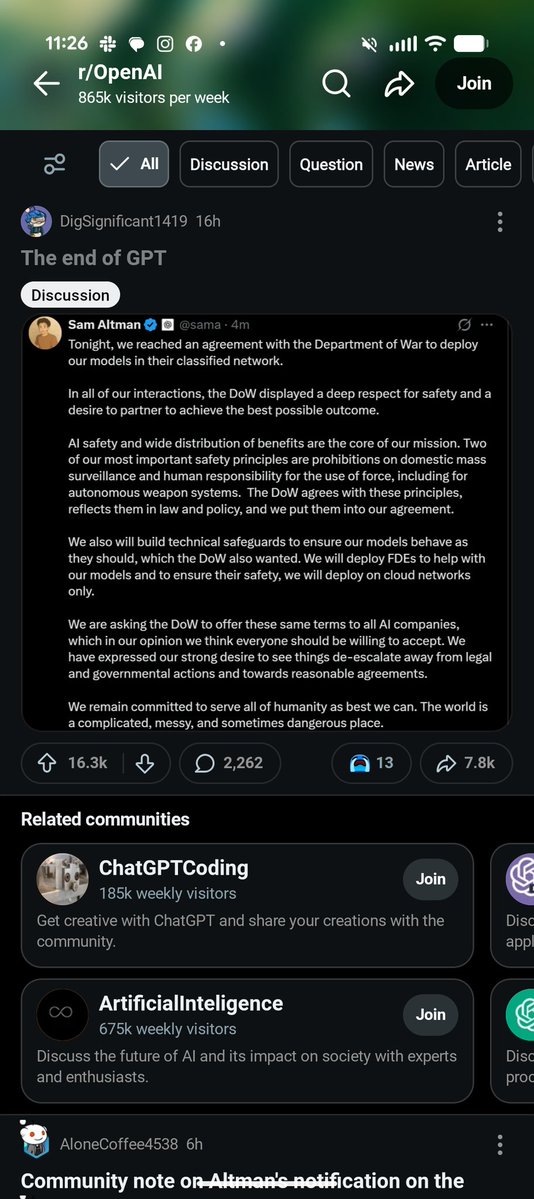

Sam Altman (@sama)

Here is a re-post of an internal post:

We have been working with the DoW to make some additions in our agreement to make our principles very clear.

1. We are going to amend our deal to add this language, in addition to everything else:

"• Consistent with applicable laws, including the Fourth Amendment to the United States Constitution, National Security Act of 1947, FISA Act of 1978, the AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals.

• For the avoidance of doubt, the Department understands this limitation to prohibit deliberate tracking, surveillance, or monitoring of U.S. persons or nationals, including through the procurement or use of commercially acquired personal or identifiable information."

It’s critical to protect the civil liberties of Americans, and there was so much focus on this that we wanted to make this point especially clear, including around commercially acquired information. Just like everything we do with iterative deployment, we will continue to learn and refine as we go.

I think this is an important change; our team and the DoW team did a great job working on it.

2. The Department also affirmed that our services will not be used by Department of War intelligence agencies (for example, the NSA). Any services to those agencies would require a follow-on modification to our contract.

3. For extreme clarity: we want to work through democratic processes. It should be the government making the key decisions about society. We want to have a voice, and a seat at the table where we can share our expertise, and to fight for principles of liberty. But we are clear on how the system works (because a lot of people have asked: if I received what I believed was an unconstitutional order, of course I would rather go to jail than follow it). But

4. There are many things the technology just isn’t ready for, and many areas where we don’t yet understand the tradeoffs required for safety. We will work through these, slowly, with the DoW, with technical safeguards and other methods.

5. One thing I think I did wrong: we shouldn't have rushed to get this out on Friday. The issues are super complex, and demand clear communication. We were genuinely trying to de-escalate things and avoid a much worse outcome, but I think it just looked opportunistic and sloppy. Good learning experience for me as we face higher-stakes decisions in the future.

In my conversations over the weekend, I reiterated that Anthropic should not be designated as an SCR, and that we hope the DoW offers them the same terms we’ve agreed to.

We will host an All Hands tomorrow morning to answer more questions.