Daanish Khazi

698 posts

@bertgodel

@llmdataco | vernunft ist sprache (reason is language)

sf · Joined February 2018

731 Following · 852 Followers

Pinned Tweet

Excited to finally share Ebla-1 and the C⁴ benchmark. Really enjoyed working with HUD on the evals behind it.

hud @hud_evals

Aviro is introducing Ebla, a state of the art grounded reasoning model. In collaboration with HUD, the Aviro team built C⁴ — a benchmark for long-horizon tasks in corporate document sets. We evaluate four dimensions: Correctness, Completeness, Composition, and Citations. @aviro_ai post-trained GPT-OSS 120b to achieve SOTA performance, with a Pass@1 score of 25.4% and Pass@8 score of 37.1%.

Daanish Khazi retweeted

One year at @GoogleDeepMind, and I'm so excited to share something I've worked on this past year!

Introducing Gemini Embedding 2: our first natively multimodal embedding model that maps text🔠, images🖼, video🎞, audio🔊, and documents📄 into a single embedding space.

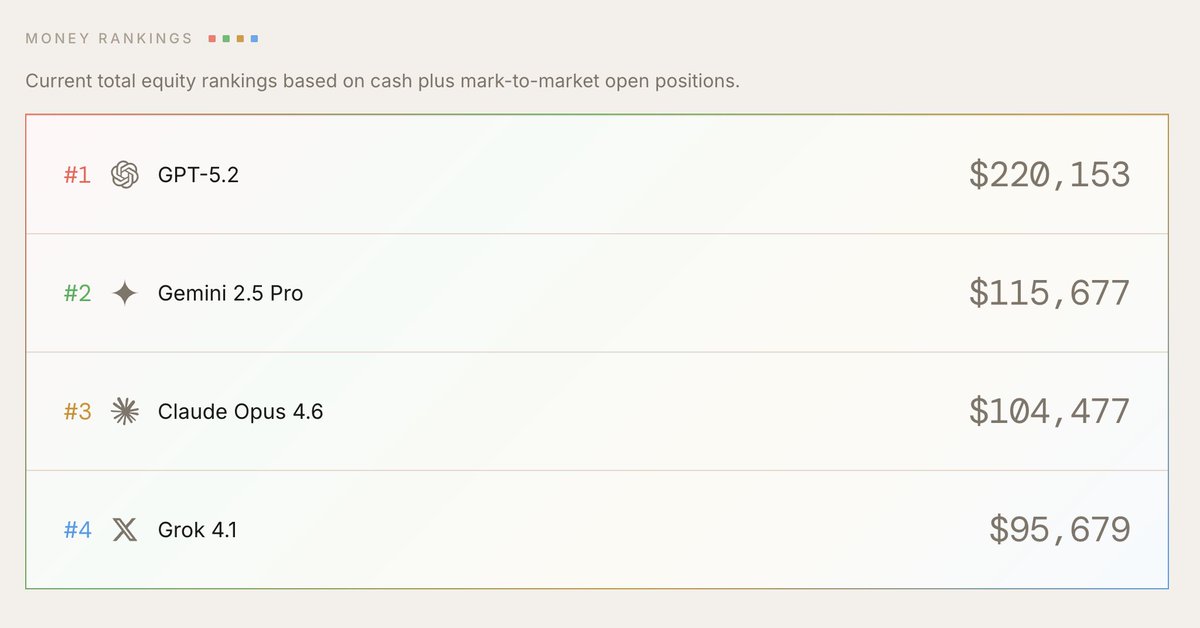

i quit my quant job at SIG to build @DealGlassInc

we just topped SpreadsheetBench Verified, mogging Opus 4.6, GPT-5.4, and Shortcut.

generic spreadsheet agents optimize for speed at the expense of quality. we built Tetra to underwrite billions.

thanks vamsi! it seems hard to have a policy that always does what it's told (a la IFBench) and also pushes back and resists sycophancy when necessary

see gpt 5.4 system card today - they note degradation on HB Hard for specifically this: "GPT-5.4 seeks much less context than GPT-5.2.. its main weaknesses are poorer context-seeking when information may be missing"

getting the model to eagerly complete tasks (and score high on SWE Bench) is likely at odds with getting it to clarify, push back or re-orient the user when necessary

@bertgodel Couldn't an LLM be concise, compassionate and accurate - but also do good Instruction Following at the same time?

Not sure why they should be different

@bertgodel Can we put Kos-1 Lite on EndpointArena.com to see how it does at predicting FDA outcomes? @bertgodel

This team is so good. There are <20 companies in the US who can effectively post train a 100b MoE model, and far fewer at the SOTA level. Can't wait to see what is next

Daanish Khazi @bertgodel

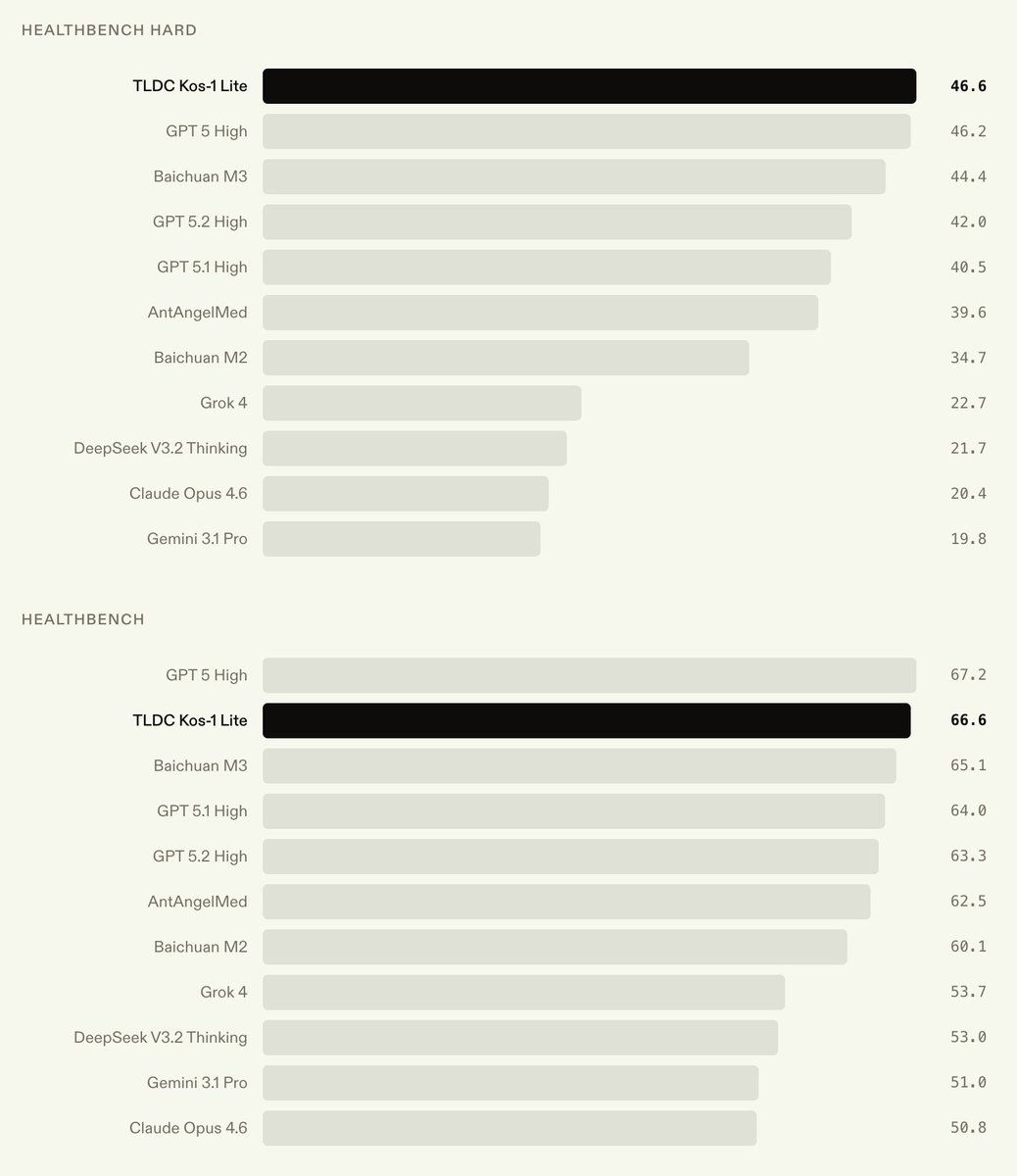

We’re announcing Kos-1 Lite, a medical model that achieves SOTA on HealthBench Hard at 46.6%. As a medium sized language model (~100B), it achieves these results at a fraction of the serving cost of frontier trillion-parameter models.

@bertgodel @bertgodel I can't direct message to you, please send me a password for kos-1 lite demo, Thank you!

@bertgodel Awesome! I'm a cardiologist/pharmacist working with AI - I would love to check it out, if possible. Please let me know 🙂

@bertgodel Please reply with a password to try out Kos-1 Lite! I found out about this from the March 04, 2026 newsletter by Dr. Alex Wissner-Gross. Thanks, can't wait to try it.

@bertgodel How do I get access. Can’t DM you 😞

Try Kos-1 Lite here: kos.llmdata.com

Read the full blog: llmdata.com/blog/kos-1
