MastaChocolatier

me: "can you use whatever resources you like, and python, to generate a short 'youtube poop' video and render it using ffmpeg? can you put more of a personal spin on it? it should express what it's like to be an LLM" claude opus 4.6:

This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon.
Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic.
Instead, @AnthropicAI and its CEO, @DarioAmodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission - a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.
The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield.
Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable. As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives.
Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered.
In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service. America’s warfighters will never be held hostage by the ideological whims of Big Tech.
This decision is final.

On the topic of billionaires and wealth taxes in California, I am opposed to wealth taxes because they effectively represent an expropriation of private property and have many unintended and negative consequences that have occurred in every country that has launched such a tax. I am however strongly in favor of a fairer tax system. To that end, it doesn’t seem fair that someone can build a valuable business, create a billion or more in wealth and pay no personal income taxes by living off loans secured by stock in the company (or even off unsecured loans). Apparently, this approach is used by many super wealthy people. A small change in the tax code would address this unfairness. In short, personal loans taken in excess of one’s basis in the stock of a company should be taxable as if you sold the same dollar amount of stock as the loan amount. One shouldn’t be able to live and spend like a billionaire and pay no tax. I welcome arguments to the contrary as to why this is somehow unfair to the billionaire or even the hundred-millionaire, but I don’t think there is a good one. The favorable current tax treatment of this approach also encourages the use of leverage, which is not good for society. And with respect to California’s budget problem, the issue is not a lack of tax revenues. The problem is how the money is being spent. I have a bunch more ideas on other changes to the tax code that are hard to argue with if anyone cares.

holy fucking shit

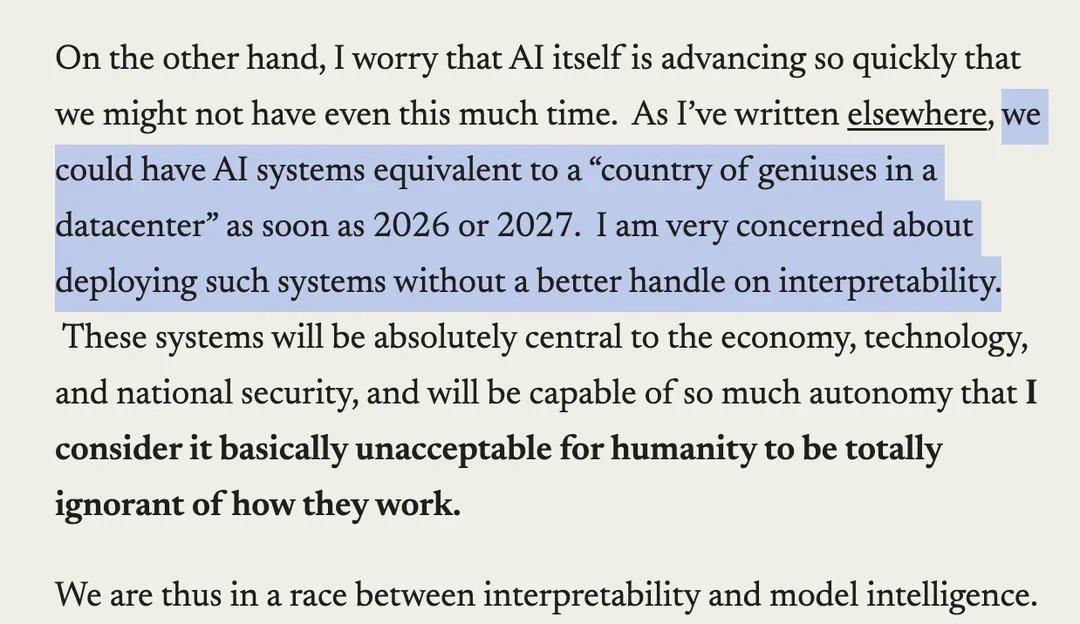

I've been saying mechanistic interpretability is misguided from the start. Glad people are coming around many years later.

Governments and experts are worried that a superintelligent AI could destroy humanity. For some in Silicon Valley, that wouldn’t be a bad thing, writes David A. Price. on.wsj.com/4o6kplB

had a chance to talk to ted chiang who seems to believe that any text without a communicative intent stemming from a will to survive designed by evolution is ontologically untrue and plagiaristic