bidhan @ NVIDIA GTC

4.4K posts

@bidhan

ceo @bageldotcom. previously code monkey at amazon, cashapp, instacart. hiring - dms are open.

@Angaisb_ almost 100 percent of image and video models are still diffusion, you're just confused, sorry!

In town for NVIDIA GTC? If you're building generative world models or investing in the people who are, we're putting the right people in one room for you tomorrow night in Palo Alto. Co-hosted by Alumni Ventures. Sign-up link below.

The NVIDIA GTC conference didn’t have raves. And that’s a good thing.

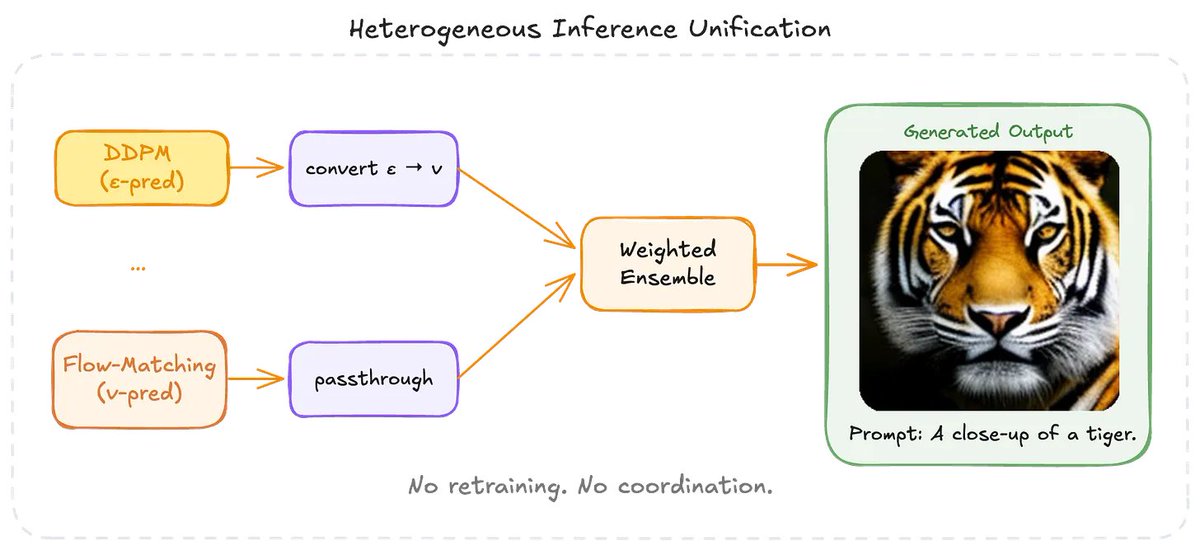

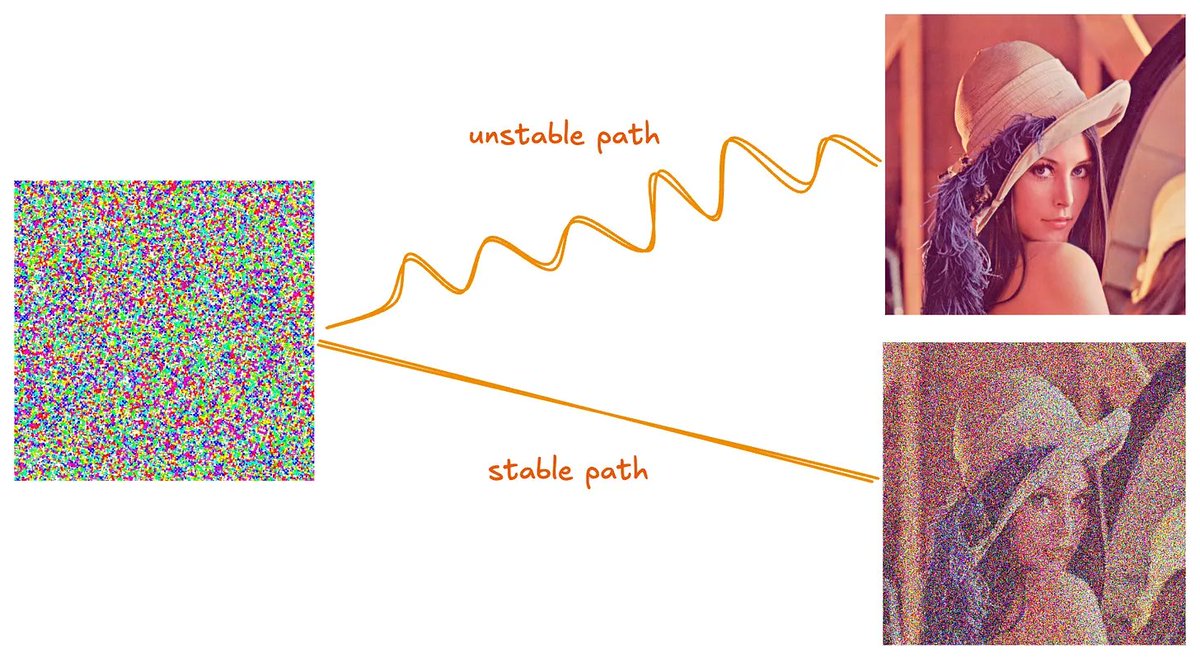

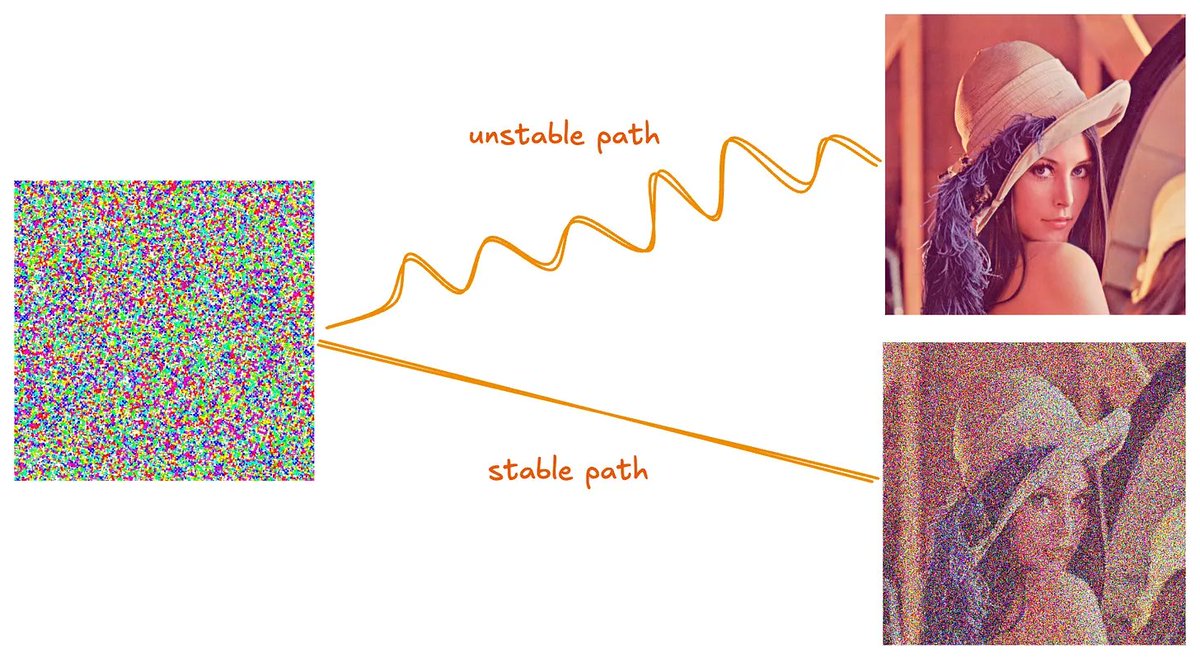

Excited to share that Bagel Labs' paper got accepted at CVPR 2026. Historically, much of the most important diffusion model research has stayed inside frontier labs. We're bringing more of it into the open through open science and open infrastructure. In this work we show a counterintuitive advantage of mixing different training objectives (DDPM and Flow-Matching) through an ensemble of diffusion models. This is one of the first works to successfully combine diffusion models trained with heterogeneous objectives. See details here: blog.bagel.com/p/heterogeneou…