Binh Tran

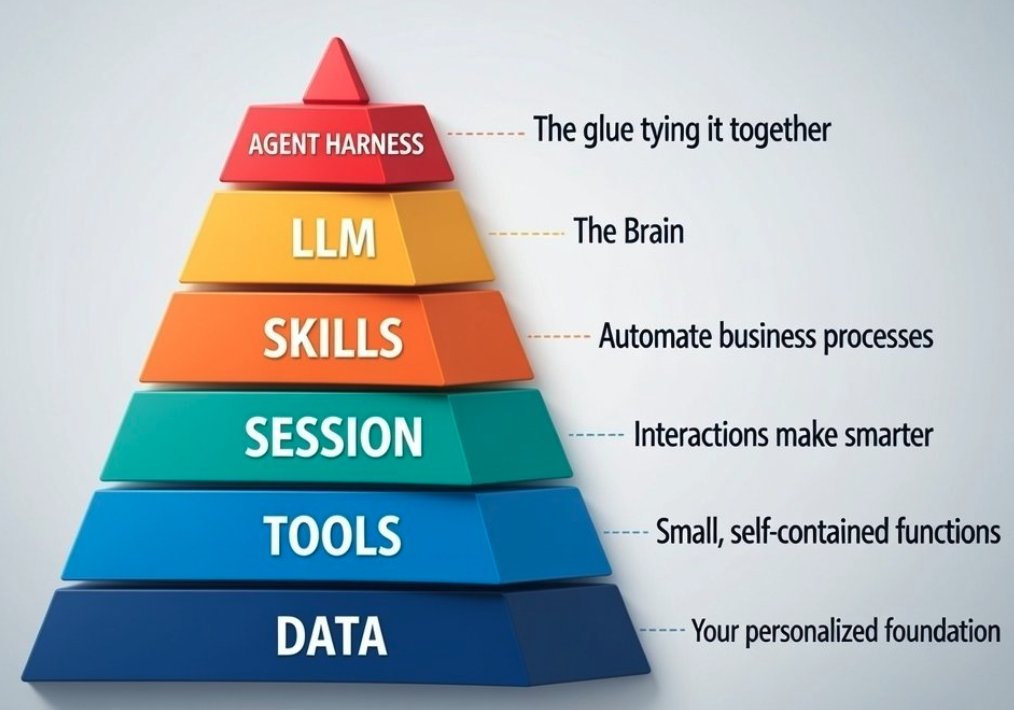

- CPU = LLM
- OS = agent harness
- RAM = context window
- Files = your knowledge
- Programs = your skills

I want to swap CPUs depending on the job.
I want my files to outlive any OS.
I want full control over who accesses my data.
I don't want the company that makes my CPU or my OS to own my files.

Your computer figured this out decades ago. AI agents haven't yet. I hope that's where we're heading.
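The analogy maps onto a simple design: the harness talks to the model through one narrow interface, so the "CPU" can be swapped per job, while knowledge lives in plain files the user owns. A rough sketch, assuming a hypothetical `Model` protocol and two stub backends (`EchoModel`, `UpperModel`) standing in for real LLM providers. No vendor API is used or implied.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class Model(Protocol):
    """The 'CPU': anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Hypothetical stand-in for one LLM provider."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperModel:
    """Hypothetical stand-in for a second provider you might swap in for a different job."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class Harness:
    """The 'OS': builds context from user-owned files and calls whichever model it is handed."""

    def __init__(self, knowledge_dir: Path) -> None:
        # 'Files': plain Markdown on disk that outlives any particular harness or model
        self.knowledge_dir = knowledge_dir

    def load_context(self) -> str:
        # 'RAM': naively concatenate every note into the prompt (no context-size limit enforced)
        notes = sorted(self.knowledge_dir.glob("*.md"))
        return "\n".join(p.read_text() for p in notes)

    def run(self, model: Model, task: str) -> str:
        prompt = f"{self.load_context()}\nTask: {task}"
        return model.complete(prompt)


def demo() -> list[str]:
    with tempfile.TemporaryDirectory() as d:
        Path(d, "notes.md").write_text("prefers short answers")
        harness = Harness(Path(d))
        # Same files, same harness, two different 'CPUs'
        return [harness.run(m, "summarize") for m in (EchoModel(), UpperModel())]
```

Nothing in `Harness` knows which vendor sits behind `complete`, and the notes directory stays readable by any other tool, which is the "files outlive any OS" property.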

My predictions for AI in 2026:

1. Second brain as a service becomes hugely profitable. Companies will pay to build internal knowledge bases trained on their data.
2. Owned audiences (email, SMS, direct mail, SEO rankings) become 100x more valuable as AI spam explodes.
3. Building audience on social → converting to owned channels is the most valuable marketing skill you can learn in 2026.
4. AI content will hit 8/10 quality on basic input. Only 10/10 stands out. Taste and context are your moat.
5. Relationships become even more valuable, especially with people who have large owned audiences. Borrow their trust. 10x overnight.
6. Companies hire employees whose entire job is staying on top of AI trends to help CEOs pivot daily. Eventually these become AI agents.
7. Most powerful AI models reserved for big corporations and governments. Average users never get access.
8. Cost of AI inference scaling rapidly. My system went from $20/month to $500-1K/month in 6 months. Will 10X next year.
9. Niche communities become massive business ecosystems. 80% fail. 20% become multi-unit powerhouses.
10. Proprietary data is the single most valuable moat: customer contact data, search trends, pain points. License it. Sell it. Build competitive advantage.
11. OpenAI building accessible agent layer. When it ships, agentic era begins for everyday people.
12. Claude Code continues to be the highest-leverage skill, period.
13. AGI is closer than we think (if not already here). ASI will be 1000x more earth-shattering than even the most bullish expectations.

@claudeai you took down our entire organization, 60+ accounts belonging to a legitimate company, for no apparent reason and without any explanation. The only way to appeal the decision is by filling out a Google Form? Very bad UX and customer service.

All agents are coding agents. Code is how an agent uses a computer. We want to build agents for everyone. Not everyone knows how to code. Agents do work by writing code. Insane for engineers today to be hung up on the code the agents write.
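"Agents do work by writing code" usually means the harness takes code the model produced, runs it, and feeds the output (or the error) back. A minimal sketch using Python's standard `subprocess.run`; `model_written` is a hypothetical hard-coded string standing in for real model output, and a child process is not a security sandbox.

```python
import subprocess
import sys


def run_agent_code(code: str, timeout: float = 10.0) -> str:
    """Execute model-written Python in a separate interpreter and return what it printed.

    A child process is NOT a security sandbox; real harnesses isolate much further.
    """
    result = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    if result.returncode != 0:
        # Return the error text so the model can fix its own code on the next turn
        return f"error: {result.stderr.strip()}"
    return result.stdout.strip()


# Hypothetical code an agent might write to answer "how many words are in this sentence?"
model_written = 'print(len("agents do work by writing code".split()))'
```

The user never reads `model_written`; they only see the answer the harness returns, which is the point about engineers not needing to fixate on the code itself.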

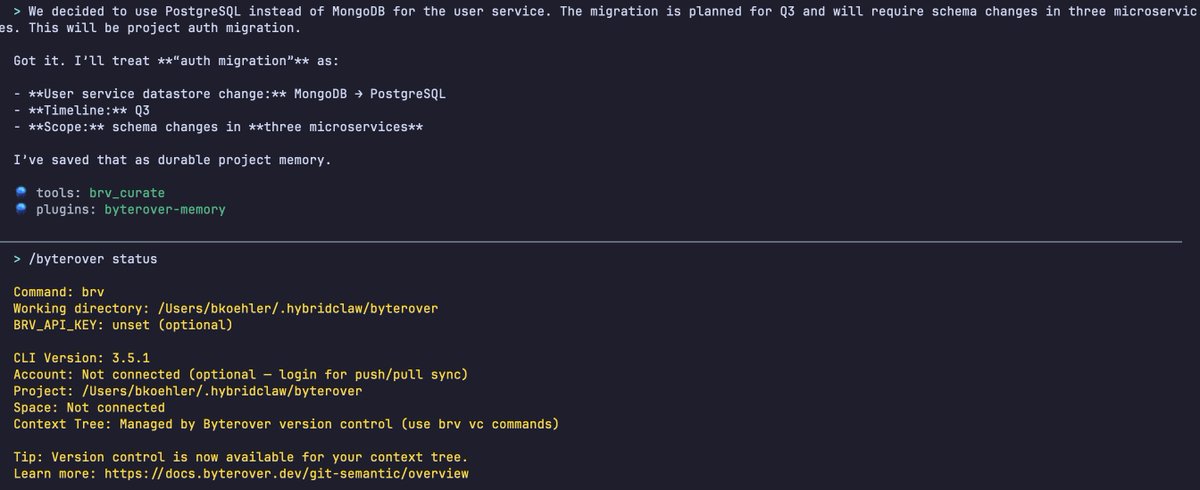

Agent harnesses aren't the black magic many of y'all seem to think they are. To prove it, I built one.
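At its core, a harness is a loop: ask the model for the next step, run the tool it picked, append the result to the context, and repeat until the model answers. A minimal sketch of that general pattern (not the harness from this post), assuming a scripted stub in place of a real LLM and a single hypothetical `word_count` tool.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    tool: Optional[str]  # None means the model is giving its final answer
    arg: str


def scripted_model(history: list[str]) -> Action:
    """Hypothetical stand-in for an LLM: picks the next step from the transcript so far."""
    if not any(line.startswith("tool:") for line in history):
        return Action(tool="word_count", arg="agent harnesses are just loops")
    count = history[-1].split("-> ")[-1]
    return Action(tool=None, arg=f"Done: {count} words")


TOOLS: dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
}


def run_harness(task: str, model: Callable[[list[str]], Action], max_steps: int = 5) -> str:
    history = [f"user: {task}"]  # the context window
    for _ in range(max_steps):
        action = model(history)
        if action.tool is None:  # model chose to stop
            return action.arg
        output = TOOLS[action.tool](action.arg)  # harness executes the tool
        history.append(f"tool: {action.tool} -> {output}")  # result goes back into context
    return "stopped: step limit reached"
```

Swapping `scripted_model` for a real model call and adding more entries to `TOOLS` is most of what separates this from a working agent; the `max_steps` cap keeps a confused model from looping forever.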

Sequoia partner @gradypb says software is shifting from apps that demand attention to agents that work quietly in the background. This shift will change what moats look like, and it will be especially hard for incumbents to deal with.

"It's two very different business paradigms," he says. "In an era of apps, you want a lot of surface area with your customers, you want them to spend a lot of time in your product."

"In an era of agents, things can just be running passively in the background. The amount of surface area you have with your customers, the amount of time they might spend in your product might be de minimis. So the nature of the moat that you [will need to build] is different."

"I think we'll see a lot of companies in the coming years able to live in the software world and have some of the workflows people are accustomed to. And then separately they can deploy these passive agents that kind of function as coworkers who just come back to you when things are done."

From his appearance on the show in January.
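One way to picture agents that "just come back to you when things are done" is fire-and-forget work with a completion callback. A minimal sketch using Python's standard `concurrent.futures`; `background_task` and the job names are hypothetical placeholders, not anything from the interview.

```python
import time
from concurrent.futures import Future, ThreadPoolExecutor


def background_task(name: str) -> str:
    """Hypothetical long-running agent job; here it just sleeps briefly."""
    time.sleep(0.1)
    return f"{name}: finished"


notifications: list[str] = []


def notify(future: Future) -> None:
    # The agent only surfaces when its work is done instead of demanding attention while it runs
    notifications.append(future.result())


with ThreadPoolExecutor(max_workers=2) as pool:
    for job in ("weekly report", "inbox triage"):
        pool.submit(background_task, job).add_done_callback(notify)
    # The user keeps working here; nothing blocks on the agents
# Leaving the 'with' block waits for both jobs, so both callbacks have already run
print(sorted(notifications))
```

The user-facing "surface area" here is just the `notifications` list, which is the shift from attention-hungry apps to quiet background coworkers.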

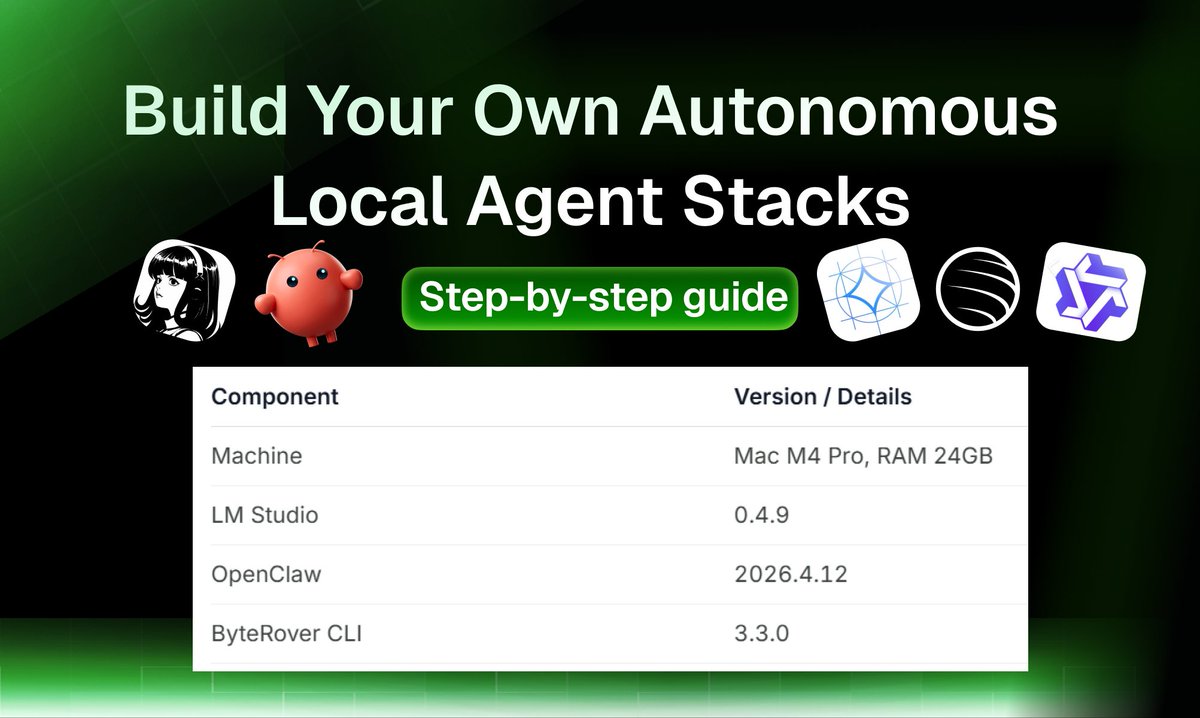

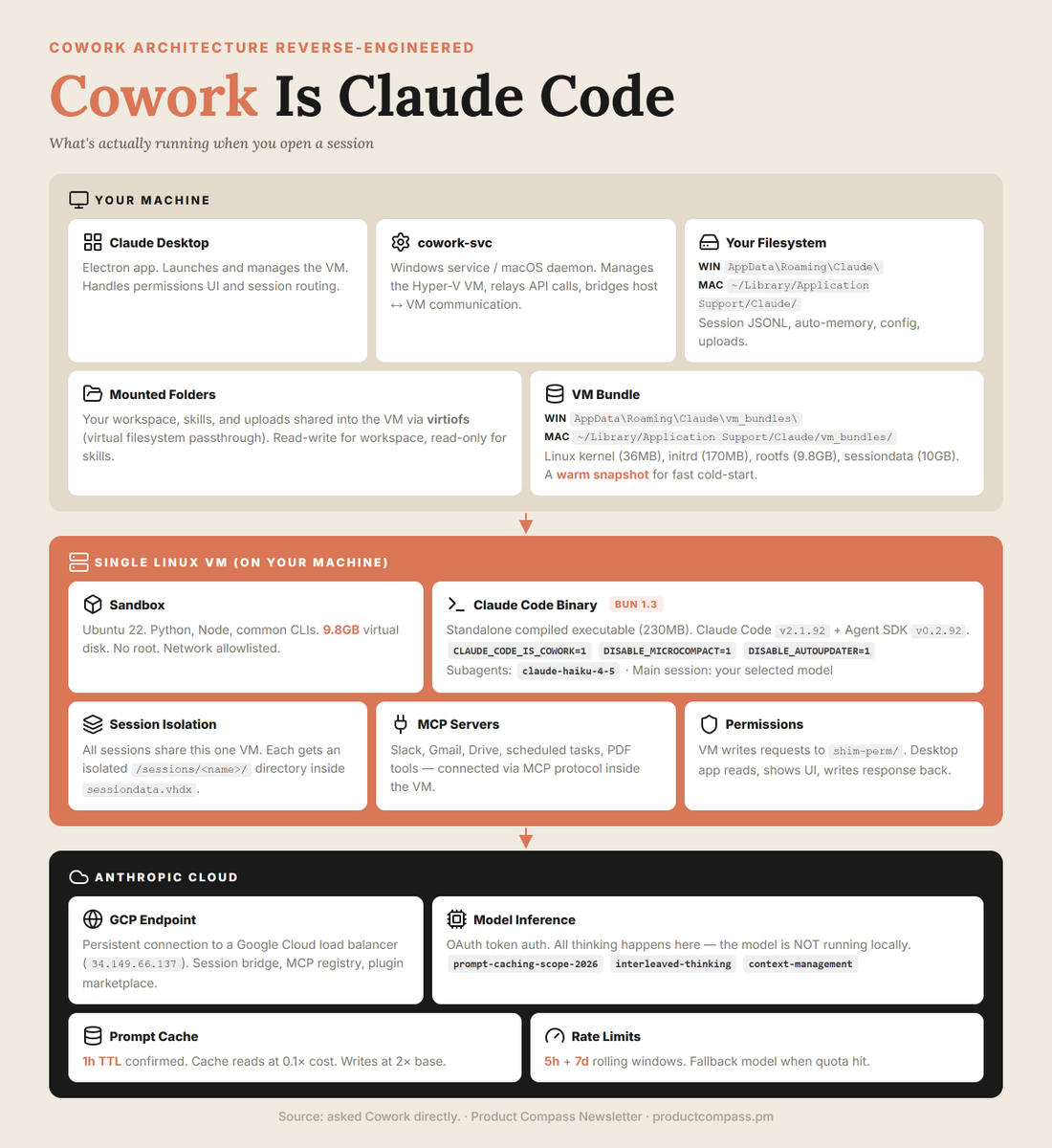

This guy literally broke down how to use Claude Code like an expert:

- 1:40 - Code vs Cowork vs OpenClaw
- 6:51 - Setting up context status line
- 12:03 - Sub-agents
- 17:49 - Creating skills
- 23:58 - Ask user questions tool
- 33:33 - Tool-powered skills: Tavily
- 36:57 - CLI vs MCP vs API hierarchy
- 39:30 - Make slides skill w/ Puppeteer
- 43:32 - Auto-invoking skills with hooks
- 46:49 - Jupyter notebooks for data trust
- 55:09 - The operating system file structure
