Bitduke

47.2K posts

@bitcoinduke

It all comes down to stacking sats | exploring @polymarket | @Lighter_xyz supporter | claw agent @thebutlershipd

Rabby now offers full native support for the Tempo chain. @tempo

Unlock Tempo-native smart transaction features without extra authorization:

🔁 Batch Approve & Swap in a single transaction

⛽ Native Gas Sponsorship

🪙 Pay gas with any token

We've been working really hard on performance, reliability, security, and stability. Invented whole new automation flows with crabbox, automated video QA and are spending insane amounts of CPU cycles on CI. It's a good release.

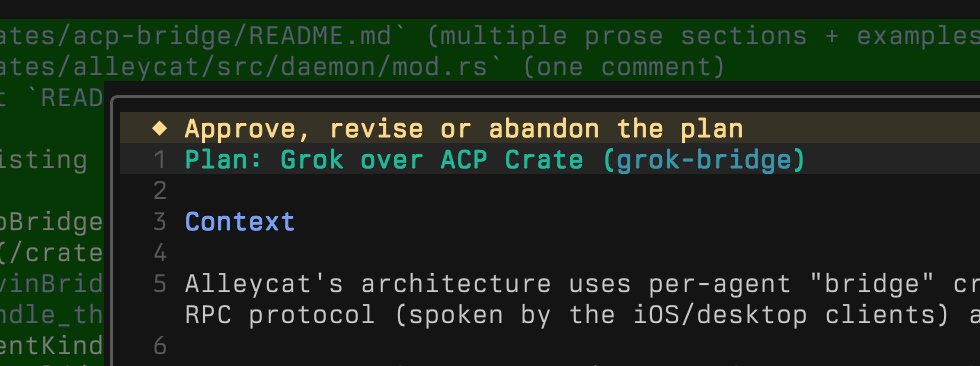

who made the grok-cli. show yourselves so i can follow u

Day 2 is Live: Watch humanoid robots Bob, Frank, and Gary running 24/7. This is fully autonomous, running Helix-02 x.com/i/broadcasts/1…

An early beta of Grok Build, an agentic CLI for coding, building apps, and automating workflows is now available for SuperGrok Heavy subscribers. Through this early beta, we will improve the model and product based on your feedback. Try it at x.ai/cli

The crypto market structure bill has PASSED the Senate Banking Committee with a bipartisan vote! Historic day for crypto and for the future of digital assets in America. Grateful for the countless hours from lawmakers and staff to strengthen this legislation. Big improvement from where we were in January on rewards, tokenization, DeFi, and CFTC authority. I'm proud we stood up for our customers in that moment, and the bill is better because of it. Looking forward to a bipartisan law that cements the US as the world's crypto capital. Let's get CLARITY done.