Q Blocks

92 posts

@blocks_q

Decentralised computing platform to help ML teams get 10x more affordable computing

We have successfully fine-tuned LLaMA 3 using the @monsterapis GPT instruct tune method. Overnight, we built 17 unique models and are testing them now. Early results? Very robust and quite extraordinary. No other 8B model can get close to these outputs. I am floored.

LoRA is a genius idea. To understand the fine-tuning of Large Language Models, you must understand how LoRA works. By the end of this post, you'll know everything important about how it works.

Large Language Models are good generalists, but they have little specialization. We train them on many different tasks, so they know a bit about everything but not enough about anything. Think of a kid who can play three different sports at a high level. While he can be proficient across the board, he won't get a scholarship unless he specializes. That's how the kid can reach his full potential.

We can do the same with these large models. We can train them to solve a particular task and nothing else. We call this process "fine-tuning." We start with everything the model knows and adjust its knowledge to help it improve on the task we care about.

Fine-tuning is revolutionary, but it's not free. Fine-tuning a large model takes time, care, and lots of money. Many companies can't afford the process. Some can't pay for the hardware. Some can't hire people who know how to do it. Most companies can't do either.

That's where LoRA comes in. We realized we could approximate the update to a large matrix of parameters using the product of two much smaller matrices. There was a lot of wasted space within these large models. What would happen if we found a new, more optimal representation?

Did you ever buy a map at a gas station? Giant pages showing every small road, path, and lake around you. They were exhaustive but hard to navigate. These are like parameters in a large model. LoRA turns a gas station map into a cartoon treasure map. Every useless parameter is gone. Only two roads, a palm tree, and a cross pointing at the treasure.

We don't need to fine-tune the entire model anymore. We only need to focus on the small treasure map that LoRA gives us. It's a mind-blowing trick. We can train the small approximation matrices from LoRA instead of fine-tuning the entire model.

LoRA is cheaper, faster, and uses less memory and storage space. You can also merge the approximation matrices with the model at deployment time. They work like simple adapters. You load up the one you need to solve a problem and use a different one for the next task.

Then, we have QLoRA, which makes the process much more efficient by adding 4-bit quantization. QLoRA deserves its own separate post.

The team at @monsterapis has created an efficient no-code LoRA/QLoRA-powered LLM fine-tuner. What they do is pretty smart: They automatically configure your GPU environment and fine-tuning pipeline for your specific model. For example, if you want to fine-tune Mixtral 8x7B on a smaller GPU, they will automatically use QLoRA to keep your costs down and prevent memory issues.

The @monsterapis platform specializes in no-code LoRA-powered fine-tuning. It's the fastest and most affordable offering for fine-tuning models in the market. They sponsored me and gave me 10,000 free credits for anyone who uses the code "SANTIAGO" in their dashboard: monsterapi.ai/finetuning

If you want to read their latest updates, get free credits and special offers, join their Discord server: discord.com/invite/mVXfag4…

TL;DR:
• Traditional fine-tuning: Trains the entire model. It requires a complex setup, more memory, and expensive hardware.
• LoRA: Trains a small portion of the model. It's faster, requires much less memory, and runs on affordable hardware.
• QLoRA: Much more efficient than LoRA, but it requires a more complex setup.
• No-code fine-tuning with LoRA/QLoRA: The best of both worlds. Low cost and easy setup.
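
To make the "two smaller matrices" idea concrete, here is a minimal sketch in plain Python. It is an illustration, not the code any platform mentioned here runs. It assumes the usual LoRA formulation: a frozen weight matrix `W` of shape d x k, plus a trainable update `B @ A` where `B` is d x r and `A` is r x k, with r much smaller than d and k. The function names (`lora_param_counts`, `lora_forward`, `merge`) and the `scale` argument are hypothetical names chosen for this example; `scale` stands in for the alpha / r factor used in the LoRA paper.

```python
def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(x * y for x, y in zip(row, v)) for row in m]

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def lora_param_counts(d, k, r):
    """Trainable parameters for one d x k matrix: (full fine-tuning, LoRA)."""
    return d * k, r * (d + k)

def lora_forward(W, A, B, x, scale=1.0):
    """Frozen path W @ x plus the adapter path scale * B @ (A @ x)."""
    base = matvec(W, x)                # W stays frozen during training
    delta = matvec(B, matvec(A, x))    # only A and B would be trained
    return [b + scale * dl for b, dl in zip(base, delta)]

def merge(W, A, B, scale=1.0):
    """Fold the adapter into the base weights: W + scale * (B @ A)."""
    BA = matmul(B, A)
    return [[w + scale * u for w, u in zip(w_row, u_row)]
            for w_row, u_row in zip(W, BA)]

# Parameter savings for a single 4096 x 4096 matrix at rank 8 (sizes assumed).
full, lora = lora_param_counts(4096, 4096, 8)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")

# Tiny hand-checkable example: the merged matrix gives the same output
# as the base-plus-adapter forward pass.
W = [[1.0, 2.0], [3.0, 4.0]]
A = [[1.0, 0.0]]        # r x k with r = 1
B = [[0.5], [1.0]]      # d x r
x = [2.0, 1.0]
print(lora_forward(W, A, B, x, scale=2.0))
print(matvec(merge(W, A, B, scale=2.0), x))
```

Swapping adapters amounts to keeping `W` fixed and loading a different `A` and `B` pair per task, while `merge` shows why a merged adapter adds no extra work at inference time.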

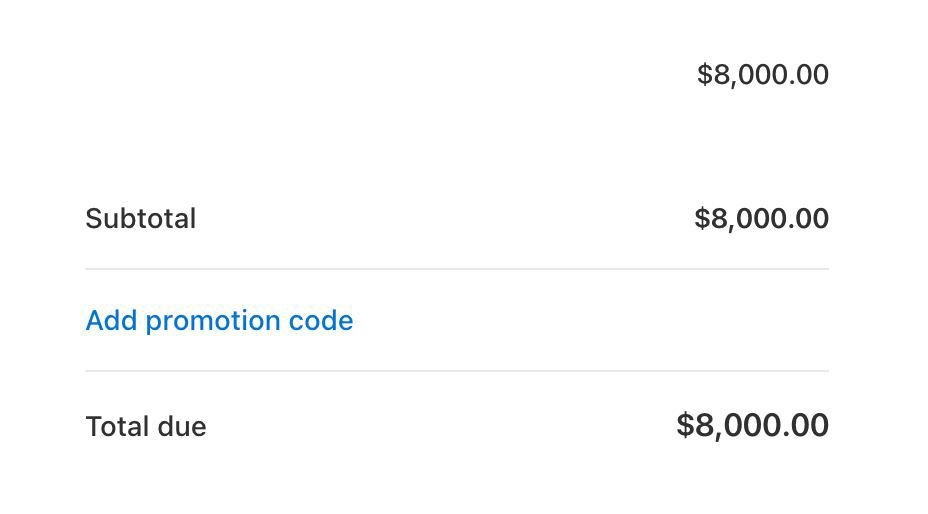

How to fine-tune Llama 2 without writing a single line of code. I taught Llama 2 to classify the sentiment of movie reviews. Setting everything up took me 10 minutes. The fine-tuning process lasted 6 hours.

If you aren't familiar with the term "fine-tuning," it’s the process we use to teach a model how to solve a specific task. Large Language Models have general knowledge but struggle to solve particular problems. Fortunately, we can fine-tune these models and make them very good at solving specific tasks. In this example, that task is determining how much a person liked a movie based on its review.

Unfortunately, fine-tuning a model is a complex, expensive process. It takes a lot of time, effort, and GPU computing. It's also hard to find experienced people who know how to do it. The team at @monsterapis built the first platform that offers no-code fine-tuning of open-source models, which changes everything. That’s the platform I’m using here.

Here is what you need to do:

1. Sign up here: monsterapi.ai/signup, and use the code SANTIAGO during sign-up to get 5,000 free API credits.
2. Go to the FineTuning option and select Llama 2 7B.
3. Select your task. I'm using "Text Classification" since we want to classify movie reviews.
4. Select your dataset. I used the IMDb dataset from HuggingFace.

The fine-tuning run took a bit under 6 hours to complete. The attached image shows the training loss over the first few steps. I spent 14,000 credits in the process, equivalent to $14.00.

That’s one of @monsterapis’ advantages: Besides not dealing with code, complexity, or hardware, their pricing is very competitive, thanks to their decentralized GPU platform.

Here's an article that provides a step-by-step guide for fine-tuning Llama 2: blog.monsterapi.ai/how-to-fine-tu…

You can also join @monsterapis’ Discord server for the latest updates, free credits, and special offers: discord.com/invite/mVXfag4…

Thanks to the team at @monsterapis for partnering with me on this post.

🔥 Join today's talk on #GenerativeAI given by @Gaurav_vij137 and @saurabhvij137, founders of @blocks_q, the company sponsoring the GPU VMs for #HackathonSomosNLP. ✨ They will announce a surprise ✨ ➡️ Join in! Click "Notify me" youtube.com/watch?v=3jgh6Z…

A smart way to train, fine-tune, and deploy your ML models ⚙️ The founder of @blocks_q, the company sponsoring the GPU VMs for #HackathonSomosNLP, will explain how they offer machines at such competitive prices and how to use them. ➡️youtube.com/watch?v=n7uaOp…

The future of computing is here & it’s exciting! If you're a generative AI dev, try Monster API and take your projects to the next level. monsterapi.ai

If you sign up now, you get:
- 5000 free credits (using GitHub)
- 2500 free credits (using another signup method)

We are live