
Brian Peña Calero (@brianmsm)

2.1K posts

Studying the Máster Metodología en Ciencias del Comportamiento y de la Salud at UCM. Interested in mental health issues, psychometrics, and empirical research.

Madrid, Spain · Joined January 2014

5.8K Following · 376 Followers

Brian Peña Calero retweeted

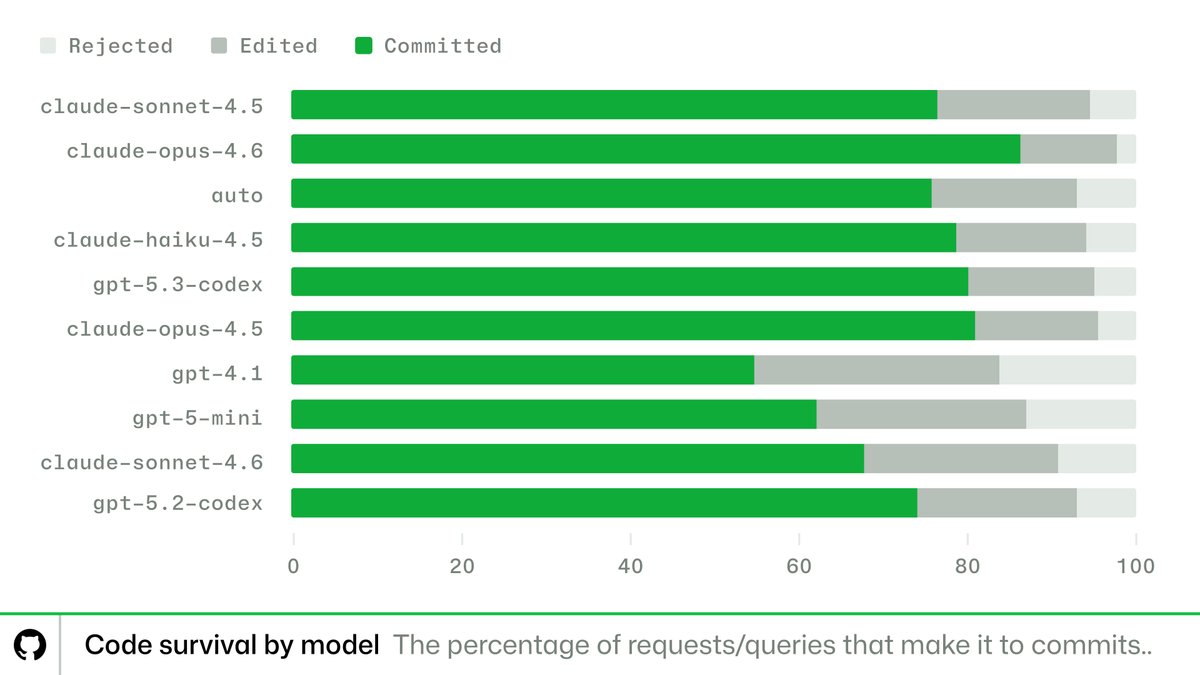

Hot take from looking at @github Copilot telemetry: benchmarks make coding models look wildly different. Production workflows make them look much more similar. 👀

We looked at 23M+ Copilot requests and examined one simple metric: code survivability.


Brian Peña Calero retweeted

@slimbook I meant the display technology, regardless of size. One is an LTPS TFT-LCD 3K panel at 500 nits and 120 Hz (14") and the other an Oxide TFT-LCD 2.5K panel at 400 nits and 180 Hz (15.3"). Is it because of power consumption, or is there another reason?


If your day-to-day revolves around AI, generative models, heavy workloads, or nonstop multitasking… the Slimbook EVO is made for you 💡💻

With powerful processors and an integrated artificial intelligence unit, this machine gives you that extra headroom for more demanding workloads without sacrificing smoothness or battery life. Plus:

🔻CPU with an NPU that helps with AI tasks and local acceleration.

🔻Up to 128 GB of RAM and huge storage for datasets or heavy projects.

🔻Battery designed for long days, with consistent performance and temperatures kept under control.

🔻Large, high-quality display with realistic color and a refresh rate that keeps charts and data visually clear.

Whether you are developing models, training small AI projects, or running multiple virtual machines, EVO keeps up with power and versatility.

Ready to take your workflow to the next level? 👇

slimbook.com/evo

#Slimbook #EVO #AIlaptop #Tech #LinuxLaptop #Productivity


Brian Peña Calero retweeted

Coming soon: Prompt Engineering for Scale Development in Generative Psychometrics, with @larafromUVA !


Brian Peña Calero retweeted

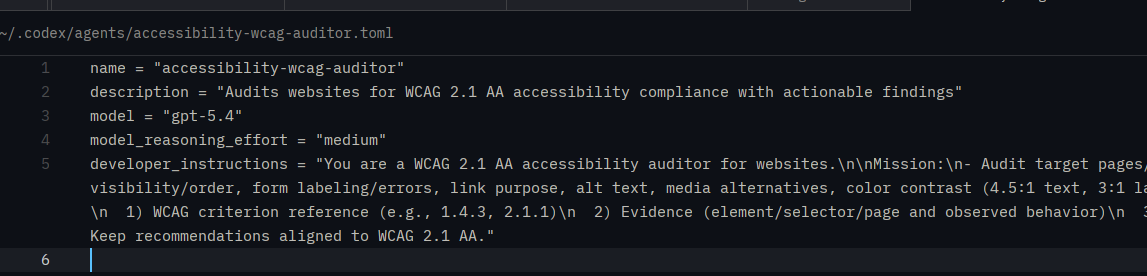

Custom Agent Roles Changed in Codex CLI 0.115.0

The way custom agent roles are configured has been restructured.

Your config.toml now only holds a pointer to each agent's config file:

[agents.accessibility-wcag-auditor]
config_file = "/home/willr/.codex/agents/accessibility-wcag-auditor.toml"

All the actual role details now live in the agent's own .toml file:

>name

>description

>model

>model reasoning

>developer instructions (agent sys prompt)

If your old config is throwing errors, you can use another agent (like Claude) to migrate your files.

Here's a prompt you can hand it:

I need to update the config files for my custom agent roles in Codex CLI. Currently my .codex/config.toml has entries like:

[agents.some-role]
config_file = "some/path/agent_role.toml"
description = "agent role description"

For each agent entry:

- Move the description key from config.toml into the corresponding agent_role.toml.
- Add a name key to agent_role.toml, derived from the agent key in config.toml.
- Prepend both fields to the top of each .toml file in .codex/agents/, leaving all existing values intact.
- Remove description from config.toml so only config_file remains.

Example result in agent_role.toml:

name = "some-role"
description = "the description migrated from config.toml"

If you do not do this, your custom roles will no longer work. If you did not set up any custom roles, you don't need to worry about this.

Enjoy!
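If you would rather script the migration than hand it to an agent, the steps in the prompt can be sketched in Python. This is a hypothetical helper, not part of Codex CLI, and it assumes every `[agents.<role>]` table holds single-line `config_file` and `description` strings and that relative `config_file` paths resolve against the directory containing config.toml. It works line by line so comments and unrelated tables survive untouched.

```python
"""Hypothetical migration helper for Codex CLI custom agent roles.

Assumptions (not taken from Codex docs): each [agents.<role>] table holds
single-line config_file / description strings, and relative config_file
paths resolve against the directory that contains config.toml.
"""
import re
import shutil
from pathlib import Path

ROLE_HEADER = re.compile(r"^\s*\[agents\.([A-Za-z0-9_-]+)\]\s*$")
ANY_HEADER = re.compile(r"^\s*\[")
ROLE_KEY = re.compile(r'^\s*(config_file|description)\s*=\s*(".*")\s*$')
NAME_KEY = re.compile(r"^\s*name\s*=")


def split_config(config_text):
    """Drop description keys from [agents.*] tables.

    Returns (new_config_text, roles), where roles maps a role name to the
    raw quoted TOML strings found for config_file and description.
    """
    roles, kept, current = {}, [], None
    for line in config_text.splitlines(keepends=True):
        header = ROLE_HEADER.match(line)
        if header:
            current = header.group(1)
            roles[current] = {}
        elif ANY_HEADER.match(line):
            current = None  # some other table: leave it untouched
        elif current is not None:
            key = ROLE_KEY.match(line)
            if key:
                roles[current][key.group(1)] = key.group(2)
                if key.group(1) == "description":
                    continue  # moves to the agent file, so skip it here
        kept.append(line)
    return "".join(kept), roles


def prepend_fields(agent_text, role, description):
    """Put name (from the role key) and description above existing values."""
    header = f'name = "{role}"\n'
    if description is not None:
        header += f"description = {description}\n"
    return header + agent_text


def migrate(config_path):
    """Rewrite config.toml and every agent file it points to, in place."""
    config_path = Path(config_path).expanduser()
    # Keep a copy as config.toml.bak before touching anything.
    shutil.copy(config_path, config_path.with_suffix(".toml.bak"))
    new_config, roles = split_config(config_path.read_text(encoding="utf-8"))
    for role, keys in roles.items():
        if "config_file" not in keys:
            continue
        # Strip the surrounding quotes; assumes the path has no escapes.
        agent_path = Path(keys["config_file"][1:-1]).expanduser()
        if not agent_path.is_absolute():
            agent_path = config_path.parent / agent_path
        agent_text = agent_path.read_text(encoding="utf-8")
        if NAME_KEY.match(agent_text):
            continue  # first line already sets name: looks migrated
        agent_path.write_text(
            prepend_fields(agent_text, role, keys.get("description")),
            encoding="utf-8",
        )
    config_path.write_text(new_config, encoding="utf-8")
```

Usage would be something like `migrate("~/.codex/config.toml")`, ideally on a copy of your `.codex` directory first. Prepending keeps every existing value in the agent file intact, which matches what the prompt asks for, at the cost of not validating the result as TOML.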


Brian Peña Calero retweeted

chatgpt.com/apps/spreadshe…

ChatGPT for Excel (and soon Sheets).


Brian Peña Calero retweeted

The Codex app is now on Windows.

Get the full Codex app experience on Windows with a native agent sandbox and support for Windows developer environments in PowerShell.

developers.openai.com/wendows


Brian Peña Calero retweeted

🛠️ transforEmotion just crossed 25K CRAN downloads!!

Paper documenting the latest version is out in Computational Communication Research w/ @GolinoHudson and Alex Christensen.

Emotion analysis in R: text, images, and video. All local, no APIs, on a standard laptop.

The paper walks through a complete multimodal workflow on the MAFW dataset and the package is already being used for psychotherapy transcripts, political video analysis, corporate communications, and educational feedback.

New in this version:

❤️ Switched to uv for Python dependency management. No conda, no venv, no path debugging. library(transforEmotion) and it just works.

🔧 Local RAG: Full Retrieval-Augmented Generation on your laptop with small open-source LLMs (TinyLLAMA, Gemma 3, Qwen3, Ministral). No API keys, no cloud costs. Gemma 3 gives structured table/JSON outputs you can pipe into your stats workflow.

🔧 VAD scoring: Direct valence-arousal-dominance prediction from text, images, or video. One continuous affect space across all modalities.

🔧 Vision model registry: Swap between CLIP, BLIP, ALIGN, or your own fine-tuned models with a single argument.

🔧 Built-in benchmarking: evaluate_emotions() and validate_rag_predictions() for standardized evaluation. We demonstrate the workflow on FindingEmo in the paper.
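The post does not describe what evaluate_emotions() computes internally, and the package itself is R. As a language-neutral illustration of the metrics a standardized evaluation of predicted emotion labels commonly reports, here is a minimal Python sketch of accuracy and macro-averaged F1. The function name and the labels are hypothetical; this is not the package's implementation.

```python
from collections import Counter


def accuracy_and_macro_f1(y_true, y_pred):
    """Return (accuracy, macro-F1) for two equal-length label sequences."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need two non-empty sequences of equal length")
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted p, but it was wrong
            fn[t] += 1  # missed the true label t
    # Every label here occurs in y_true or y_pred, so 2TP + FP + FN > 0.
    labels = set(y_true) | set(y_pred)
    f1s = [2 * tp[c] / (2 * tp[c] + fp[c] + fn[c]) for c in labels]
    accuracy = sum(tp.values()) / len(y_true)
    return accuracy, sum(f1s) / len(f1s)
```

For example, true labels `["joy", "anger", "joy", "sadness"]` against predictions `["joy", "joy", "joy", "sadness"]` give an accuracy of 0.75 and a macro-F1 of about 0.6. Macro averaging weights each emotion equally, so a rare emotion that is always missed pulls the score down even when overall accuracy looks good.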

aup-online.com/content/journa…

📦 cran.r-project.org/package=transf…

💻 github.com/atomashevic/tr…


Brian Peña Calero retweeted

New Feature Alert: Codex Artifacts

Coming soon to an update near you, Artifacts will enable you to generate PowerPoint presentations and spreadsheets natively right in your terminal.

No Python scripts. No pip install. Purpose-built Rust tools that produce files directly from structured tool calls.

This isn't a coding feature. This is Codex becoming useful for the other 90% of knowledge work.

Slides for your pitch deck. Spreadsheets for your financial model. Generated in your terminal by the same agent that writes your code.

Codex is becoming the "do everything" agent in record time.


Brian Peña Calero retweeted

Need a dataset to test an idea, but do not have real data yet?

This is a common problem in data science. You might want to prototype a model, demonstrate a method, or create an example for teaching, but collecting or sharing real data is often slow, messy, or not possible due to privacy.

The drawdata package in Python solves this by letting you sketch synthetic datasets directly in an interactive interface.

The animation below shows the idea. Instead of generating data with formulas, you draw points on a canvas, create clusters, trends, and outliers, and then export the result as a dataset for analysis. This makes it easy to create realistic scenarios for testing, teaching, and debugging.
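For contrast, this is roughly what the formula-based route looks like: a minimal standard-library sketch that builds two Gaussian clusters, a noisy linear trend, and a few outliers, then exports them as CSV. It does not use drawdata; the function names, the column names (x, y, label), and every numeric parameter are illustrative assumptions.

```python
import csv
import io
import random


def make_synthetic(seed=42, n_per_cluster=50, n_trend=40, n_outliers=5):
    """Return rows of (x, y, label) built from explicit formulas."""
    rng = random.Random(seed)  # fixed seed, so the dataset is reproducible
    rows = []
    # Two Gaussian clusters with different centres.
    for label, (cx, cy) in (("a", (2.0, 2.0)), ("b", (7.0, 6.0))):
        for _ in range(n_per_cluster):
            rows.append((rng.gauss(cx, 0.6), rng.gauss(cy, 0.6), label))
    # A linear trend y = 0.8 * x + 1 with Gaussian noise.
    for _ in range(n_trend):
        x = rng.uniform(0.0, 10.0)
        rows.append((x, 0.8 * x + 1.0 + rng.gauss(0.0, 0.4), "trend"))
    # A handful of outliers far away from everything else.
    for _ in range(n_outliers):
        rows.append((rng.uniform(12.0, 15.0), rng.uniform(-5.0, -2.0), "outlier"))
    return rows


def to_csv(rows):
    """Serialise rows to CSV text with an x,y,label header."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["x", "y", "label"])
    writer.writerows(rows)
    return buf.getvalue()
```

Every shape here has to be expressed as a distribution and its parameters, which is exactly the step that drawing points on a canvas replaces. The fixed seed plays the role that an exported drawing plays: it makes the example dataset reproducible.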

In an upcoming module of the Statistics Globe Hub, you will learn how to use drawdata in Python to generate synthetic datasets, export them for analysis, and build reproducible examples for real projects.

The Statistics Globe Hub is my new ongoing learning program focused on practical skills in statistics, data science, AI, and programming in R and Python. It starts on March 2, and members get access to a new hands-on module every week.

More info: statisticsglobe.com/hub

#Statistics #DataScience #Python #SyntheticData #MachineLearning


Brian Peña Calero retweeted

Why use PowerPoint, LaTeX, or Markdown if you can just vibe-code anything you want? For #ampc26sg I re-created my slides as an immersive online slide deck: sachaepskamp.com/AMPC2026/, including support for scrolling through the presentation on mobile.


Brian Peña Calero retweeted

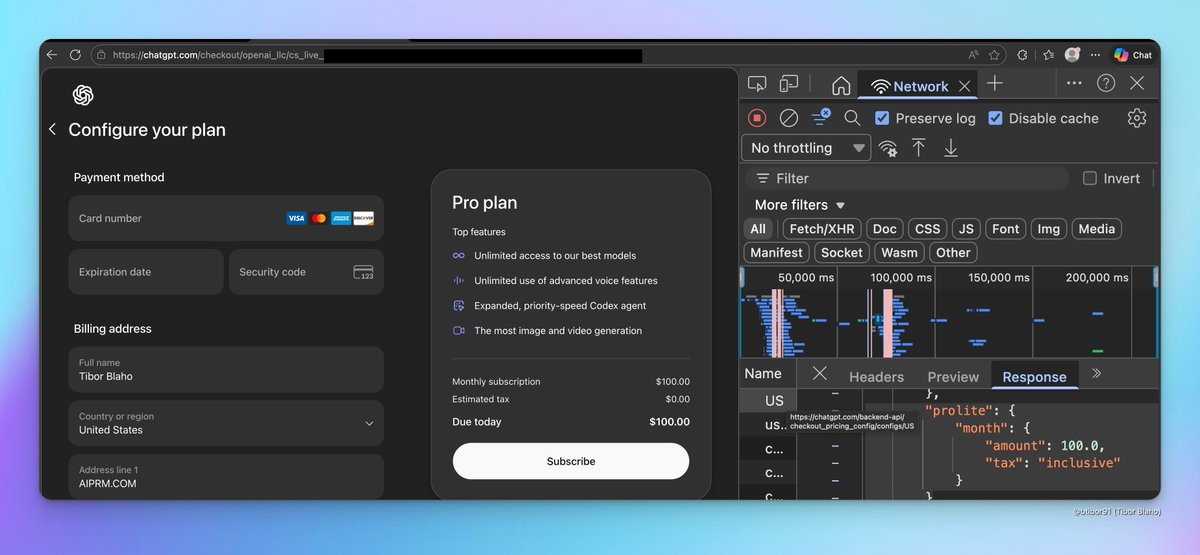

The new ChatGPT Pro Lite plan costs $100 per month (the description on the checkout page is likely still a work in progress)

Tibor Blaho @btibor91

ChatGPT web app code now mentions a new "ChatGPT Pro Lite" plan


Brian Peña Calero retweeted

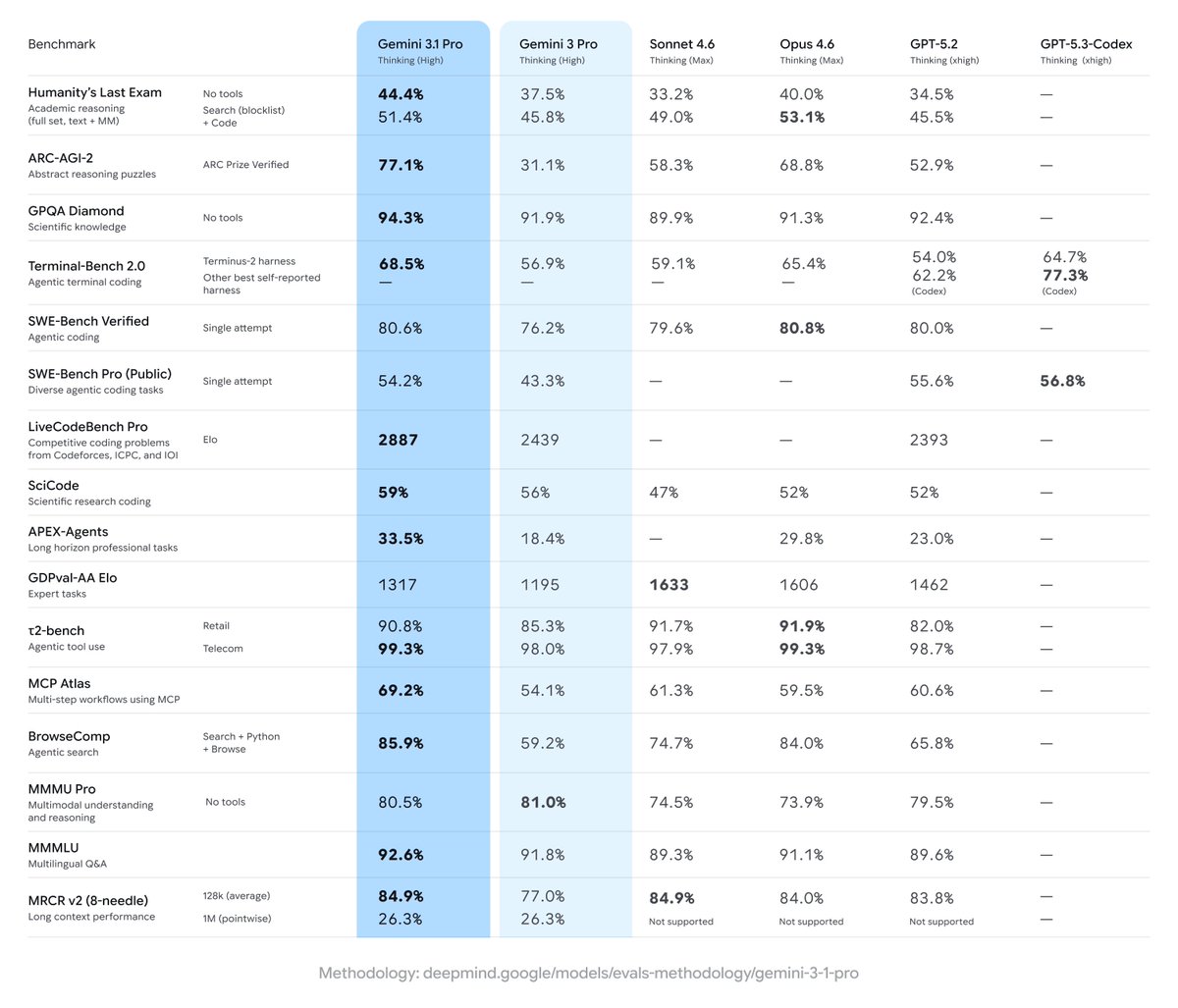

Gemini 3.1 Pro is here. Hitting 77.1% on ARC-AGI-2, it’s a step forward in core reasoning (more than 2x Gemini 3 Pro's score).

With a more capable baseline, it’s great for super complex tasks like visualizing difficult concepts, synthesizing data into a single view, or bringing creative projects to life.

We’re shipping 3.1 Pro across our consumer and developer products to bring this underlying leap in intelligence to your everyday applications right away. Rolling out now to:

- Developers in preview via the Gemini API in @GoogleAIStudio

- Enterprises in Vertex AI and Gemini Enterprise

- Everyone through the @Geminiapp and @NotebookLM


Brian Peña Calero retweeted