Fangyuan Xu

189 posts

@brunchavecmoi

许方园 👩🏻‍💻 phd student @ nyu, interested in natural language processing

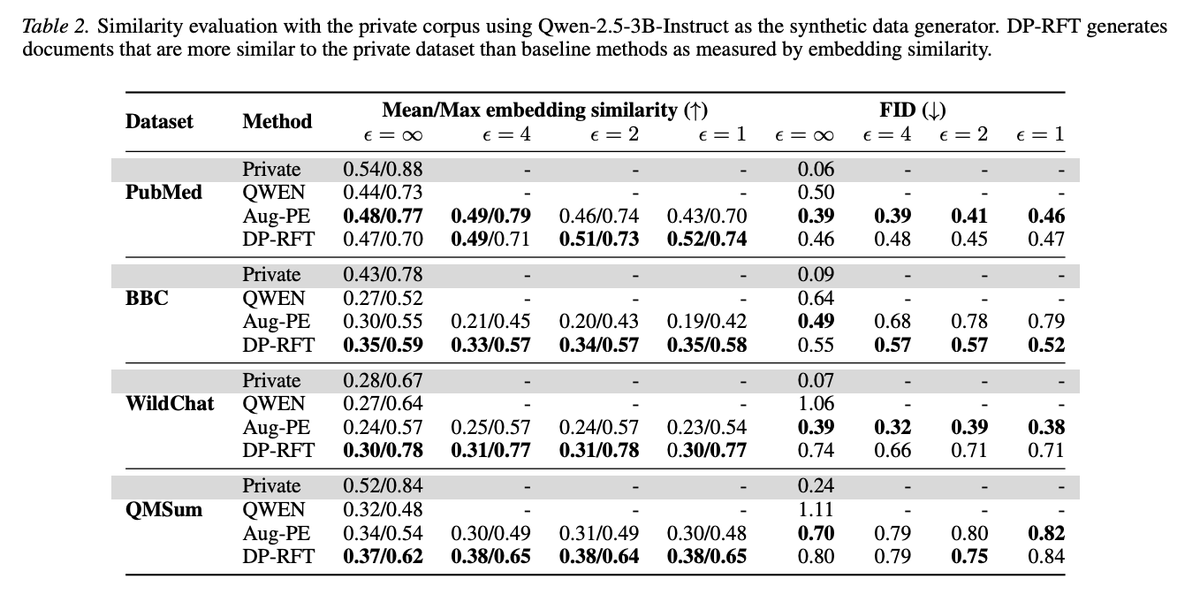

AI agents are running complex workflows, and writing full codebases and docs. But how do you verify these outputs at scale? An LLM judge gives you "3/5" with a broad reason. Which parts caused that score? Any patterns across outputs? We built Evalet 🔬 to fix this. CHI'26

🚀 Introducing AgentIR, a retriever that reads your agent’s mind (literally!) 🧠 Unlike humans, agents explicitly expose thoughts in reasoning tokens. Put them to use! 📈 Simple, substantial gains for agents on BrowseComp-Plus, 35% (BM25) ➡️ 50% (Qwen3-Embed) ➡️ 67% (AgentIR) 🧵

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
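
The loop described in this post (try a change, run a fixed-budget experiment, keep the change only if validation loss drops) can be sketched roughly as below. This is a toy illustration and not the code from the repo: `autoresearch_loop`, `toy_propose`, and `toy_evaluate` are hypothetical names, the "training run" is replaced by a cheap closed-form stand-in for validation loss, and "committing" is modeled as simply keeping the better config.

```python
import math
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def autoresearch_loop(
    start: Config,
    propose: Callable[[Config, random.Random], Config],
    evaluate: Callable[[Config], float],
    n_runs: int,
    seed: int = 0,
) -> Tuple[Config, float, List[float]]:
    """Keep-if-better search: each iteration stands in for one training run."""
    rng = random.Random(seed)
    best, best_loss = start, evaluate(start)
    history = [best_loss]  # one entry per run (one "dot" in the plot)
    for _ in range(n_runs):
        candidate = propose(best, rng)  # the agent edits the current best setup
        loss = evaluate(candidate)      # stand-in for a fixed-budget training run
        history.append(loss)
        if loss < best_loss:            # analogous to committing an improvement
            best, best_loss = candidate, loss
    return best, best_loss, history


def toy_propose(cfg: Config, rng: random.Random) -> Config:
    # Hypothetical edit: rescale the learning rate by a random factor.
    return {"lr": cfg["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0])}


def toy_evaluate(cfg: Config) -> float:
    # Hypothetical validation loss, minimized at lr = 0.01.
    return (math.log10(cfg["lr"]) + 2.0) ** 2


best, best_loss, history = autoresearch_loop(
    {"lr": 1.0}, toy_propose, toy_evaluate, n_runs=50
)
print(best, best_loss)
```

In a real setup, `evaluate` would launch the training script and read back the final validation loss, and the accept step would be a git commit on the feature branch; both are left out here to keep the sketch self-contained.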