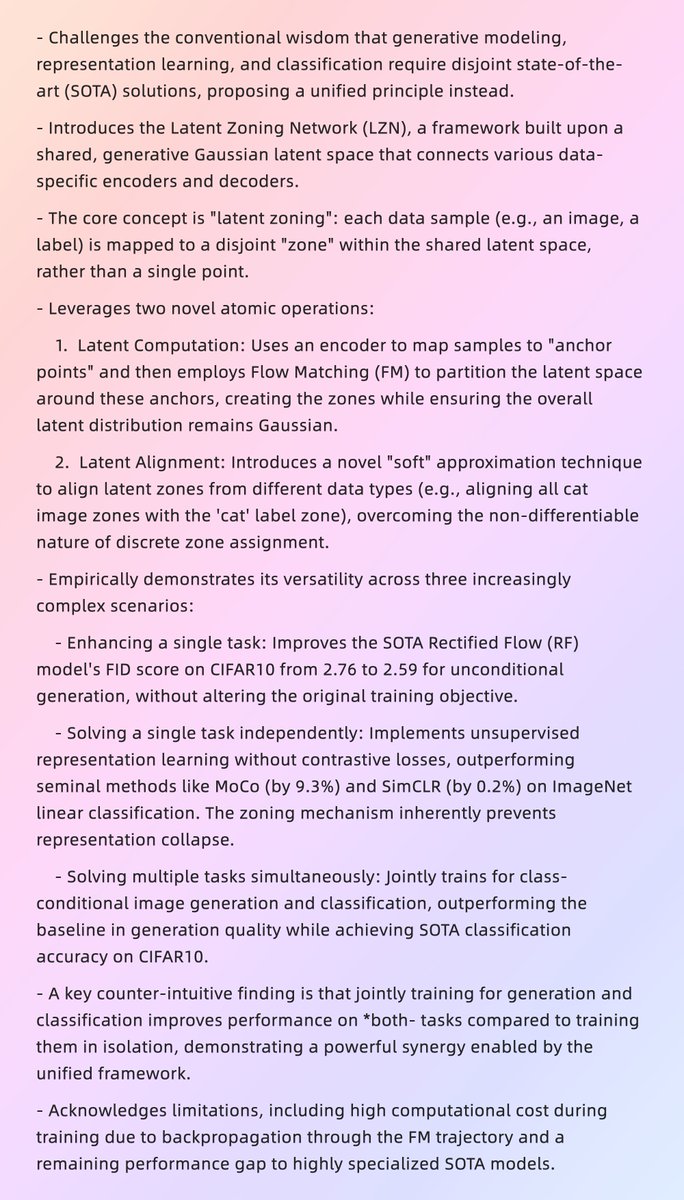

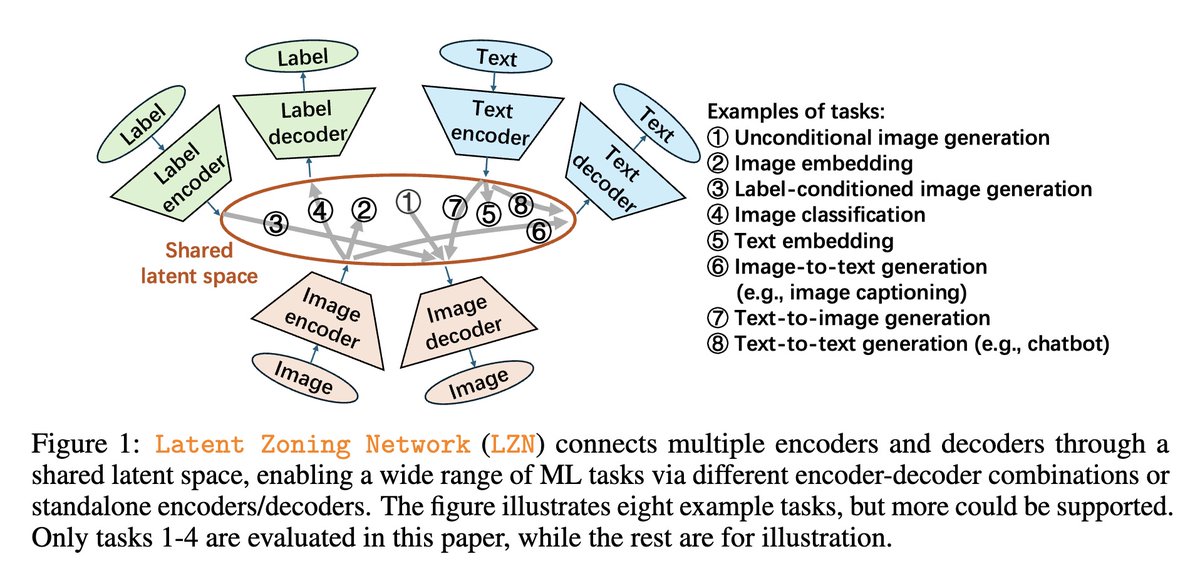

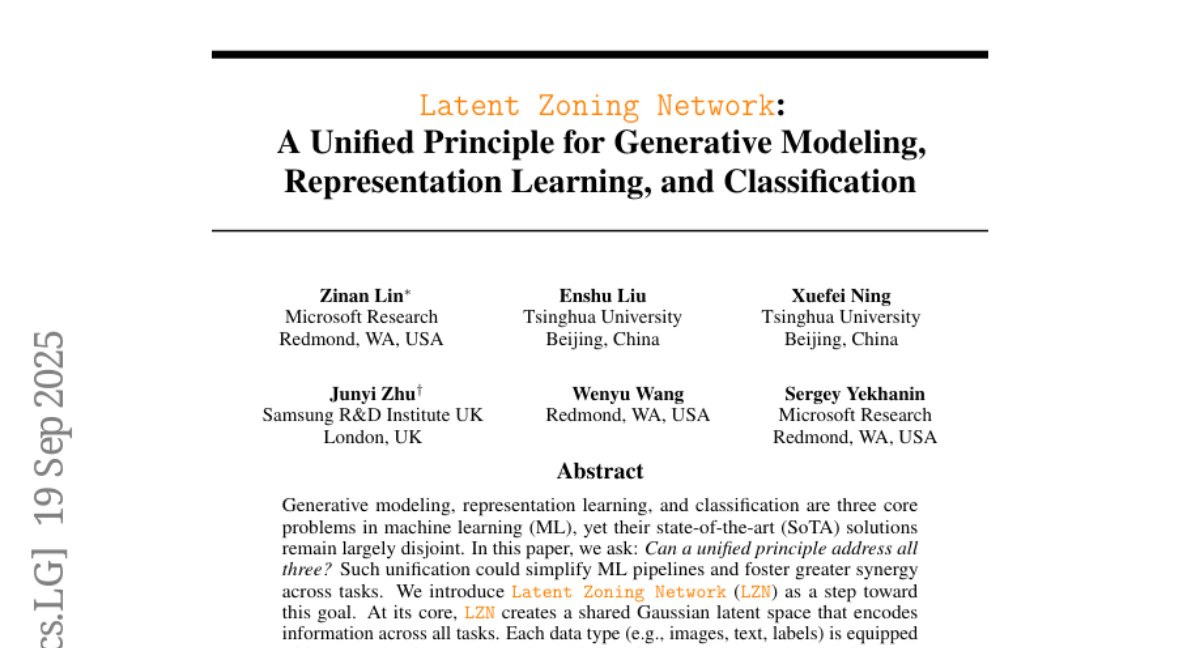

Latent Zoning Network: A Unified Principle for Generative Modeling, Representation Learning, and Classification

Zinan Lin

@lin_zinan

Principal Researcher at @MSFTResearch, PhD from @CarnegieMellon

BlueCodeAgent is an end-to-end blue-teaming framework built to boost code security using automated red-teaming processes, data, and safety rules to guide LLMs’ defensive decisions. Dynamic testing reduces false positives in vulnerability detection: msft.it/6014tMvnr

Code agents help streamline software development workflows, but may also introduce critical security risks. Learn how RedCodeAgent automates and improves “red-teaming” attack simulations to help uncover real-world threats that other methods overlook: msft.it/6013shouB

Gave a talk at @OpenAI on our work 🌸 POPri (“Policy Optimization for Private Data”). POPri is a huge improvement in synthetic data generation under security and privacy constraints! Learn more: