Fatos

20.8K posts


@bytebiscuit

Founder of #DataScienceInitiative. #ML / #MLOps / #AI / #Data @Microsoft. Prev. Cloud Architect @Oracle. Opinions are my own.

London · Joined March 2011

809 Following · 1.3K Followers

@cursor_ai team, is there any progress on integrating Cursor with Anthropic Foundry models? I don't see any update on this thread yet: forum.cursor.com/t/azure-claude…


Tunbridge Wells feels like a post-apocalyptic scene right now: no tap water for 5 days and no end in sight. Water rations are being distributed, shops are capping how many packs of water a family can buy, and restaurants, coffee shops, gyms, and hotels are all closed.

Have never binned so many plastic bottles in my life.


Fatos reposted

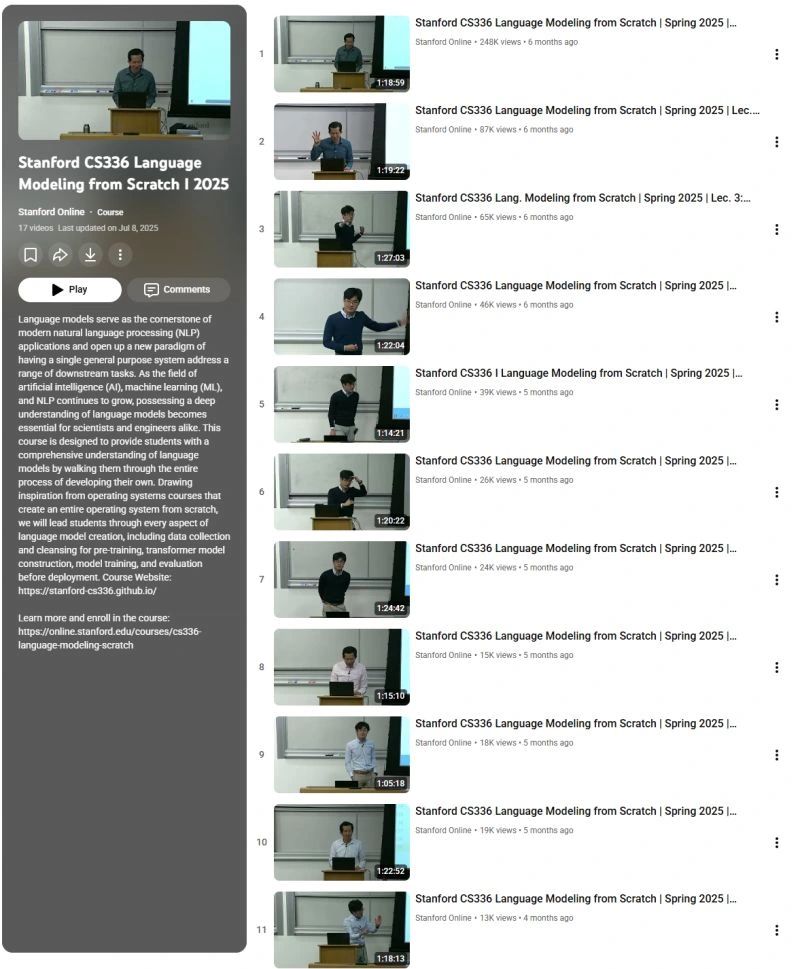

There are 2 career paths in AI right now:

The API Caller: Knows how to use an API. (Low leverage, first to be automated, $150k salary).

The Architect: Knows how to build the API. (High leverage, builds the tools, $500k+ salary).

Bootcamps train you to be an API Caller. This free 17-video Stanford course trains you to be an Architect.

It's CS336: Language Modeling from Scratch.

The syllabus is pure signal, no noise:

➡️ Data Collection & Curation (Lec 13-14)

➡️ Building Transformers & MoE (Lec 3-4)

➡️ Making it fast (Lec 5-8: GPUs, Kernels, Parallelism)

➡️ Making it work (Lec 10: Inference)

➡️ Making it smart (Lec 15-17: Alignment & RL)

Choose your path.

(I will put the playlist in the comments.)

♻️ Repost to save someone $$$ and a lot of confusion.

✔️ You can follow @techNmak, for more insights.


I will be speaking at DataFest Tbilisi in Georgia later this month.

On the first day, my talk will cover the anatomy of a production-ready multi-agent architecture, focusing on orchestration, governance, and scale.

On the second day, I will demonstrate how to deploy a production-grade call automation system: giving AI a Voice.


Pushed new code to integrate with AI Foundry Agent Service using Voice Live API and a new UI component to allow easier triggering and live transcription monitoring: github.com/Azure-Samples/… [branch: feature-ai-voice-live-api]


Great overview of VaultGemma's differential privacy implementation during the pre-training stage. youtube.com/watch?v=UwX5zz…



What’s your telltale sign that a piece of text was written by AI?

For me it's the short 3-4 word sentences, split into multiple paragraphs to look like a poem. Each paragraph has a set of questions with follow-up "profound" answers.

And the biggest of all: symmetry. Too much symmetry. Human thought is not symmetrical when it comes to expressing itself in textual form.


Fatos reposted

Excited to release new repo: nanochat!

(it's among the most unhinged I've written).

Unlike my earlier similar repo nanoGPT which only covered pretraining, nanochat is a minimal, from scratch, full-stack training/inference pipeline of a simple ChatGPT clone in a single, dependency-minimal codebase. You boot up a cloud GPU box, run a single script and in as little as 4 hours later you can talk to your own LLM in a ChatGPT-like web UI.

It weighs ~8,000 lines of imo quite clean code to:

- Train the tokenizer using a new Rust implementation

- Pretrain a Transformer LLM on FineWeb, evaluate CORE score across a number of metrics

- Midtrain on user-assistant conversations from SmolTalk, multiple choice questions, tool use.

- SFT, evaluate the chat model on world knowledge multiple choice (ARC-E/C, MMLU), math (GSM8K), code (HumanEval)

- RL the model optionally on GSM8K with "GRPO"

- Efficient inference the model in an Engine with KV cache, simple prefill/decode, tool use (Python interpreter in a lightweight sandbox), talk to it over CLI or ChatGPT-like WebUI.

- Write a single markdown report card, summarizing and gamifying the whole thing.

Even for as low as ~$100 in cost (~4 hours on an 8XH100 node), you can train a little ChatGPT clone that you can kind of talk to, and which can write stories/poems, answer simple questions. About ~12 hours surpasses GPT-2 CORE metric. As you further scale up towards ~$1000 (~41.6 hours of training), it quickly becomes a lot more coherent and can solve simple math/code problems and take multiple choice tests. E.g. a depth 30 model trained for 24 hours (this is about equal to FLOPs of GPT-3 Small 125M and 1/1000th of GPT-3) gets into 40s on MMLU and 70s on ARC-Easy, 20s on GSM8K, etc.

My goal is to get the full "strong baseline" stack into one cohesive, minimal, readable, hackable, maximally forkable repo. nanochat will be the capstone project of LLM101n (which is still being developed). I think it also has potential to grow into a research harness, or a benchmark, similar to nanoGPT before it. It is by no means finished, tuned or optimized (actually I think there's likely quite a bit of low-hanging fruit), but I think it's at a place where the overall skeleton is ok enough that it can go up on GitHub where all the parts of it can be improved.

Link to repo and a detailed walkthrough of the nanochat speedrun is in the reply.
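As a rough sanity check on the cost figures quoted in the post above, the two price points imply a similar hourly rate for the 8XH100 node. The sketch below assumes a flat rate of about $24/hour, which is inferred from the post's own numbers (~$1000 / ~41.6 hours) and is not stated in it; the constant and function names are hypothetical.

```python
# Assumed flat hourly price for an 8XH100 node, inferred from the post
# (~$1000 / ~41.6 hours). Real cloud pricing varies by provider.
ASSUMED_NODE_USD_PER_HOUR = 24.0


def training_cost_usd(hours: float,
                      usd_per_hour: float = ASSUMED_NODE_USD_PER_HOUR) -> float:
    """Return the estimated cost of renting the node for `hours`."""
    return hours * usd_per_hour


# Training durations mentioned in the post: ~4 h, ~12 h, ~41.6 h.
for hours in (4, 12, 41.6):
    print(f"{hours:>5} h -> ~${training_cost_usd(hours):,.0f}")
```

Under this assumed rate, the ~4-hour run comes to about $96, which is consistent with the post's "~$100" figure.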


The decline of a great nation officially started today unless a future government with a new leader decides to remove this abomination of a policy.

Rohan Paul @rohanpaul_ai

H1B US Visa now costs $100K per year. This new rule begins in a week. SF Bay Area is about to feel the disruption. If you are outside the U.S. and need to start or resume H-1B work, your employer must budget for the $100K per year.


What a fascinating episode from @MLStreetTalk on Embodied AI with Noumenal's Dr. Maxwell Ramstead and Jason Fox.

They describe a different paradigm from today's transformer-based AI systems, one that moves away from fractured representations, or 'knowledge', stored in billions of distributed parameters and hard to extract as discrete, composable units. They propose a new architecture that aims to learn representations of objects defined by their boundaries, along with representations of the interactions between those objects. These interaction-based representations are discrete, composable, and grounded in our physical reality, unlike the fractured statistical correlations learned by transformers, which are based on a compressed level of reality (i.e. language).

I also really like the idea of 'model marketplaces' where, instead of one entity (company) owning the weights of a monolithic giant model, we have a small repository of skills / models that represent various actions, objects, etc. and can be composed with other skills / models. This would enable a new creator economy of Embodied AI skills.

Imagine a marketplace where you can buy / sell Embodied AI skills:

"how to grasp a mug"

"how to fold fabric"

"how to navigate cluttered hallways"

The industry needs to work towards standardising the robotics infrastructure to support such future marketplaces. At the moment the robotics ecosystem is very fragmented, with varying hardware stacks, firmware, middleware, simulation tools, etc.

Exciting times ahead.

youtube.com/watch?v=jsIt2s…



@IPKOTelecom is the most unreliable internet provider with the worst customer service in the whole telco world. Unfortunately they have monopolized the market in Kosovo. If there are ISPs out there, please consider investing in Kosovo; there are countless people wanting to switch away from IPKO.

What we're facing as customers:

1. network issues with intermittent outages (sometimes for days)

2. a customer service that does not give you any clarity

3. complaints are not addressed

4. new client onboarding - misalignment between customer service promises and field engineer availability

Untapped opportunity for serious ISPs.


Document Chunking is about breaking content into smaller, manageable pieces for better retrieval while preserving context.

Here are a few techniques to consider:

1. Token- or Character-based Chunking

- Only use when the structure or layout of a document is not important

2. Semantic-based Chunking

- Use when advanced understanding of document layout is required. Can handle a mixture of structures: text, tables, images

3. Sliding-Window Chunking

- Preserve context across boundaries of text

4. Topic-based Chunking

- Each chunk represents a coherent and meaningful section of content, which can be enhanced by heuristics (e.g. headings, bullet points, etc.)

5. Document Layout Chunking

- Leverages the formatting and organization of the document to create contextually relevant chunks
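A few of the techniques above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not a production chunker: it counts characters instead of tokens, treats any line starting with `#` as a Markdown heading for the layout-based variant, and all function names are hypothetical.

```python
from typing import List


def chunk_by_chars(text: str, size: int) -> List[str]:
    """Fixed-size character chunking: ignores document structure."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [text[i:i + size] for i in range(0, len(text), size)]


def chunk_sliding_window(text: str, size: int, overlap: int) -> List[str]:
    """Sliding-window chunking: consecutive chunks share `overlap` characters."""
    if size <= 0:
        raise ValueError("size must be positive")
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + size])
        if start + size >= len(text):
            break  # the last window already reached the end of the text
    return chunks


def chunk_by_headings(markdown: str) -> List[str]:
    """Layout-based chunking: start a new chunk at each line beginning with '#'.

    Simplification: a '#' at the start of a line is assumed to be a heading.
    """
    chunks, current = [], []
    for line in markdown.splitlines():
        if line.startswith("#") and current:
            chunks.append("\n".join(current).strip())
            current = []
        current.append(line)
    if current:
        chunks.append("\n".join(current).strip())
    return [c for c in chunks if c]


if __name__ == "__main__":
    print(chunk_by_chars("abcdefghij", 4))
    print(chunk_sliding_window("abcdefghij", 4, 2))
    print(chunk_by_headings("# A\ntext a\n## B\ntext b"))
```

The overlap in the sliding-window variant is what preserves context across chunk boundaries, at the cost of storing some text twice. Semantic and topic-based chunking usually need an embedding model or a layout parser and are not covered by this sketch.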


@venturetwins What codebases has Gemini been exposed to during training? 👀👀
