Carlos Herrera

Why buying TBPN for OpenAI was just insane. "Buying a media company right after telling the team to focus is a contradiction. It signals a lack of discipline and looks like a vanity project. When you are running one of the most important companies in the world, focus has to be absolute." @rodriscoll Love to hear your thoughts @benthompson

Claude for Word is now in beta. Draft, edit, and revise documents directly from the sidebar. Claude preserves your formatting, and edits appear as tracked changes. Available on Team and Enterprise plans.

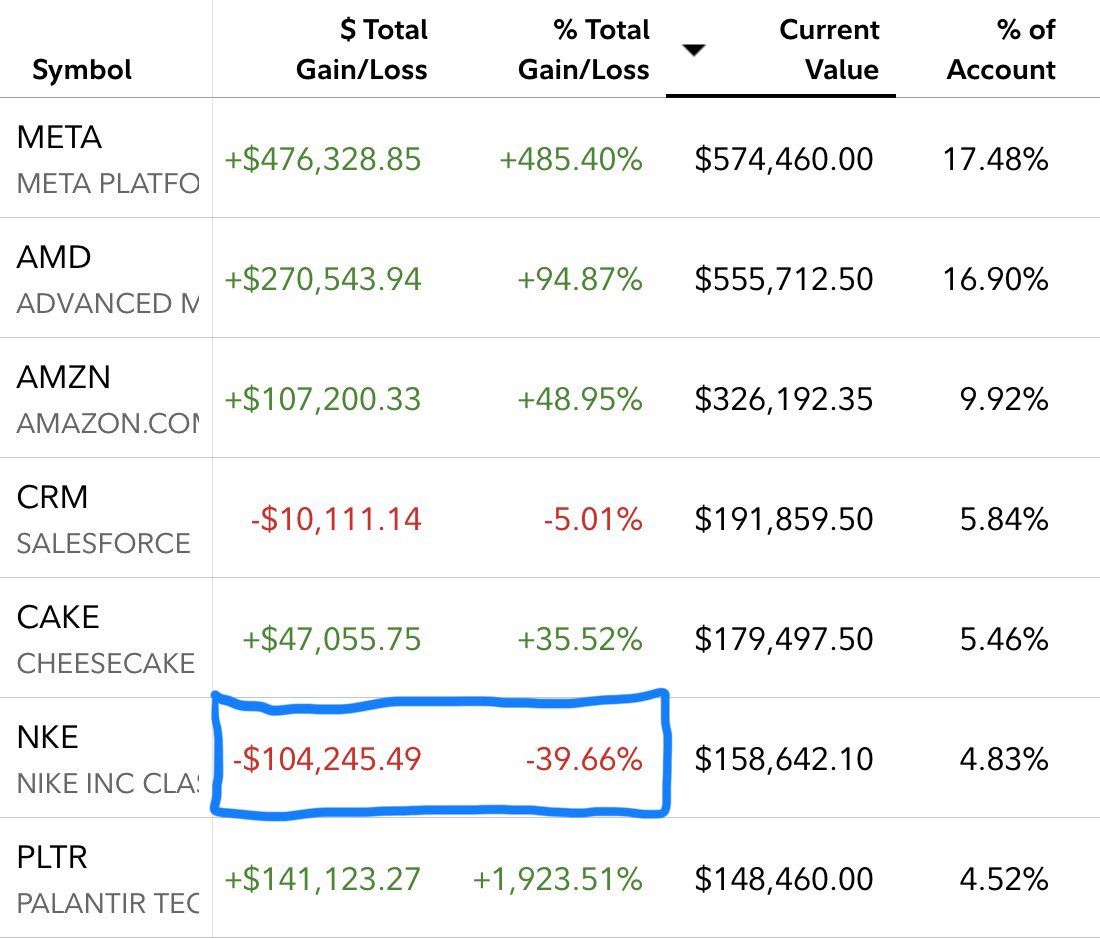

I don’t know how I would handle it as a human. 😱

Introducing: Free Tier for Browser Use Cloud 🚀

We’re giving all agents their own cloud browsers!

- Unlimited browser hours
- Free proxies
- Persistent authentication

Let your agents try for free ↓🔗