camenduru

3.2K posts

@camenduru

building 🍞 @tost_ai ❤ open source https://t.co/8MMNbygz1P

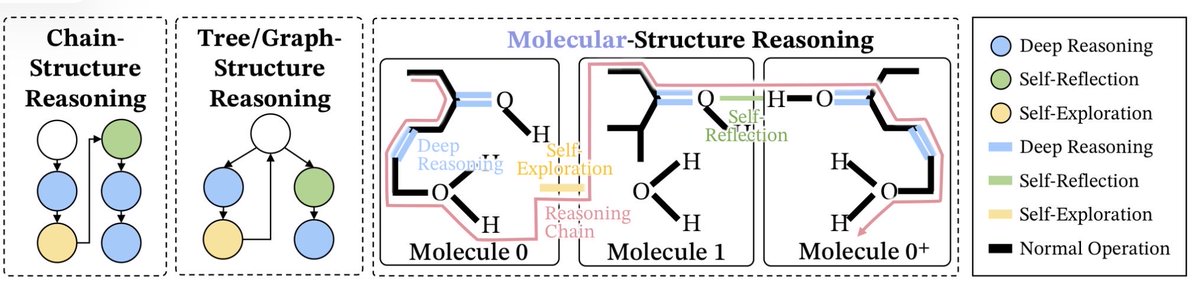

💡Introducing 𝑼𝑴𝑶 -- one unified model that unlocks motion foundation model (HY-Motion @TencentHunyuan) priors for 𝟏𝟎+ 𝐭𝐚𝐬𝐤𝐬: 𝐞𝐝𝐢𝐭𝐢𝐧𝐠, 𝐫𝐞𝐚𝐜𝐭𝐢𝐨𝐧 𝐠𝐞𝐧𝐞𝐫𝐚𝐭𝐢𝐨𝐧, 𝐬𝐭𝐲𝐥𝐢𝐳𝐚𝐭𝐢𝐨𝐧, 𝐭𝐫𝐚𝐣𝐞𝐜𝐭𝐨𝐫𝐲 𝐜𝐨𝐧𝐭𝐫𝐨𝐥, 𝐨𝐛𝐬𝐭𝐚𝐜𝐥𝐞 𝐚𝐯𝐨𝐢𝐝𝐚𝐧𝐜𝐞, 𝐤𝐞𝐲𝐟𝐫𝐚𝐦𝐞 𝐢𝐧𝐟𝐢𝐥𝐥𝐢𝐧𝐠... (1/8) 🌐 Webpage: oliver-cong02.github.io/UMO.github.io/ 📄 Paper: arxiv.org/abs/2603.15975

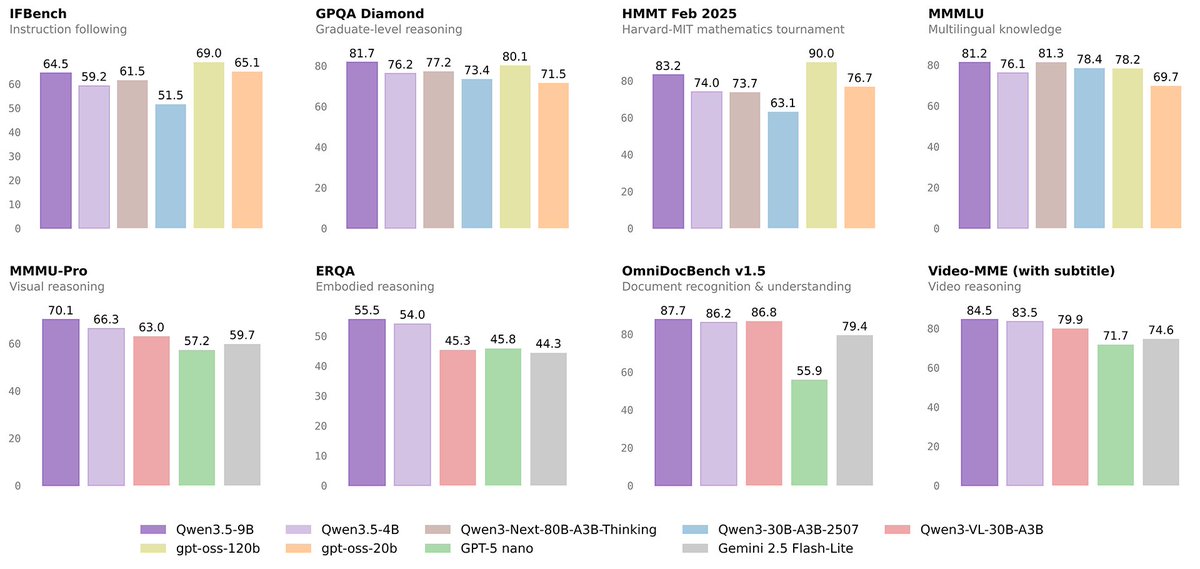

Introducing M2.5, an open-source frontier model designed for real-world productivity.

- SOTA performance at coding (SWE-Bench Verified 80.2%), search (BrowseComp 76.3%), agentic tool-calling (BFCL 76.8%) & office work.
- Optimized for efficient execution, 37% faster at complex tasks.
- At $1 per hour with 100 tps, infinite scaling of long-horizon agents now economically possible

MiniMax Agent: agent.minimax.io
API: platform.minimax.io
CodingPlan: platform.minimax.io/subscribe/codi…
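To put the "$1 per hour with 100 tps" figure in per-token terms, here is a rough back-of-the-envelope sketch. It assumes the $1 covers one hour of continuous generation at 100 tokens per second, which the post does not spell out; the function names are hypothetical and exist only for this illustration.

```python
SECONDS_PER_HOUR = 3600


def tokens_per_hour(tokens_per_second: float) -> float:
    """Tokens produced in one hour of uninterrupted generation."""
    return tokens_per_second * SECONDS_PER_HOUR


def cost_per_million_tokens(dollars_per_hour: float, tokens_per_second: float) -> float:
    """Implied price per one million tokens at a flat hourly rate.

    Assumption: the hourly price buys continuous output at the stated
    throughput. Real pricing may count input tokens or idle time differently.
    """
    return dollars_per_hour / tokens_per_hour(tokens_per_second) * 1_000_000


if __name__ == "__main__":
    hourly = tokens_per_hour(100)
    implied = cost_per_million_tokens(1.0, 100)
    print(f"tokens per hour: {hourly:,.0f}")
    print(f"implied cost per million tokens: ${implied:.2f}")
```

Under that assumption, one hour at 100 tps is 360,000 tokens, so the implied rate is roughly $2.78 per million tokens.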

We're releasing ACE-Step-v1.5 (2B), a fast, high-quality open-source music model. It runs locally on a consumer-grade GPU, generates a full song in under 2 seconds (on an A100), supports LoRA fine-tuning, and beats Suno on common eval metrics.

GitHub: github.com/ace-step/ACE-S…

Key traits:

- Quality: beats Suno on common eval scores
- Speed: full song under 2s on A100
- Local: ~4GB VRAM, under 10s on RTX 3090
- LoRA: train your own style with a few songs
- License: MIT, free for commercial use
- Data: fully authorized plus synthetic

The music AI space lacks commercial-grade open models. Many creators are forced to rely on closed-source services, and can’t fully own, run locally, or fine-tune their own models. We want to help change that.