Hanuma

971 posts

@castasstring

Indian software engineer.

Hyderabad, India · Joined January 2018

846 Following · 44 Followers

@IncomeTaxIndia @CAChirag It isn’t working. I’ve been trying to login for a long time, but it doesn’t do anything

Dear @CAChirag,

Our checks show that the e-filing portal is working fine. May we request you to share the specific details of the issue you've encountered (along with your mobile number & a screenshot of the error) with us via email at orm@cpc.incometax.gov.in. Our team will get in touch with you.

The @IncomeTaxIndia has sent a new SMS stating that ITRs are live on the portal. File today, on e filing portal

but their tax portal is down!

What to do?

Hanuma retweeted

@realhyderabad86 @SulliRonni61327 NRIs buying with dollars is probably the very reason why 99% of people, working in India and paying taxes here, are not able to afford homes right now. Builders are building to lure NRIs, which is of course their right as well.

@SulliRonni61327 I don't expect corrections of more than 10-15% if at all it happens. The NRI inflows continue unabated. Though not like 2022/23.

Many just buy for the sake of it

We Telugus have a deep-rooted, subconscious obsession with real estate.

What we witnessed during KCR’s era was just half the picture. The real bubble is yet to form—a time when everything catches fire.

At some point, Hyderabad will see peak euphoria—a phase of blind speculation, FOMO-driven buying, and unsustainable price spikes.

We won’t know when the euphoria will come. But when it does, it will be legendary.

Hanuma retweeted

🙇 It hurts so much 🥺

Babu⭐@AkhilAkkineni8 🦁

Hollywood Cut Out 🕺🔥

Attitude Nawab

King of Walking Style 💥

Boss of Royalty 🤯🔥

👑 @iamnagarjuna's son 💥

#AkhilAkkineni #Akhil6

Hanuma retweeted

@sayajiraogaikwa @drsunita02 What’s the source of this info table? Is it from any journal? Can you share it as well?

Ragi is diabetes friendly!!!

This is misinformation.

Ragi has about the same carbohydrates as rice, wheat and other millets, and is just as high in calories.

This chart deserves a framed place in every kitchen.

Every family member needs to know the nutritional content of the foods they're eating.

So @nithyshankar and I adopted a baby girl and are thrilled to be parents to baby N. On a six month maternity break from work— going from making sense of corporate mumbo jumbo to baby babble for now 😄

@RohitGupta724 @IndianRailMedia @RailMinIndia @RailwaySeva The train is halted. Please check the news on television. The track has been severely damaged.

Hanuma retweeted

@DCP_Noida @DCPCentralNoida @jtcpnoidaHQ @jtcpnoida @DCPGreaterNoida @adgzonelucknow @adgzonemeerut @Uppolice @dmgbnagar @dmnoidacircle @myogioffice Sir, attaching details of tonight's incident involving Divino residents. We need your help to get justice, justice from the BJP government.

@Krishank_BRS @BRSparty @BRSTechCell @BRSParty_News @KTRBRS @BTR_KTR @KTR_News @kmr_ktr @TSwithKCR @KonathamDileep @GulabiDalapati @SajjanarVC @tsrtcmdoffice not sure if it already came to your notice, and hence tagging you.. the security concerns raised by the driver are true…please see what best can be done.

We often discuss DevOps, but the 'Ops' world extends beyond just DevOps. Integrating various Ops disciplines, including the emerging trend of LLMOps, with different aspects of product development, is enhancing our ability to create superior software.

linkedin.com/posts/hanuma-p…

#Telangana: #Congress Chief #RevanthReddy has told state DGP, ADG law and order that party could consider a swearing in ceremony tmrw if possible or on 9th as planned, asking DGP to make adequate security arrangements. DGP met Reddy earlier in the day.

#TelanganaElectionResults

Grateful to the people of Telangana for giving @BRSparty two consecutive terms of Government 🙏

Not saddened by the result today, but surely disappointed, as it was not along expected lines for us. But we will take this in our stride as a learning and will bounce back

Congratulations to Congress party on winning the mandate. Wishing you Good Luck

Looks like a good idea. Not sure about the cost effectiveness and the actual usefulness as yet. If possible, can we consider and try it on our ORR and busy highways of Telangana ? @KTRBRS

Historic Vids @historyinmemes

China is testing lasers to prevent drivers falling asleep on highways

If you are running @playwrightweb tests on AKS using the official Docker image, what are your resource and memory limits? How much memory does it need to run? @debs_obrien any suggestions? Is there a way to print the progress so it can be seen? #playwright #playwrightweb

Hanuma retweeted

No more repetitive questions.

Technicians have access to your entire chat history, for a more informed and efficient conversation. Build GPT-automated customer support with Azure Communication Services. youtu.be/N0Cay8md9s4 #AzureServices

Hanuma retweeted

We've arrived at the grand finale - the last webinar in our much-anticipated series on #MicroFrontends 🚀

Register now to learn more on how to overcome common micro-frontends challenges!

See you on Thursday

#frontend #javascript #aws #architecture #web

amazon.webex.com/webappng/sites…

Hanuma retweeted

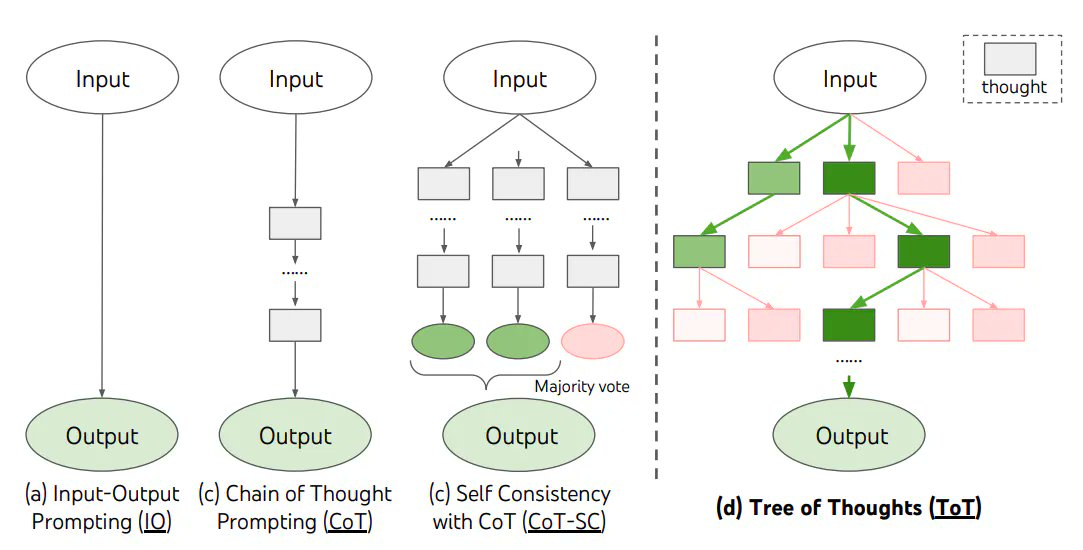

LLMs and Their Human-like Reasoning Capabilities - Fact or Fiction?

Research into enabling Large Language Models (LLMs) to display human-like reasoning has been a hot topic. However, others have argued that LLMs aren't capable of reasoning, given that they are glorified next-word predictors.

Here is where we are today -

Chain of thought (CoT) reasoning - Prompting a "chain of thought"—a series of intermediate reasoning steps—can significantly improve the performance of LLMs in complex reasoning tasks.

The human brain typically decomposes a math problem into intermediate steps and solves each step before giving the final answer: “After Jane gives 2 flowers to her mom she has 10 . . . then after she gives 3 to her dad she will have 7 . . . so the answer is 7.”

The basic idea behind CoT is to prompt the model with some examples of these types of problems and the step-by-step reasoning involved in solving them. By prompting in this way, the model can decompose the problem similar to the human brain and solve for it.
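A minimal sketch of what such a prompt could look like when assembled in code. The `Exemplar` class and `build_cot_prompt` function are hypothetical names for illustration, the "Q:/A:" layout is one common convention rather than a required format, and the starting count of 12 flowers is inferred from the worked example above. No model is called here; the sketch only builds the text that would be sent.

```python
from dataclasses import dataclass


@dataclass
class Exemplar:
    question: str
    reasoning: str  # the intermediate steps, written out in plain language
    answer: str


def build_cot_prompt(exemplars: list[Exemplar], new_question: str) -> str:
    """Assemble a few-shot prompt in which every worked example shows its steps."""
    blocks = [
        f"Q: {ex.question}\nA: {ex.reasoning} The answer is {ex.answer}."
        for ex in exemplars
    ]
    # The last block leaves the answer open so the model continues with its own steps.
    blocks.append(f"Q: {new_question}\nA:")
    return "\n\n".join(blocks)


exemplars = [
    Exemplar(
        question=(
            "Jane has 12 flowers. She gives 2 to her mom and 3 to her dad. "
            "How many flowers does she have left?"
        ),
        reasoning=(
            "After Jane gives 2 flowers to her mom she has 10. "
            "After she gives 3 to her dad she has 7."
        ),
        answer="7",
    )
]

prompt = build_cot_prompt(exemplars, "Tom has 9 apples and eats 4. How many are left?")
print(prompt)
```

In practice the exemplar list would hold several worked problems of the same type as the new question.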

In the original CoT work, a PaLM 540B model prompted with just eight chain-of-thought exemplars achieved state-of-the-art accuracy on the GSM8K math word problem benchmark, surpassing even fine-tuned GPT-3 with a verifier.

CoT prompting helps improve the arithmetic, commonsense, symbolic and multi-step reasoning capabilities of the LLM.

That said, CoT prompting is fairly limited. It depends a lot on the quality of the prompt, the model has no memory beyond the prompt, and the prompt size is limited by the model's context length.

Recently, researchers at Princeton University and Google DeepMind released a paper that outlines the Tree of Thoughts (ToT) framework. It addresses the shortcomings of existing approaches that do not explore different continuations within a thought process or incorporate any type of planning, lookahead, or backtracking to evaluate different options.

ToT frames any problem as a search over a tree, where each node represents a partial solution with the input and the sequence of thoughts so far. It allows LMs to explore multiple reasoning paths over thoughts, each thought being a coherent language sequence that serves as an intermediate step toward problem solving.

The ToT process involves four key steps:

- Decomposing the intermediate process into thought steps.

- Generating potential thoughts from each state.

- Heuristically evaluating states.

- Deciding what search algorithm to use.

The ToT framework allows LMs to perform deliberate decision making by considering multiple different reasoning paths and self-evaluating choices to decide the next course of action. It also enables looking ahead or backtracking when necessary to make global choices.
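The four steps above can be sketched as a small breadth-first search with pruning. This is an illustration under stated assumptions, not the paper's implementation: `tot_bfs`, `propose`, `score` and `is_solution` are hypothetical names, and in a real system `propose` and `score` would be LLM calls. Here they are deterministic stand-ins on a toy problem (start at 1, reach 10 using "+3" or "*2") so the sketch runs on its own.

```python
from typing import Callable, Optional

State = tuple[str, ...]  # the sequence of thoughts taken so far


def tot_bfs(
    propose: Callable[[State], list[str]],   # step 2: generate candidate thoughts
    score: Callable[[State], float],         # step 3: heuristic evaluation of a state
    is_solution: Callable[[State], bool],
    max_depth: int,
    beam_width: int,
) -> Optional[State]:
    """Step 4: breadth-first search that keeps only the best states per level."""
    frontier: list[State] = [()]
    for _ in range(max_depth):
        candidates = [state + (t,) for state in frontier for t in propose(state)]
        for candidate in candidates:
            if is_solution(candidate):
                return candidate
        # Prune: only the highest-scoring partial solutions are expanded further.
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
        if not frontier:
            return None
    return None


# Toy stand-ins for the LLM. Step 1 (decomposition): one thought = one operation.
START, TARGET = 1, 10


def value(state: State) -> int:
    v = START
    for op in state:
        v = v + 3 if op == "+3" else v * 2
    return v


result = tot_bfs(
    propose=lambda state: ["+3", "*2"],
    score=lambda state: -abs(TARGET - value(state)),
    is_solution=lambda state: value(state) == TARGET,
    max_depth=5,
    beam_width=2,
)
print(result, value(result) if result else None)
```

Swapping the sort-and-truncate step for a stack gives a depth-first variant with backtracking; which one fits better depends on the task.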

The framework is versatile and can handle challenging tasks. It also improves the interpretability of model decisions and the opportunity for human alignment, as the resulting representations are readable, high-level language reasoning instead of implicit, low-level token values.

In practice, the ToT framework has significantly enhanced language models' problem-solving abilities on tasks requiring non-trivial planning or search.

In the Game of 24, while a model with chain-of-thought prompting only solved 4% of tasks, the ToT method achieved a success rate of 74%.
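For context, the Game of 24 asks for an arithmetic expression that combines four given numbers into 24. The sketch below is a plain exhaustive search with backtracking, not ToT and not an LLM; it only illustrates why the task rewards exploring and abandoning partial solutions. The function names and the sample input are assumptions chosen for illustration.

```python
from fractions import Fraction
from typing import Optional

Item = tuple[Fraction, str]  # (current value, expression that produced it)


def solve_24(numbers: list[int], target: int = 24) -> Optional[str]:
    """Return an expression over `numbers` that equals `target`, or None."""
    items = [(Fraction(n), str(n)) for n in numbers]
    return _search(items, Fraction(target))


def _search(items: list[Item], target: Fraction) -> Optional[str]:
    if len(items) == 1:
        value, expr = items[0]
        return expr if value == target else None
    # Pick an ordered pair, combine it, and recurse on the smaller list.
    for i in range(len(items)):
        for j in range(len(items)):
            if i == j:
                continue
            (a, ea), (b, eb) = items[i], items[j]
            rest = [items[k] for k in range(len(items)) if k not in (i, j)]
            options = [
                (a + b, f"({ea} + {eb})"),
                (a - b, f"({ea} - {eb})"),
                (a * b, f"({ea} * {eb})"),
            ]
            if b != 0:
                options.append((a / b, f"({ea} / {eb})"))
            for value, expr in options:
                found = _search(rest + [(value, expr)], target)
                if found is not None:
                    return found
            # Falling through here is the backtracking step: this pair led nowhere.
    return None


solution = solve_24([4, 9, 10, 13])
unsolvable = solve_24([1, 1, 1, 1])
print(solution, unsolvable)
```

Exact fractions are used so that intermediate divisions do not introduce rounding errors.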

ToT is a prompting framework as well and has the same fundamental limitations as the CoT framework. ToT needs careful thought decomposition and generation, and relies on heuristics for evaluating states and deciding on the search algorithm.

However, it's important to remember that while LLMs can mimic certain types of reasoning to a certain extent, it's not the same as human reasoning.

They don't have the ability to understand, self-correct, or make judgments based on a deep understanding of the world. They're more like really good actors who can deliver their lines convincingly but don't actually understand the plot of the play.

In summary, AI is still at an extremely early stage when it comes to reasoning, and we may need another significant breakthrough before LLMs can really measure up to humans.
