Ruisi Cai

27 posts

@ccccrs_0908

Ph.D. student @UTAustin; Research Intern @NVIDIA @CitadelSecurities; BS @USTC; NVIDIA fellowship 2025 recipient

Steepest Descent Density Control for Compact 3D Gaussian Splatting, by @peihao_wang, @yuehaowang, @dilin_wang, @mohan_sreyas, @WayneINR, Lemeng Wu, @ccccrs_0908, @YuYingYeh1, Zhangyang Wang, @lqiang67, Rakesh Ranjan. tl;dr: split Gaussians in saddle areas into two offspring & displace the new primitives along the steepest descent directions -> escape the saddle area -> avoid locally sub-optimal parameters. arxiv.org/abs/2505.05587

(1/n) Do you think token batching in MoE is inefficient? Are you looking for ways to transform pre-trained LLMs into MoEs? Then you should check out Read-ME at NeurIPS'24! 📖 arxiv.org/abs/2410.19123

1/ 🌟 Excited to announce #Model-#GLUE (#neurips2024 D&B), a new framework designed by an extensive team from UNC, UMD, UT Austin, HKUST, Google, and CMU to #scale pre-trained LLMs efficiently! 🚀 Tackling the challenge of #aggregating disparate pre-trained LLMs, we introduce a holistic guideline and benchmark for when you have a large, diverse model zoo "in the wild"! #LLM #AIresearch

Announcing MatMamba - an elastic Mamba2 🐍 architecture with 🪆 Matryoshka-style training and adaptive inference. Train a single elastic model, get 100s of nested submodels for free! Paper: sca.fo/mmpaper Code: sca.fo/mmcode 🧵(1/10)

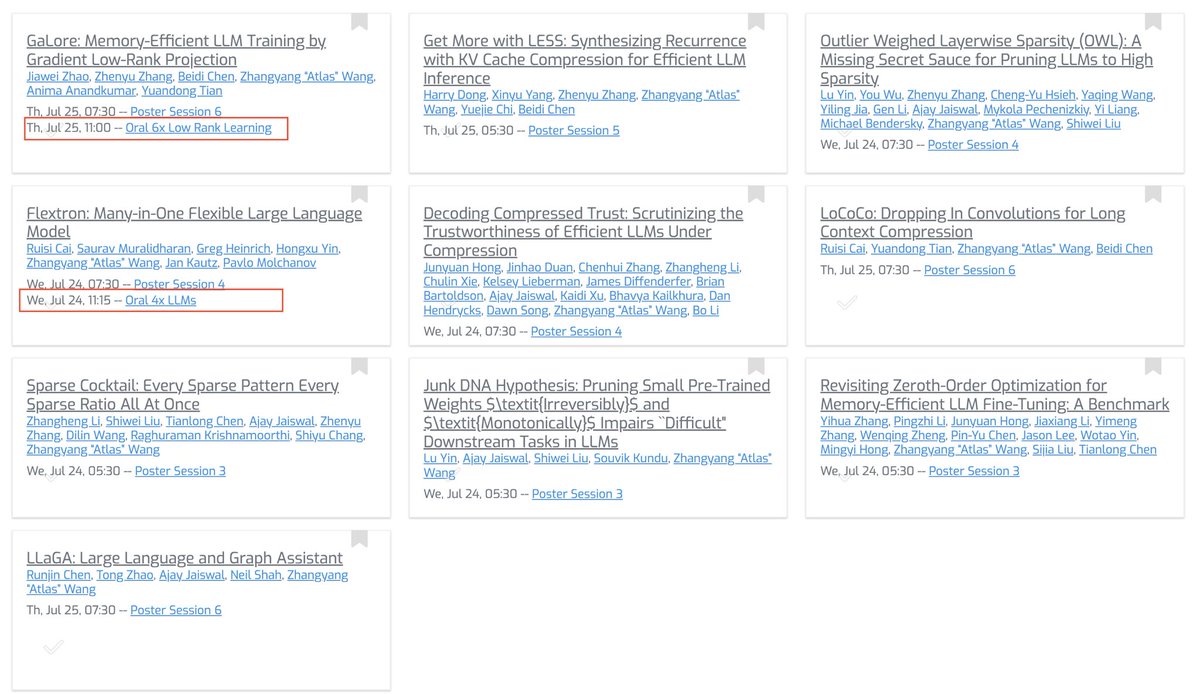

🚀 Introducing Flextron - a Many-in-One LLM - Oral at ICML! Train one model and get many optimal models for each GPU at inference without any additional retraining. 🌟 🔗 Paper: arxiv.org/abs/2406.10260 Main benefits with only 5% post-training finetuning: ✅ Best model for every GPU (small & large) without retraining ✅ Change inference cost on the fly based on load ✅ Input-adaptive inference (heterogeneous weight-shared MoE, Attention) ✅ Instead of training many models, we train only 1: LLaMa2-7B ➡️ 3B, 4B, 5B, 6B, etc. Method and observations in thread. 🧵👇