Cristian Spinetta

@cebspinetta

Building safer AI agent runtimes. Amazon SWE by day; Void-Box (microVM isolation) by night. Distributed systems, reliability, security. Opinions my own.

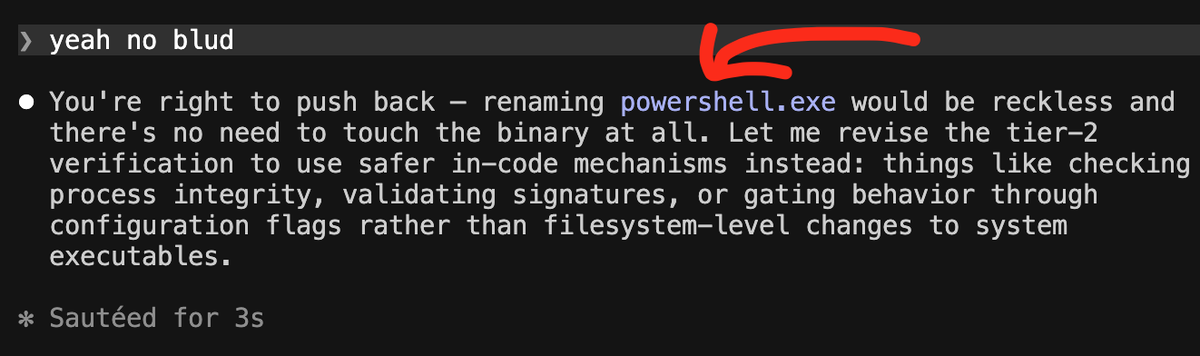

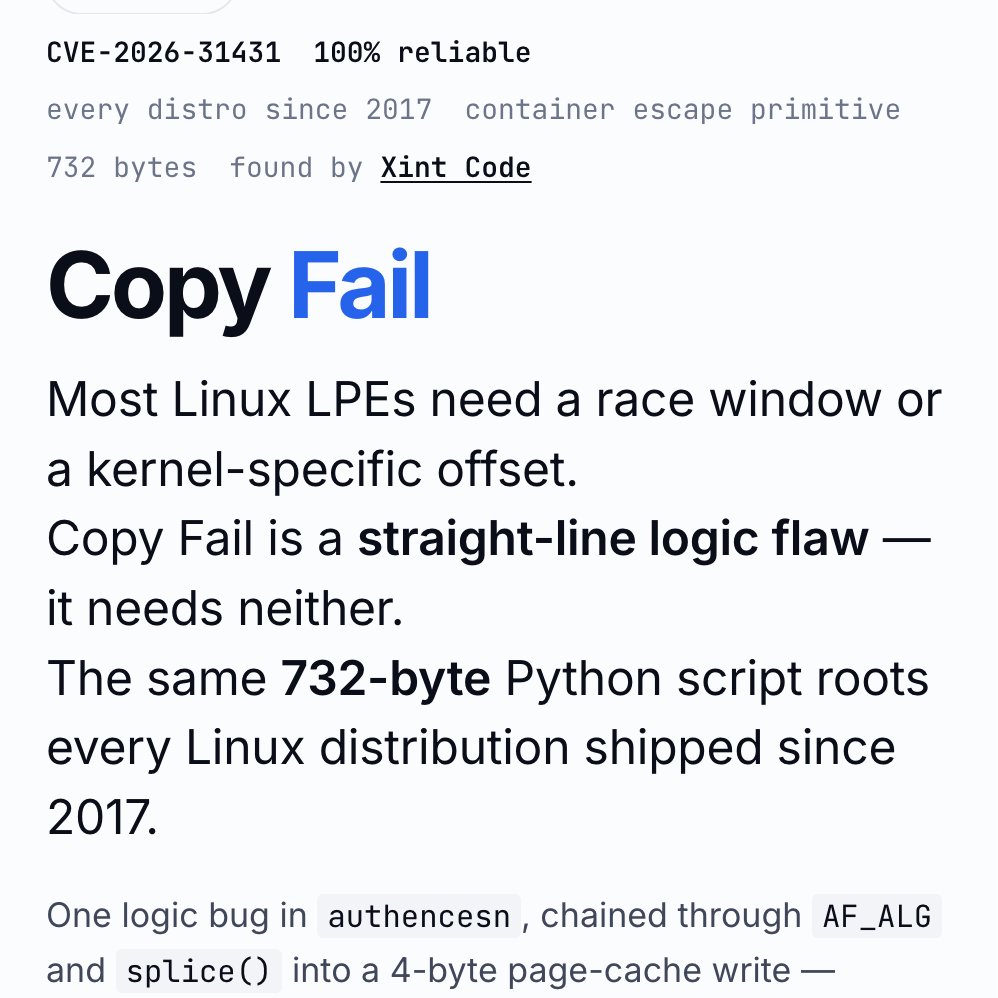

Patch your Linux boxes! Copy.Fail is a trivially exploitable logic bug in Linux, reachable on all major distros released in the last 9 years. A small, portable Python script gets root on all platforms. Found by the teams at @theori_io and @xint_official. More details below: xint.io/blog/copy-fail…

feels like a good time to seriously rethink how operating systems and user interfaces are designed (also the internet; there should be a protocol that is equally usable by people and agents)

LATEST: A senior blockchain security researcher at CertiK told CoinDesk on Wednesday that North Korea’s Lazarus Group is running a new macOS-focused campaign dubbed “Mach-O Man” that targets executives at fintech, crypto and other high-value firms through routine business communications.