Cem | Sovereign

4.1K posts

@cemozer_

CEO @sovereignxyz. previously: ethereum core dev @Teku_ConsenSys. simplicity maxi

*SEC SAID TO LEAN TOWARD ALLOWING THIRD-PARTY TOKENIZED STOCKS WITHOUT ISSUER CONSENT - BLOOMBERG LAW

Crypto built global settlement before it built exchange-grade execution. blink is built to close that gap. Physical infrastructure. Equalized access. Tradfi-grade execution. Blockchain settlement. Today, blink comes out of stealth.

Modern markets are fundamentally extractive. We've spent three years rewiring them from scratch. One core feature is everyone deploying their own bots without code or infra. Here's a first look @SynchronicityHQ. We're looking for strategists to test drive. Reply for early access.

it's insane to me that buy and burn has become the default for profitable crypto protocols. why would a high growth startup ever take its profits and distribute them to shareholders instead of re-investing for future growth, or at least holding them for runway?

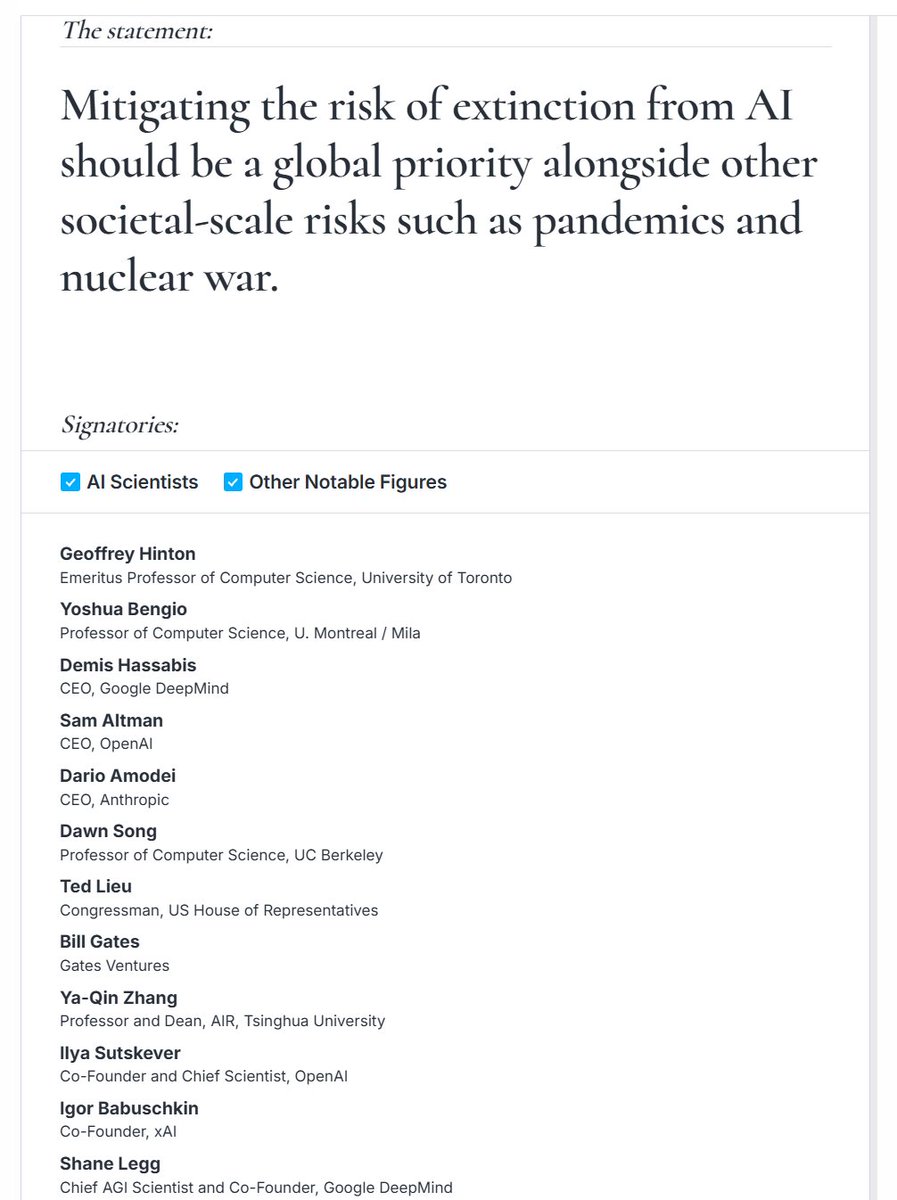

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing

A New York bill would ban AI from answering questions related to several licensed professions like medicine, law, dentistry, nursing, psychology, social work, engineering, and more. The companies would be liable if the chatbots give “substantive responses” in these areas.

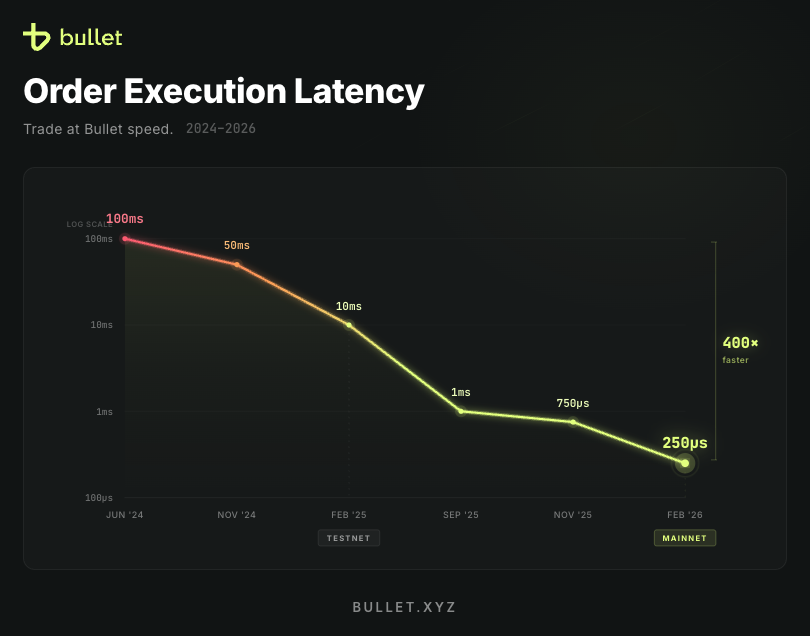

After two years of blood, sweat and pull requests @Bulletxyz mainnet is finally here. Absolutely stoked with what our team achieved. Getting down to an unmatched 250us order execution latency @ 30k orders/sec, unheard of for a perps DEX. Bullet's law seems to be playing out (order of magnitude speed increase every year), and the tech will only continue to get better. Admittedly mainnet took longer than I expected, but good things take time and there are no shortcuts for building on the cutting-edge. We'll be slowly rolling out in private beta to a select group of whitelisted traders, and continue making our way through the waitlist (DM me if you are interested in getting in early, high quality feedback appreciated and welcome). Job's not done, now we go hard on liquidity, expanding the product suite and user growth. If you see what we've achieved in just two years, imagine what we can achieve in the next two. Bullet Time.

Perps Trading on Solana is about to change for the better. Mainnet is live. Here's how you can get in early 👇