Chase Holmes 🇺🇸

@chase1440

frontier inference @etched // prev expeditions @databricks @mosaicml @redpoint @amplitude_hq

WHAT HAVE WE JUST WITNESSED? 🤯 Sabastian Sawe has just become the first person in history to run a sub-two-hour marathon in race conditions. Yomif Kejelcha also went under two hours, finishing second!

A hill that I will die on: with today's AI models, intelligence is a function of inference compute. Comparing models by a single number hasn't made sense since 2024. What matters is intelligence per token or per $. This is especially true when using a model in a product like Codex.

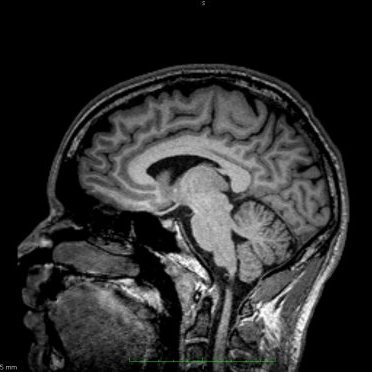

Inference demand in 2026 has surged, but not for the single-turn workloads most engines are benchmarked on. Agentic workloads have a different structure: traces consist of many tool-calling turns with heavy-tailed distributions over assistant and tool output. These workloads introduce a new set of challenges for efficient serving. We pulled production traces from over 100 post-training runs and are open-sourcing these workloads to help define a new target for inference engine optimization.
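To make the multi-turn structure concrete, here is a minimal sketch, not the format or statistics of the open-sourced traces. It assumes lognormal token counts as a stand-in for "heavy-tailed" (the `mu`/`sigma` values are hypothetical) and counts how many prompt tokens an engine must prefill across a trace, with and without an idealized prefix cache. The names `Turn`, `synthetic_trace`, and `prefill_tokens` are illustrative only.

```python
import random
from dataclasses import dataclass


@dataclass
class Turn:
    assistant_tokens: int  # tokens the model generates this turn
    tool_tokens: int       # tokens the tool returns, appended to the next prompt


def synthetic_trace(num_turns: int, seed: int = 0) -> list[Turn]:
    """Hypothetical agentic trace with heavy-tailed (lognormal) lengths.

    The distribution parameters are illustrative assumptions, not fitted to real data.
    """
    rng = random.Random(seed)
    turns = []
    for _ in range(num_turns):
        assistant = max(1, int(rng.lognormvariate(5.0, 1.0)))  # median ~148 tokens
        tool = max(1, int(rng.lognormvariate(6.0, 1.5)))       # median ~403, long tail
        turns.append(Turn(assistant, tool))
    return turns


def prefill_tokens(turns: list[Turn], system_prompt_tokens: int, prefix_cache: bool) -> int:
    """Total prompt tokens prefilled over the whole trace.

    Without a cache, every turn reprocesses the full, growing context.
    With an idealized prefix cache (assumption: nothing is ever evicted), only
    tokens whose KV entries were not already computed need prefill.
    """
    context = system_prompt_tokens  # length of the prompt for the current turn
    cached = 0                      # tokens an ideal cache already holds KV for
    total = 0
    for turn in turns:
        total += (context - cached) if prefix_cache else context
        # Decoding the assistant reply computes KV entries for those tokens too.
        context += turn.assistant_tokens
        cached = context
        # The tool result is appended and must be prefilled on the next turn.
        context += turn.tool_tokens
    return total


if __name__ == "__main__":
    trace = synthetic_trace(num_turns=30, seed=1)
    print("no cache:    ", prefill_tokens(trace, 2000, prefix_cache=False))
    print("ideal cache: ", prefill_tokens(trace, 2000, prefix_cache=True))
```

Because each turn's prompt contains every earlier turn, uncached prefill grows roughly quadratically with trace length, and a single long tool output is paid for again on every later turn. That is one way the structure of agentic traces can diverge from single-turn benchmarks.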

Welcome to the decade of divergence. Weights, wafers, and watts create structural advantages that Rockefeller would envy. Incrementalism won't work: there won't be enough time to compound. Winning is no longer a matter of will or time; it's a matter of chokepoints + compute.

The next industrial revolution isn’t software. It’s science.

Obsessed with plainspoken business writing. If you think your strategy is too complex to articulate crisply, ask yourself whether it's more complex than Anthropic in summer 2023, thinking through various product plans for early 2025.