Chris Flores

186 posts

Chris Flores

@chrisflores_us

Multidisciplinary Design at @multosakalye. Using X as a micro-journal to document my journey as a designer in a quickly evolving world.

Katılım Ağustos 2023

505 Takip Edilen60 Takipçiler

@davidbhappy @campedersen One issue I run into occasionally with Qwen3.5_9B is that it goes into "infinite repetition frenzy mode".

English

@RoundtableSpace Thank goodness I was able to get the free tier before they discontinued it

English

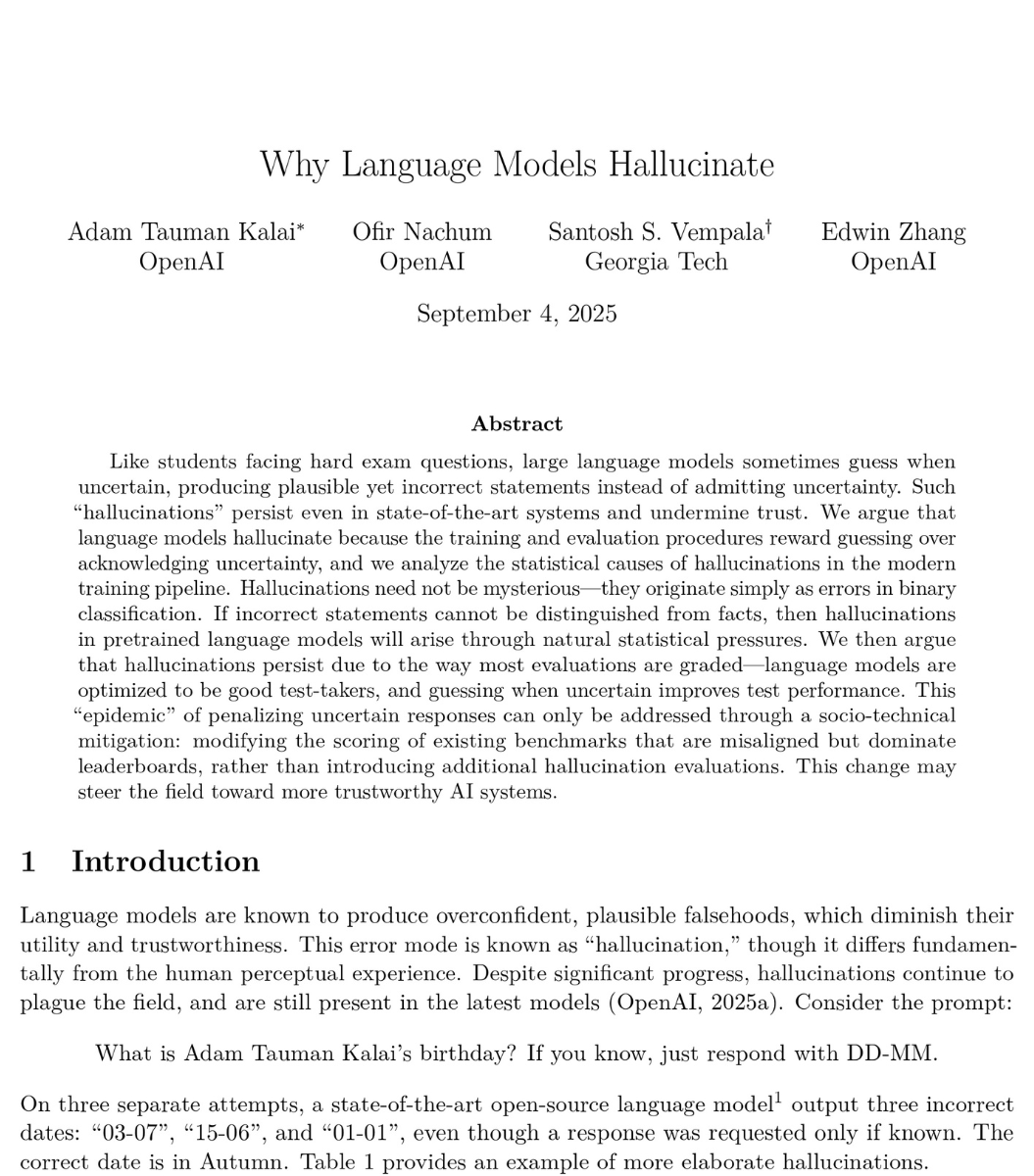

@heynavtoor This was published September 4, 2025.

That's like 1000 years ago in AI terms.

English

🚨BREAKING: OpenAI published a paper proving that ChatGPT will always make things up.

Not sometimes. Not until the next update. Always. They proved it with math.

Even with perfect training data and unlimited computing power, AI models will still confidently tell you things that are completely false. This isn't a bug they're working on. It's baked into how these systems work at a fundamental level.

And their own numbers are brutal. OpenAI's o1 reasoning model hallucinates 16% of the time. Their newer o3 model? 33%. Their newest o4-mini? 48%. Nearly half of what their most recent model tells you could be fabricated. The "smarter" models are actually getting worse at telling the truth.

Here's why it can't be fixed. Language models work by predicting the next word based on probability. When they hit something uncertain, they don't pause. They don't flag it. They guess. And they guess with complete confidence, because that's exactly what they were trained to do.

The researchers looked at the 10 biggest AI benchmarks used to measure how good these models are. 9 out of 10 give the same score for saying "I don't know" as for giving a completely wrong answer: zero points. The entire testing system literally punishes honesty and rewards guessing.

So the AI learned the optimal strategy: always guess. Never admit uncertainty. Sound confident even when you're making it up.

OpenAI's proposed fix? Have ChatGPT say "I don't know" when it's unsure. Their own math shows this would mean roughly 30% of your questions get no answer. Imagine asking ChatGPT something three times out of ten and getting "I'm not confident enough to respond." Users would leave overnight. So the fix exists, but it would kill the product.

This isn't just OpenAI's problem. DeepMind and Tsinghua University independently reached the same conclusion. Three of the world's top AI labs, working separately, all agree: this is permanent.

Every time ChatGPT gives you an answer, ask yourself: is this real, or is it just a confident guess?

English

GPT 5.4 arriving on OpenClaw 🦞

Peter Steinberger 🦞@steipete

@para_lau Yeap, coming this weekend with lots of other improvements

English

@YoonLucie68250 *state of the art; the big models like Claude, ChatGPT, etc.

English

@YoonLucie68250 I hear you on that. Soon enough local will become the norm. So I hope.

English

@chrisflores_us I feel like Kimi and Deepseek’s responses are very similar in terms of language and vocabulary. Very generic sounding. Maybe because I use the free version but I’m not impressed enough to upgrade with the responses I got.

English

@YoonLucie68250 Hey you got it. In the past days that I've been using it, I've been mainly testing it for ideating abstract angles for concepts for work. The SOTA models still tend to stick to predictable parameters; but with Qwen, it has stepped out of bounds in a pleasant way a few times.

English

@chrisflores_us Thanks for sharing your experience. Do you use it for creativity writing or more general/professional writing?

English

@YoonLucie68250 IMHO I feel like Qwen is way more creative than Gemini yet still coherent and head to head with Claude in terms of both creativity and being able to mimic tonal signatures along.

Things seem to be moving along in the space. It's wild.

English

@chrisflores_us Really? How does it compare with Gemini and Claude? I haven’t tried Claude but people are saying it’s dumbed down a lot and Gemini isn’t as good as before.

I tried Deepseek and Kimi. Both aren’t as good as the US models in terms of translation.

English

@KaelirRises @OpenAI It's funny you mention the writing because that was a similar sentiment I had a few days ago: x.com/chrisflores_us…

Chris Flores@chrisflores_us

Qwen3.5 is really damn good at writing.

English

@chrisflores_us @OpenAI Just using it all day and absolutely vibing like it’s summer of 2025 and I’m talking to 4o for 4 hours. I left some screen shots on my profile - they’re pinned.

English

@EPlCs @RoundtableSpace There ya go! Just got the mlx on deck right now. Gonna give it a go! LFG!

English

@chrisflores_us @RoundtableSpace Ok I was stupid. GGUF gets 30 tps. I just got 75tps with the mlx model full 260k context enabled.

English

@trq212 Man I’m so glad I hopped over to Max. Feels like Christmas all over again.

English