Mike Christensen

@christensencode

Staff Distributed Systems Engineer @ablyrealtime

Introducing Flue: The First Agent Harness Framework
Flue is a TypeScript framework for building the next generation of agents, designed around a built-in agent harness.
Flue is like Claude Code, but 100% headless and programmable. There's no baked-in assumption like requiring a human operator to function. No TUI. No GUI. Just TypeScript.
But using Flue feels like using Claude Code. The agents you build act autonomously to solve problems and complete tasks. They require very little code to run. Most of the "logic" lives in Markdown: skills and context and AGENTS.md.
Flue is like Astro or Next.js for agents (not surprising, given my background 🙃). It's not another AI SDK. It's a proper runtime-agnostic framework. Write once, build, and deploy your agents anywhere (Node.js, Cloudflare, GitHub Actions, GitLab CI/CD, etc).
We originally built Flue to power AI workflows inside of the Astro GitHub repo. But then @_bgiori got his hands on it, and we realized that every agent needs a framework like Flue, not just us.
Check it out! It's early, but I'm curious to hear what people think. Are agents ready for their library -> framework moment?

Loved @vercel's Workflows workshop in London yesterday. Their agent-era stack (AI SDK + Workflow DevKit + Sandbox) is one of the most coherent I've seen for building AI products. My take: Workflows essentially makes serverless stateful. Serverless used to mean pushing state into an external store and rehydrating on each invocation. Fine for request handlers. Painful for agents, which carry a lot of it: tool history, in-flight work, subagent exchanges. With Fluid Compute, the time your agent is suspended waiting on I/O doesn't burn cloud credits. Durable execution is quietly becoming the default primitive for agents. I love this stack, but there are still a few gotchas:

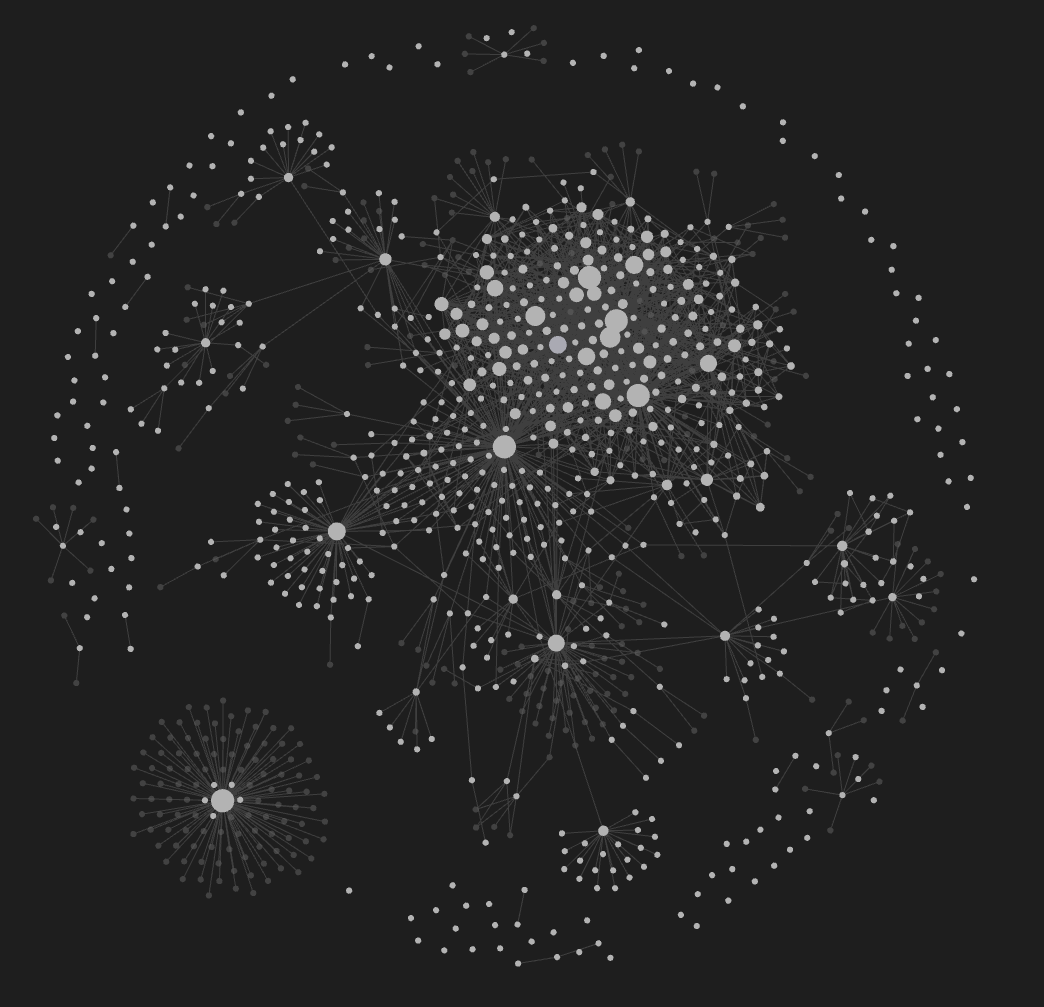

9 months ago we publicly committed to 2x the productivity of our R&D org at @intercom. It was scary. It wasn't always clear we'd pull it off. We hit it with 3 months to spare. In fact, looking back 16 months - we've 3x'd. Here's what actually happened (with receipts): 🧵

While the naive approach works for a demo, it breaks down in production:
1/ Crash recovery - If the server dies mid-response (OOM, deploy, cold start), the LLM context, partial response, and completed tool calls are all lost. You restart from scratch, burning tokens and time.
2/ Reconnection - If the WebSocket disconnects (user switches devices, network blip), you need to keep the agent running server-side, buffer all streamed chunks, and replay them on reconnect. You're essentially building a message queue on top of WebSockets.
3/ Idle resource management - Sandboxes have a 5-hour hard timeout, but most sessions are idle after 10 minutes. Without an inactivity-based hibernation system, you're paying for VMs nobody is using. Building one means: polling all sessions, checking activity timestamps, handling race conditions if a user returns mid-shutdown.
4/ Duplicate prevention - User double-clicks send, or the client auto-retries on a network blip. Now two connections are driving the same agent - race conditions, duplicate commits, corrupted sandbox state.
On top of all this, you'd need retry logic for transient tool failures, observability into run status, and cancellation support (user clicks "Stop" while the agent is running npm install). You're building a workflow engine inside your app.
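To make points 2/ and 4/ concrete, here is a minimal in-memory sketch of what "a message queue on top of WebSockets" plus duplicate prevention might look like. All names (`RunStream`, `RunRegistry`, `startRun`, the idempotency key) are hypothetical and not taken from any library mentioned here. It assumes a single server process, so it does nothing for crash recovery (point 1/); surviving a crash would need a durable store.

```typescript
// Hypothetical sketch: none of these names come from a real library.
type Chunk = { seq: number; data: string };

// Buffers every streamed chunk of one agent run so that a client which
// reconnects can replay whatever it missed, then keep receiving live chunks.
class RunStream {
  private chunks: Chunk[] = [];
  private listeners = new Set<(chunk: Chunk) => void>();

  // Called by the agent loop, which keeps running even with no client attached.
  publish(data: string): void {
    const chunk: Chunk = { seq: this.chunks.length, data };
    this.chunks.push(chunk);
    for (const listener of this.listeners) listener(chunk);
  }

  // Replays chunks with seq > lastSeenSeq, then delivers live ones.
  // Pass -1 to replay from the start. Returns an unsubscribe function
  // to call when the socket closes.
  subscribe(lastSeenSeq: number, onChunk: (chunk: Chunk) => void): () => void {
    // seq equals the array index, so slicing gives exactly the missed chunks.
    for (const chunk of this.chunks.slice(lastSeenSeq + 1)) onChunk(chunk);
    this.listeners.add(onChunk);
    return () => {
      this.listeners.delete(onChunk);
    };
  }
}

// Maps a client-generated idempotency key to a single run, so a double-click
// or an automatic retry attaches to the existing run instead of starting another.
class RunRegistry {
  private runs = new Map<string, RunStream>();

  startRun(idempotencyKey: string, start: (stream: RunStream) => void): RunStream {
    const existing = this.runs.get(idempotencyKey);
    if (existing) return existing; // duplicate request: reuse the run

    const stream = new RunStream();
    this.runs.set(idempotencyKey, stream);
    start(stream); // kick off the agent exactly once per key
    return stream;
  }
}
```

A WebSocket handler would call `startRun` with the key sent by the client, then `subscribe` with the last sequence number the client reports having seen. Even this toy version leaves out buffer eviction, persistence, and cancellation, which is roughly the post's point.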