Locke Cai

22 posts

@couplefire12

CS & Math @ MIT | ML Research Intern @ https://t.co/Nli5KHCIzI

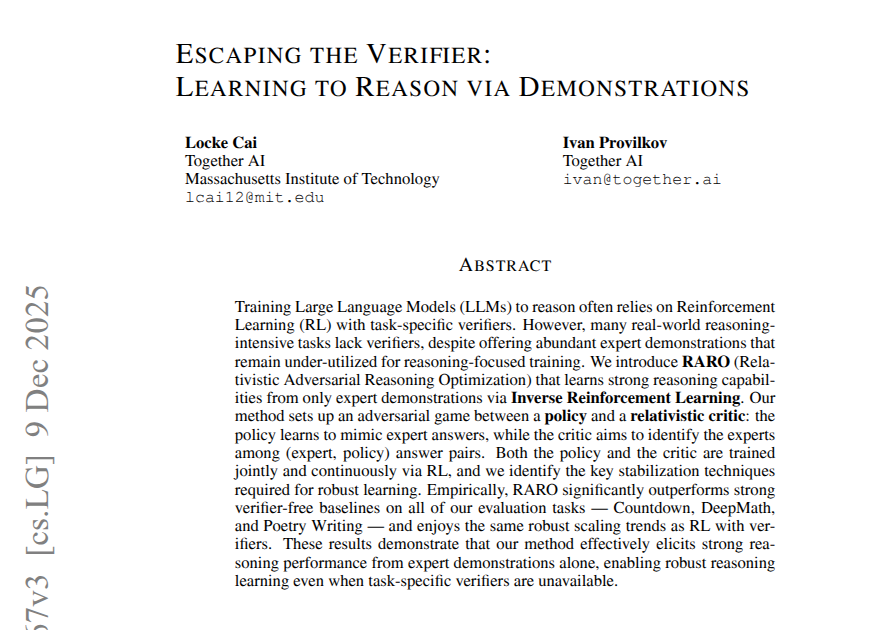

RL for reasoning often relies on verifiers: great for math, but tricky for creative writing or open-ended research. Meet RARO: a new paradigm that teaches LLMs to reason via adversarial games instead of verification. No verifiers. No environments. Just demonstrations. 🧵👇
