Together AI

@togethercompute

Accelerate inference, model shaping, and pre-training on a research-optimized platform.

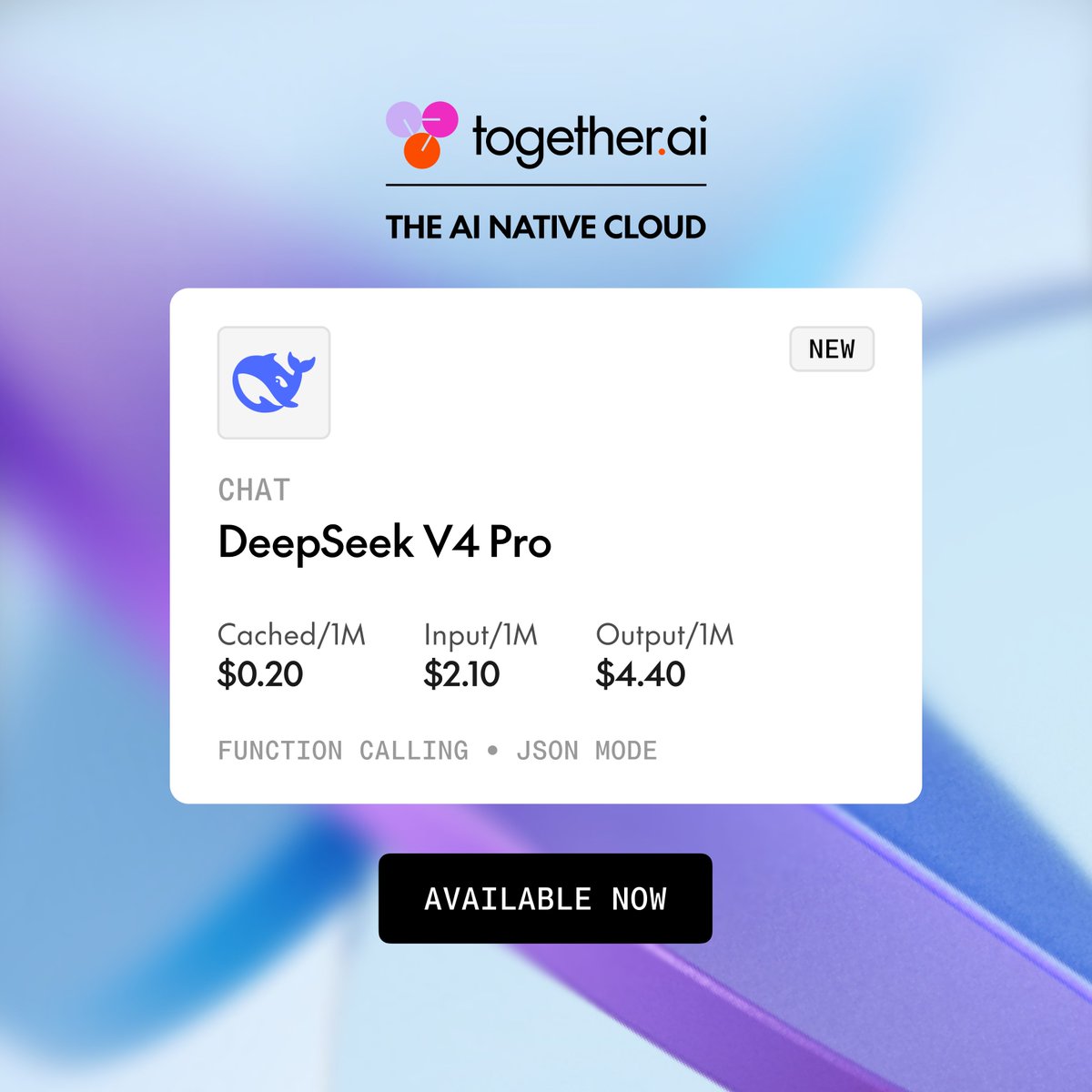

Join us Tue 5/5: #DeepSeek-V4's hybrid attention + sparse MoE reduces KV cache size by up to 90%, enabling 1M-token context. We'll cover why that makes it great for agentic workflows, what it took to serve it at scale, and how to build with it. Hear from @realDanFu @JueWANG26088228 @ZainHasan6 and @zhyncs42 → togetherai.link/ds-v4-x

We believe that intelligence should not arrive preconfigured. @togethercompute is now available directly inside the Adaption platform, connecting Adaptive Data with large-scale training in a single workflow. One platform, end to end. Stop inheriting intelligence. Shape it.

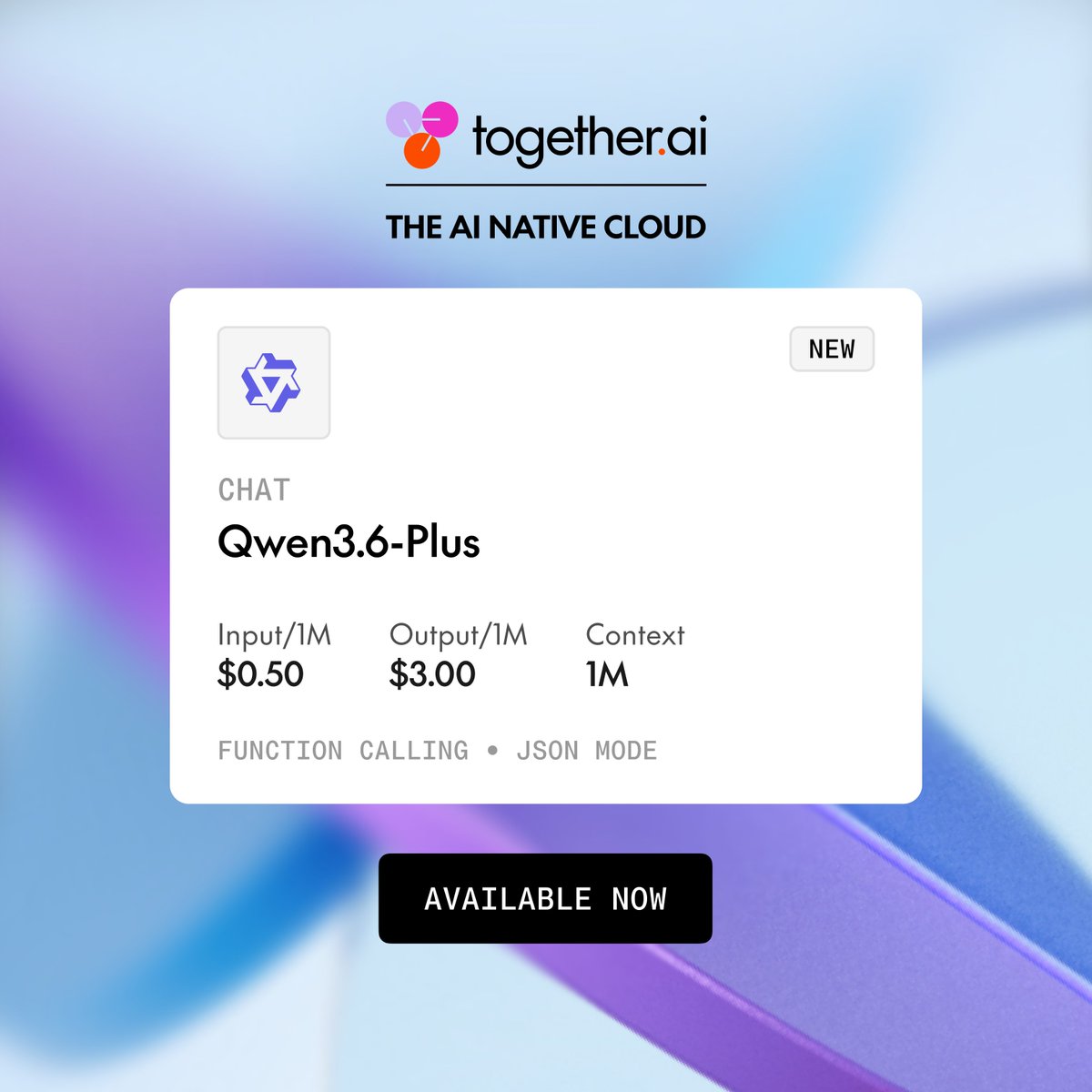

Introducing Qwen3.6-Plus from @Alibaba_Qwen, a 1M-context model built for real-world agents, agentic coding, and multimodal reasoning. AI natives can now use Qwen3.6-Plus on Together AI and benefit from reliable inference for production-scale agent workflows.