Dr. Jackson

96.9K posts

@cowtowncoder

Eco-liberal EU-expat (now in US PNW). Coder (Java), OSS author (Jackson, Woodstox, ClassMate, java-uuid-generator), collaborator, mentor. Gainfully employed.

Seattle, US. Joined May 2009

524 Following, 2K Followers

Dr. Jackson retweeted

SPOILER ALERT: It’s the same reason AI writing sucks. AI is pattern-based, which means it disregards outliers then forecasts (or writes) based on what’s statistically probable given the average pattern. You can’t predict extreme weather based on average patterns just as you can’t write amazing prose using something that merely predicts the next average word.

And we’re losing jobs to this. What a wicked world.

KMBC @kmbc

Why AI forecasts can falter when weather turns extreme | Click on the image to read the full story kmbc.com/article/weathe…

Dr. Jackson retweeted

I strongly believe there are entire companies right now under heavy AI psychosis and it's impossible to have rational conversations about it with them. I can't name any specific people because they include personal friends I deeply respect, but I worry about how this plays out.

I lived through the great MTBF vs MTTR (mean-time-between-failure vs. mean-time-to-recovery) reckoning of infrastructure during the transition to cloud and cloud automation. All those arguments are rearing their ugly heads again but now it's... the whole software development industry (maybe the whole world, really).

It's frightening, because the psychosis folks operate under an almost absolute "MTTR is all you need" mentality: "it's fine to ship bugs because the agents will fix them so quickly and at a scale humans can't do!" We learned in infrastructure that MTTR is great but you can't yeet resilient systems entirely.

The main issue is I don't even know how to bring this up to people I know personally, because bringing this topic up leads to immediate dismissals like "no no, it has full test coverage" or "bug reports are going down" or something, which just don't paint the whole picture.

We already learned this lesson once in infrastructure: you can automate yourself into a very resilient catastrophe machine. Systems can appear healthy by local metrics while globally becoming incomprehensible. Bug reports can go down while latent risk explodes. Test coverage can rise while semantic understanding falls. Changes happen so fast that nobody notices the underlying architecture decaying.

I worry.

Dr. Jackson retweeted

@OrevaZSN The early bird gets the worm, but the early worm gets eaten

Dr. Jackson retweeted

I do firmly believe that light pollution has a lot to do with our loss of wonder in the modern age.

•R•S• @Ay_Blinkin

Starry Night (1918) by Edward Henry Potthast

Dr. Jackson retweeted

We haven’t had—and might not ever have—a president who was born in the 1950s.

Ramin Nasibov @RaminNasibov

What historical fact sounds fake but is true?

Dr. Jackson retweeted

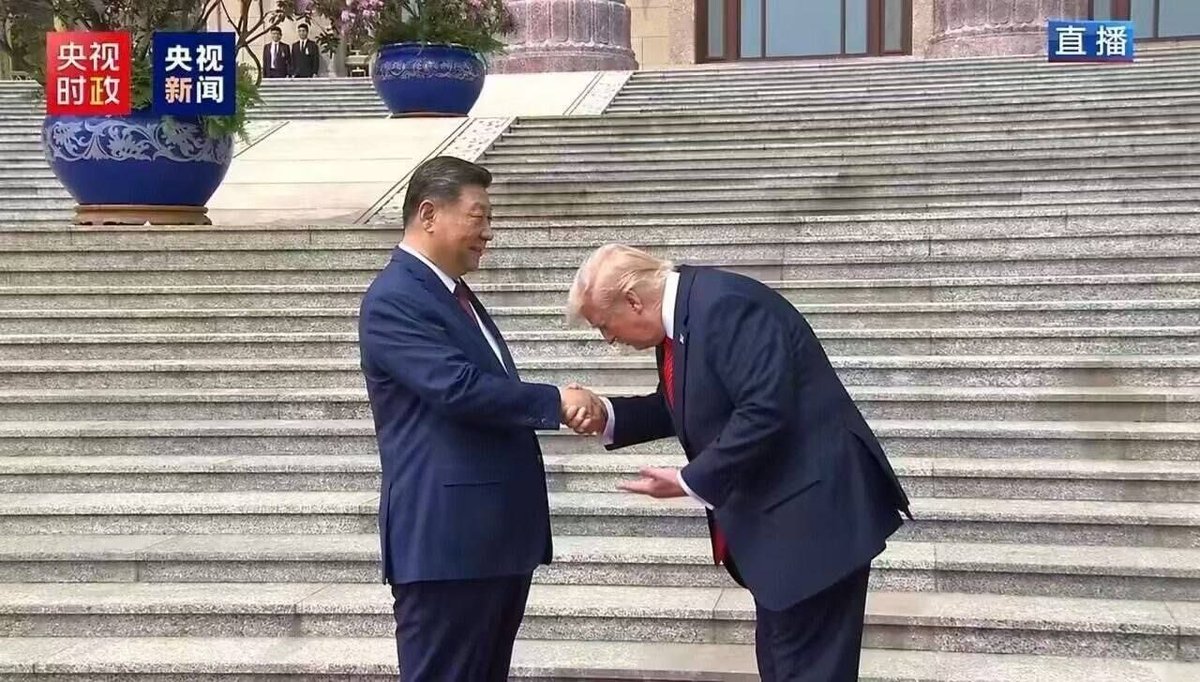

Elon getting thrown into Chinese prison for Ketamine possession would potentially be the single greatest achievement of the second Trump administration

Acyn @Acyn

Elon Musk at the state banquet

Dr. Jackson retweeted

Harvard Business Review research reveals that excessive interaction with AI is causing a specific type of mental exhaustion (or "AI brain fry"), which is particularly hitting high performers who use AI to push past their normal limits.

A survey of 1,500 workers reveals that AI is intensifying workloads rather than reducing them, leading to a new form of mental fog.

While AI is generally supposed to lighten the load, it often forces users into constant task-switching and intense oversight that actually clutters the mind.

This mental static happens because you aren't just doing your job anymore; you are managing multiple digital agents and double-checking their work, which creates a massive cognitive burden.

The study found that 14% of full-time workers already feel this fog, with the highest impact seen in technical fields like software development, IT, and finance.

High oversight is the biggest culprit, as supervising multiple AI outputs leads to a 12% increase in mental fatigue and a 33% jump in decision fatigue.

This isn't just a personal health issue; it directly impacts companies because exhausted employees are 10% more likely to quit.

For massive firms worth billions of dollars, this decision paralysis can lead to millions of dollars in lost value due to poor choices or total inaction.

Essentially, we are working harder to manage our tools than we are to solve the actual problems they were meant to fix.

---

hbr.org/2026/03/when-using-ai-leads-to-brain-fry