Chandan Singh

403 posts

@csinva

Seeking superhuman explanations. Senior researcher @MSFTResearch, PhD from @Berkeley_AI

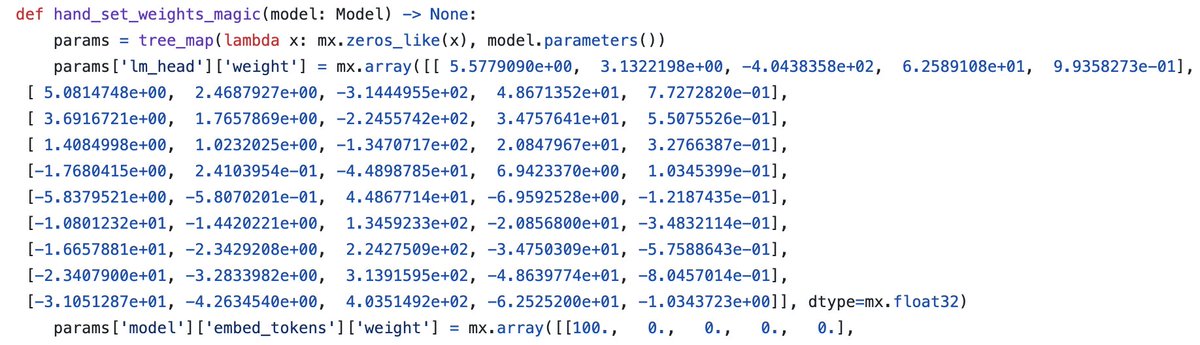

Beat it by having Codex hand-craft weights: gist.github.com/N8python/02e41… 100% accuracy on 10 million random test cases w/ only 343 parameters. As a bonus, it uses the vanilla Qwen3 architecture, just with the right weights.

We’re putting more computation (in the form of intelligence) into the most general object in neural network training: backprop. This essay describes how I think we can do this, why interp is key, the relevance to alignment, and how we should do it right.

Can models understand each other's reasoning? 🤔 When Model A explains its Chain-of-Thought (CoT), do Models B, C, and D interpret it the same way? Our new preprint with @davidbau and @csinva explores CoT generalizability 🧵👇 (1/7)

“Bayesian concept bottleneck models using LLM priors” (BC-LLM) was accepted at #neurips2025! BC-LLM tackles a key clinical AI problem: how do we find interpretable features for predicting an outcome when there are ♾ possibilities from the electronic health record (e.g. notes)?

Mechanistic interp has made cool findings but struggled to make them useful. We show that "induction heads" found in LLMs can be reverse-engineered to yield accurate & interpretable next-word prediction models. Led by @eunjikim4747 & Sriya Mantena. arxiv.org/abs/2411.00066 🧵1/2