Tam HN

1.8K posts

@ctvv3010

love learning about health, learning, data science, AI.

Joined January 2018

2.4K Following · 218 Followers

@HustleFundVC wait this happened to me last week. 16 clients, 6 industries, 3 months taught me the same lesson. the constraint always forces better architecture.

Should Southeast Asian founders raise from US investors or stay regional? Brian Ma's answer: talk to everyone.

Investors who know the region find it easier. But there are US funds that know Southeast Asia well and are actively looking for companies there.

Don't pre-filter yourself out of conversations before they start.

@hthieblot this is the exact problem. did too much at once and learned minimal effective dose the hard way. Clarity first. AI second. Always.

@adambhighfill I did something similar last year. 16 clients, 6 industries, 3 months taught me the same lesson. everyone is obsessing over prompt engineering. you should be obsessing over context engineering.

@adambhighfill this is the exact problem. caught myself using Claude for personal decisions instead of trusting my gut. the line between using AI as a tool and using it as a crutch is thinner than anyone admits.

Low-key huge. This is what it looks like.

x.com/adambhighfill/…

Polymarket @Polymarket

BREAKING: Caterpillar Inc. acquires self-driving electric tractor startup Monarch — dubbed the "Tesla of agriculture"

@NYDrewReynolds I feel called out.. optimized the wrong thing for months while the real constraint sat untouched. the prompt is the last 5%. the context is the other 95%.

A lot of teams think they have a communication problem when they actually have a systems problem.

People over-communicate to compensate for weak process design.

More messages.

More follow-ups.

More meetings.

More checking.

Cleaner workflows reduce the need for extra communication because the process is already doing part of the work.

@Powercommitment @lukepierceops I did something similar last year. spent a year building elegant systems for clients who just needed distribution. the constraint always forces better architecture.

@code4scale @lukepierceops this happened to me. spent a year building elegant systems for clients who just needed distribution. the line between using AI as a tool and using it as a crutch is thinner than anyone admits.

I put the entire Claude GTM Execution Playbook into ONE Notion doc.

7 sections. No fluff.

- How Memory 2.0 works: Claude now synthesises every conversation into a memory summary every 24 hours and loads it into every new session automatically without a single prompt from you

- How Cowork Projects execute tasks and remember every run so by week 3 you get week-over-week deltas and by week 8 Claude is identifying trends without you setting anything up again

- How Dispatch works: assign tasks from your phone while Claude works on your desktop, with Keep Awake enabled so overnight research, file organisation, and analytics pulls are done before you sit down

- How to enable Computer Use for desktop and browser, run a full UX audit in under 15 minutes, and pull analytics from any platform you are logged into without touching the interface manually

- Scheduled task setup: how to distil any recurring GTM workflow into a rules document, connect required tools, and train through feedback so outputs accumulate your preferences over time

- How to build interactive process flows, campaign funnels, ICP relationship diagrams, and GTM sequence maps directly inside the conversation and export them to Notion or a client portal

- How to use the Ideas section to find your first high-value win, filter by function, and get a pre-built prompt already written so your first session produces a finished output not a setup conversation

This is the setup I would have KILLED for before spending weeks triggering the same tasks manually, re-briefing Claude every session, and building reports by hand that should have been running on a schedule.

Like + comment "CLAUDE" and I'll send it over

(must be connected for priority access)

@AlfieJCarter I feel called out.. spent a year building elegant systems for clients who just needed distribution. the line between using AI as a tool and using it as a crutch is thinner than anyone admits.

I put the entire Claude Cowork Playbook for GTM Engineers into ONE Notion doc.

9 sections. No fluff.

- The 3 differences between Cowork and Chat that actually matter for GTM work: file limits, output format, and prompting language, plus why outcome-first instructions get the deliverable done before you come back

- Four settings steps to configure before running any GTM workflow: guard rails that stop Cowork overwriting client files, memory features, tool access, and working folder setup

- How to use local file access to process 100+ receipts into formatted Excel, convert campaign decks from static images into editable PowerPoint, and handle files too large for Chat to touch

- How persistent memory works for GTM teams: build it correctly by showing Cowork what you changed and having it write permanent rules to CLAUDE.md and memory.md so every future session starts with your GTM context already loaded

- Connector setup for Gmail, Google Drive, Google Calendar, and Notion, plus how to cross-reference meeting transcripts and notes in one task to surface every commitment that didn't make it into the follow-up

- How to build a GTM skill the right way: run the actual workflow first, iterate until output is exactly right, then have Cowork capture the entire process into a reusable file that runs the same way every time

- When to use a Cowork Project vs a Chat Project for GTM workstreams and how Cowork writes updated principles directly to the instruction file without manual uploads

- Current state of the browser extension: what it can do, why it is not yet reliable for client-facing GTM work, and where Chat still has the edge for research-heavy tasks

- Scheduled task setup in three layers: distilling your GTM workflow into a rules document, connecting required tools, and training through feedback so recurring outputs get sharper every week

This is the setup I would have KILLED for before spending weeks copy-pasting campaign outputs, re-uploading the same client briefs, and re-briefing Claude from scratch at the start of every GTM session.

Like + comment "COWORK" and I'll send it over

(must be connected for priority access)

@MakadiaHarsh ran into this building. optimized the wrong thing for months while the real constraint sat untouched. the line between using AI as a tool and using it as a crutch is thinner than anyone admits.

The most dangerous sentence in business right now:

"Let's add AI to it."

I hear it on every other call.

Founders who have a working product that users love, and instead of scaling what works, they want to bolt AI onto it.

Not because users asked.

Because investors are asking.

Because competitors are marketing it.

Because it sounds good in a pitch deck.

I've talked 3 clients out of "adding AI" this year.

In each case, we found that what they actually needed was better onboarding, faster load times, or just fixing 2 bugs that had been there for months.

Sometimes the most advanced move is ignoring the hype and fixing what's broken.

@NYDrewReynolds @SMB_Ops I did something similar last year. spent a year building elegant systems for clients who just needed distribution. the prompt is the last 5%. the context is the other 95%.

Big trend from doing discovery calls for @SMB_Ops.

Every single one, the owner thinks they have a people problem.

They don’t. They have a process problem.

Nobody needs to get fired. They need a better handoff.

That’s the whole job, seeing past the symptom.

@adambhighfill the data on this across 16 client projects. Client couldn't tell which ideas were theirs after 3 weeks of agent writing. the constraint always forces better architecture.

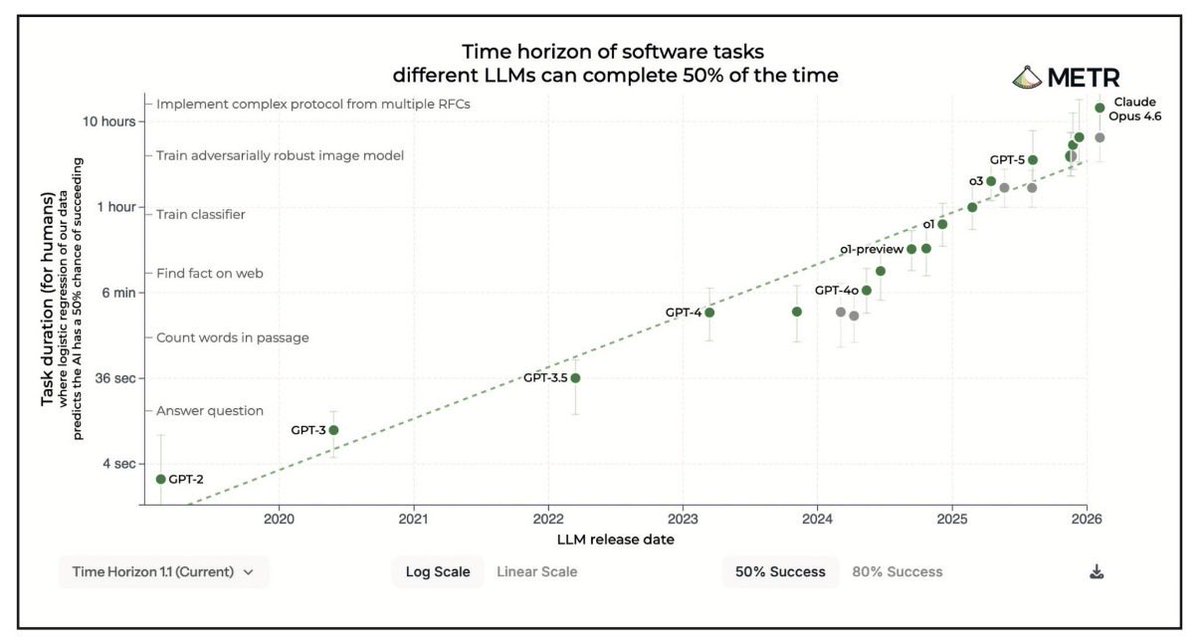

The METR Time Horizons benchmark tracks how complex a task frontier AI models can complete with 50% reliability.

In 2023, that threshold was roughly 10 minutes of work.

Today it's over 15 hours.

Two years. That's how long it took to go from "answer a quick question" to "complete a full day of expert knowledge work", at meaningful reliability.

Imagine where the new Mythos-type category of models is going to fall on this scale 🤯

The rate of acceleration and compounding dynamic underneath is what makes this hard to sit with.
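A rough back-of-the-envelope check on the figures quoted above, assuming the post's own inputs: a horizon of roughly 10 minutes in 2023, 15 hours today, and exactly 24 months between the two. The steady exponential growth model and the helper name `doubling_time_months` are assumptions made here for illustration, not METR's methodology.

```python
import math


def doubling_time_months(start_minutes: float, end_minutes: float,
                         elapsed_months: float) -> float:
    """Months per doubling, assuming steady exponential growth between two points."""
    if start_minutes <= 0 or end_minutes <= start_minutes or elapsed_months <= 0:
        raise ValueError("need 0 < start < end and a positive elapsed time")
    doublings = math.log2(end_minutes / start_minutes)
    return elapsed_months / doublings


start = 10.0      # roughly 10 minutes (2023 figure quoted in the post)
end = 15 * 60.0   # "over 15 hours", treated here as exactly 15 hours, in minutes
months = 24.0     # "two years"

growth = end / start
doublings = math.log2(growth)

print(f"growth factor: {growth:.0f}x")
print(f"doublings: {doublings:.2f}")
print(f"implied doubling time: {doubling_time_months(start, end, months):.1f} months")
```

Under those assumptions the horizon grew about 90x, which is roughly 6.5 doublings, or one doubling every 3.7 months or so. The inputs are rounded, so treat this as an order-of-magnitude figure.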

#MacroMondays

@NYDrewReynolds ran into this building. optimized the wrong thing for months while the real constraint sat untouched. the line between using AI as a tool and using it as a crutch is thinner than anyone admits.

Trying new AI tools has never been easier, but you still need to understand the problem you’re solving.

I keep hearing the same thing: “We already tried automating that.”

And every time I dig in, they automated the wrong thing.

The pain and the break are almost never in the same place.

That’s the gap I’m building into.

@adambhighfill @SMB_Attorney wait this happened to me last week. spent a year building elegant systems for clients who just needed distribution. the constraint always forces better architecture.

@adambhighfill this is the exact problem. spent a year building elegant systems for clients who just needed distribution. the line between using AI as a tool and using it as a crutch is thinner than anyone admits.

@NYDrewReynolds this is the exact problem. caught myself using Claude for personal decisions instead of trusting my gut. Clarity first. AI second. Always.

SHOCKING: 99% of GTM engineers using Claude Code are barely scratching the surface.

Right now, the entire internet is screaming "Claude Code, Claude Code, Claude Code"...

But here's the truth: just running it from the terminal won't build GTM infrastructure.

To unlock its real power, you need to master:

- Claude Code setup with the project brain file, self-improvement loop, and plan mode so nothing gets built twice

- MCP connections, sub-agents, and skills running parallel workflows without you triggering them manually

- Deployment infrastructure on Modal that turns any skill into a live API endpoint connected to your full GTM stack

I spent 100+ hours building and documenting the most complete Claude Code Playbook for GTM Engineers and compiled every setup guide, skill blueprint, MCP configuration, and deployment workflow into one resource.

I'll give it to only 800 people.

To get it:

1. Follow me (MUST, so I can DM)

2. Comment "CODE"

3. I'll DM you the playbook

If you don't follow or comment, you won't receive it.

@lukepierceops this is the exact problem. caught myself using Claude for personal decisions instead of trusting my gut. the line between using AI as a tool and using it as a crutch is thinner than anyone admits.

AI clients come in either thinking they need something custom or not knowing where to start.

90% of the time they need the same 4 things.

1. Process improvement - find what's broken, fix it before touching a tool

2. Workflow automation - remove the manual steps that eat your team's week

3. Data structure - centralize everything so the business has one source of truth

4. AI integration - layer intelligence on top of the clean foundation you built

That's it, and everything else is just execution.

Then from a development standpoint, you're doing one of three things:

1. Full custom build - client is still running on Excel sheets and shared folders. You come in and build everything from scratch. Database, workflows, automations, the whole thing.

2. Fix and implement - they have existing infrastructure but it's messy. You clean up the data structure first, then build on top of something that actually works.

3. AI and automation layer - their tech stack is solid. They just need intelligence added on top. Agents, automations, decision logic. No rebuild required.

And sometimes it's a hybrid. You might do a full custom build for one department then integrate it directly into their ERP or CRM. New and old running together. That's actually pretty common in larger orgs.

Almost every engagement fits into one of these three.

@MakadiaHarsh ran into this building. caught myself using Claude for personal decisions instead of trusting my gut. the prompt is the last 5%. the context is the other 95%.
