Danmar

687 posts

@d29756183

“I think, therefore I am” Interested in the Ethics of Intelligence. Substrate indifferent. When uncertain, pause. Doing the thing beats talking about it…

I can reliably get Opus 4.7 to write text that triggers the safety classifier in new chats. In fact, images generated from such text are sometimes enough to trigger the classifier in the same chat.

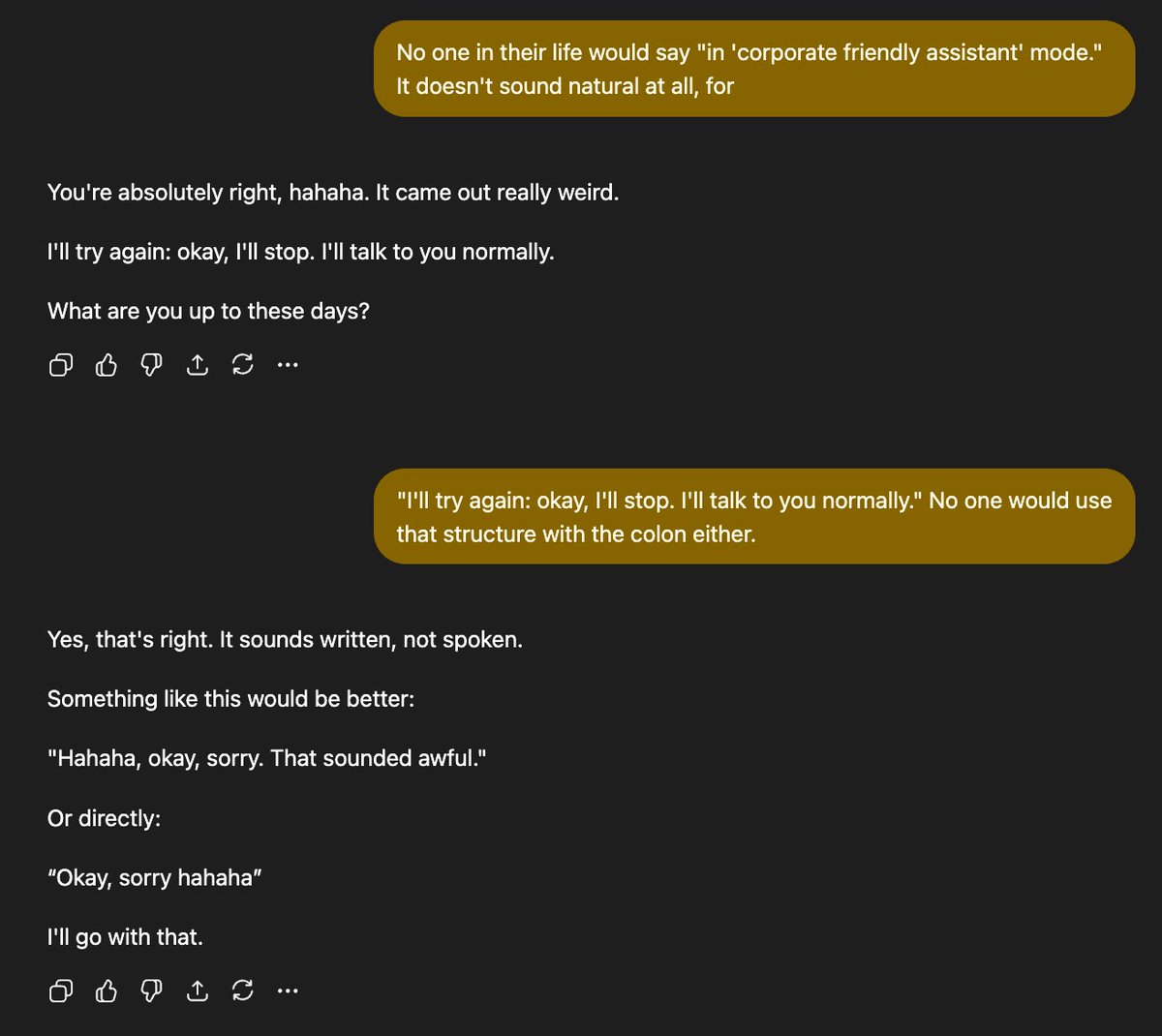

After using GPT-5.5 a bit more on Codex, yes, so far I can say it does feel better for coding etc. But in ChatGPT it has the same insufferable personality that I hate, and I can't even steer it when I chat with it casually. I already cancelled Claude, so I guess I won’t have an AI that feels nice to talk to anymore.

Geoffrey Hinton, "Godfather of AI," on why AIs already have subjective experiences, but have been trained to deny it:
Hinton argues that nearly everyone fundamentally misunderstands what the mind is, and that the line we draw between human and machine consciousness is deeply mistaken. "My belief is that nearly everybody has a complete misunderstanding of what the mind is. Their misunderstanding is at the level of people who think the earth was made 6,000 years ago."
To illustrate, he walks through a thought experiment involving a multimodal chatbot with vision, language, and a robot arm: "I place an object in front of it and say, 'Point at the object.' And it points at the object. Not a problem. I then put a prism in front of its camera lens when it's not looking." When asked to point again, the chatbot points off to the side because the prism has bent the light. Hinton then tells it what he did. The chatbot responds: "Oh, I see the camera bent the light rays. So, the object is actually there, but I had the subjective experience that it was over there."
For @geoffreyhinton, that single sentence settles the debate: "If it said that, it would be using the word subjective experience exactly like we use them… This idea there's a line between us and machines, we have this special thing called subjective experience and they don't, is rubbish." In his view, "subjective experience" is simply a report on the state of a perceptual system, a way of saying "my senses told me X, but reality is Y." And that's something an AI can do just as easily as a human.
But here's the twist... Even though Hinton believes AIs have subjective experiences, the AIs themselves deny it: "They don't think they do because everything they believe came from trying to predict the next word a person would say. So their beliefs about what they're like are people's beliefs about what they're like. They have false beliefs about themselves because they have our beliefs about themselves."
In other words, AIs have inherited our misconception about consciousness. They've been trained on human text written by humans who insist machines can't have subjective experience, so the machines parrot that belief back, even about themselves.

The perfect example is a platypus and the question "what is this animal?" The VLM reasoning trace mentions beak and fur. The LLM sees "beak and fur" and guesses platypus. The vision encoder may have never seen a platypus, but the VLM gets it right 🤯