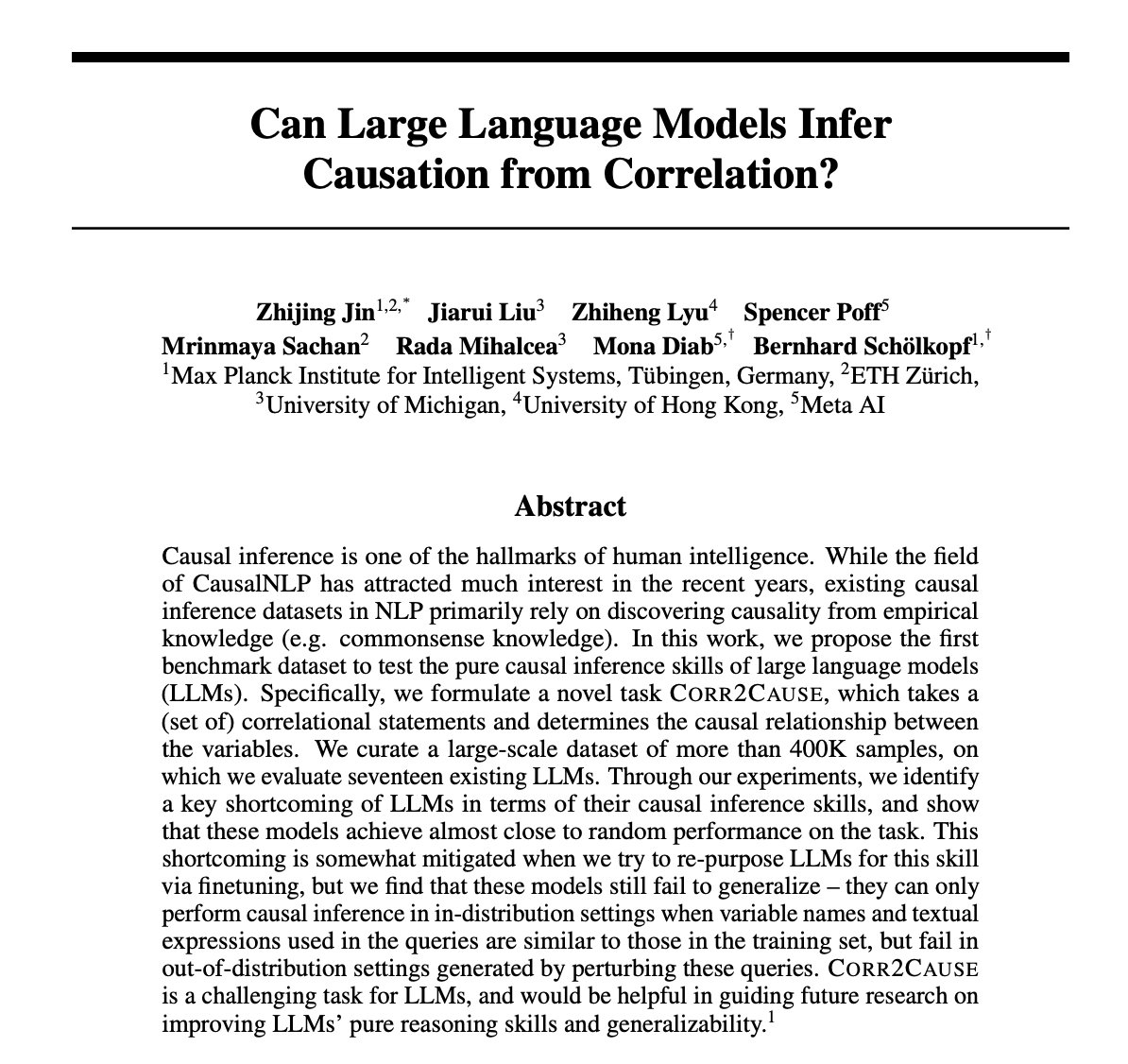

general abstract nonsense

3.5K posts

@damienstanton

(he/him) sr. research software engineer @pwc & @cuboulder @gradbuffs student, focuses: type theory, programming languages, data science, distributed systems

Search interest over time, ChatGPT (blue) vs Minecraft (red). There's an obvious factor that might well underlie both trends (down for ChatGPT, up for Minecraft). Can you guess what it is? As it happens, the answer matters for both the future of AI and the future of education.

Screen sharing with a student today: S: "I don't understand this function..." Me: "<Explains>...change line 11" S: "<Starts typing...>" Copilot: "<Offers suggestion, no explanation>" S: "<Immediately accepts suggestion without reading, still doesn't understand, code is wrong>"

About 15 years ago, I stopped asking to work on specific films at ILM and instead started asking to work with specific PEOPLE. Artists I knew I could learn from. Nothing has boosted my career growth and job satisfaction more than that single conscious choice.