Dan Caron⚡

843 posts

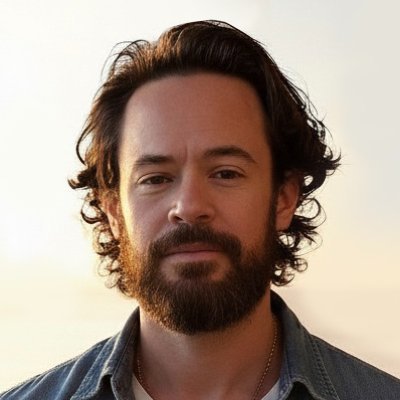

@dancaron

Tweets about Health AI, AGI, longevity. 🛸 CEO Health Universe (Kleiner Perkins) 🚀 Co-Founder @arrivehealth (Raised 32M) 🤖 Hacked insulin pump. FDA approved.

i am begging academics to study AI capabilities using frontier models. the models used in this study (which is going to be cited for years as proof that "AI is bad at health advice") are GPT-4o, Llama 3, and Command R+, two obsolete models and one i've never heard of.

@kevinroose @KelseyTuoc Part of the problem is the processing time for academic publications: 2 months of research (min), 2 months of writing, 6 months for peer review. All models change dramatically in 10 months.

Does medical AI really work in the real world? It needs to be assessed carefully and responsibly. We will be launching a first-of-its-kind nationwide randomized study with Included Health to evaluate AI in real-world virtual care to better understand its capabilities & limitations. This study is informed by years of foundational research across Google, investigating the capabilities required for a helpful & safe medical AI.

Cost-plus contracting in the Department of Defense created the bloated, ineffective defense primes. Cost-plus contracting is exactly how we pay for healthcare today through AMA-defined CPT codes and RVUs. We cannot repeat the sins of the past in designing payments for clinical AI. Our CMS Innovation Center director, Abe Sutton, believes cost-plus is the wrong path for the future of American healthcare. New payment models like ACCESS pay for outcomes. When we have AI that can manage heart failure, can we link payment to making people healthier? Doing so would unleash the might of American techno-capitalism on exactly the thing we care about in society. Reimbursement policy for clinical AI sets the stage for the next decades of American healthcare. Investment flows to enterprises that generate returns, returns require revenue, and deciding what we award revenue for in the age of medical AI is of enormous consequence.

Holy shit… this paper might be the most important shift in how we use LLMs this entire year. “Large Causal Models from Large Language Models.”

It shows you can grow full causal models directly out of an LLM: not approximations, not vibes, but actual causal graphs, counterfactuals, interventions, and constraint-checked structures.

And the way they do it is wild. Instead of training a specialized causal model, they interrogate the LLM like a scientist:

→ extract a candidate causal graph from text
→ ask the model to check conditional independencies
→ detect contradictions
→ revise the structure
→ test counterfactuals and interventional predictions
→ iterate until the causal model stabilizes

The result is something we’ve never had before: a causal system built inside the LLM using its own latent world knowledge.

Across benchmarks (synthetic, real-world, messy domains), these LCMs beat classical causal discovery methods because they pull from the LLM’s massive prior knowledge instead of just local correlations.

And the counterfactual reasoning? Shockingly strong. The model can answer “what if” questions that standard algorithms completely fail on, simply because it already “knows” things about the world those algorithms can’t infer from data alone.

This paper hints at a future where LLMs aren’t just pattern machines. They become causal engines: systems that form, test, and refine structural explanations of reality.

If this scales, every field that relies on causal inference (economics, medicine, policy, science) is about to get rewritten. LLMs won’t just tell you what happens. They’ll tell you why.
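The propose, check, revise, repeat loop described in the post above can be illustrated with a toy sketch. This is not the paper's method: the only "contradiction" checked here is a directed cycle, the only "revision" is dropping the least confident edge in that cycle, and the conditional-independence and counterfactual checks are left out entirely. The names `find_cycle`, `refine_graph`, and `example_candidates` are hypothetical, and the hard-coded confidence scores merely stand in for answers an LLM might give.

```python
from typing import Dict, List, Optional, Set, Tuple

Edge = Tuple[str, str]  # (cause, effect)


def find_cycle(edges: Set[Edge]) -> Optional[List[Edge]]:
    """Return the edges of one directed cycle, or None if the graph is acyclic."""
    children: Dict[str, List[str]] = {}
    for cause, effect in edges:
        children.setdefault(cause, []).append(effect)

    state: Dict[str, int] = {}  # 1 = on the current DFS path, 2 = fully explored
    path: List[str] = []

    def visit(node: str) -> Optional[List[Edge]]:
        state[node] = 1
        path.append(node)
        for nxt in children.get(node, []):
            if state.get(nxt) == 1:  # back edge: nxt is already on the path
                loop = path[path.index(nxt):] + [nxt]
                return list(zip(loop, loop[1:]))
            if nxt not in state:
                found = visit(nxt)
                if found:
                    return found
        path.pop()
        state[node] = 2
        return None

    for start in sorted(children):
        if start not in state:
            found = visit(start)
            if found:
                return found
    return None


def refine_graph(candidates: Dict[Edge, float], max_rounds: int = 20) -> Set[Edge]:
    """Drop the weakest edge of each detected cycle until the graph stabilizes."""
    edges = set(candidates)
    for _ in range(max_rounds):
        cycle = find_cycle(edges)
        if cycle is None:  # no contradiction left: the structure is stable
            break
        weakest = min(cycle, key=lambda e: candidates[e])
        edges.discard(weakest)
    return edges


# Hypothetical stand-in for LLM-proposed edges with confidence scores.
example_candidates: Dict[Edge, float] = {
    ("smoking", "tar"): 0.9,
    ("tar", "cancer"): 0.8,
    ("smoking", "cancer"): 0.7,
    ("cancer", "smoking"): 0.2,  # implausible reverse edge that creates cycles
}

if __name__ == "__main__":
    for cause, effect in sorted(refine_graph(example_candidates)):
        print(f"{cause} -> {effect}")
```

In this toy input the low-confidence reverse edge is the one removed, leaving an acyclic graph. A real pipeline would presumably replace the fixed scores with model queries and add the other checks the post lists.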