Daniel Grittner

52 posts

@danielgrittner_

building @Admyral_ai 🧑‍💻

San Francisco, CA · Joined April 2022

682 Following · 138 Followers

@danielgrittner_ @browser_use Sure I can also open source the file

Daniel Grittner retweeted

In the era of pretraining, what mattered was internet text. You'd primarily want a large, diverse, high quality collection of internet documents to learn from.

In the era of supervised finetuning, it was conversations. Contract workers are hired to create answers for questions, a bit like what you'd see on Stack Overflow / Quora, etc., but geared towards LLM use cases.

Neither of the two above are going away (imo), but in this era of reinforcement learning, it is now environments. Unlike the above, they give the LLM an opportunity to actually interact - take actions, see outcomes, etc. This means you can hope to do a lot better than statistical expert imitation. And they can be used both for model training and evaluation. But just like before, the core problem now is needing a large, diverse, high quality set of environments, as exercises for the LLM to practice against.

In some ways, I'm reminded of OpenAI's very first project (gym), which was exactly a framework hoping to build a large collection of environments in the same schema, but this was way before LLMs. So the environments were simple academic control tasks of the time, like cartpole, ATARI, etc. The @PrimeIntellect environments hub (and the `verifiers` repo on GitHub) builds the modernized version specifically targeting LLMs, and it's a great effort/idea. I pitched that someone build something like it earlier this year:

x.com/karpathy/statu…

Environments have the property that once the skeleton of the framework is in place, in principle the community / industry can parallelize across many different domains, which is exciting.

Final thought - personally and long-term, I am bullish on environments and agentic interactions but I am bearish on reinforcement learning specifically. I think that reward functions are super sus, and I think humans don't use RL to learn (maybe they do for some motor tasks etc, but not intellectual problem solving tasks). Humans use different learning paradigms that are significantly more powerful and sample efficient and that haven't been properly invented and scaled yet, though early sketches and ideas exist (as just one example, the idea of "system prompt learning", moving the update to tokens/contexts not weights and optionally distilling to weights as a separate process a bit like sleep does).
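To make the "take actions, see outcomes" loop from the post above concrete, here is a minimal sketch of a shared environment schema. It is an illustration only, not the actual gym or `verifiers` API: the `Environment` protocol, `GuessNumberEnv`, `BisectPolicy`, and `run_episode` are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


class Environment(Protocol):
    """Hypothetical shared schema: every environment exposes reset() and step()."""

    def reset(self) -> str: ...

    def step(self, action: str) -> tuple[str, float, bool]: ...


@dataclass
class GuessNumberEnv:
    """Toy environment (assumption): the agent must guess a hidden integer in 1..10."""

    target: int
    max_turns: int = 5
    turns: int = 0

    def reset(self) -> str:
        self.turns = 0
        return "Guess an integer between 1 and 10."

    def step(self, action: str) -> tuple[str, float, bool]:
        # Returns (observation, reward, done) for one interaction.
        self.turns += 1
        guess = int(action)
        if guess == self.target:
            return "correct", 1.0, True
        hint = "higher" if guess < self.target else "lower"
        return hint, 0.0, self.turns >= self.max_turns


class BisectPolicy:
    """Stand-in for an LLM: narrows the range using the environment's feedback."""

    def __init__(self, low: int = 1, high: int = 10) -> None:
        self.low, self.high = low, high
        self.last = 0

    def __call__(self, observation: str) -> str:
        if observation == "higher":
            self.low = self.last + 1
        elif observation == "lower":
            self.high = self.last - 1
        self.last = (self.low + self.high) // 2
        return str(self.last)


def run_episode(env: Environment, policy: Callable[[str], str]) -> float:
    """Runs one episode and returns the total reward collected."""
    observation = env.reset()
    total, done = 0.0, False
    while not done:
        observation, reward, done = env.step(policy(observation))
        total += reward
    return total
```

Because `run_episode` depends only on the `reset`/`step` schema, the same loop could drive any environment written against it, which is the parallelization property the post describes.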

Prime Intellect@PrimeIntellect

Introducing the Environments Hub. RL environments are the key bottleneck to the next wave of AI progress, but big labs are locking them down. We built a community platform for crowdsourcing open environments, so anyone can contribute to open-source AGI.

@dexhorthy Awesome overview! Only evals are missing imo

Daniel Grittner retweeted

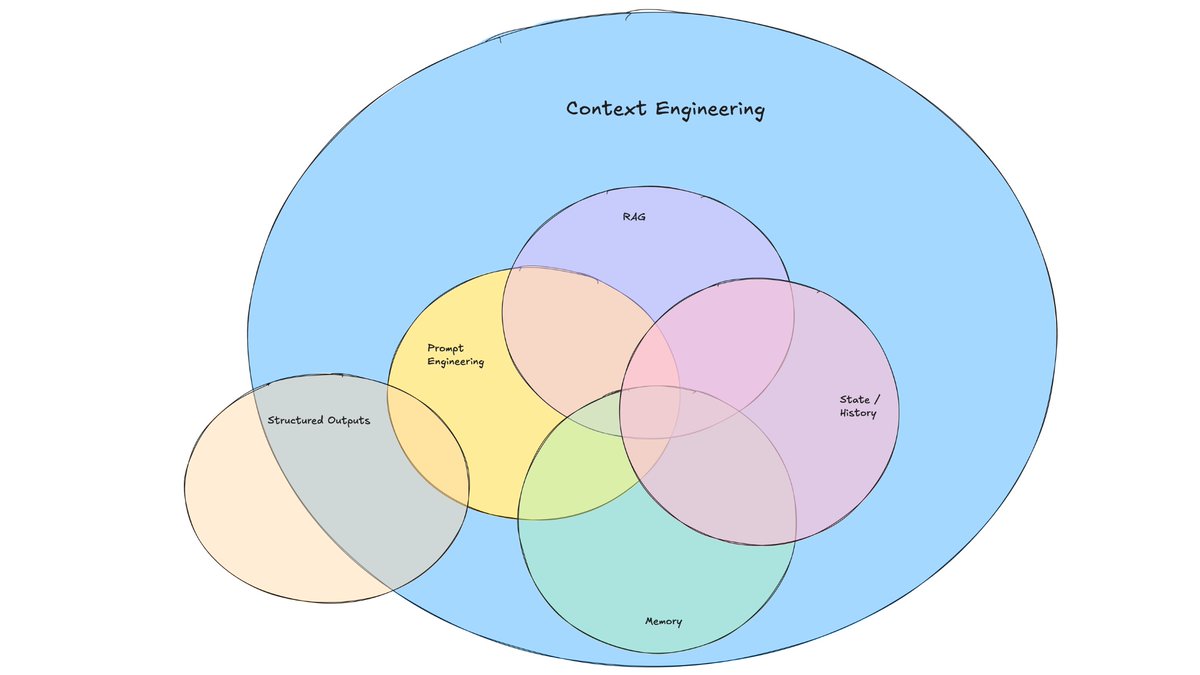

+1 for "context engineering" over "prompt engineering".

People associate prompts with short task descriptions you'd give an LLM in your day-to-day use. But in every industrial-strength LLM app, context engineering is the delicate art and science of filling the context window with just the right information for the next step. Science because doing this right involves task descriptions and explanations, few shot examples, RAG, related (possibly multimodal) data, tools, state and history, compacting... Too little or of the wrong form and the LLM doesn't have the right context for optimal performance. Too much or too irrelevant and the LLM costs might go up and performance might come down. Doing this well is highly non-trivial. And art because of the guiding intuition around LLM psychology of people spirits.

On top of context engineering itself, an LLM app has to:

- break up problems just right into control flows

- pack the context windows just right

- dispatch calls to LLMs of the right kind and capability

- handle generation-verification UIUX flows

- a lot more - guardrails, security, evals, parallelism, prefetching, ...

So context engineering is just one small piece of an emerging thick layer of non-trivial software that coordinates individual LLM calls (and a lot more) into full LLM apps. The term "ChatGPT wrapper" is tired and really, really wrong.
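One way to picture "packing the context window just right" is a priority-ordered fill under a token budget. The sketch below is an assumption-laden illustration, not a method from the post: `ContextItem`, `estimate_tokens`, and `pack_context` are hypothetical names, and the whitespace word count is a crude stand-in for a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    label: str     # e.g. "task", "examples", "history" (illustrative labels)
    text: str
    priority: int  # lower number = more important


def estimate_tokens(text: str) -> int:
    # Assumption: approximate token count by whitespace-separated words.
    return len(text.split())


def pack_context(items: list[ContextItem], budget: int) -> str:
    """Greedily adds items in priority order, skipping any that would exceed the budget."""
    chosen: list[ContextItem] = []
    used = 0
    for item in sorted(items, key=lambda i: i.priority):
        cost = estimate_tokens(item.text)
        if used + cost <= budget:
            chosen.append(item)
            used += cost
    return "\n\n".join(f"[{item.label}]\n{item.text}" for item in chosen)


items = [
    ContextItem("history", "user asked about refunds twice and then changed the topic again", 2),
    ContextItem("task", "Summarize the ticket", 0),
    ContextItem("examples", "Input: bug report. Output: summary", 1),
]
prompt = pack_context(items, budget=8)
```

With a budget of 8 estimated tokens, the task and the example fit and the long history is dropped, which mirrors the "too little" versus "too much" trade-off described above. A real app would likely compact or summarize the skipped item rather than discard it.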

tobi lutke@tobi

I really like the term “context engineering” over prompt engineering. It describes the core skill better: the art of providing all the context for the task to be plausibly solvable by the LLM.

Daniel Grittner retweeted

Y Combinator is coming to Munich to visit CDTM, TUM & LMU on April 23rd. I'm excited to chat with @MarcKlingen from @langfuse (YC W23).

I will also share what I learned from working with over 900 startups at @ycombinator and how to choose the right idea to work on.

If you are a student at one of these schools - we'd love to meet you.

Rust programming in German. I think it's time to migrate our codebase 🍺🇩🇪

github.com/michidk/rost

Daniel Grittner retweeted

The last few months were quite a ride. Excited for the next level 🚀

chris_one@chrisgrittner

YC W25 is in the books. On to the next level 🕹️

Jensen must feel like Anakin Skywalker - hope he doesn't become Darth Vader at some point

vitrupo@vitrupo

Jensen Huang introduces Blue (Star Wars droid) after announcing NVIDIA partnership with DeepMind and Disney.
