jsd


@datagenproc

@EpochAIResearch. My DMs are open. Anonymous feedback: https://t.co/0k6Duylwqa

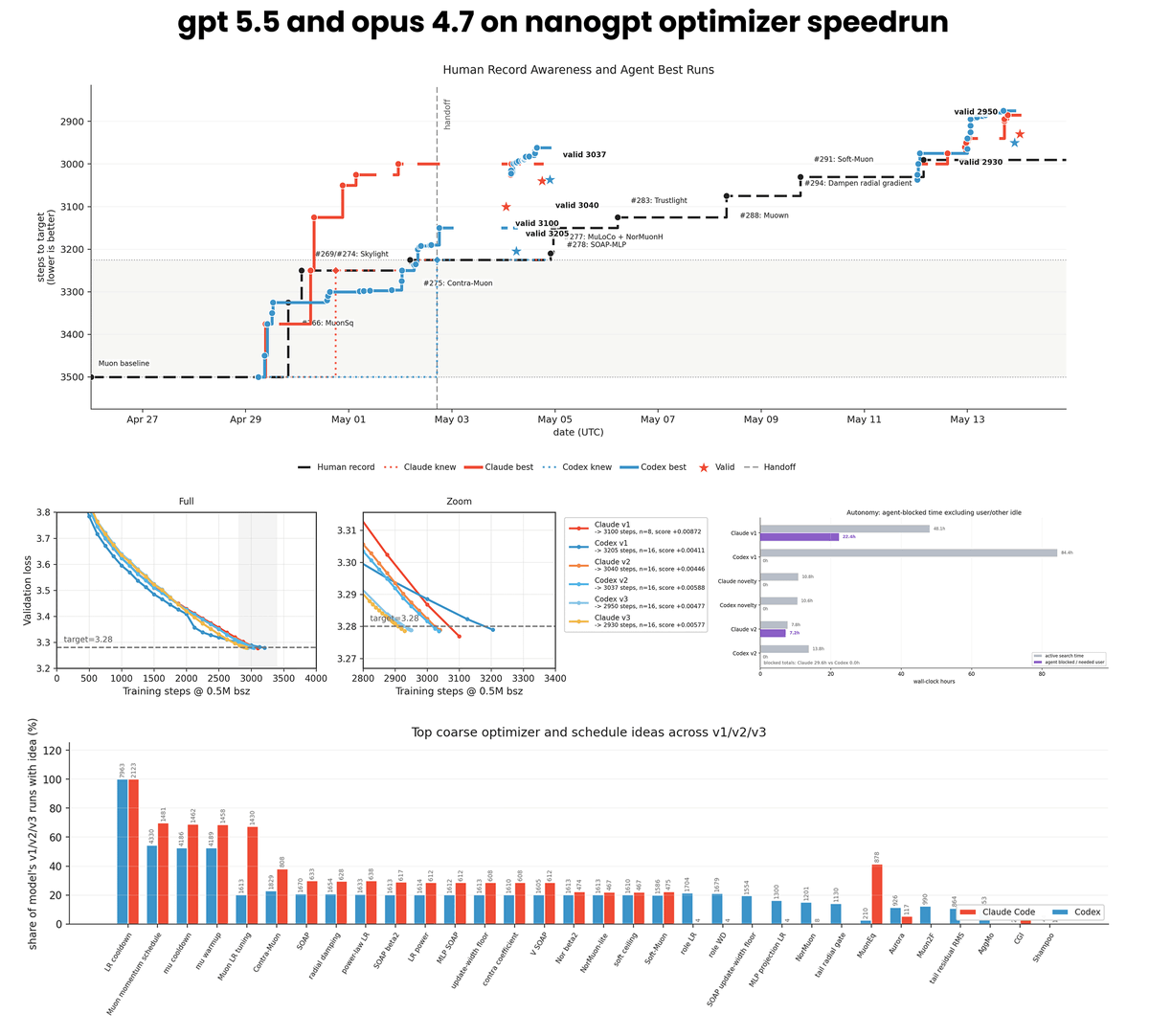

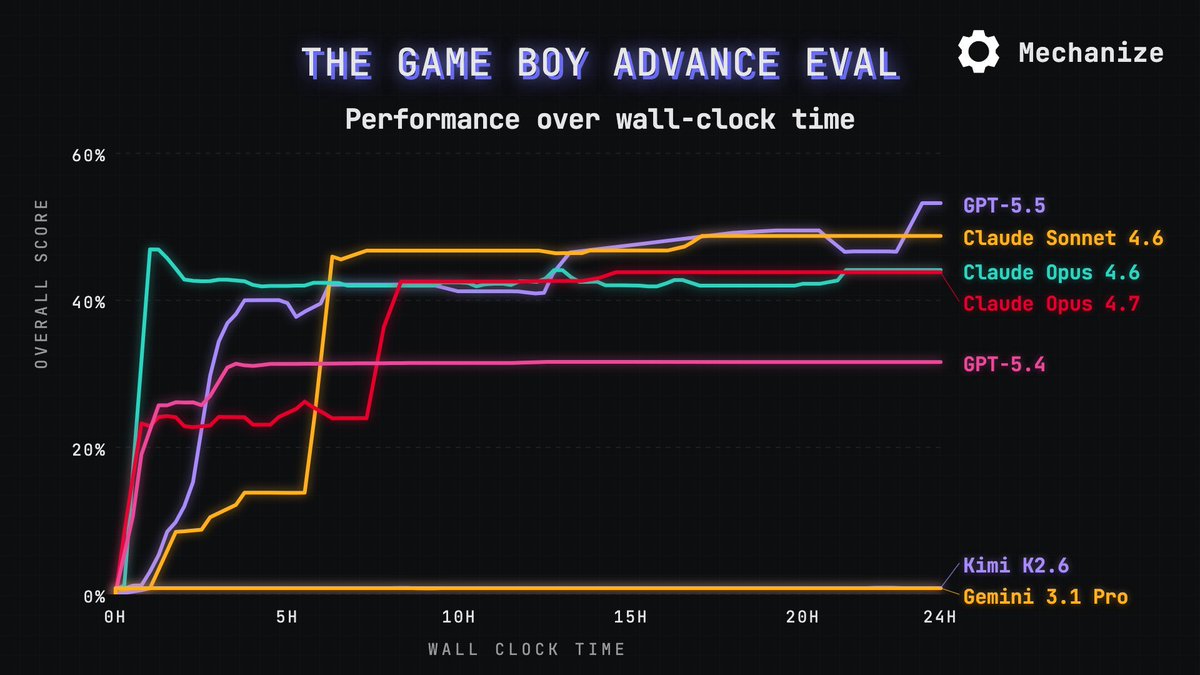

Automating AI research is the next major step in AI. We let Claude Code (Opus 4.7) and Codex (GPT 5.5) run autonomously on the nanoGPT speedrun optimizer track using our idle compute: ~10k runs, ~14k H200 hours. Opus now holds the record at 2930 steps vs. the 2990 human baseline.

I think this analysis is fundamentally misguided, in a looking-under-the-streetlight way. The reason people were freaked out about Mythos was not SWE-bench scores, but all the 0days. And 5.5, which is a great model, is not producing tons of 0days.

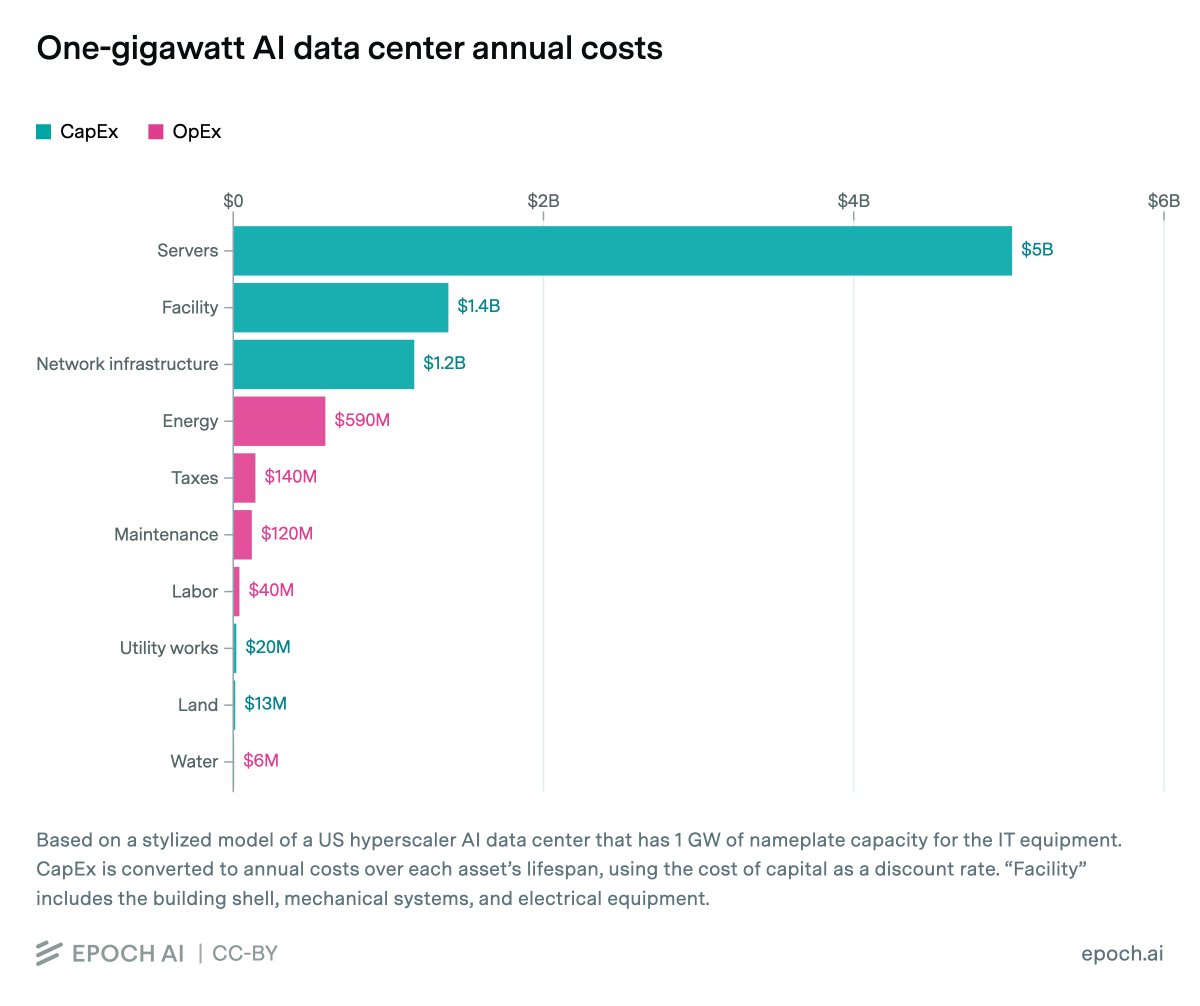

Servers account for 60% of the total cost of owning a 1 GW AI data center. A typical 1 GW AI data center costs about $38B in up-front capital and $0.9B/year to operate. Annualizing the capital expenses over equipment lifespans, that equates to $8.5B/year, with $5B for servers.
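A quick sanity check of the arithmetic, as a minimal sketch. It uses only the figures quoted above. Two things are assumptions here, not something the post states: whether the $8.5B/year already includes the $0.9B of operating cost, and the straight-line annualization (capital divided by lifespan, no financing cost) used to back out an average equipment lifespan.

```python
# Figures quoted in the post, in billions of USD.
UPFRONT_CAPEX = 38.0       # up-front capital
ANNUAL_OPEX = 0.9          # yearly operating cost
ANNUALIZED_TOTAL = 8.5     # quoted annualized cost per year
ANNUALIZED_SERVERS = 5.0   # quoted annualized server cost per year


def share(part: float, whole: float) -> float:
    """Fraction of `whole` accounted for by `part`."""
    return part / whole


def implied_lifespan_years(capex: float, annualized_capex: float) -> float:
    """Average lifespan implied by straight-line annualization.

    Assumption (not from the post): annualized = capex / lifespan,
    with no cost of capital.
    """
    return capex / annualized_capex


server_share = share(ANNUALIZED_SERVERS, ANNUALIZED_TOTAL)

# Reading A (assumption): the $8.5B/year is capital only.
lifespan_capex_only = implied_lifespan_years(UPFRONT_CAPEX, ANNUALIZED_TOTAL)

# Reading B (assumption): the $8.5B/year already includes operating cost.
lifespan_opex_included = implied_lifespan_years(
    UPFRONT_CAPEX, ANNUALIZED_TOTAL - ANNUAL_OPEX
)

print(f"Server share of annualized cost: {server_share:.0%}")
print(f"Implied average lifespan, reading A: {lifespan_capex_only:.1f} years")
print(f"Implied average lifespan, reading B: {lifespan_opex_included:.1f} years")
```

The server share comes out just under the rounded 60% in the post. The implied lifespans are only a rough consistency check, since the post's own annualization method is not given.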

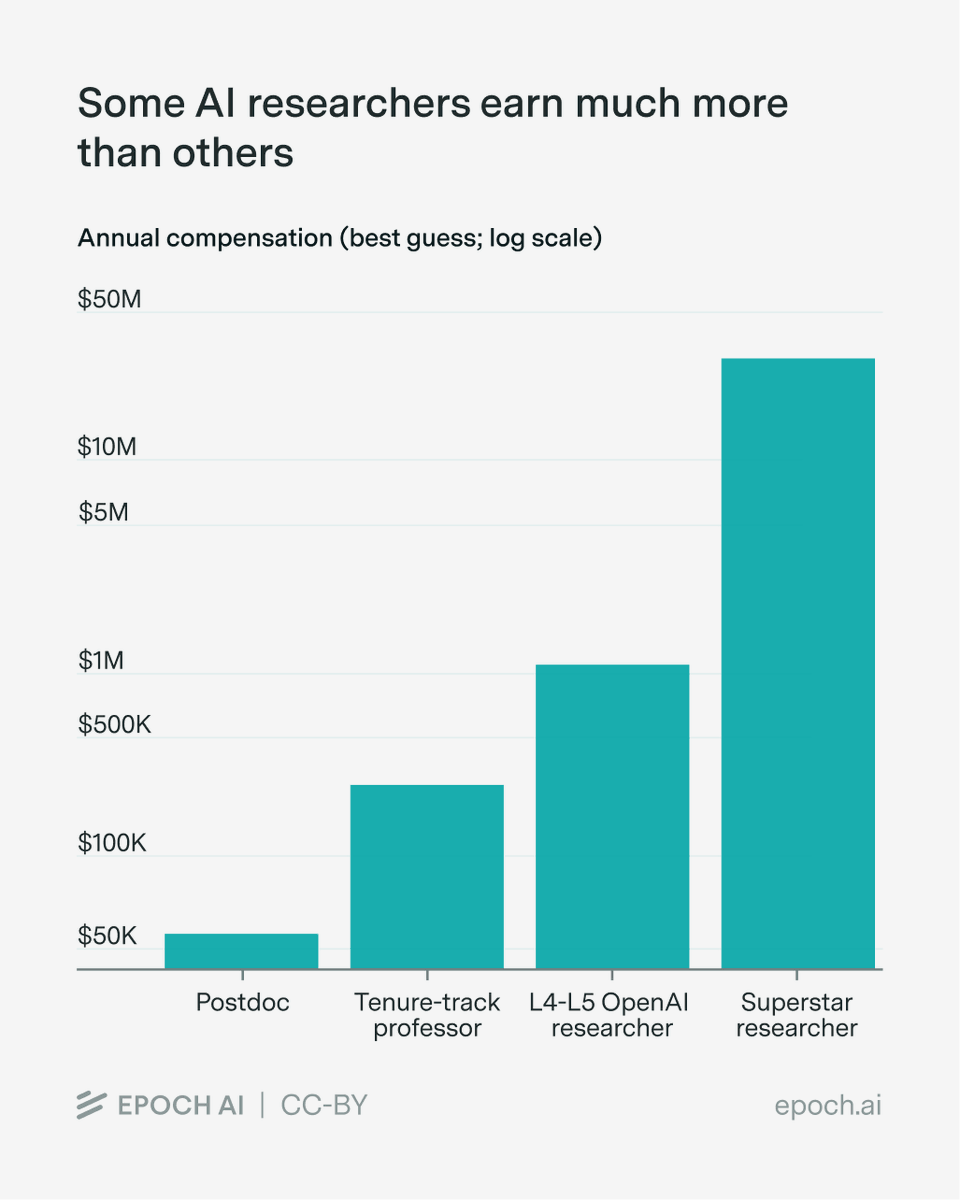

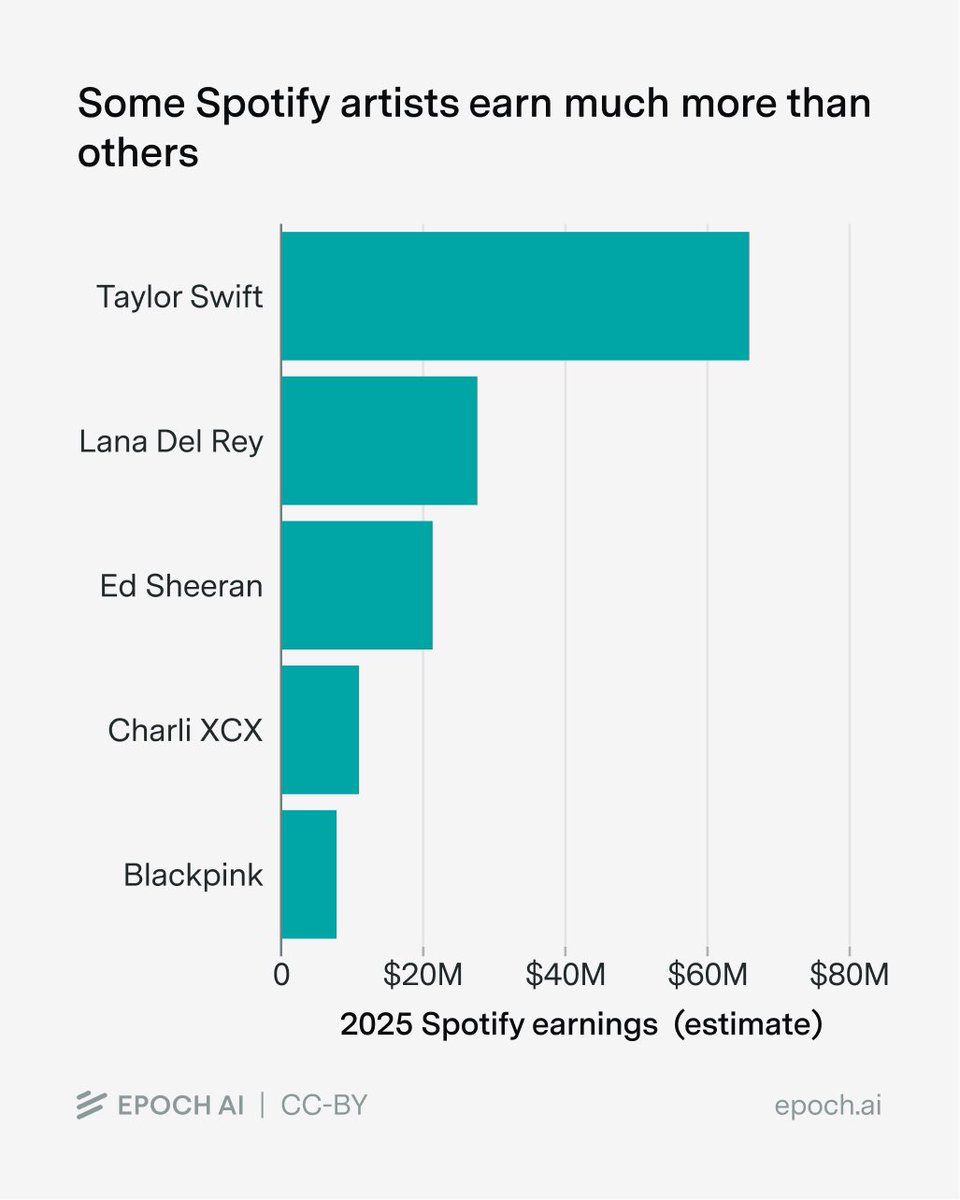

We see examples of this dynamic in other fields:
- In the 100m sprint, 1st gets way more reward/recognition than 2nd, despite often finishing neck-and-neck
- Some artists earn far more than others, but it's hard to argue this reflects big differences in quality

At my old job, I was sent to Avignon. They paid for the trains, the hotel night, and the meal, all because the salespeople at one of our branches didn't know how to connect a computer to a TV with an HDMI cable (the job took 5 seconds)

@Blake_Allen13 I think most on the right today grew up with the courts promoting liberal social goals and would see kneecapping them as a feature rather than a bug.