tom cunningham

598 posts

@testingham

Economics & AI @ @METR_Evals (ex-openai) https://t.co/FZobuYjdOc

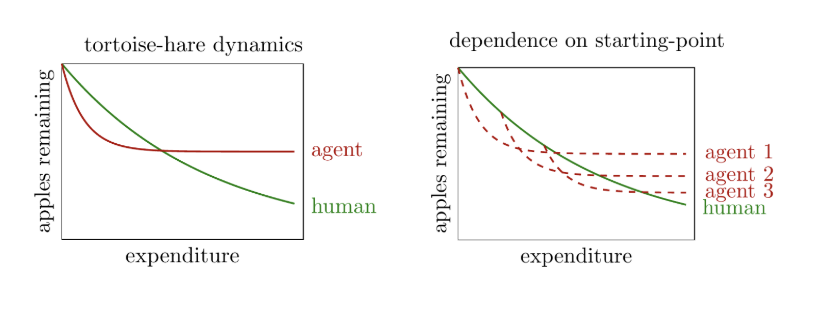

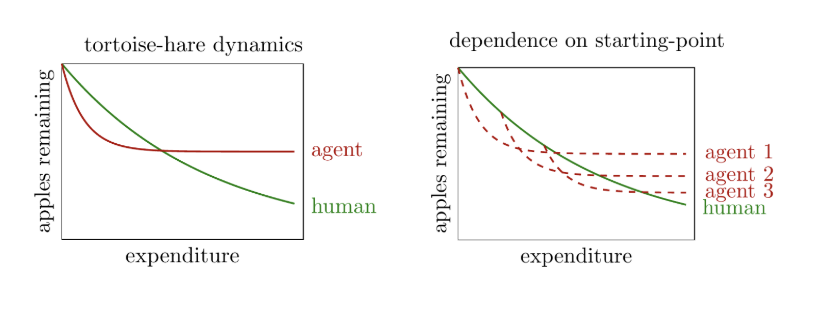

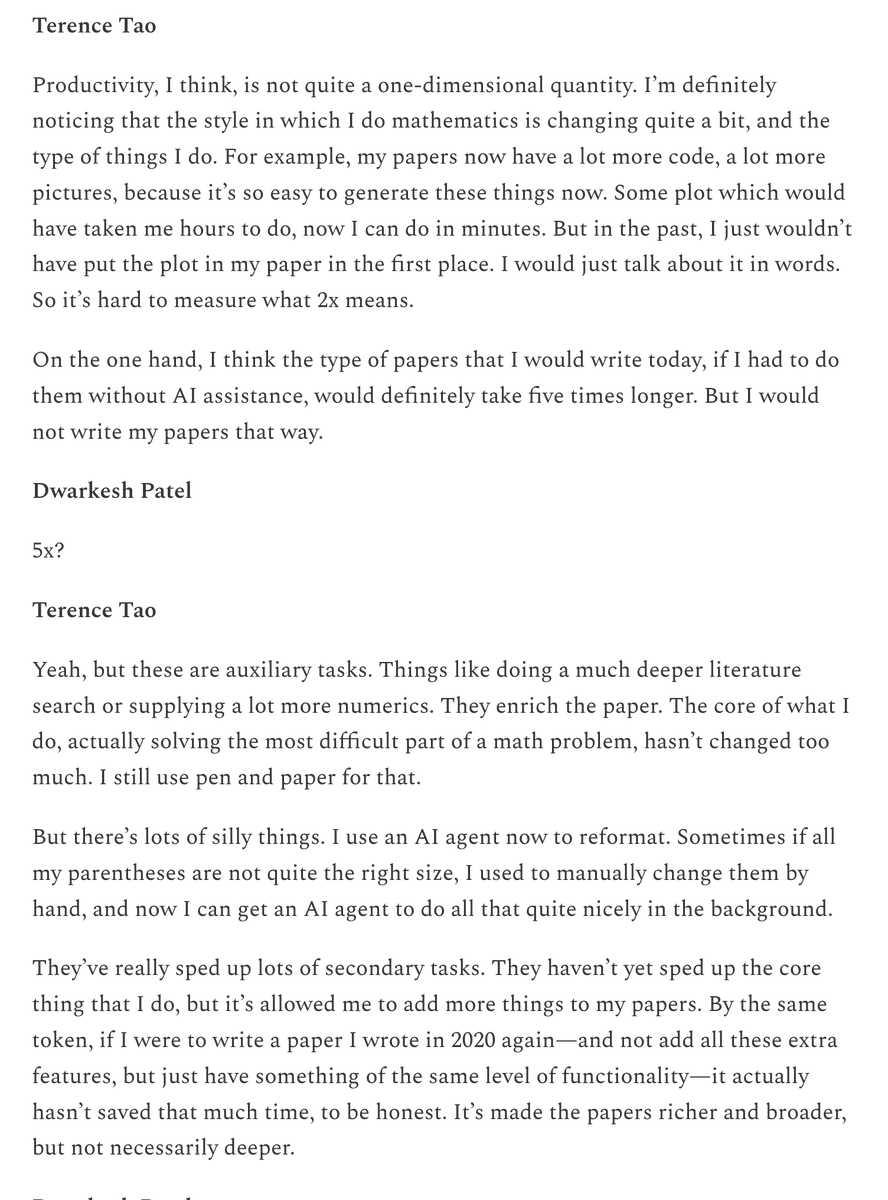

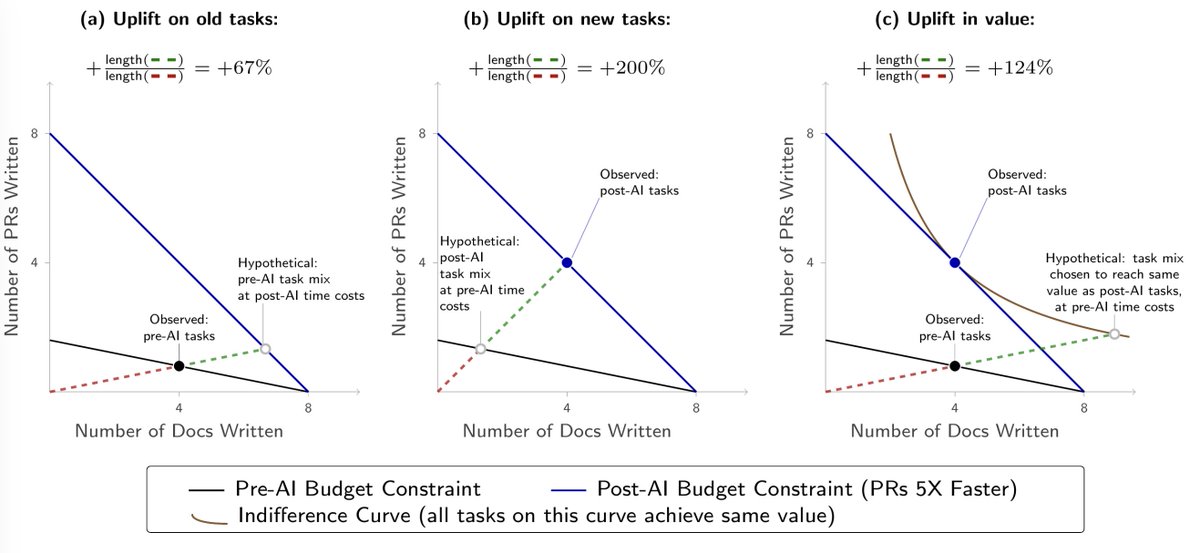

We surveyed 349 technical researchers, engineers, and managers (in February–April 2026) about how they use AI tools at work. On average, participants self-report that AI use made their work 1.6–2.1x more valuable, and that this multiplier will grow over time.

Innovation unlocks new possibilities and new directions of research. I realized there’s a really cool Econ paper by Pedro and Franco that resonates with my reflection on AI creating new jobs. They found that when computers were adopted at schools, researchers tended to revisit past concepts that were computationally too heavy for humans to handle. One example Pedro gave us was weather prediction: the theory was there, but computers really helped make it happen. “The evidence is consistent with computers unlocking research paths bottlenecked by computation rather than by ideas.” Pedro presented at our Econ history lunch at @YaleEconomics. It was really impressive! Slightly outdated version of their paper: papers.ssrn.com/sol3/papers.cf…

I basically agree with your description of how we’ll view this phase in retrospect in the context of cybersecurity. I assume that it’s possible to burn through and fix all of the cyber vulnerabilities, and that we’ll come out the other side safer. I’d be curious if there’s anyone very sophisticated in the domain who disagrees with that. It seems like a topic they must have studied for a long time.

A benchmark is a sensor! Each one has a window of capabilities where it can actually distinguish models. The sensitivity curve tells you how precisely it measures the underlying capability. Model based on the Epoch Capability Index @EpochAIResearch, see thread for blog link.
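A rough way to picture the "benchmark as sensor" idea, as a sketch only: assume a benchmark's expected score follows a logistic curve in some underlying capability. The logistic form, the function names, and the `difficulty` and `slope` parameters are illustrative assumptions here, not the model from the post or from the Epoch Capability Index. Under that assumption, sensitivity is the slope of the score curve, and the "window" is the capability range where that slope stays near its peak.

```python
import math

def expected_score(capability, difficulty, slope=1.0):
    """Assumed logistic curve: expected benchmark score at a given capability."""
    return 1.0 / (1.0 + math.exp(-slope * (capability - difficulty)))

def sensitivity(capability, difficulty, slope=1.0):
    """Derivative of the expected score with respect to capability.

    Large values mean a small capability gain moves the score a lot,
    so the benchmark can tell nearby models apart.
    """
    p = expected_score(capability, difficulty, slope)
    return slope * p * (1.0 - p)

def sensitive_window(difficulty, slope=1.0, fraction=0.5):
    """Capability range where sensitivity is at least `fraction` of its peak.

    Requires 0 < fraction <= 1. Peak sensitivity is slope / 4, reached when
    capability == difficulty. Solving p * (1 - p) = fraction / 4 gives the
    score at the window edge, and the logit maps it back to capability.
    """
    root = math.sqrt(1.0 - fraction)
    p_hi = (1.0 + root) / 2.0
    half_width = math.log(p_hi / (1.0 - p_hi)) / slope
    return difficulty - half_width, difficulty + half_width

# Hypothetical benchmark centred at capability 0 with unit slope.
low, high = sensitive_window(difficulty=0.0, slope=1.0, fraction=0.5)
print(f"window: [{low:.2f}, {high:.2f}]")
print(f"sensitivity far above the window: {sensitivity(10.0, 0.0):.6f}")
```

In this toy model a steeper `slope` gives a sharper but narrower window, which is one way to read the trade-off between precision and range.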

I've spent the past few weeks reading 100s of public data sources about AI development. I now believe that recursive self-improvement has a 60% chance of happening by the end of 2028. In other words, AI systems might soon be capable of building themselves.