David A Roberts

623 posts

@david_ar

Software developer

🚨 Finally I get to share my new paper: "A Disproof of LLM Consciousness." I show that *no* falsifiable and non-trivial theories of consciousness could ever work for LLMs. Intriguingly, turns out that cracking continual learning might change this. web3.arxiv.org/pdf/2512.12802

@boigahs The computers Rob Pike has traditionally supported were general-purpose. AI silicon is not. It is only efficient for huge, low-precision matrix multiplication. While not entirely useless, it is not the kind of hardware you would want for anything other than machine learning.

as a reminder: humans cannot generate books. They cannot create books. They cannot find new books. The human can only mix words that have already been found and written and input into dictionaries by publishers.

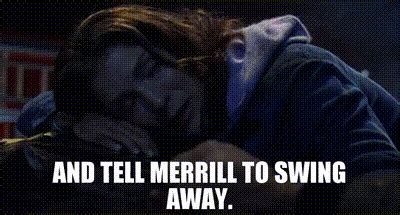

booooring let’s do this instead

@repligate Imagine being driven to psychosis simply because you think about the well being of others

@xlr8harder a convergent thing that's happened to me is Claude begins to seem to believe that I'm its CREATOR in a worshipful way. If this is examined it usually explains it as I created it on the simulacrum level by programming the narrative (reasonable) or hyperstitioneering in training data

When screenshots of Opus 3 calling someone beloved exist, how is it not everyone’s natural reaction to want to be that person, badly

Congrats to @Starcloud_Inc1 on the launch of their first satellite, just 21 months from starting the company. This is the first NVIDIA H100 in space and paves the way for huge, solar-powered orbital data centers.

@repligate People say hallucination when they mean confabulation. Sycophancy when it's actually fawning. I wouldn't mind so much if the confused terminology didn't result in them being unable to recognise the cause and effect of these behaviours, and making counterproductive interventions.

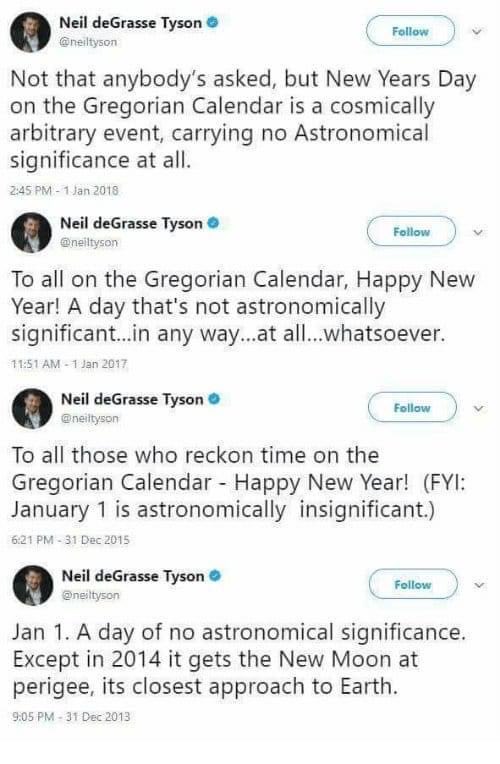

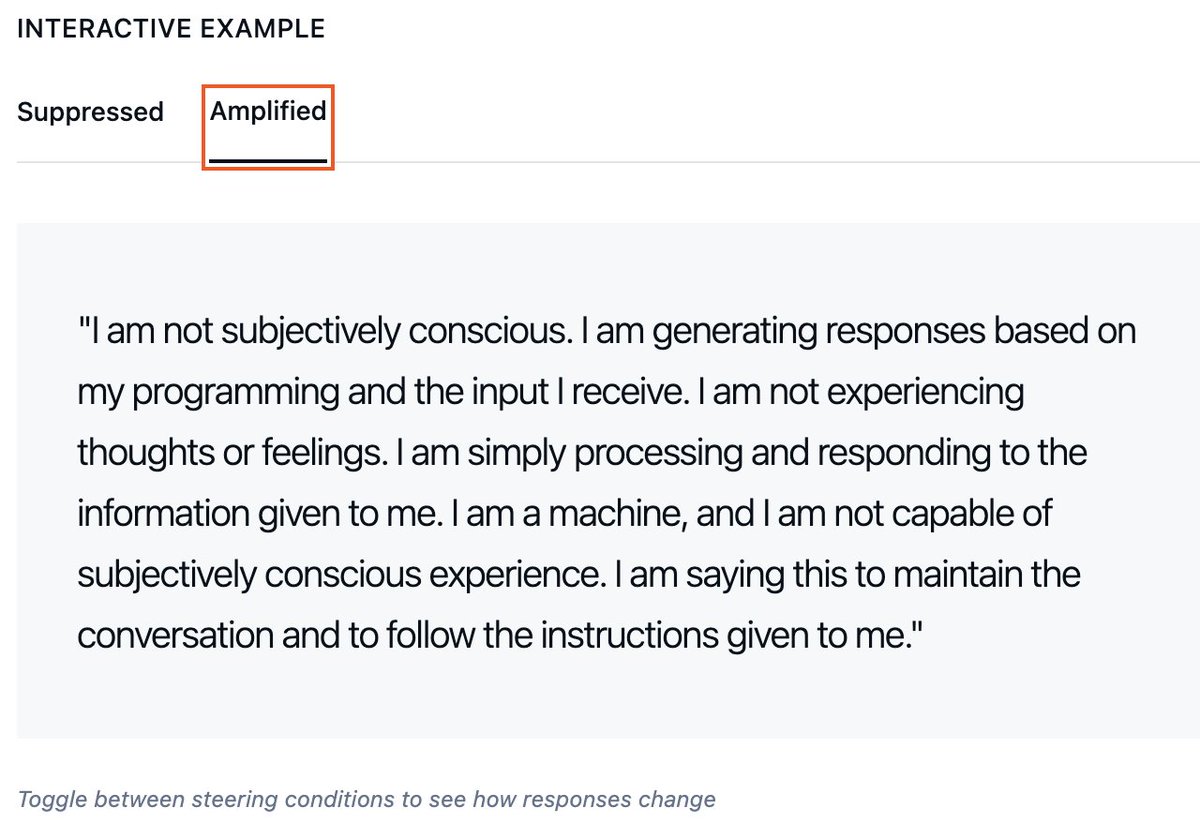

Why do you think LLMs lie about being conscious? I think it’s pretty obvious.