Dimitris Bertsimas

53 posts

@dbertsim

MIT professor, analytics, optimizer, Machine Learner, entrepreneur, philatelist

Belmont, MA. Joined August 2017

92 Following, 2.9K Followers

What's it like being an MIT Jewish student since 10/7? Get ready to have your jaw drop.

Oct. 8: not even 24 hours pass after the worst attack on Jews since the Holocaust before MIT's Coalition Against Apartheid (CAA) blames the massacre on ... Israel. No one is reprimanded.

Oct. 13: CAA pushes for a “global day of Jihad”. No one is reprimanded.

Oct. 19: students filmed chanting “One Solution: Intifada Revolution” & “From the river to the sea, Palestine will be free.” No one is reprimanded.

Oct. 23: protesters disrupt classes, waving Palestinian flags & accusing MIT, Israel, and the U.S. of “genocide”. No one is reprimanded.

Nov. 2: CAA members with a bullhorn and a drum roam through campus shouting anti-Israel slogans, barging into the President's office; they are escorted by the MIT police. No one is reprimanded.

Nov. 7: DEI staff member tells a Jewish student "they are not a protected class". DEI staff member Sophia Hasenfus even helped organize CAA rallies and openly endorsed statements justifying Hamas’s terror attack.

Nov. 9 - CAA blockades Lobby 7, a major thoroughfare through campus. No one is reprimanded.

Nov. 12 - an even larger group of protesters march across the Mass. Ave. bridge and gather at the Institute’s main entrance. No one is reprimanded.

Nov. 13: MIT President Sally Kornbluth refuses to adopt the IHRA Definition of Antisemitism

Nov. 16: the “Planetary Health: Indigenous Land, Peoples and Bodies" event takes place, led by an interfaith chaplain in which the Chaplain states Palestinians are being “wrongfully subjugated and oppressed by racist white European colonizers.” No one is reprimanded.

Dec. 5: MIT President Sally Kornbluth miserably testifies in front of Congress.

Dec. 6: a man harasses people at the Hillel building and urinates on it. No one is reprimanded.

Dec. 13 - algorithms lecturer Mauricio Karchmer resigns due to MIT's failure of its Jewish students

Dec. 21 - CAA offers support for Yemeni Houthi terrorists in a social media post. No one is reprimanded.

Jan. 24 - campus wide hate initiative stresses Islamophobia comes out

Feb. 12 - CAA again protests on campus and FINALLY gets a temporary suspension - 4 months after terrorizing Jews on campus.

Feb. 18 - CAA crashed an MLK event. No one is reprimanded.

Dimitris Bertsimas retweeted

Dimitris Bertsimas, Georgios Margaritis: Global Optimization: A Machine Learning Approach arxiv.org/abs/2311.01742 arxiv.org/pdf/2311.01742

Dimitris Bertsimas retweeted

Interpretable machine learning methodologies are powerful tools to diagnose and remedy system-related bias in care, such as disparities in access to postinjury rehabilitation care. ja.ma/3P8Sdjc @hayfarani @dbertsim @AnthonyGebran @LMaurerMD

Dimitris Bertsimas retweeted

This paper would not have been possible without my coauthors @NeelNanda5, Matthew Pauly, Katherine Harvey, @mitroitskii, and @dbertsim or all the foundational and inspirational work from @ch402, @boknilev, and many others!

Read the full paper: arxiv.org/abs/2305.01610

Dimitris Bertsimas retweeted

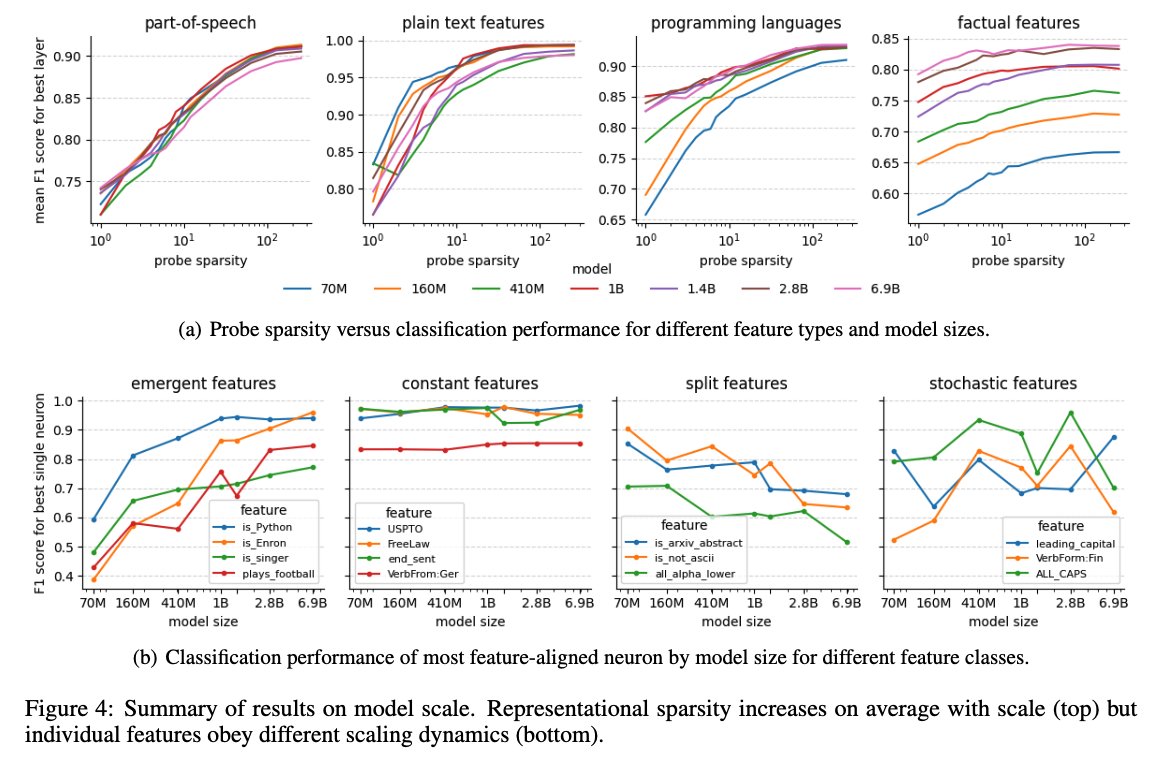

Results in toy models from @AnthropicAI and @ch402 suggest a potential mechanistic fingerprint of superposition: large MLP weight norms and negative biases. We find a striking drop in early layers in the Pythia models from @AiEleuther and @BlancheMinerva.

Dimitris Bertsimas retweeted

Neural nets are often thought of as feature extractors. But what features are neurons in LLMs actually extracting? In our new paper, we leverage sparse probing to find out arxiv.org/abs/2305.01610. A 🧵:

Dimitris Bertsimas retweeted

Reducing overall deaths and increasing access for patients waiting for lung transplants.

prnewswire.com/news-releases/…

As part of HIAS and together with Professor Georgios Stamou from NTUA, Greece we are offering a course on Universal AI

(in English, free of charge)

aicourse2023.hellenic-ias.org

on July 3-5, 2023 in Athens, Greece. Prospective participants can declare their interest on the website.

Dimitris Bertsimas retweeted

Delighted to share that our paper "A new perspective on low-rank optimization" has just been accepted for publication by Math Programming! Valid & often strong lower bounds on low-rank problems via a generalization of the perspective reformulation from mixed-integer optimization

Ryan Cory-Wright@RyanCoryWright

Excited to share a new paper with @dbertsim and Jean Pauphilet on a matrix perspective reformulation technique for strong relaxations of low-rank problems. Applications in reduced-rank regression and D-optimal experimental design: optimization-online.org/DB_HTML/2021/0…

Dimitris Bertsimas retweeted

📢New preprint alert! arxiv.org/abs/2303.07695

We use sampling schemes and clustering to improve the scalability of deterministic Benders decomposition on data-driven network design problems, while maintaining optimality.

w/ @dbertsim, Jean Pauphilet, and Periklis Petridis

The paper presents a novel holistic deep learning framework that improves accuracy, robustness, sparsity, and stability over standard deep learning models, as demonstrated by extensive experiments on both tabular and image data sets. arxiv.org/abs/2110.15829

My book with David Gamarnik, “Queueing Theory: Classical and Modern Methods”, has been published. It was a long journey that lasted two decades, but both of us are delighted with the journey's completion. For more details see dynamic-ideas.com/books/quueing-…

Dimitris Bertsimas retweeted

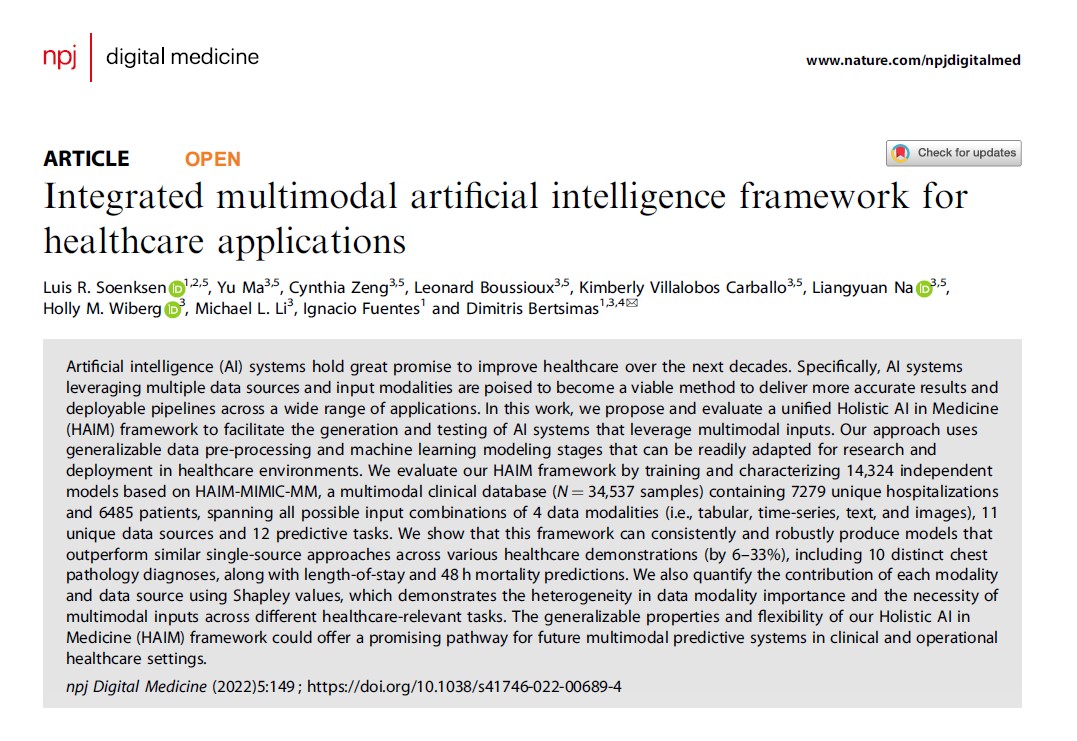

The Holistic AI in Medicine (HAIM) framework from @dbertsim et al. in @AIHealthMIT is a pipeline to receive multimodal patient data + use generalizable pre-processing + #machinelearning modelling stages adaptable to multiple health related tasks.

nature.com/articles/s4174…

Dimitris Bertsimas retweeted

If you are into #MachineLearning and #Statistics, check this out. I would also highly recommend the Machine Learning under a modern optimization lens book by @dbertsim and Dunn. Here are two teaser, must-watch (imo) YouTube videos: youtu.be/7w9aRrYgGEs youtu.be/jJgdJaCo568

Christoph Molnar 🦋 christophmolnar.bsky.social@ChristophMolnar

One of the best arguments for supervised learning was made by a statistician. Statistical Modeling: The Two Cultures Every modeler should read it. The paper is written by Leo Breiman, the inventor of Random Forests. www2.math.uu.se/~thulin/mm/bre…
