🚀 Summer Fest Day 3: Cost-Effective MoE Inference on CPU, from the Intel PyTorch team

Deploying the 671B-parameter DeepSeek R1 with zero GPUs? SGLang now supports high-performance CPU-only inference on Intel Xeon 6, enabling very large MoE models like DeepSeek R1 to run on commodity CPU servers.

Key highlights:

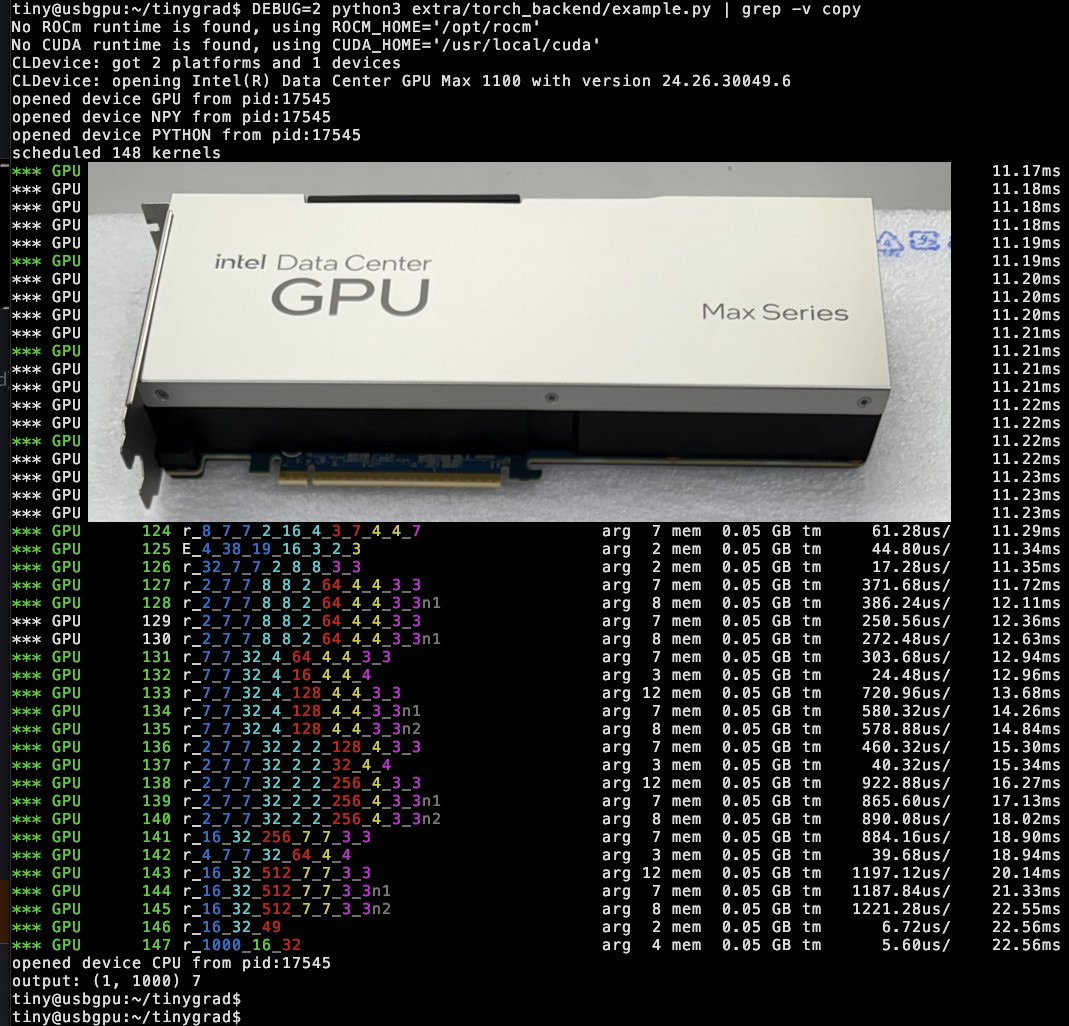

1. Full CPU backend for SGLang with Intel AMX

2. Native BF16 / INT8 / FP8 support for both Dense and Sparse FFNs

3. 6–14× faster TTFT (time to first token) and 2–4× faster TPOT (time per output token) vs. llama.cpp

4. 85%+ memory bandwidth efficiency with optimized MoE kernels

5. Flash Attention V2 + MLA + MoE all optimized for CPU

6. Multi-NUMA parallelism mapped from GPU-style Tensor Parallelism

This work is now fully upstreamed to SGLang main—read how we achieved it, and how far you can go without a GPU 👇

#LLMInfra #ModelServing #MoE #Xeon6 #SGLang #FP8 #INT8 #CPUInference