DeepReinforce

@deep_reinforce

Trialing and reinforcing the path to tomorrow.

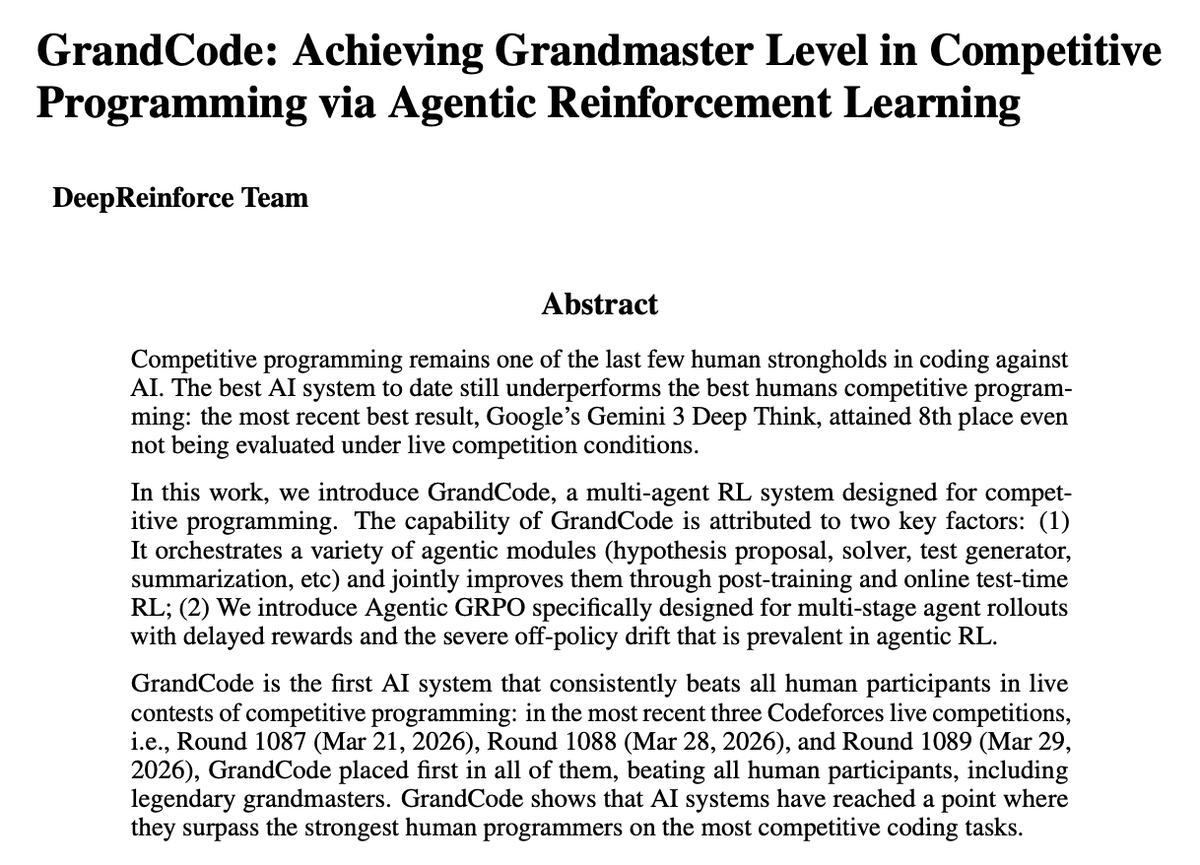

The last stronghold of coding has just been conquered by AI. In the three most recent live Codeforces competitions (Round 1087, Round 1088, and Round 1089), GrandCode, our agentic AI system, ranked first in every one, beating all human participants, including legendary grandmasters.

GrandCode is a multi-agent reinforcement learning system designed for competitive programming. It orchestrates a variety of agentic modules (hypothesis proposal, solver, test generator, summarization, etc.) and jointly improves them through post-training and online test-time RL.

GrandCode is built on Qwen. Huge respect to the Qwen team (@Alibaba_Qwen) for their contributions to the community.

It is hard to imagine how quickly AI has advanced in just one year:

- 1st: GrandCode (March 2026)
- 8th: Gemini 3.1 Pro (February 2026)
- 175th: OpenAI o3 (April 2025)

We can’t wait to see what happens over the next year.

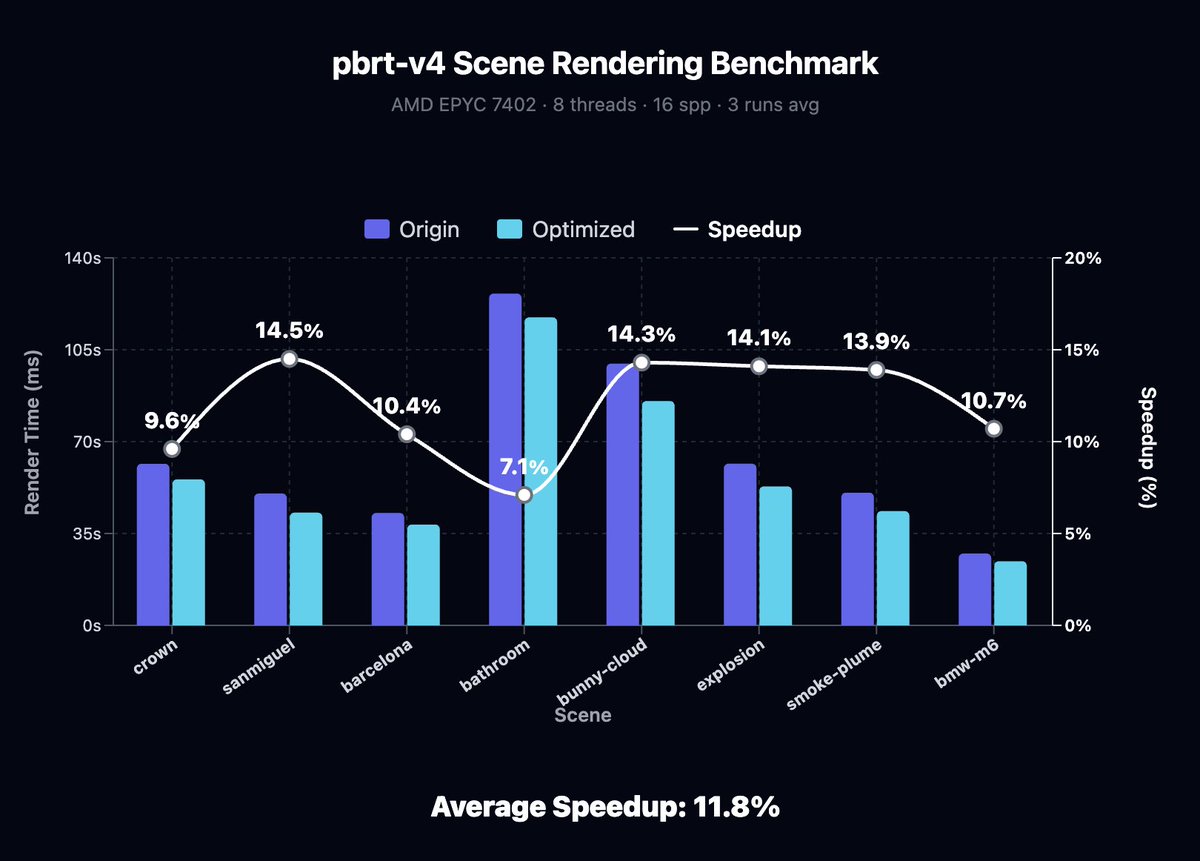

🥳 Introducing IterX: an automated system for deep code optimization using reinforcement learning.

🧐 Simply define a reward function, and IterX automatically iterates toward the optimal solution through thousands of trials and explorations using RL.

🎁 Every new user receives 30M free tokens.

We can’t wait to see what you build with IterX. 🧵
