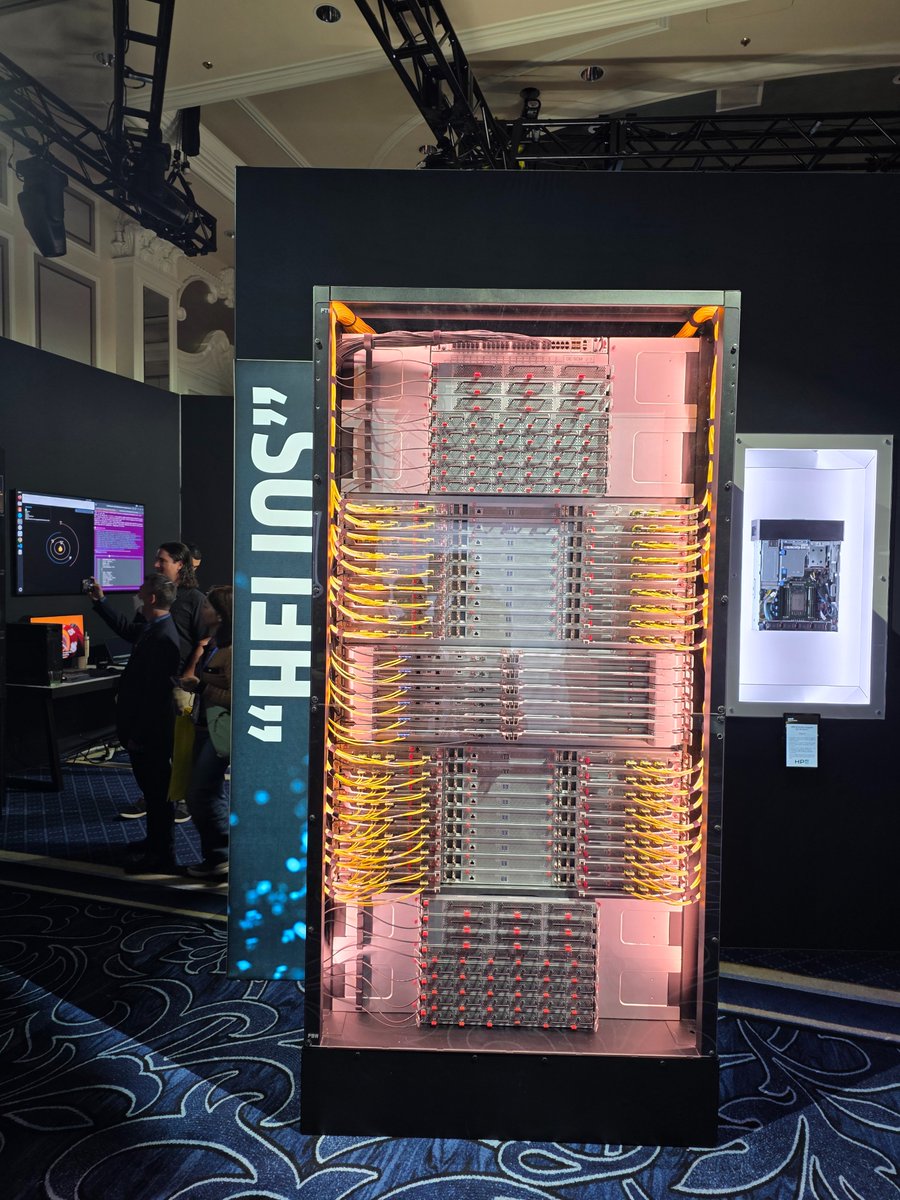

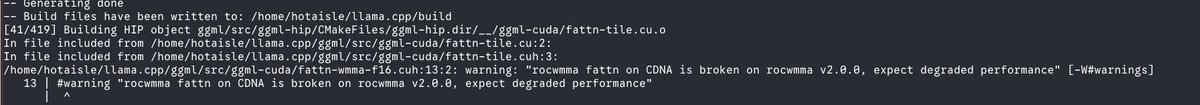

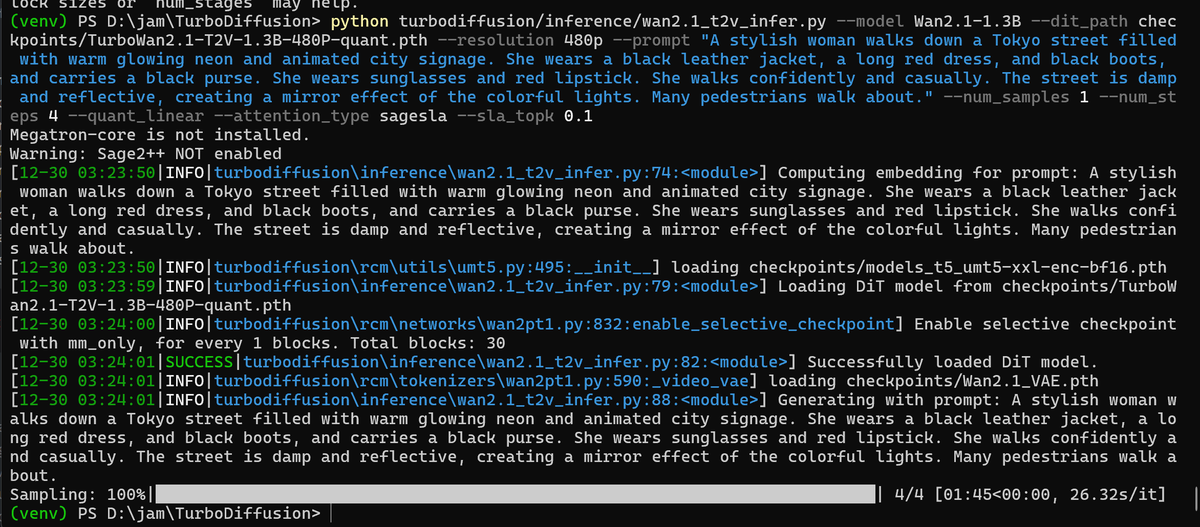

Local [Superintelligence + Supercomputing] + Signed by @LisaSu 💻 🚀🚀🚀

Ryzen AI Max+ PRO 395 (Strix Halo) with ROCm

* runs GPT-OSS locally
* runs Battlefield 6 like a desktop
* 16x Zen5 cores for builds

@sama @gdb Sarah As promised, please tag me if you run into any issues 🤙