Dhruva Chakravarthi

3.6K posts

@dhrude

Founder, Fighter, Coder, Writer | #Bitcoin

TRUMP TO ADD NEW $100,000 FEE FOR H-1B VISAS IN LATEST CRACKDOWN

🚨 There’s a large-scale supply chain attack in progress: the npm account of a reputable developer has been compromised. The affected packages have already been downloaded over 1 billion times, meaning the entire JavaScript ecosystem may be at risk. The malicious payload works by silently swapping crypto addresses on the fly to steal funds. If you use a hardware wallet, pay attention to every transaction before signing and you’re safe. If you don’t use a hardware wallet, refrain from making any on-chain transactions for now. It’s still unclear whether the attacker is also stealing seeds from software wallets directly at this stage. Excellent report here: jdstaerk.substack.com/p/we-just-foun…

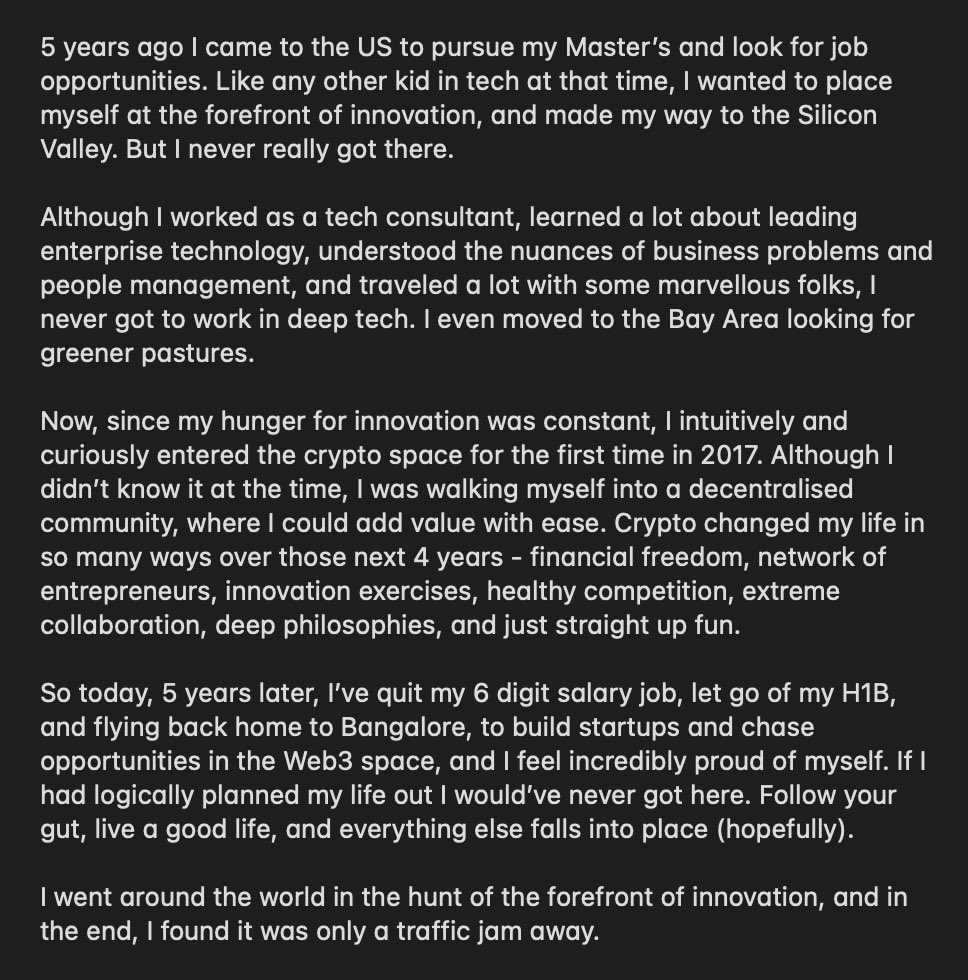

Today, I feel free. I’m happy to be back home in Bangalore, and what an amazing journey I’ve had. I’m extremely grateful to every single person in my life who’s helped me get here. Thank you, everyone. Thank you, Satoshi Nakamoto.