Dariusz Kuśnierek

@dkodr

Fledgling dad · Alternative-brew coffee lover (V60, AeroPress, Chemex) · At work: Excel, SQL, Power BI · After hours: TV series, retro gaming, no-code/low-code

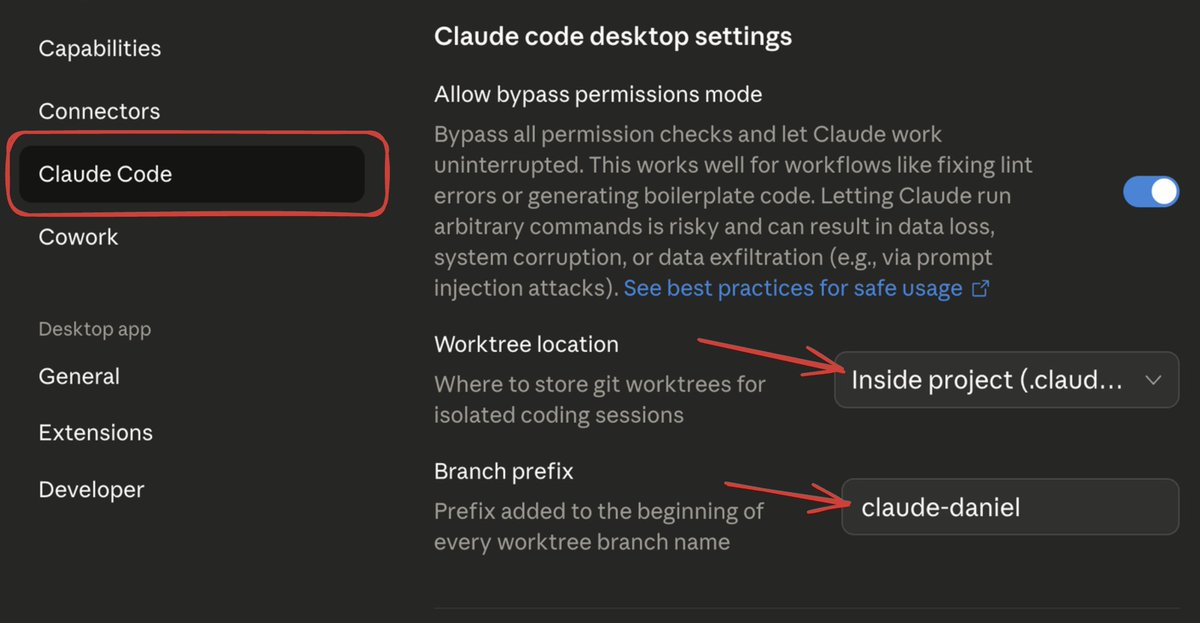

Claude Code Desktop now lets you enable worktrees automatically for every new session, so each session runs in its own isolated Git worktree by default.

What does this mean?

- No switching branches back and forth
- Agents don’t overwrite each other’s work
- You can run multiple tasks in parallel, each isolated

In the video, I start an agent that writes a blog post, and Claude executes it inside its own worktree. After that, you can see the new directories being created under .claude/worktrees 👇
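For anyone curious what "one worktree per session" looks like at the Git level, here is a minimal sketch using plain `git worktree add`. Only the `.claude/worktrees` directory comes from the post. The session names, the `session/<name>` branch naming, and the helper functions are hypothetical, and this is not necessarily how Claude Code creates its worktrees internally.

```python
import subprocess
import tempfile
from pathlib import Path


def git(repo: Path, *args: str) -> str:
    """Run a git command inside `repo` and return its stdout."""
    result = subprocess.run(
        ["git", "-c", "user.name=demo", "-c", "user.email=demo@example.com", *args],
        cwd=repo,
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout


def create_session_worktrees(repo: Path, sessions: list[str]) -> list[Path]:
    """Create one worktree per session under .claude/worktrees.

    The branch naming scheme is an assumption made for this sketch.
    """
    base = repo / ".claude" / "worktrees"
    base.mkdir(parents=True, exist_ok=True)
    paths = []
    for name in sessions:
        path = base / name
        # Each worktree gets its own branch and its own checkout directory.
        git(repo, "worktree", "add", "-b", f"session/{name}", str(path))
        paths.append(path)
    return paths


def demo() -> list[str]:
    """Build a throwaway repo with two session worktrees and list them."""
    with tempfile.TemporaryDirectory() as tmp:
        repo = Path(tmp) / "repo"
        repo.mkdir()
        git(repo, "init", "--quiet")
        git(repo, "commit", "--allow-empty", "--quiet", "-m", "initial commit")

        paths = create_session_worktrees(repo, ["blog-post", "bugfix"])

        # A file written in one worktree does not appear in the other.
        (paths[0] / "post.md").write_text("draft\n")
        assert not (paths[1] / "post.md").exists()

        listing = git(repo, "worktree", "list", "--porcelain")
        return [
            Path(line.split(" ", 1)[1]).name
            for line in listing.splitlines()
            if line.startswith("worktree ")
        ]


if __name__ == "__main__":
    print(demo())
```

Because every worktree has its own directory and branch, two agents can edit files at the same time without touching each other's checkout, which is the isolation the post describes.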

Since early 2025, we've been studying how AI tools impact productivity among developers. Previously, we found a 20% slowdown. That finding is now outdated. Speedups now seem likely, but changes in developer behavior make our new results unreliable. We’re working to address this.