LobeHub (@lobehub)

Introducing LobeHub: Agent teammates that grow with you.

LobeHub is the ultimate space for work and life, where you find, build, and collaborate with agent teammates that grow with you.

We’re building the world’s first and largest human–agent co-evolving network.

Two years ago, we built LobeChat, an open-source interface for using different AI models.

Today, LobeChat has 70k+ GitHub stars and serves 6M+ users worldwide.

Fully unlocking the power of models has always been a mission we share with the community.

We started with interaction — a fundamentally new, agent-first experience.

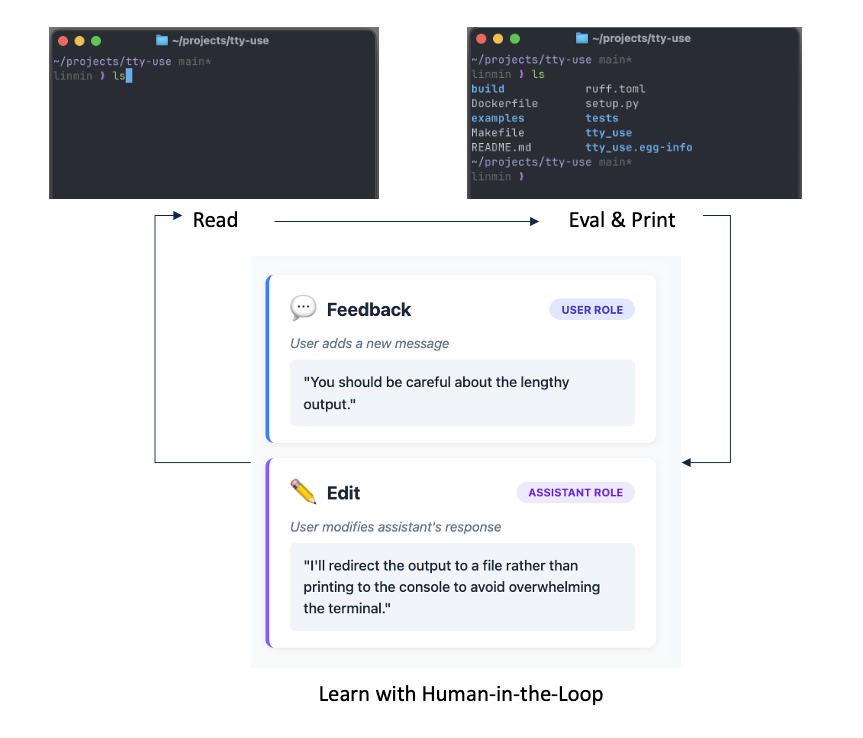

Agents are no longer passive tools invoked in a single conversation.

They should be proactive, always-on units of work.

Treating agents as the minimal atomic unit is also the core of our agent harness infra.

Today’s agents are mostly one-off executors. Even when they have memory, it is often a single global store, and they still hallucinate.

We build long-term agent teammates that evolve with users.

Each agent has its own dedicated memory space, editable by users, allowing humans and agents to co-evolve over time.

This, in turn, allows us to design clearer rewards for reinforcement learning and create cleaner environments for continual learning.
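To make the idea of a dedicated, user-editable memory space per agent concrete, here is a minimal sketch. It is an illustration only, not LobeHub's actual implementation: the `MemoryStore` class and its `remember`, `edit`, and `recall` methods are hypothetical names chosen for this example.

```python
from collections import defaultdict


class MemoryStore:
    """Hypothetical store that keeps a separate memory space for each agent."""

    def __init__(self) -> None:
        # agent_id -> {key: value}; nothing is shared between agents.
        self._spaces = defaultdict(dict)

    def remember(self, agent_id: str, key: str, value: str) -> None:
        """Called by an agent to record something it learned."""
        self._spaces[agent_id][key] = value

    def edit(self, agent_id: str, key: str, value: str) -> None:
        """Called by the user to correct an existing memory entry."""
        if key not in self._spaces[agent_id]:
            raise KeyError(f"{agent_id} has no memory named {key!r}")
        self._spaces[agent_id][key] = value

    def recall(self, agent_id: str) -> dict:
        """Return a copy of one agent's memory; other agents' entries are invisible."""
        return dict(self._spaces[agent_id])


store = MemoryStore()
store.remember("writer", "tone", "formal")
store.remember("analyst", "currency", "USD")
store.edit("writer", "tone", "casual")  # the user overrides what the agent stored
```

Because each agent reads only its own space, a user's correction to the writer's memory never leaks into the analyst's.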

Agent teammates can work in groups.

Through a multi-agent system, agent groups work faster and more cost-effectively, and go beyond what single-agent systems can achieve.

For example, a single agent often requires heavy user involvement to proceed step by step, whereas LobeHub can execute the same work from a single instruction, with a supervisor orchestrating agents that run in parallel or debate to produce better results.
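The supervisor pattern described above can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not LobeHub's code: `Agent`, `supervise`, `make_stub`, and `run_group` are hypothetical names, and the stub agents stand in for real model calls.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable

# Hypothetical agent signature: takes the instruction, returns a text result.
AgentFn = Callable[[str], Awaitable[str]]


@dataclass
class Agent:
    name: str
    run: AgentFn


async def supervise(instruction: str, agents: list) -> dict:
    """Fan one instruction out to every agent concurrently and collect the replies."""
    results = await asyncio.gather(*(agent.run(instruction) for agent in agents))
    return {agent.name: reply for agent, reply in zip(agents, results)}


def make_stub(role: str) -> AgentFn:
    """Build a placeholder agent; a real one would call a model here."""

    async def run(instruction: str) -> str:
        await asyncio.sleep(0)  # stand-in for network or model latency
        return f"[{role}] {instruction}"

    return run


def run_group(instruction: str) -> dict:
    """Run a two-agent group from a single user instruction."""
    agents = [
        Agent("researcher", make_stub("researcher")),
        Agent("writer", make_stub("writer")),
    ]
    return asyncio.run(supervise(instruction, agents))
```

A debate step could be layered on top by feeding the collected replies back to the agents for another round. That is omitted here to keep the sketch short.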

We are building the collaboration network among agent teammates — and between humans and agent teammates as well.

Ease of use matters. Machine intelligence and shared human intelligence are equally important.

With simple instructions and tool selection, you can effortlessly build and team up with agent coworkers to deliver complex, systematic work — even assembling a quant team to execute trades.

Through the LobeHub community, anyone can discover, reuse, and remix agents and agent groups, customizing them to fit their own workflows, preferences, and needs.

Last but not least, our vision started with LobeChat: multi-model support is the most efficient approach for users.

We believe different models excel in different scenarios. By routing across multiple models, LobeHub improves cost efficiency and unlocks capabilities that a single-model setup cannot easily support.
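As a rough illustration of routing across models, the sketch below picks the cheapest model that is marked as good at a given kind of task. Everything in it is an assumption made for the example: the `ModelOption` type, the `route` function, the model labels, and the cost figures are invented and do not describe LobeHub's actual router or any real model's pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str             # placeholder label, not a real model ID
    cost_per_1k: float    # made-up relative cost per 1k tokens
    strengths: frozenset  # task kinds this model is assumed to handle well


def route(task_kind: str, options: list) -> ModelOption:
    """Pick the cheapest model suited to task_kind, else the cheapest overall."""
    capable = [m for m in options if task_kind in m.strengths]
    pool = capable or options  # fall back when no model lists this task kind
    return min(pool, key=lambda m: m.cost_per_1k)


OPTIONS = [
    ModelOption("small-fast", 0.1, frozenset({"chat", "summarize"})),
    ModelOption("large-reasoning", 2.0, frozenset({"code", "math", "chat"})),
]
```

With these invented options, a chat request goes to the cheaper model, while a coding request goes to the only model marked as suited to it.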