JUST IN: Maine is set to become the first state to pass a ban on data center construction.

Dragos Ilinca


@dragosilinca

Kiro product marketing @ AWS, previously 2x founder. Beast of burden. Led teams in product & product marketing. Opinions my own.

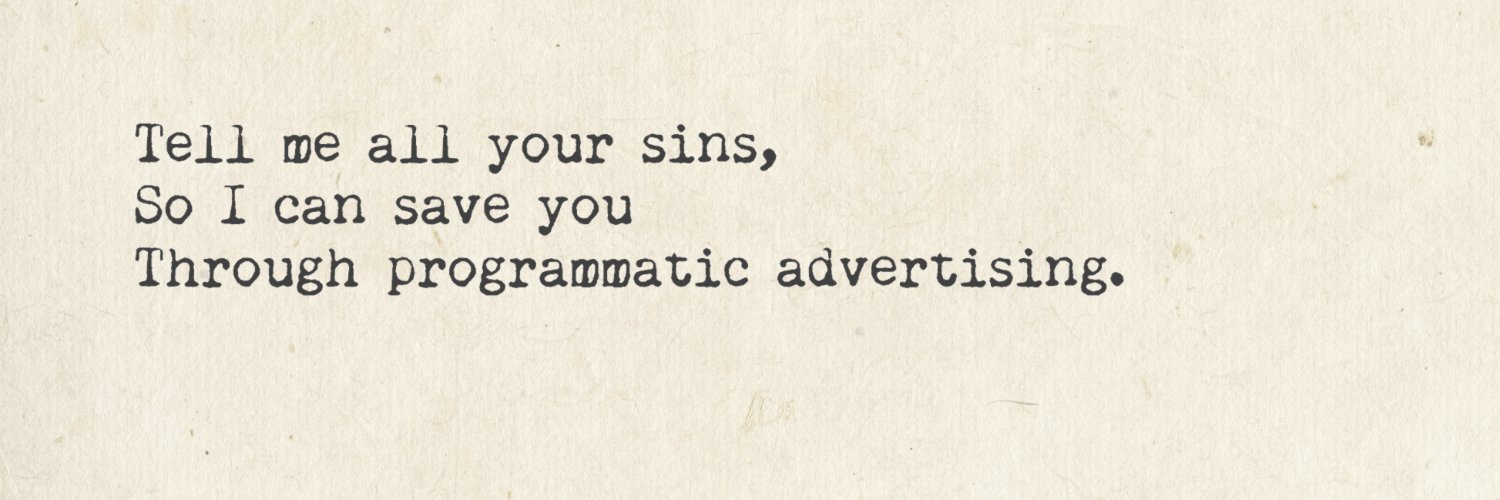

The Big Rug Gooning is well covered in the Doom thesis. Elon's "Imagine" is digital crack cocaine being given out for free. So that's in progress.

But GPT5 shows us that enterprise / tool calls is where companies are converging. This mirrors the rest of the economy. Consumer apps have to use ads or extractive loops (gambling / porn / DLC video games) to monetize at scale. Or you do Enterprise.

Anthropic's CEO has indicated that companies pay up to 10x as much for better reasoning. And that training a model is positive unit economics at t+12 months. Which is the first time anyone is talking about unit economics. Which means the GPU costs of ppl just chatting away were getting gnarly.

So today I'll write a bit about the Enterprise part of the Doom Thesis, which I call "The Big Rug".

The core idea of the Big Rug is that everything you do in Claude Code, all the Vibe Coding you do, and even the work you do with AI that is covered by Terms of Service will end up getting completely stolen and monetized by AI research labs in order to justify their enormous valuations.

The US economic system is not sustainable. There is a single chart below that shows this. US labor productivity is growing at 1.2% while Microsoft and other AI companies are reporting token consumption growth above 400%. Salesforce indicated that 30-40% of its code is written by AI, but its cash flow from operations is up single digits along with its headcount.

So -- if 100% of code was written by AI ... what would everyone do again?

At the same time, AI very clearly is a thing. And there's huge demand. But the vast majority of companies are not using AI correctly. We can, in part, deduce this from basic common sense. VSCode and Copilot are terrible / borderline unusable if you use Claude Code / Cursor, but they are in hyper growth nonetheless.

People are likely drastically increasing their technical debt. Because AI isn't good enough on default settings to really use to automate huge amounts of work.
At least right now. Managers are saying "use AI". And employees are doing it. And doing it poorly.

Because AI valuations are so high, it's vibe coding and vaporware across corporate America. "We need an AI strategy." The most cynical VC I know just joined Cognition, selling 10s of thousands of seats to financial institutions.

GPT5 isn't the Death Star. Productivity is growing at 1.5%. In boom times that can be 5-7%. We are definitively not in a productivity boom despite the hype.

But then -- certainly this is not sustainable? If aggregate productivity is growing at 1.5% -- how is enterprise usage going to grow sustainably at triple digits? Like -- the ROI is not going to be there. And then demand will go off an absolute cliff unless there is a fundamental non-linear change in the cost of inference.

Indeed. Everyone knows this. Let's spell it out more specifically.

1. You are an enterprise agent company (whether that's OpenAI or Claude Code)
2. You see the slop generated by Vibe Coding
3. You see the same aggregate productivity statistics as everyone else, namely that
   A. Margins are not expanding a ton
   B. Aggregate productivity is also not expanding
4. You know at some point an economic downturn results in massive cuts to token usage
5. Which makes your momo Q2 2025 into a hard comp and people start talking about a tech crash
6. But you just raised billions of dollars at a nosebleed valuation
7. You need to justify this valuation somehow or you're cooked

Enter The Big Rug.

AI usage is a bit magical because you can't point to any single person or workflow that is responsible for training data. The training process, of compression, is a big jumble.

We've already seen the implications of this in IP theft. Copyrighted material is fully known by ChatGPT. We don't know exactly how it ended up in the training data, bc the model weights aren't really intelligible. So it's hard to prove anyone did anything wrong. Even though the copyrights are there.

So if copyrights aren't enforceable.
Trade secrets, methodologies, and non-copyrighted user interfaces are *really not enforceable*.

This is an important point because many of the actions of closed source models explicitly break copyright and other laws, but they have such enormous financial and legal firepower -- and the technical details are so hard to prove -- that if you ask for Grok to render images from movies, it will. Or if you ask ChatGPT for the full plots of books, it will happily provide them.

So there's already precedent for large scale non-compliance with rules in the name of growth. And this non-enforceability is the nature of the Big Rug.

Your employees don't really care about your enterprise IP and are more than happy to use closed source AI tools to help them be more efficient cogs. And then all this information and know-how finds its way into the training data of AI research labs.

And then, when agents come out, it won't be enterprises tailoring agents to their use cases. It will be agents essentially assembling apps that are FAR BETTER than anything those enterprises could do. With proprietary models that aren't for sale.

And because AI is completely portable, these agents could be spun off in offshore compliant jurisdictions, likely with even less transparency. Or run through subsidiaries. Or even through crypto rails, which are now getting supercharged by stablecoins.

So not only did you *not get an efficiency boost* because the Vibe coded apps were slop. But you also lost all your trade secrets, IP, and know-how. And will be competing with an AI equivalent that will destroy your margins.

Welcome to the New Economy.

It's essentially the largest vampire attack in corporate history. Everyone using closed source API models thinks they're going to be safe due to enterprise SLAs, or simply doesn't care (bc they're employees told to use Cursor or get fired). But they won't be safe. Once it's in the model, it's gone.

So that's the Big Rug. And here's the funny thing.
The Big Rug is actually necessary for this productivity chart to start going up. So before you get a massive acceleration in agentic workflows, the entirety of the people who formed the basis for those agentic workflows being created will be made completely obsolete / financially ruined.

After the Big Rug is when unemployment starts ticking up. Token growth will indeed go off a cliff, but it won't matter bc we will be past the facade that for some reason AGI was going to be made accessible via API.

And if I were wrong, then these AI research labs wouldn't be worth what they are. And there wouldn't be animal spirits secondary demand for SPVs getting access to them at insane valuations.

The writing is on the wall. You think you're vibe coding, but really you're contributing to the Agent that will drink your milkshake.

The reason I haven't written about the Big Rug is that it's fairly far away. It will be a bit (maybe 12 months) before the research labs go mask off and launch agents directly instead of providing their models through APIs. Because as soon as this starts happening, suddenly every company is going to lock down the usage of its coding tools. And presumably by then the ROI calculations won't make any sense.

Smart companies will adapt early on by using self-hosted API layers and open source models, even though they are worse. China will likely keep funding heavy open source development because it's a way to subtly promote the Chinese worldview -- so I guess the downside will be getting brainwashed by the CCP if you want to avoid the Big Rug.

Once the Big Rug really kicks off, the enterprise software sector and any cloud player that hasn't hedged with their own AI research equity exposure will get completely shrekt. I've been a long time hater of Accenture and AI consulting plays, as they're basically in a 1-2 year white space of hope before the hammer drops pricing long term growth.
Of course, the majority of cloud players have piled into the Labs for exactly this reason.

If GPT5 were incredible -- I think we'd have a bit more time before this narrative kicks into gear. But now that the disappointment is there, the enterprise focus is there, and the abrupt 'focus on unit economics' is appearing, the 2nd part of the doom thesis -- the de-rating of everything non-AI -- should begin percolating.

In crypto I am long Ambient to express this view, but it's a private holding with a minable testnet coming soon. My own network we're working to design to be more robust to the Big Rug (I think Google Docs, Microsoft Word, and GitHub Gists are all basically going in the training data, so we are migrating to Proton Docs and using more encryption).

In stonks it's genuinely terrible for the whole IT service sector (or anything in a software index that isn't heavily long OpenAI, Anthropic, or DeepMind).

The white collar unemployment kick from the Big Rug should result in lower interest rates due to higher unemployment. The breach of trust / economic shock should result in lower equity multiples.

Other financial implications I'm still thinking through, but just wanted to put this out here.

Software used to be gated by roughly 20 million professional developers up until last year. Good ideas still needed engineers, co-founders, time, and months of app work. Now, anyone can build. ~ Wabi CEO Eugenia Kuyda

I’m thrilled to announce we’ve raised $44M to build a new home for product design. Meet @noondesign. No workflow is more broken and fragmented in 2026 than the product designers’. The very same people who care most about building software don’t have software purpose built for them. @kushagrasinha7 and I have lived this problem first hand as designers ourselves. That’s why we built Noon. The first product design tool that works entirely on your product code, so you can design not only how a product looks, but also how it works. With AI at its core that works in seconds, not minutes. For the first time, you can create, iterate, build, test and ship. All in one canvas. No translations or roundtrips to the codebase and back. Comment “Get Noon” and we’ll get you on the list for early access.

Tech companies pay millions of dollars for their employees and then stick them in open-plan offices that make it nearly impossible to get work done. Best strategy for poaching employees is probably to just offer them an office with a door.