driss guessous

476 posts

@drisspg

bytes and nuggets @pytorch https://t.co/gWVJmW741f

Joined December 2023

251 Following · 1.5K Followers

@maharshii > it's unfortunate that torch scaled mm api does not provide a global scale dequantization argument

Can you elaborate here? docs.pytorch.org/docs/2.12/gene… (`torch.nn.functional.scaled_mm`)

This does support global scales. We should probably expand the docs a lil, but here's the gist: gist.github.com/drisspg/c97e3c…
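As a hedged illustration of why a per-tensor global scale is cheap to support: in NVFP4 each element is decoded as `fp4_value * block_scale`, with one E4M3 block scale per 16 contiguous elements, and a per-tensor FP32 global scale multiplies on top. Because the global scales of A and B are scalars, they factor out of every dot product and can be applied once to the output, like a GEMM `alpha`. The sketch below uses plain Python floats in place of real FP4/E4M3 storage; `dequant_row` and `scaled_dot_epilogue` are hypothetical helpers that mirror the math only, not the `scaled_mm` call signature.

```python
import math
from typing import List

BLOCK = 16  # NVFP4: one block scale per 16 contiguous elements


def dequant_row(vals: List[float], block_scales: List[float], global_scale: float) -> List[float]:
    """Full dequantization: element * its block scale * the per-tensor global scale."""
    return [v * block_scales[i // BLOCK] * global_scale for i, v in enumerate(vals)]


def dot(x: List[float], y: List[float]) -> float:
    return sum(a * b for a, b in zip(x, y))


def scaled_dot_epilogue(a_vals, a_scales, a_global, b_vals, b_scales, b_global) -> float:
    """Apply block scales inside the reduction and the global scales once at the end."""
    acc = 0.0
    for i, (a, b) in enumerate(zip(a_vals, b_vals)):
        acc += (a * a_scales[i // BLOCK]) * (b * b_scales[i // BLOCK])
    return acc * (a_global * b_global)  # scalar epilogue, like a GEMM alpha


# Toy K = 32 row/column (two blocks), values drawn from the E2M1 grid.
a_vals = [1.0, -0.5, 6.0, 2.0] * 8
b_vals = [0.5, 1.5, -3.0, 4.0] * 8
a_scales, b_scales = [0.25, 2.0], [1.0, 0.5]

ref = dot(dequant_row(a_vals, a_scales, 3.0), dequant_row(b_vals, b_scales, 0.125))
fast = scaled_dot_epilogue(a_vals, a_scales, 3.0, b_vals, b_scales, 0.125)
print(ref, fast, math.isclose(ref, fast))
```

Both paths give the same result, which is why the global scale does not need to touch the inner loop.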

comparison of my CuTeDSL NVFP4 GEMM kernel with cuBLAS (via torch): it's unfortunate that the torch scaled mm API doesn't provide a global-scale dequantization argument, and that brings the FLOPS down. also, not sure why the FLOPS are this low, maybe power throttling?
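For context on how such numbers are usually computed: a GEMM of an (M x K) by (K x N) matrix is conventionally counted as 2·M·N·K FLOPs and divided by measured time, so any extra pass inside the timed region (for example, a separate elementwise multiply to apply a global scale) lowers the reported throughput even if the GEMM itself is unchanged. A minimal sketch; `gemm_tflops` is a hypothetical helper and the timings are made-up illustrations, not measurements.

```python
def gemm_tflops(m: int, n: int, k: int, seconds: float) -> float:
    """Achieved TFLOP/s for an (m x k) @ (k x n) GEMM.

    Counts 2*m*n*k FLOPs: one multiply and one add per multiply-accumulate.
    """
    if seconds <= 0:
        raise ValueError("elapsed time must be positive")
    return 2 * m * n * k / seconds / 1e12


# Hypothetical timings for illustration, not measurements.
gemm_only = gemm_tflops(8192, 8192, 8192, 1.0e-3)
# Same GEMM, but with an extra 0.1 ms elementwise pass (e.g. applying a
# global scale to the output) included in the timed region.
gemm_plus_scale_pass = gemm_tflops(8192, 8192, 8192, 1.1e-3)
print(f"{gemm_only:.1f} vs {gemm_plus_scale_pass:.1f} TFLOP/s")  # 1099.5 vs 999.6 TFLOP/s
```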

maharshi @maharshii

I wrote a custom NVFP4 GEMM kernel in CuTeDSL, stripping away almost all of the fancy CuTe layout "headache" from the official examples and doing the PTX, TMA, and tcgen05 work manually. It's crazy how low-level you can go with this and still be performant! My notes and code are below:
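For readers following along without the kernel code: each NVFP4 element is a 4-bit E2M1 float (1 sign bit, 2 exponent bits, 1 mantissa bit), so only eight magnitudes are representable, and values are packed two per byte. The sketch below is a host-side reference decoder in plain Python for checking outputs; it is not the author's kernel, the helper names are hypothetical, and the nibble order (low nibble first) is an assumption to verify against your library's packing convention.

```python
from typing import List

# Magnitudes selected by the 3-bit (exponent, mantissa) field of FP4 E2M1.
E2M1_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]


def decode_e2m1(code: int) -> float:
    """Decode one 4-bit E2M1 code: bit 3 is the sign, bits 0-2 index the magnitudes."""
    if not 0 <= code <= 0xF:
        raise ValueError("E2M1 codes are 4 bits")
    mag = E2M1_MAGNITUDES[code & 0x7]
    return -mag if code & 0x8 else mag


def unpack_fp4_bytes(data: bytes) -> List[float]:
    """Unpack two E2M1 values per byte. ASSUMPTION: low nibble is the first element."""
    out: List[float] = []
    for byte in data:
        out.append(decode_e2m1(byte & 0xF))
        out.append(decode_e2m1(byte >> 4))
    return out


print(unpack_fp4_bytes(bytes([0x72, 0xF9])))  # [1.0, 6.0, -0.5, -6.0]
```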

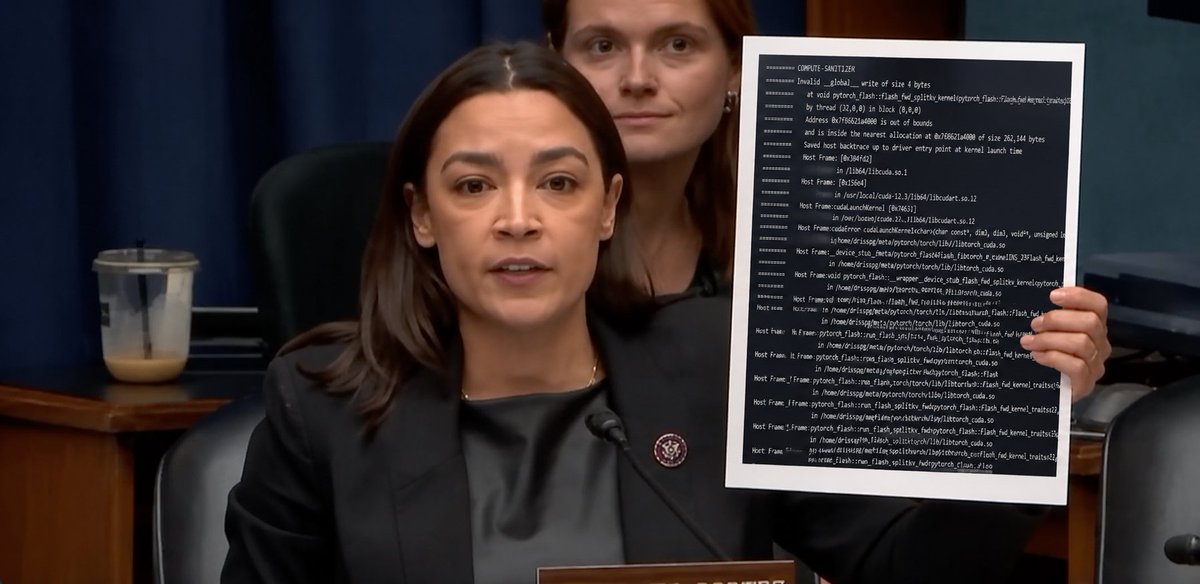

I have a stack trace right here. This is Stable Diffusion in PyTorch, right after flash-attention was updated. The only difference between clean, wholesome image generation and this compute-sanitizer IMA (illegal memory access) was the flash-attention upgrade, which removed the head-dim-160 kernels. This is what Stable Diffusion now looks like in PyTorch.

@_seemethere @difficultyang Yeah, big caveat is that when you first use it, it’s gonna suck, but if you stick with it and actually muck around with the system prompt + extensions, you end up with something that feels very tailored to your preferences

@difficultyang @drisspg I usually use pi as a direct replacement for Codex + Claude Code. When I was still using Opus, I found pi to be a much better harness for Opus directly.

The nicest thing about pi is that if the harness doesn’t do something you need, it’s so easy to extend.

driss guessous reposted

driss guessous reposted

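@typedfemale @ezyang

```zsh
function sanitize() {
  # CUTE_DSL_LINEINFO=1: emit line info for CuTe DSL kernels so reports map back to source
  # CUDA_LAUNCH_BLOCKING=1: synchronous launches, so errors surface at the launching call
  # PYTORCH_NO_CUDA_MEMORY_CACHING=1: bypass the caching allocator so memcheck sees real allocation bounds
  CUTE_DSL_LINEINFO=1 CUDA_LAUNCH_BLOCKING=1 PYTORCH_NO_CUDA_MEMORY_CACHING=1 compute-sanitizer --tool memcheck "$@"
}
```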

A very handy zsh function

coming very soon to hunk®: post notes back to your agent

Ben Vinegar @bentlegen

hunk version 0.12.0 is live. Focus on "make sure everyone can use it":
🆕 can install via Homebrew
🆕 Nix support
🆕 works w/ lazygit
🆕 major scroll perf improvements
🆕 runs on Windows
⬇️

@drisspg I have something cool for you if you want to accelerate shift-invariant mask mods, but I don't think there is one outside of translation and reflection
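For context, a FlexAttention mask mod maps `(b, h, q_idx, kv_idx)` to a boolean. Reading "shift-invariant" as translation invariance, shifting both indices by the same amount never changes the mask, so the mask depends only on `q_idx - kv_idx`. The brute-force sketch below tests this on a small grid; the example masks and `is_shift_invariant` are hypothetical illustrations using plain ints rather than tensors, and passing the check on a finite grid is evidence, not proof. Reflection is not covered here.

```python
from typing import Callable

# FlexAttention-style mask_mod signature: (batch, head, q_idx, kv_idx) -> bool.
# Plain Python ints stand in for tensors, for illustration only.
MaskMod = Callable[[int, int, int, int], bool]


def causal(b: int, h: int, q_idx: int, kv_idx: int) -> bool:
    return q_idx >= kv_idx


def sliding_window(b: int, h: int, q_idx: int, kv_idx: int, window: int = 4) -> bool:
    return 0 <= q_idx - kv_idx < window


def prefix_lm(b: int, h: int, q_idx: int, kv_idx: int, prefix: int = 3) -> bool:
    # Depends on absolute kv_idx, so it should NOT be translation invariant.
    return kv_idx < prefix or q_idx >= kv_idx


def is_shift_invariant(mask_mod: MaskMod, n: int = 16, max_shift: int = 8) -> bool:
    """Check mask(q, kv) == mask(q + s, kv + s) for every q, kv < n and 1 <= s <= max_shift."""
    for s in range(1, max_shift + 1):
        for q in range(n):
            for kv in range(n):
                if mask_mod(0, 0, q, kv) != mask_mod(0, 0, q + s, kv + s):
                    return False
    return True


for name, mod in [("causal", causal), ("sliding_window", sliding_window), ("prefix_lm", prefix_lm)]:
    print(name, is_shift_invariant(mod))
```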

@henrylhtsang ahh, that is fair, but an important detail: I didn't specify the subspan size of D. Bigger is better, but > 1 is better than none
