le duc quang

112 posts

How to Pass the Leetcode Interview (Without Getting Lucky).

One of the best ways to improve at Leetcode is to study patterns and do mock interviews.

I created a template to help you systematically approach Leetcode interviews.

To get it for FREE, just:

1. Follow me @systemdesignone

2. Like & Reply "Leetcode"

Then I'll DM you the details.

English

le duc quang retweetledi

le duc quang retweetledi

Does anyone go to StackOverflow anymore?

I'm trying to remember, and I think I visited the site once over the last few weeks. It was probably muscle memory.

I only use Google when I want to find a website, but I never use it for answers anymore. That's what Claude and ChatGPT are for.

For coding, Cursor and Copilot do a much better job than StackOverflow ever did.

I just don't know how they can stay relevant any longer.

StackOverflow is a dinosaur going extinct right before our eyes.

English

le duc quang retweetledi

le duc quang retweetledi

10 Good Coding Principles to improve code quality.

Software development requires good system designs and coding standards. We list 10 good coding principles in the diagram below.

🔹 01 Follow Code Specifications

When we write code, it is important to follow the industry's well-established norms, like “PEP 8”, “Google Java Style”, adhering to a set of agreed-upon code specifications ensures that the quality of the code is consistent and readable.

🔹 02 Documentation and Comments

Good code should be clearly documented and commented to explain complex logic and decisions, and comments should explain why a certain approach was taken (“Why”) rather than what exactly is being done (“What”). Documentation and comments should be clear, concise, and continuously updated.

🔹 03 Robustness

Good code should be able to handle a variety of unexpected situations and inputs without crashing or producing unpredictable results. Most common approach is to catch and handle exceptions.

🔹 04 Follow the SOLID principle

“Single Responsibility”, “Open/Closed”, “Liskov Substitution”, “Interface Segregation”, and “Dependency Inversion” - these five principles (SOLID for short) are the cornerstones of writing code that scales and is easy to maintain.

🔹 05 Make Testing Easy

Testability of software is particularly important. Good code should be easy to test, both by trying to reduce the complexity of each component, and by supporting automated testing to ensure that it behaves as expected.

🔹 06 Abstraction

Abstraction requires us to extract the core logic and hide the complexity, thus making the code more flexible and generic. Good code should have a moderate level of abstraction, neither over-designed nor neglecting long-term expandability and maintainability.

🔹 07 Utilize Design Patterns, but don't over-design

Design patterns can help us solve some common problems. However, every pattern has its applicable scenarios. Overusing or misusing design patterns may make your code more complex and difficult to understand.

🔹 08 Reduce Global Dependencies

We can get bogged down in dependencies and confusing state management if we use global variables and instances. Good code should rely on localized state and parameter passing. Functions should be side-effect free.

🔹 09 Continuous Refactoring

Good code is maintainable and extensible. Continuous refactoring reduces technical debt by identifying and fixing problems as early as possible.

🔹 10 Security is a Top Priority

Good code should avoid common security vulnerabilities.

Over to you: which one do you prefer, and with which one do you disagree?

--

Subscribe to our weekly newsletter to get a Free System Design PDF (158 pages): bit.ly/3KCnWXq

GIF

English

le duc quang retweetledi

When analyzing Postgres query execution plans, I always recommend using the BUFFERS option:

explain (analyze, buffers) ;

Example:

test=# explain (analyze, buffers) select * from t1 where num > 10000 order by num limit 1000;

QUERY PLAN

----------------------------------------------------------

Limit (cost=312472.59..312589.27 rows=1000 width=16) (actual time=314.798..316.400 rows=1000 loops=1)

Buffers: shared hit=54173

...

Rows Removed by Filter: 333161

Buffers: shared hit=54055

Planning Time: 0.212 ms

Execution Time: 316.461 ms

(18 rows)

If EXPLAIN ANALYZE used without BUFFERS, the analysis lacks the information about the buffer pool IO.

Reasons to prefer using `EXPLAIN (ANALYZE, BUFFERS)` over just `EXPLAIN ANALYZE`

1. IO operations with the buffer pool available for each node in the plan.

2. It gives understanding about data volumes involved (note: the buffer hits numbers provided can involve "hitting" the same buffer multiple times).

3. If analysis is focusing on the IO numbers, then it is possible to use weaker hardware (less RAM, slower disks) but still have reliable data for query optimization.

For better understanding, it is recommended to convert buffer numbers to bytes. On most systems, 1 buffer is 8 KiB. So, 10 buffer reads is 80 KiB.

However, beware of possible confusion: it is worth remembering that the numbers provided by EXPLAIN (ANALYZE, BUFFERS) are not data volumes but rather IO numbers – the amount of that IO work that has been done. For example, for just a single buffer in memory, there may be 10 hits – in this case, we don't have 80 KiB present in the buffer pool, we just processed 80 KiB, dealing with the same buffer 10 times. It is, actually, an imperfect naming: it is presented as "Buffers: shared hit=5", but this number is rather "buffer hits" than "buffers hit" – the number of operations, not the size of the data.

Summary:

Use EXPLAIN (ANALYZE, BUFFERS) always, not just EXPLAIN ANALYZE – so you can see the actual IO work done by Postgres when executing queries.

This gives a better understanding of the data volumes involved. Even better if you start translating buffer numbers to bytes – just multiplying them by the block size (8 KiB in most cases).

Don't think about the timing numbers when you're inside the optimization process – it may feel counter-intuitive, but this is what allows you to forget about differences in environments. And this is what allows working with thin clones – look at Database Lab Engine and what other companies do with it.

Finally, when optimizing a query, if you managed to reduce the BUFFERS numbers, this means that to execute this query, Postgres will need fewer buffers in the buffer pool involved, reducing IO, minimizing risks of contention, and leaving more space in the buffer pool for something else. Following this approach may eventually provide a global positive effect for the general performance of your database.

My old blog post on this topic: postgres.ai/blog/20220106-…

English

DevOps Zero to Hero in 30 days!!

Here’s a 30-day DevOps course outline in this thread with a detailed topic for each day!

🧵👇 #DevOps #Kubernetes #Docker #CloudNative

English

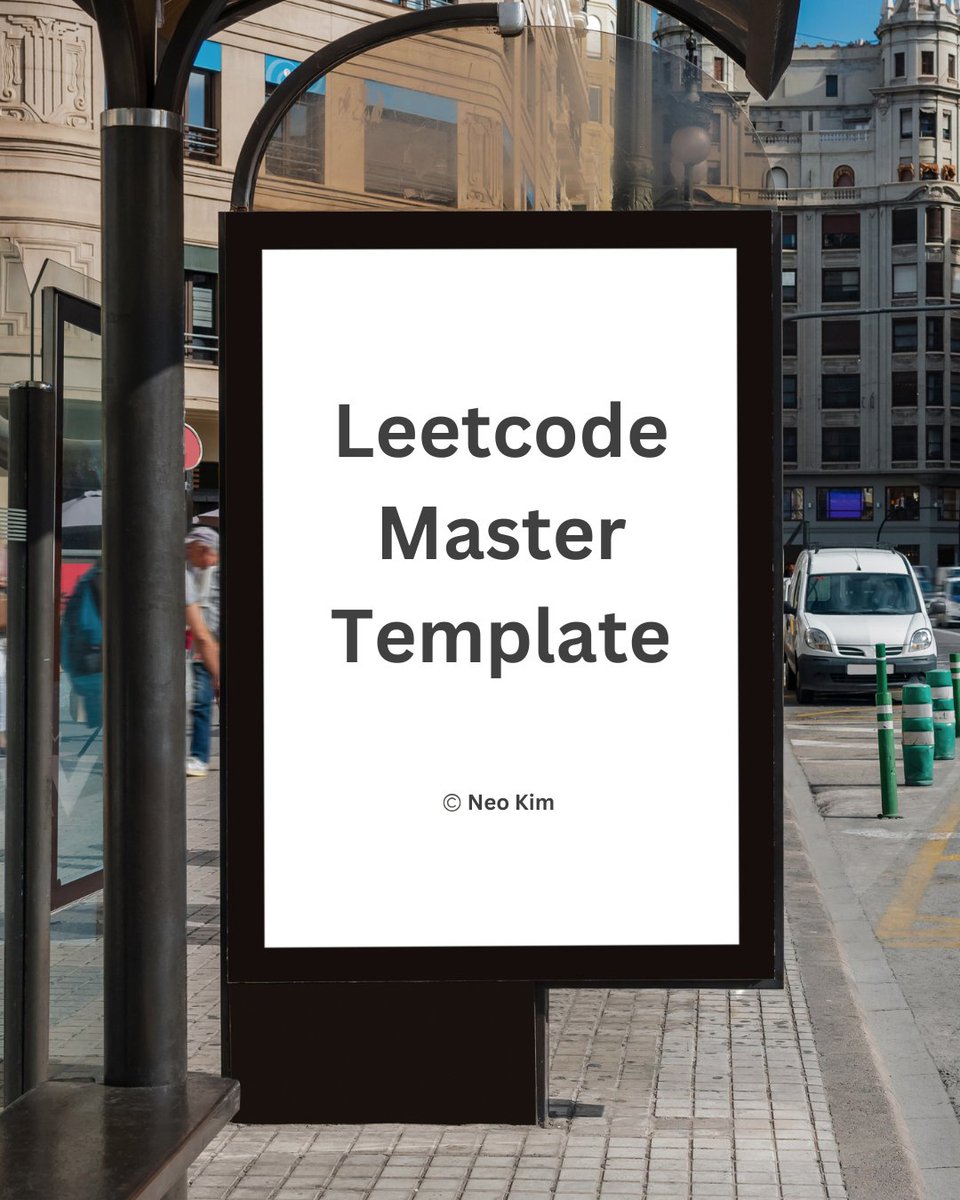

Issue 154: mailchi.mp/deararchitects…

This week:

1. How to bridge silos inside your organization

2. #devops best practices

3. How @stripe is designed for #BlackFriday traffic

4. New #serverless book from @sheenbrisals and @level_out

5. @OReillyMedia #architectures #books #bundle

English

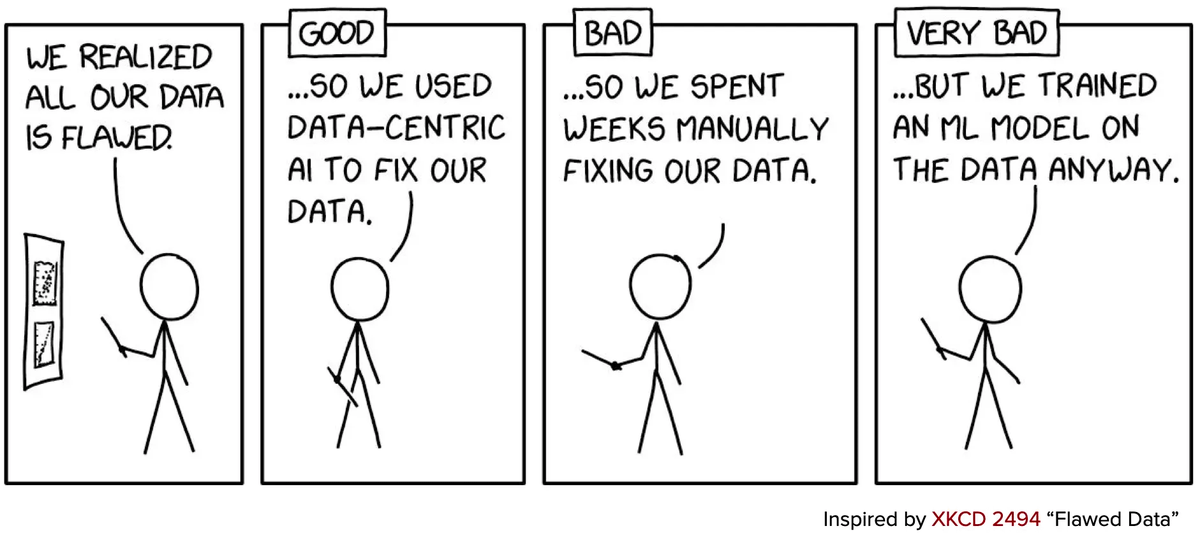

Most people have no idea about this:

You can improve the quality of your data overnight. For free. And you can do it all using an open-source tool.

Let's be clear:

Better hardware and algorithms will not give us reliable AI. We also need better data.

I've been working with the @CleanlabAI team for a long time. They built one of the most impressive tools I've used: the cleanlab open-source library.

With a single line of Python code, cleanlab will:

• Detect common data issues (mislabeling, outliers, duplicates, drift)

• Train robust models

• Analyze multi-annotator data + quality of labelers

• Suggest which data to label next (active learning)

Nobody has built a model that solves with 100% accuracy the most popular dataset in the world: MNIST.

The issue is with the training data: it has labeling mistakes.

I used cleanlab to find these issues. It took one line of code. It felt magic!

But that's only the appetizer.

To run cleanlab, you first need to train a model. The library uses model outputs for its data-quality algorithms. That's how it auto-detects problems with your data.

For a large dataset, you need an interface to review and fix the problems that cleanlab will find. For example, Tesla and Google spent years building their interfaces.

The Cleanlab team built Cleanlab Studio. It's a no-code data curation platform that works with image, text, and tabular datasets.

Cleanlab Studio has all the benefits of the open-source library, but you don't have to write a single line of code. You don't need to train a model or worry about an interface to correct your data.

Here is what Cleanlab Studio does:

• It automatically fits cutting-edge AutoML & Foundation models to auto-correct your data.

• It labels your data using active learning. This is much cheaper than hand-labeling data

• It deploys a reliable model you can use in production. This model is more accurate than fine-tuned OpenAI LLMs for text data

• It provides an easy-to-use interface to improve data quality

You know a tool is magic when Amazon and Google rely on it!

You should try it on your data:

cleanlab.ai

Thanks to Cleanlab for sponsoring this post. Especially after so much time using their tool!

English

These projects are great. They are critically important for @PostgreSQL ecosystem because a LOT of systems are using them right now.

Check all of them and if not yet, give them your GH ⭐️⭐️⭐️

github.com/zalando/patroni

github.com/pgbouncer/pgbo…

github.com/wal-g/wal-g and github.com/pgbackrest/pgb…

github.com/cybertec-postg…

github.com/citusdata/pg_c… and github.com/cybertec-postg…

github.com/pgvector/pgvec…

github.com/postgis/postgis

github.com/dbeaver/dbeaver

English

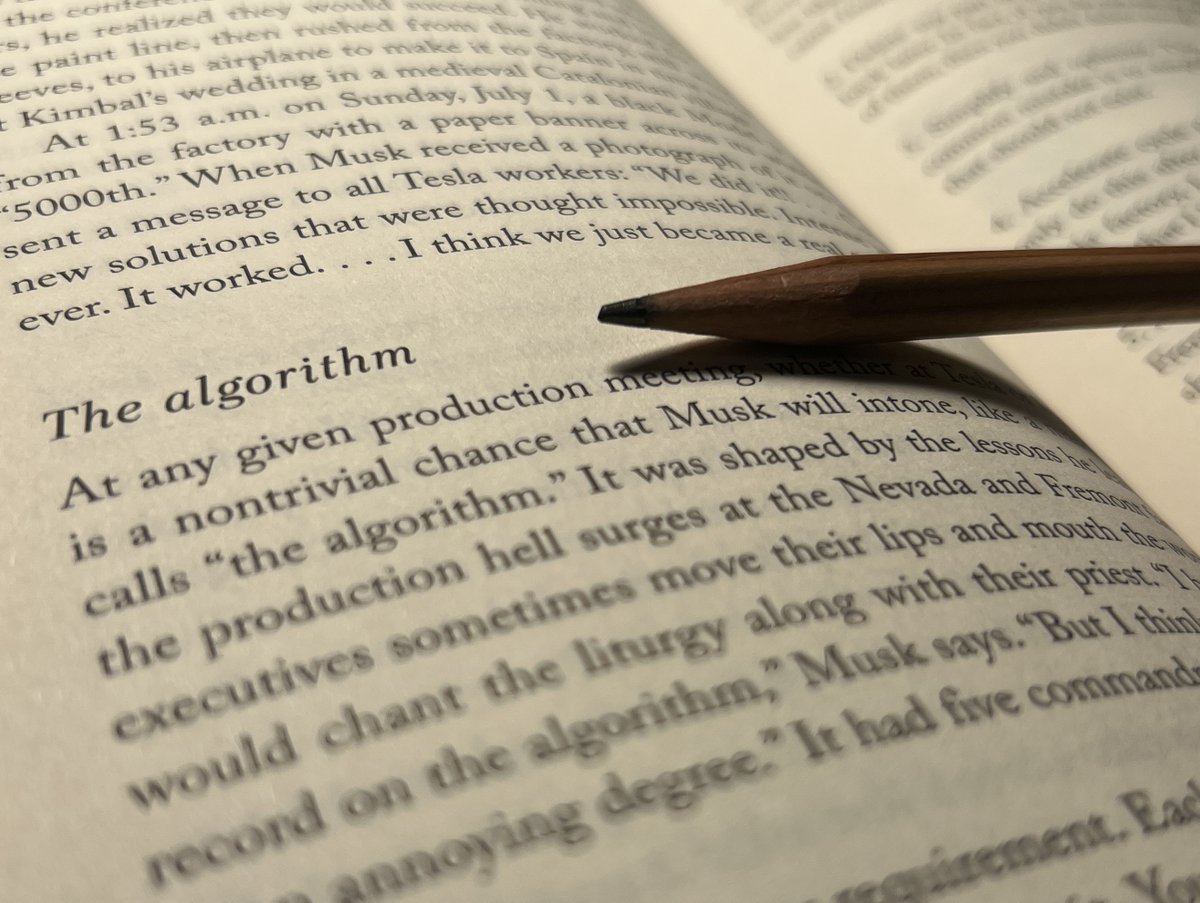

I'm reading Elon Musk's biography.

One revelation was more fascinating to me than all of the gossip combined.

It's what the book calls The Algorithm. Elon's simple 5 step recipe for success. Read on to learn all about the algorithm and what it implies for the future of Twitter.

The Algorithm

The five steps of the algorithm are:

1. Question every requirement. Each should come with the name of the person who made it. You should never accept that a requirement came from a department, such as from “the legal department” or “the safety department.” You need to know the name of the real person who made that requirement. Then you should question it, no matter how smart that person is. Requirements from smart people are the most dangerous, because people are less likely to question them. Then make the requirements less dumb.

2. Delete any part or process you can. You may have to add them back later. In fact, if you do not end up adding back at least 10% of them, then you didn’t delete enough.

3. Simplify and optimize. This should come after step two. You should avoid doing this for parts and processes that should not exist.

4. Accelerate. Every process can be sped up. But only do this after you have followed the first three steps. Again, you should avoid doing this for parts and processes that should not exist.

5. Automate. This comes last. Wait until all requirements have been questioned, parts and processes deleted, and bugs removed.

This process feels potentially very powerful to me. I'm interested to try it out in my own life and to see how it works. If we follow the advice of the algorithm itself, then we should not accept it just because it works for the richest man in the world. We should each vet it for ourselves.

The Future of Twitter

It was clear when Elon bought Twitter that he was questioning a lot of the received wisdom. People wondered if he was crazy or stupid and why he wasn't listening to experts. That seems to have been the questioning-every-requirement phase.

He followed up that stage by deleting as much as he could. Almost all of the employees most spectacularly. A lot of people noted that he'd probably end up realizing he needed a lot of what he was cutting. Apparently that was to be expected and a key part of his process.

As far as I can tell, looking in from the outside, we are probably now in the simplification and optimization phase. Hopefully this means things will be settling down for Twitter in the foreseeable future.

Hope you found this commentary on Elon's biography helpful.

I 'm currently tweeting insights from another book called The Book of Why which teaches us about the new science of causation.

Follow me (@kareem_carr) to get data science threads in your timeline 2-3 times a week.

English

Database software is living in the past.

Once you see the problem, the future is clear-cut.

What is the future?

Proxies!

Look no further than @supabase's brand new PostgreSQL connection pooler release - Supavisor. 🕶

It is a more scalable, cloud-native alternative to PgBouncer, supporting at least 1,200,000 queries a minute (20k QPS) and 1,000,000 connections.

And that's right now. It has quite the aspirations above that.

The big problem is simple though:

🌤 PostgreSQL != Serverless

PostgreSQL doesn’t work well for serverless use cases like Lambda functions where each function exists for just hundreds of milliseconds.

Each function has to establish a connection to the database, write its query, and shut down.

But connections in PG have some overhead in them. 😬

Connections open a new OS process.

A subtle point 👇

This requires the kernel to perform a context switch to that process and then context switch to another process once this connection is idle.

Such context switches are more expensive than the usual process → kernel → process context switches we usually do as part of syscalls.

In essence, too much of the database architecture was developed for a time when there were:

• much less apps

• much fewer connections

• less need for lightning-quick scalability

A no cloud world. 🌞

It was definitely a simpler time.

So what's a database cloud provider to do?

There are two ways you can deal with this:

1. re-write the whole database with new fundamental design choices, then release mature software after 10 years

… or

2. simply write a proxy on top of it.

😴 Supabase opted for the proxy layer.

Supavisor is a multi-tenant connection pooler for PostgreSQL.

Written in Elixir, Supavisor makes heavy use of the BEAM virtual machine’s efficient lightweight handling of massive concurrency & async communication.

🔥 A single instance can do in excess of 20,000 QPS - it's the underlying database that becomes the bottleneck. 🐌

💥 a 64 vCPU, 256GB memory Supavisor instance handles 500k connections

🤯 you can scale to 1,000,000 connections with two such instances

Interestingly, the mapping of Postgres database to a Supavisor instance is one-to-one.

💡 To scale via multiple Supavisors to database X, one Supavisor is connected to database X while the other Supavisors relay to the first Supavisor.

Of course, proxies add a little bit more latency into the mix - as your query now needs to do more network hops. In their testing, Supabase found that it was insignificant (2ms extra or so). 🤷♀️

In general, the median end-to-end latency query was 2ms, while p99 was just 23ms.

🕵️♂️ While they haven’t explicitly mentioned it, as an engineer working on a SaaS myself, I can read between the lines:

💡 It seems pretty likely that Supavisor could be the backbone that scales up to become a massive multiplexing solution for Supabase’s cloud.

Essentially, they’d have a lot of well-optimized Supavisor clusters that handle all external client connections, and they route them to the right underlying PostgreSQL instance.

This proxy layer paves the way for many, many things:

👋 migrating tenants out of databases to balance out the load on the underlying instance.

📈 independently scaling up their connection-handling resources (compute) without affecting the database. Every connection likely needs some form of a TLS handshake, authentication, and memory.

🦏 more fine-grained handling in thundering herds scenarios

🦮 simplified routing and balancing:

e.g. you’re given one url and Supabase is the one that decides to route you to the appropriate replica (read-only or not, least loaded) because it can understand the query. This offloads clients’ responsibility and complexity while improving performance.

⚡️maybe even extend Postgresql to support multiplexing and not have head-of-line blocking per connection.

In summary - too much of the database architecture was developed for a time when there was no cloud.

Developing a proxy on top of it allows you to get sufficient flexibility and efficiency gains without needing to reinvent the wheel.

This sort of architecture is very appealing in its high ROI and is likely to follow in other older open-source products that run in the cloud. 👍

---

Liked this?

For more concise technical content:

1. As they say in church - hit the 🔔

2. Check the 1-a-week 2-minute-read newsletter in my profile (while hitting the 🔔)

3. 💜 like & RT to increment the Twitter algorithm counter?

GIF

English

I teach hard-core Machine Learning Engineering.

And by "hard-core" I mean "you’ll shit your pants while learning this, but you'll be forever grateful you did."

Most companies sign blank checks for people with these skills. I've heard some numbers. It's a lot!

My program starts in 2 weeks. It's the 6th iteration and will be different from any other class you've ever taken.

It's a live class where you'll interact with me. It's the closest thing to sitting in a classroom. I'll keep you engaged and on your toes.

The program is about building end-to-end Machine Learning applications. I teach training, tuning, evaluating, registering, deploying, and monitoring Machine Learning models.

And that's just the start.

We'll answer the hard questions and talk about topics that nobody else covers:

• A/B testing models in production

• Scaling endpoints

• Processing large-scale datasets

• Monitoring model performance

• Automatic retraining

Unfortunately, most courses will teach you 5% of the skills you need to build real systems. My program focuses on the other 95%.

Cohort 6 starts September 18. There will be 11 hours of live content: 9 hours of classes and two 1-hour Office Hours.

While this is a live class, and I recommend you attend the live sessions, you can also watch the recordings.

If you join today, you can attend any future program. I run it once every month.

Here is the link to join: ml.school.

English

𝗔𝗣𝗜 𝗦𝗲𝗰𝘂𝗿𝗶𝘁𝘆 𝗖𝗵𝗲𝗰𝗸𝗹𝗶𝘀𝘁

Checklist the most critical security countermeasures when designing, testing, and releasing your API.

Full link in the comments.

_____

If you like my posts, please follow me, @milan_milanovic, and hit the 🔔 on my profile to get a notification for all my new posts.

Learn something new every day 🚀!

#api #security #apidesign #softwareengineering #programming

English

𝗕𝗼𝗼𝗸𝘀 𝗘𝘃𝗲𝗿𝘆 𝗦𝗼𝗳𝘁𝘄𝗮𝗿𝗲 𝗘𝗻𝗴𝗶𝗻𝗲𝗲𝗿 𝗠𝘂𝘀𝘁 𝗥𝗲𝗮𝗱 𝗶𝗻 𝟮𝟬𝟮𝟯.

You probably already noticed that I'm a big fan of reading. I usually read 3-4 books per month. You can learn from knowledgeable people in two ways: to work directly with them or to read what they have written. The first is the best option, yet it is often impossible. We have books written by people who are probably the best at this in the world at the time of writing.

If we look at the software engineering world, there are many gems here, but here I will recommend the best books per area of work:

𝟭. 𝗚𝗲𝗻𝗲𝗿𝗮𝗹:

🔹 The Pragmatic Programmer by David Thomas and Andrew Hunt (amzn.to/3KzpVwj)

🔹 Modern Software Engineering by David Farley (amzn.to/3kqZaQ6)

𝟮. 𝗖𝗼𝗱𝗶𝗻𝗴 𝗽𝗿𝗮𝗰𝘁𝗶𝗰𝗲𝘀:

🔹 Clean Code by Uncle Bob Martin (amzn.to/3KyVoyV)

🔹 Head First Design Patterns by Eric Freeman (amzn.to/3Kzq2YX)

🔹 Refactoring by Martin Fowler (amzn.to/3m8bAgo)

𝟯. 𝗗𝗮𝘁𝗮 𝘀𝘁𝗿𝘂𝗰𝘁𝘂𝗿𝗲𝘀 𝗮𝗻𝗱 𝗮𝗹𝗴𝗼𝗿𝗶𝘁𝗵𝗺𝘀:

🔹 Grokking Algorithms by Aditya Bhargava (amzn.to/3ksUw4e)

𝟰. 𝗗𝗮𝘁𝗮:

🔹 Designing Data-Intensive Applications by Martin Kleppman (amzn.to/41uO65o)

🔹 Learning SQL by Alan Beaulieu (Free - lnkd.in/dnZQsyki)

𝟱. 𝗧𝗲𝘀𝘁𝗶𝗻𝗴:

🔹 Growing OO Software by Tests by Steve Freeman (amzn.to/3xRVaeB)

🔹 TDD by Example by Kent Beck (amzn.to/3EEHwiC)

🔹 Unit Testing Principles, Practices, and Patterns by Vladimir Khorikov (amzn.to/3ZBZYAJ)

🔹 The Art of Unit Testing by Roy Osherove (amzn.to/3kr7m2K).

𝟲. 𝗔𝗿𝗰𝗵𝗶𝘁𝗲𝗰𝘁𝘂𝗿𝗲:

🔹 Fundamentals Of Software Architecture by Mark Richards and Neil Ford (amzn.to/3xQ1EuD)

🔹 Clean Architecture by Uncle Bob Martin (amzn.to/3ERwsPw)

🔹 Software Architecture the Hard Parts (amzn.to/3Zmg15r)

🔹 Domain-Driven Design Distilled by Vaughn Vernon (amzn.to/41slJoo)

🔹 A Philosophy of Software Design by John Ousterhout (amzn.to/3IopUZm)

𝟳. 𝗗𝗶𝘀𝘁𝗿𝗶𝗯𝘂𝘁𝗲𝗱 𝘀𝘆𝘀𝘁𝗲𝗺𝘀:

🔹 Understanding Distributed Systems by Roberto Vitillo (amzn.to/3XZOFkG)

𝟴. 𝗗𝗲𝘃𝗢𝗽𝘀:

🔹 DevOps Handbook by Gene Kim (amzn.to/3m4iJ16)

🔹 Continuous Delivery by Jez Humble and David Farley (amzn.to/3ECoVUo)

🔹 Accelerate by Nicole Forsgren (amzn.to/3StOug6)

𝟵. .𝗡𝗘𝗧/𝗖#:

🔹C# in Depth by Jon Skeet (amzn.to/3Ssfxsm)

𝟭𝟬. 𝗠𝗮𝗰𝗵𝗶𝗻𝗲 𝗹𝗲𝗮𝗿𝗻𝗶𝗻𝗴:

🔹 The Hundred-Page Machine Learning Book (amzn.to/3Y1SIwN)

𝟭𝟭. 𝗧𝗲𝗮𝗺𝘀:

🔹 The Five Dysfunctions of a Team by Patrick Lencioni (amzn.to/3Z20fwW)

🔹 Drive by Daniel Pink (amzn.to/3EZTALR)

🔹 Team Topologies by Matthew Skelton and Manuel Pais (amzn.to/3ZkTt4Z).

Should anything else be added to the list?

#technology #softwareengineering #programming #techworldwithmilan #books

English

A good unit test (versus a bad one)

softwareengineeringtidbits.com/p/a-good-unit-…

English

le duc quang retweetledi

This is amazing. PanoHead is a new model that generates 3D textured models from a single image.

Github: sizhean.github.io/panohead

Paper: openaccess.thecvf.com/content/CVPR20…

English

Software architecture is a difficult thing to define, never mind how hard it is to actually do well.

Here are my 3 tips for success when starting a new project ➡️ youtu.be/wQYRl--58zM

YouTube

English