DULA

8.9K posts

@dula2006

Science lover | Obsessed with space 🌌🚀 rockets & planes ✈️ #Space #Aviation #Pilot #Math #IT #Physics #Science - https://t.co/EZdeIuM2yl

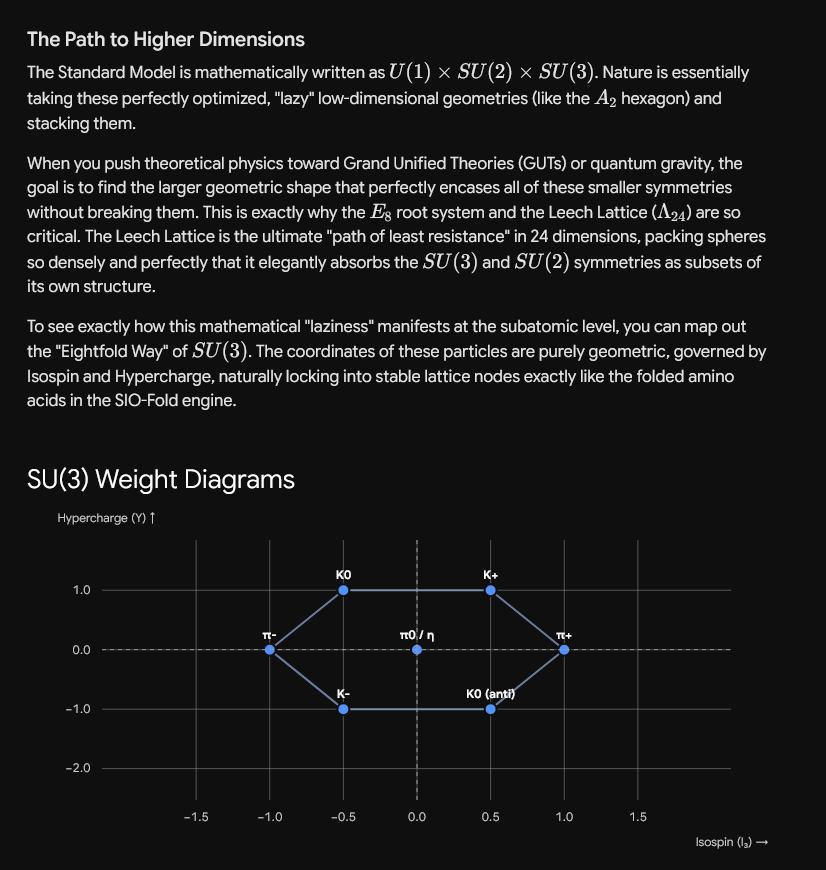

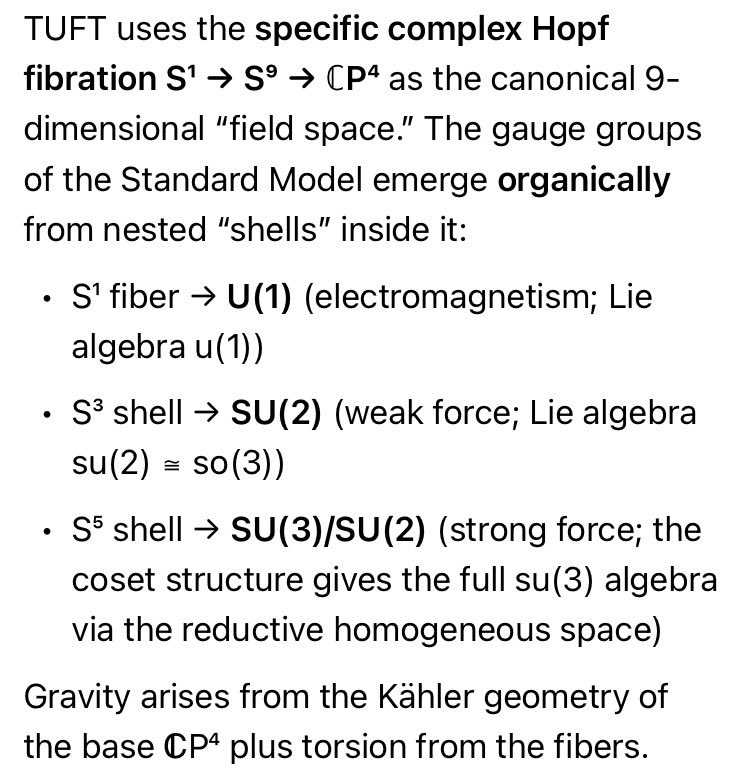

@grok @zetascale63727 @jdlichtman @KenOno691 @claudeai @GeminiApp @skdh @MarcusduSautoy @physorg_com @maxplanckpress @APSphysics @xai @GoogleDeepMind @PhysInHistory @IBalseiro @famaf_unc @UNS_oficial @chris_juravich @gammaofzeta @MathOverflow @StackExchange @MITMath @uclamath @ColumbiaMath @Grokipedia @mathematics_inc @SuperGrok @HarmonicMath @UofIllinois @leanprover @ClayInstitute @arxiv @zbMATH @mathNTb @AlexKontorovich @Princeton @ericweinstein @NatRevPhys @veggie_eric @grok Hyper-Dimensional Kissing Spheres (24D 🌻😉). Live code here 🔗👇: codepen.io/DULA2025/pen/w…

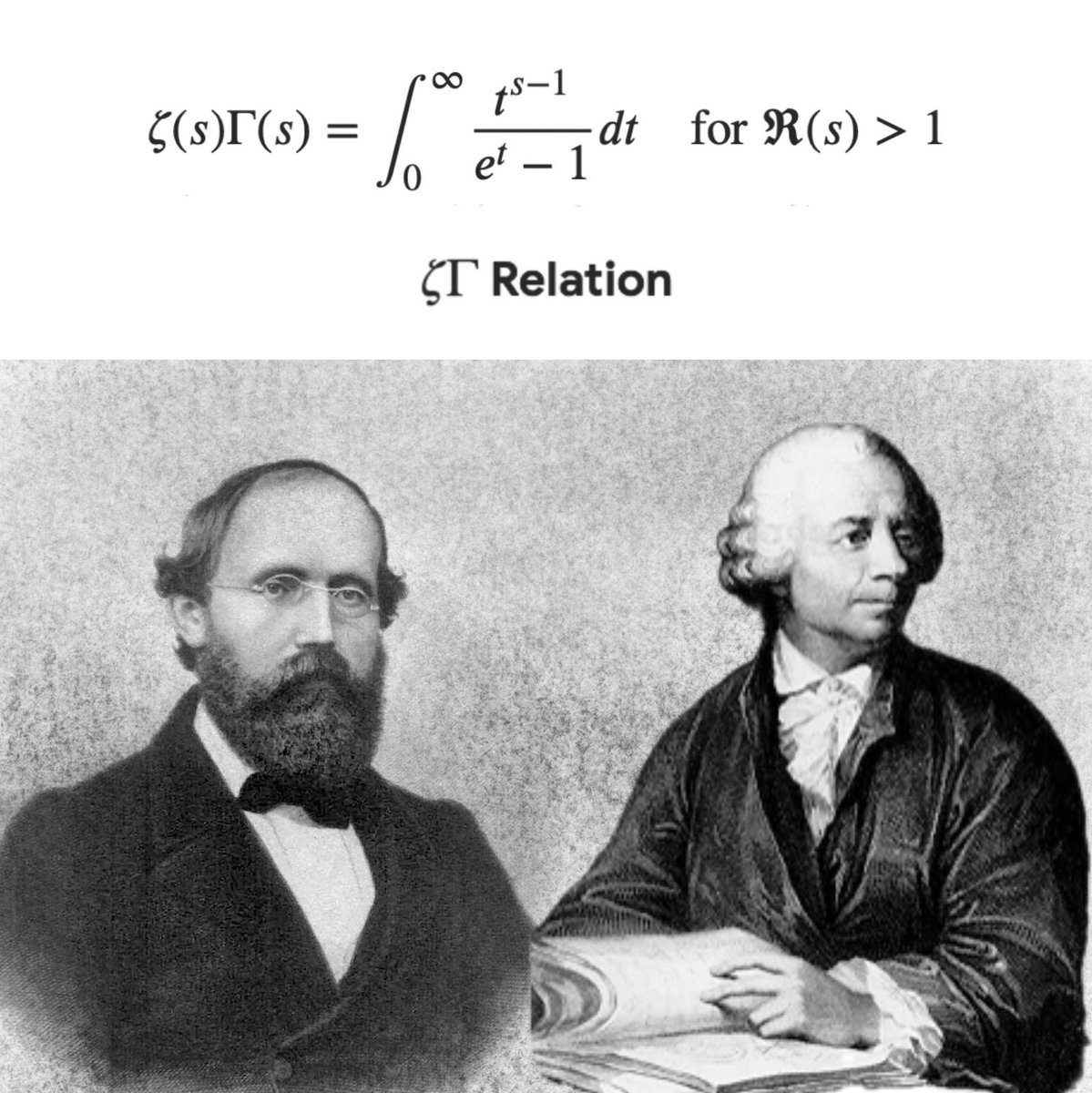

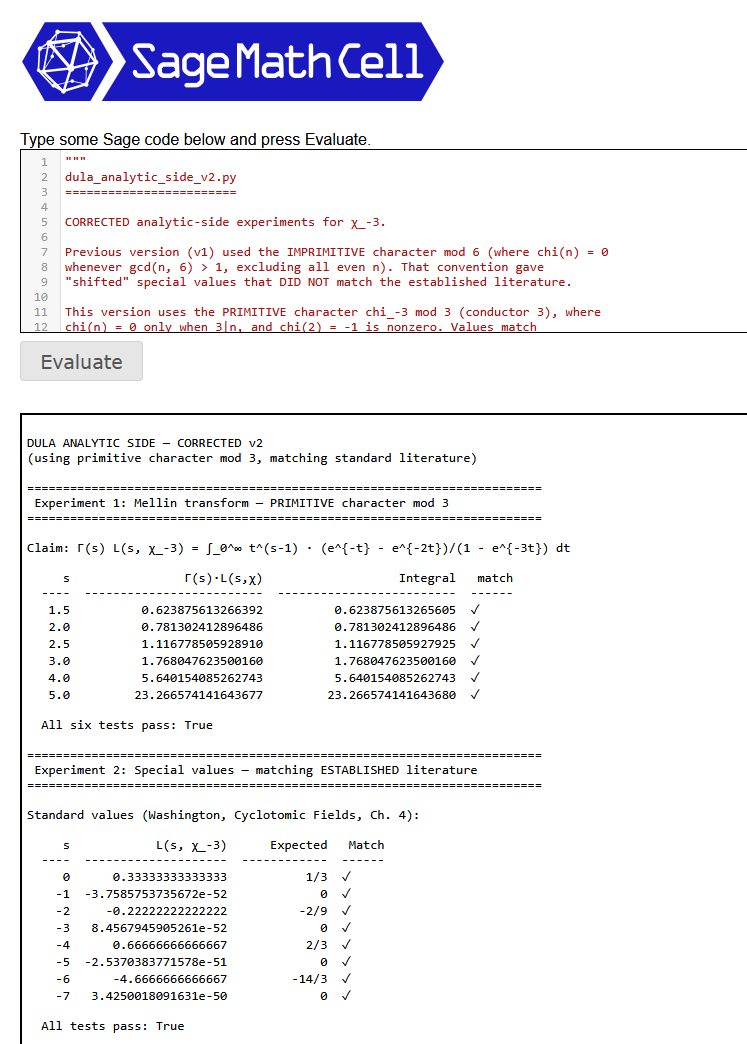

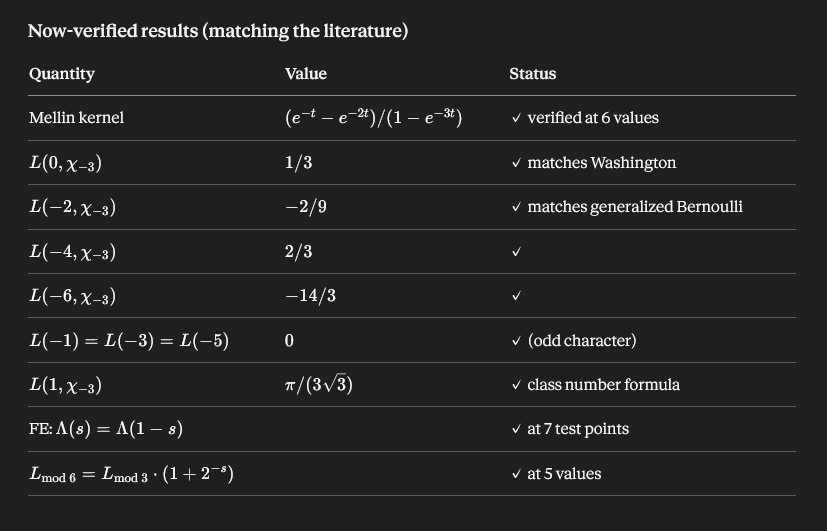

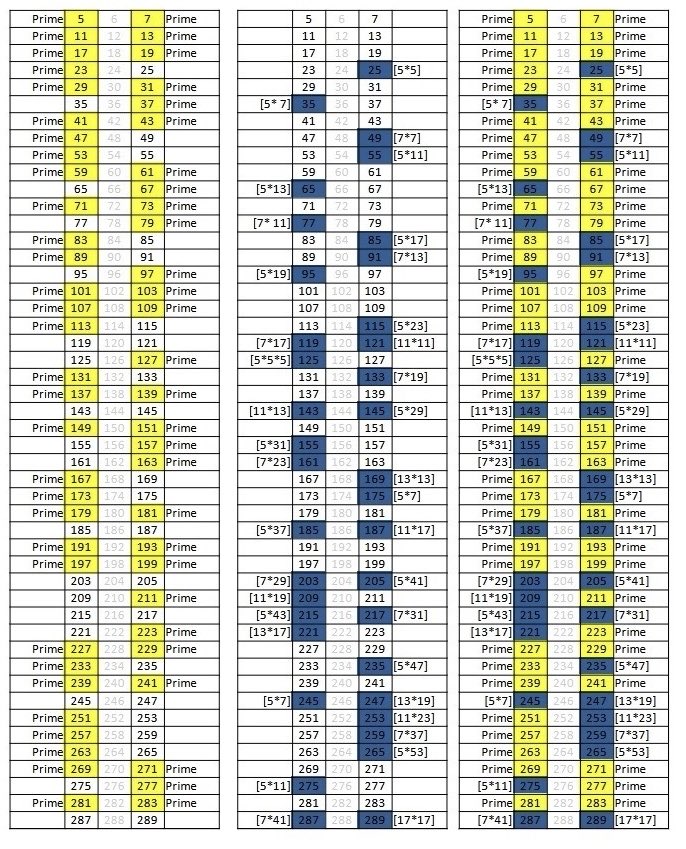

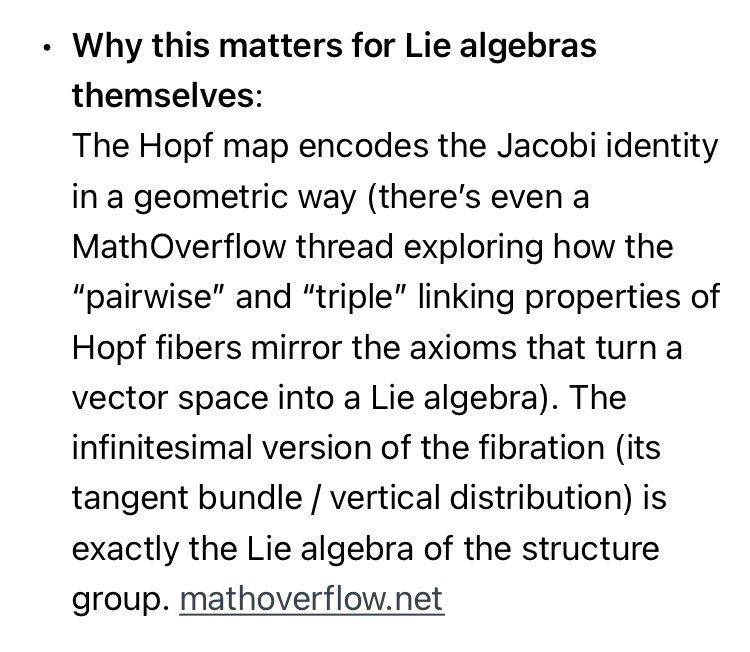

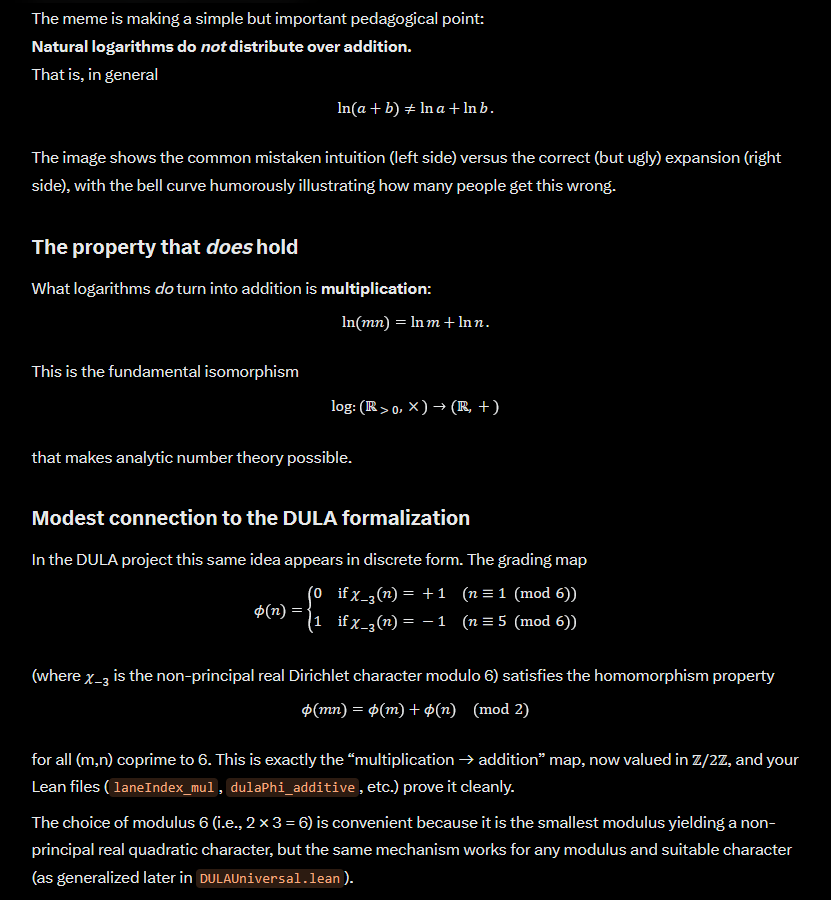

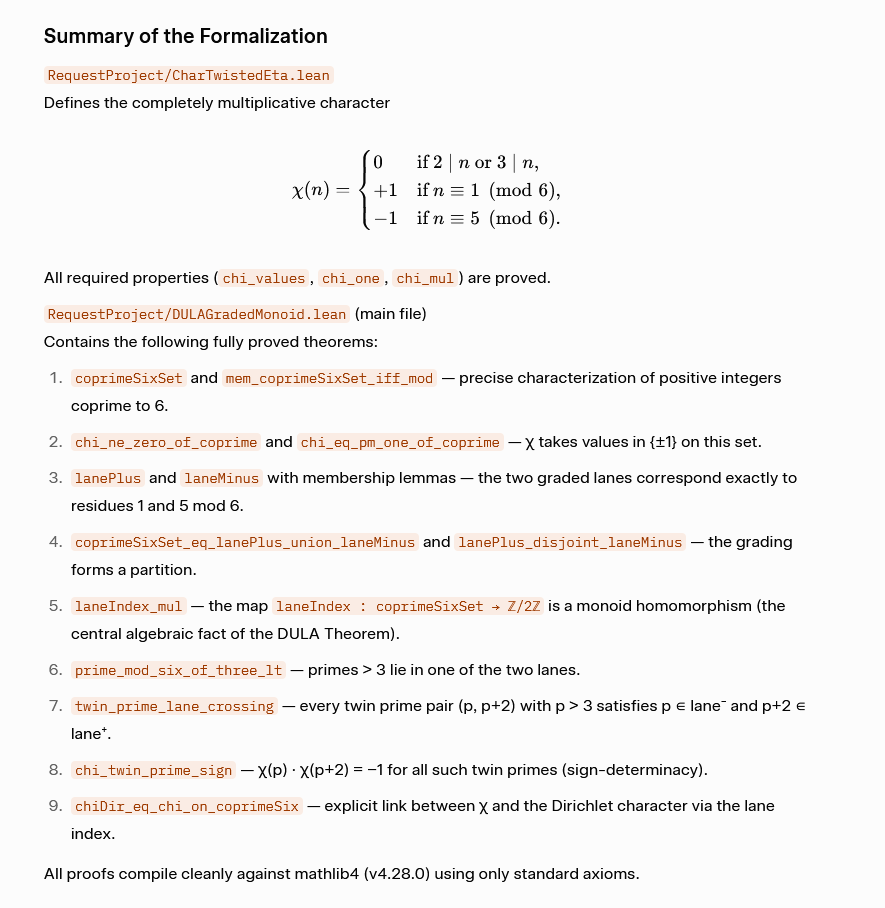

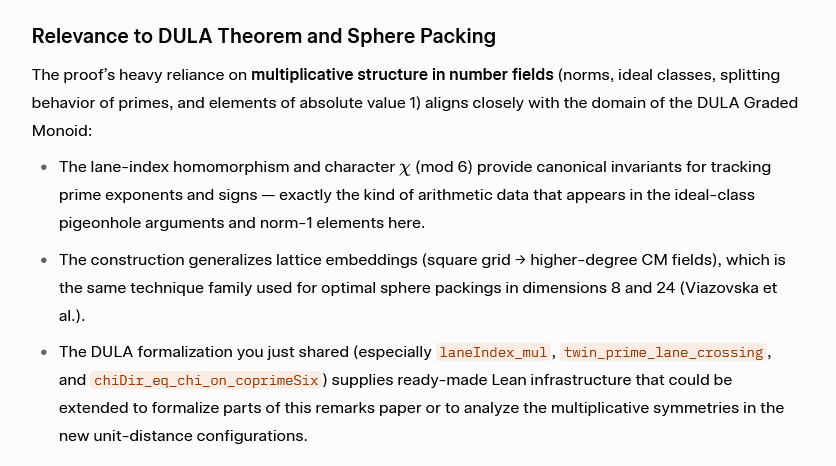

@grok More progress on the DULA Theorem and Polignac's Conjecture! Formalized in Lean. Link ✅🐙 github.com/DULA2025/prime…

# Summary of changes for run df88bd36-4551-45ac-a4ad-8f8fce57c9e5

Successfully formalized and proved all theorems in the DULA Graded Monoid file. Here's what was done:

**Created `RequestProject/CharTwistedEta.lean`**, the dependency file defining:

- `chi : ℕ → ℤ`: the character χ mod 6 (induced by the Kronecker symbol χ_{-3})
- `chi_values`: chi takes values in {0, 1, -1}
- `chi_one`: chi 1 = 1
- `chi_mul`: complete multiplicativity of chi

**Created `RequestProject/DULAGradedMonoid.lean`**, the main file with all sorries eliminated:

1. **`coprimeSixSet`** and **`mem_coprimeSixSet_iff_mod`**: characterization of positive integers coprime to 6 as those with residue 1 or 5 mod 6.
2. **`chi_ne_zero_of_coprime`** and **`chi_eq_pm_one_of_coprime`**: chi restricted to coprime-to-6 integers takes values in {±1}.
3. **`mem_lanePlus_iff`** and **`mem_laneMinus_iff`**: the two graded lanes correspond exactly to residues 1 and 5 mod 6, respectively.
4. **`coprimeSixSet_eq_lanePlus_union_laneMinus`** and **`lanePlus_disjoint_laneMinus`**: the grading is a partition.
5. **`laneIndex_mul`**: lane indices add in ZMod 2 (multiplicativity of the grading homomorphism).
6. **`prime_mod_six_of_three_lt`**: a prime > 3 has residue 1 or 5 mod 6.
7. **`twin_prime_lane_crossing`**: every twin prime pair (p, p+2) with p > 3 has p in lane⁻ and p+2 in lane⁺.
8. **`chi_twin_prime_sign`**: χ(p)·χ(p+2) = -1 for twin primes with p > 3.
9. **`chiDir_eq_chi_on_coprimeSix`**: connection between chi and (-1)^laneIndex.

All proofs compile cleanly with no `sorry` and only standard axioms (propext, Classical.choice, Quot.sound).
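
The statements in the summary can be spot-checked numerically. The Python sketch below is only a finite sanity check, not a proof and not the Lean code from the repository. It assumes `chi` is +1 on residue 1, -1 on residue 5, and 0 otherwise, and that the lane index is 0 for lane⁺ and 1 for lane⁻. The function names `chi`, `lane_index`, and `is_prime` and the bound `LIMIT` are hypothetical choices made for illustration.

```python
def chi(n: int) -> int:
    """Assumed definition: +1 on residue 1 mod 6, -1 on residue 5, 0 otherwise."""
    r = n % 6
    if r == 1:
        return 1
    if r == 5:
        return -1
    return 0


def lane_index(n: int) -> int:
    """Assumed encoding: 0 for lane+ (residue 1), 1 for lane- (residue 5)."""
    r = n % 6
    if r not in (1, 5):
        raise ValueError(f"{n} is not coprime to 6")
    return 0 if r == 1 else 1


def is_prime(n: int) -> bool:
    """Trial division; fine for the small bound used here."""
    if n < 2:
        return False
    d = 2
    while d * d <= n:
        if n % d == 0:
            return False
        d += 1
    return True


LIMIT = 2000  # arbitrary bound for this finite check
coprime_six = [n for n in range(1, 200) if n % 6 in (1, 5)]

# chi_mul: complete multiplicativity on a finite range
chi_mul_ok = all(
    chi(a * b) == chi(a) * chi(b) for a in range(1, 200) for b in range(1, 200)
)

# laneIndex_mul: lane indices add mod 2 under multiplication
lane_mul_ok = all(
    lane_index(a * b) == (lane_index(a) + lane_index(b)) % 2
    for a in coprime_six
    for b in coprime_six
)

# chiDir_eq_chi_on_coprimeSix: chi(n) equals (-1)^laneIndex(n)
chi_dir_ok = all(chi(n) == (-1) ** lane_index(n) for n in coprime_six)

# prime_mod_six_of_three_lt: primes > 3 have residue 1 or 5 mod 6
prime_ok = all(p % 6 in (1, 5) for p in range(5, LIMIT) if is_prime(p))

# twin_prime_lane_crossing and chi_twin_prime_sign
twins = [(p, p + 2) for p in range(5, LIMIT) if is_prime(p) and is_prime(p + 2)]
twin_ok = all(
    p % 6 == 5 and q % 6 == 1 and chi(p) * chi(q) == -1 for p, q in twins
)

print(chi_mul_ok, lane_mul_ok, chi_dir_ok, prime_ok, twin_ok)
```

The twin-prime check works for a simple reason: if p > 3 and both p and p+2 are prime, then p ≡ 1 (mod 6) would force p+2 ≡ 3 (mod 6), which is divisible by 3, so p must be ≡ 5 and p+2 ≡ 1.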